Exam Code: NSE5_FAZ-7.0

Exam Name: Fortinet NSE 5 - FortiAnalyzer 7.0

Certification Provider: Fortinet

Corresponding Certification: NSE5

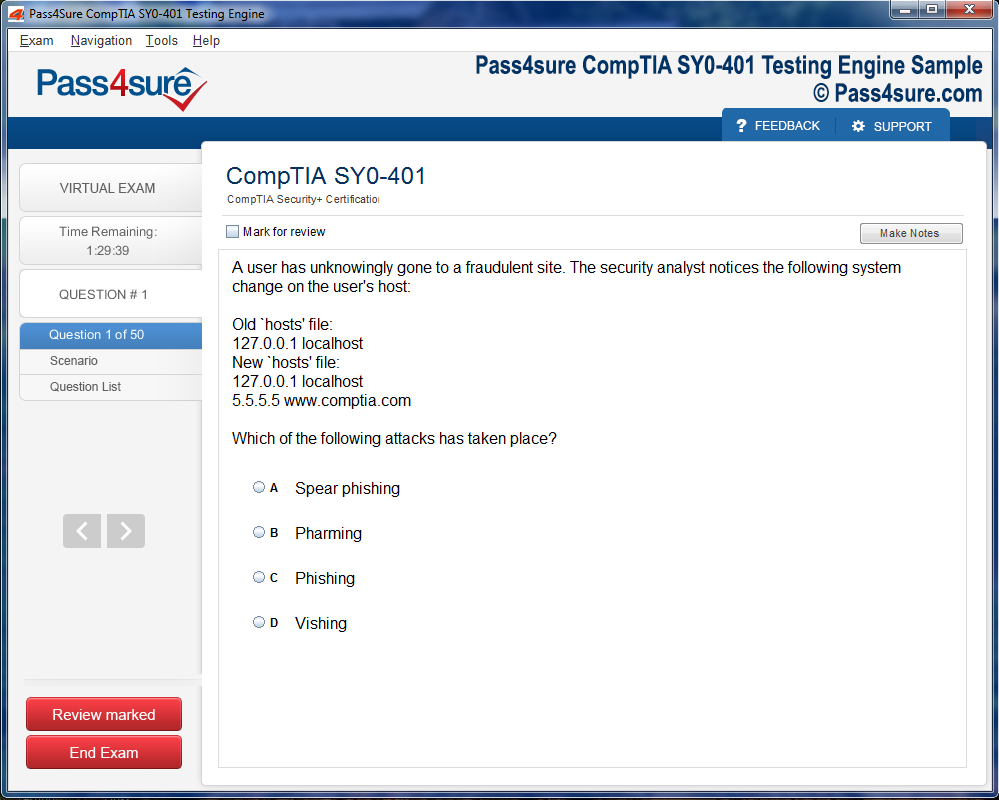

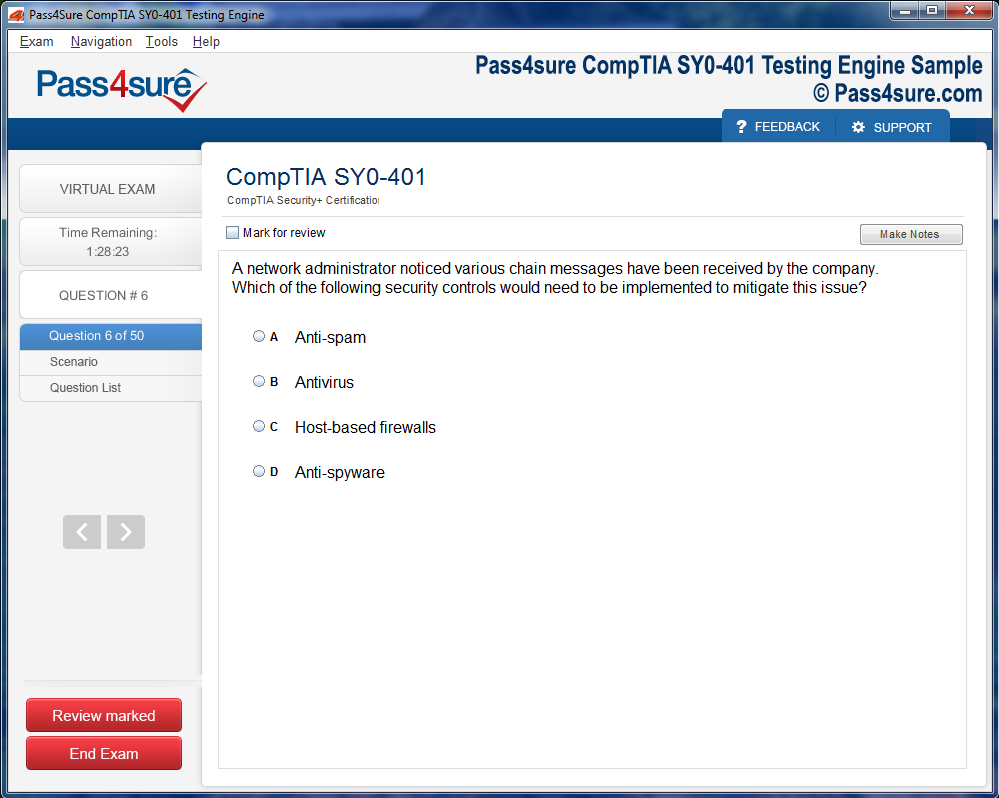

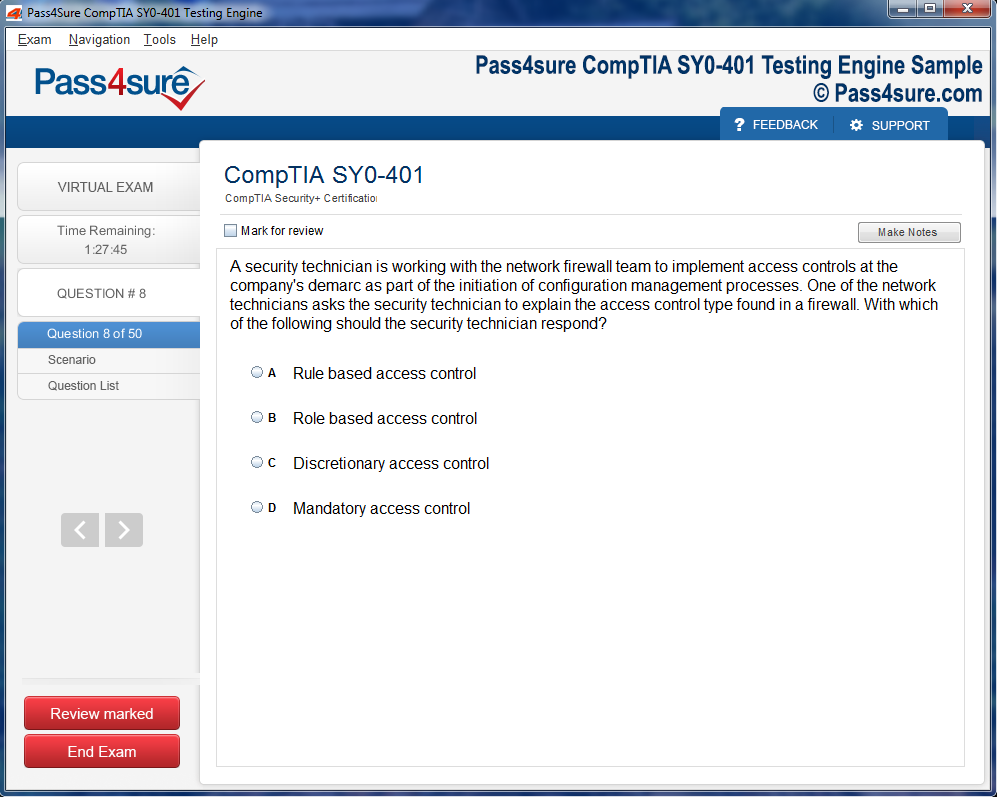

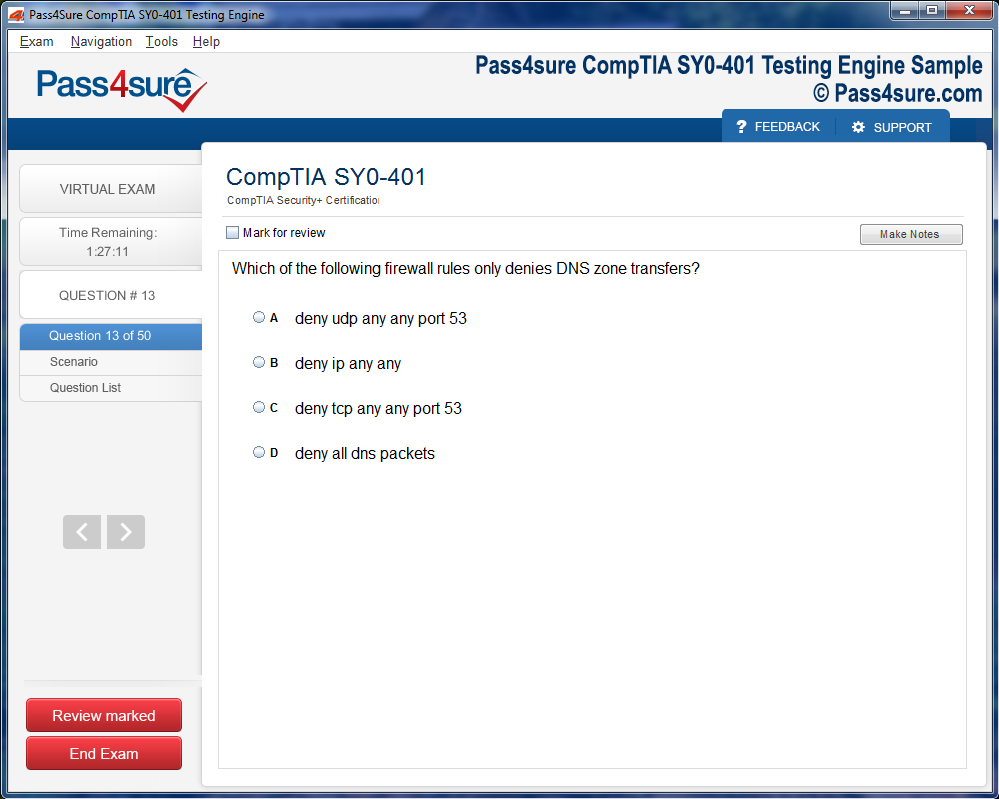

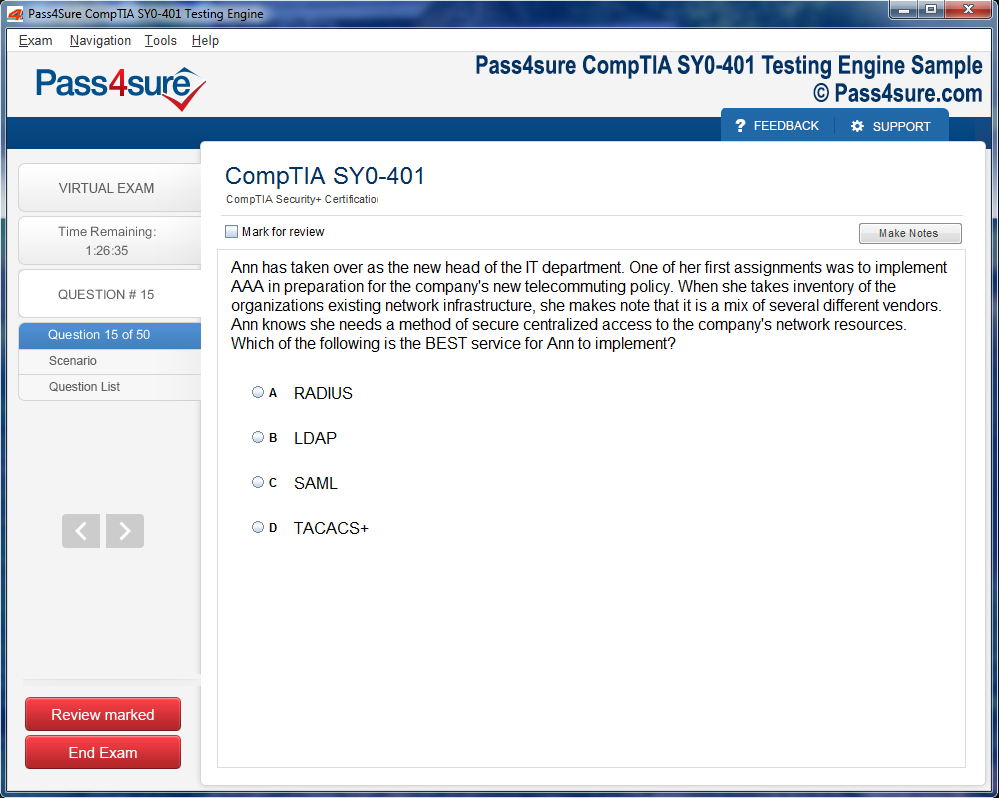

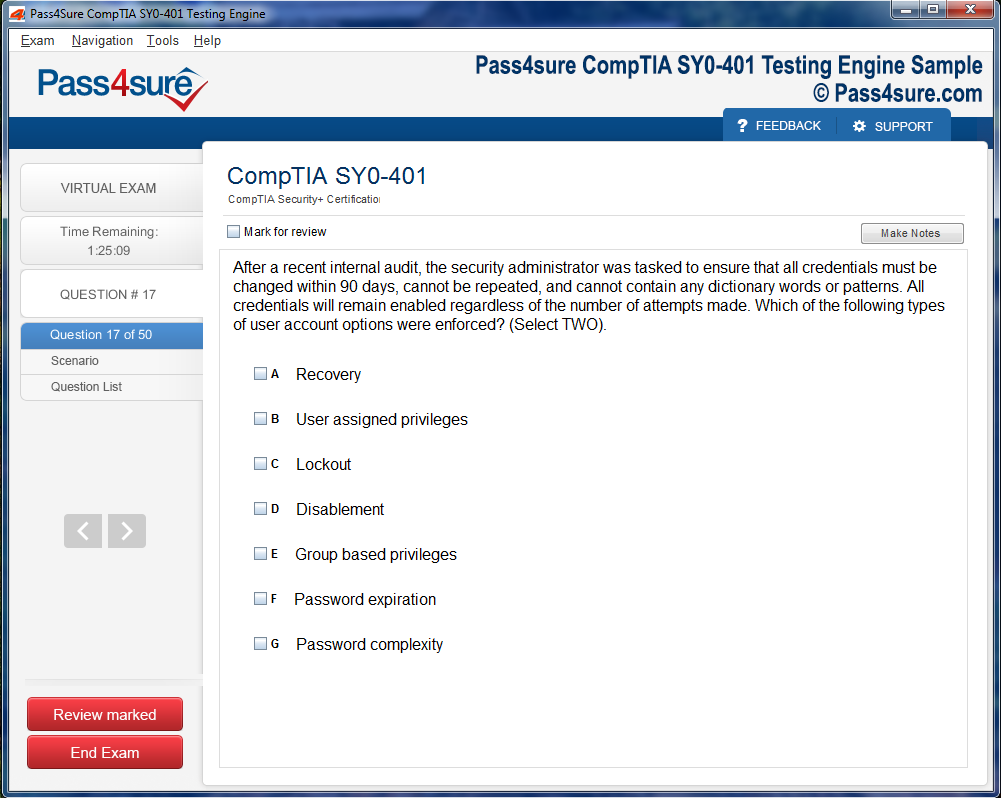

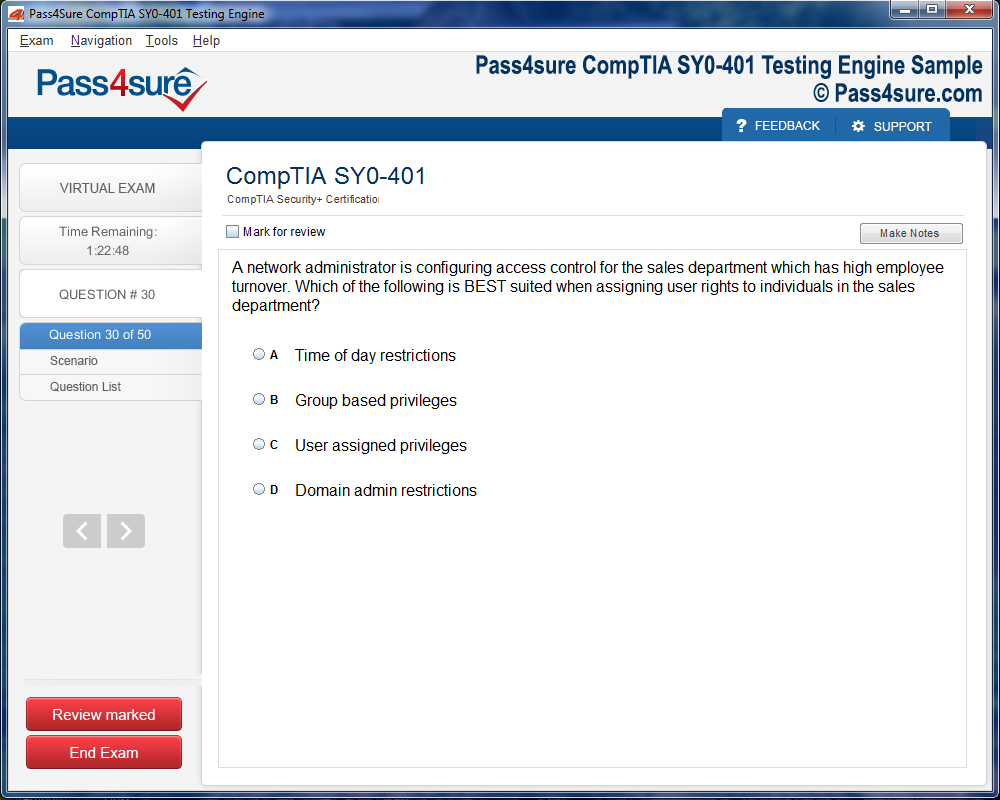

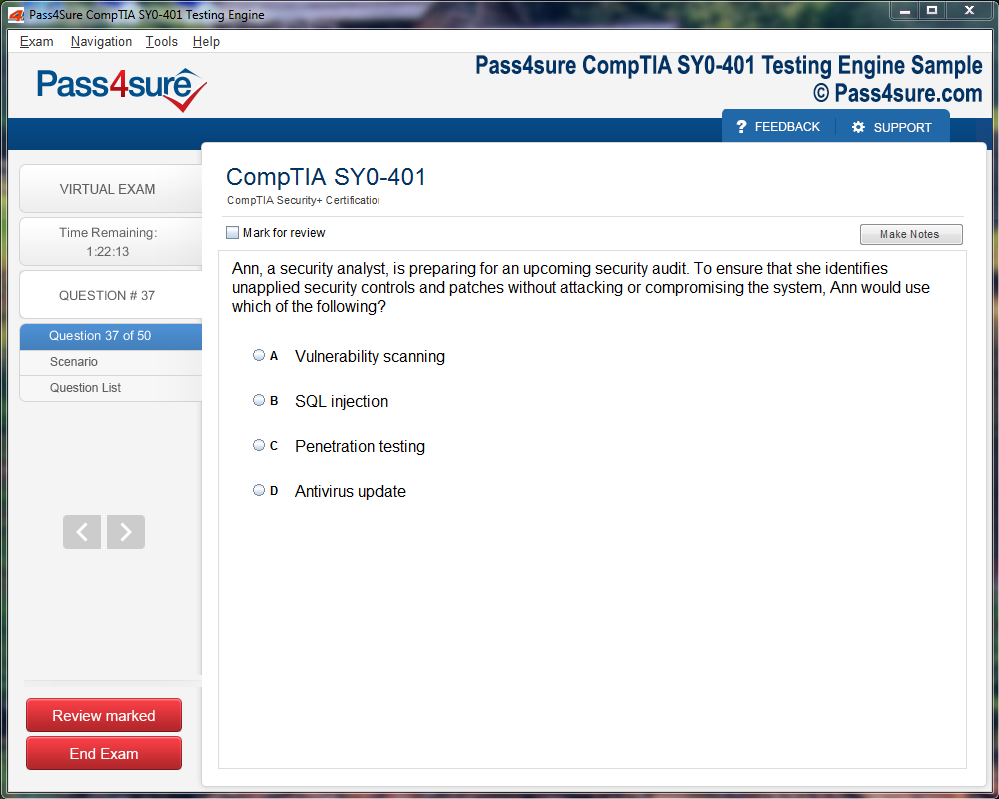

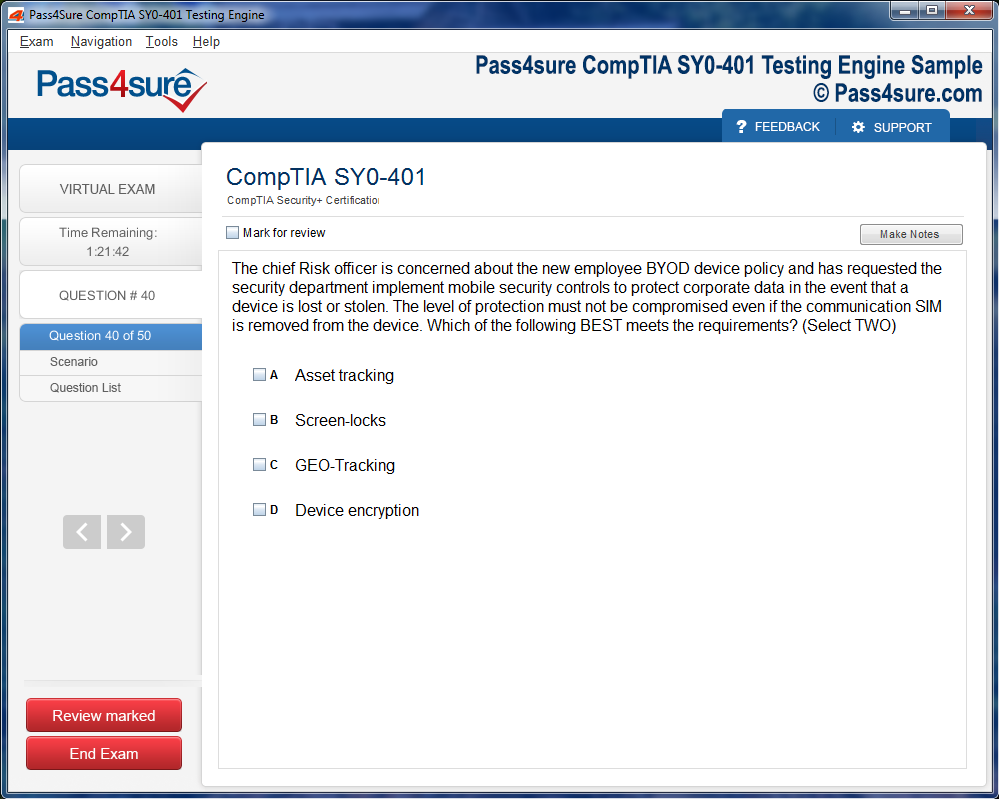

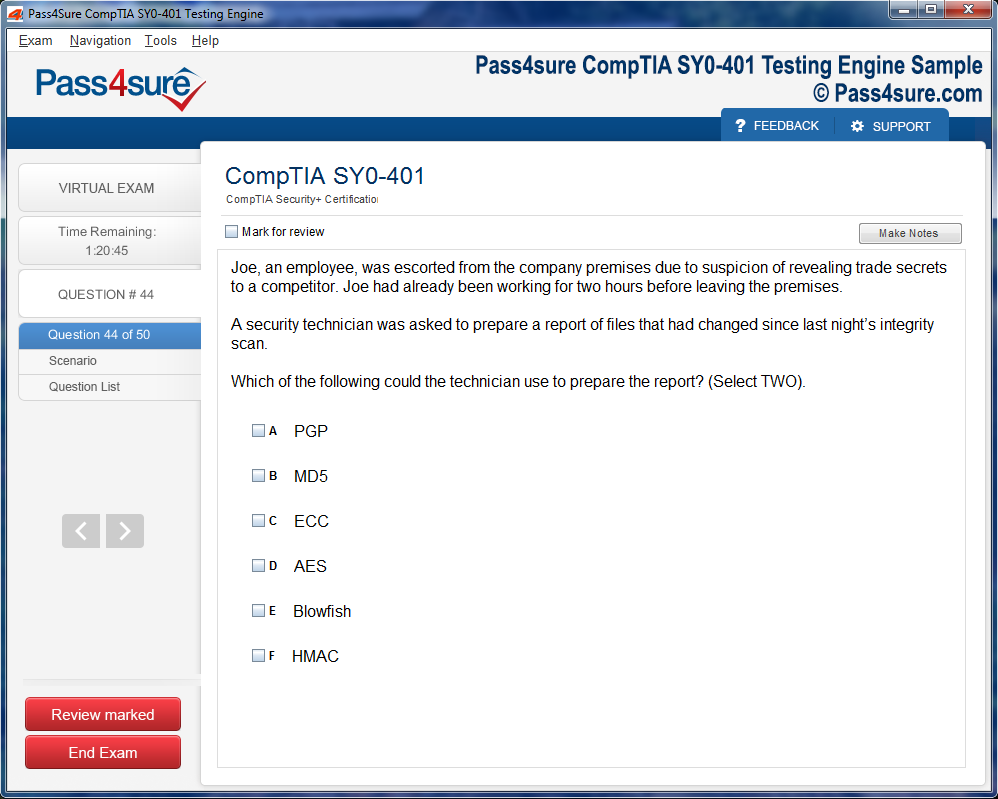

Product Screenshots

Frequently Asked Questions

How does your testing engine work?

Once the testing engine is downloaded and installed on your PC, you can practise test questions and review your questions & answers using two different options: 'practice exam' and 'virtual exam'. Virtual Exam - test yourself with exam questions with a time limit, as if you are taking exams in the Prometric or VUE testing centre. Practice exam - review exam questions one by one, see correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products have a validity of 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions, or updates and changes by our editing team, will be automatically downloaded onto your computer to make sure that you get the latest exam prep materials during those 90 days.

Can I renew my product when it has expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes in the actual pool of questions by different vendors. As soon as we know about a change in the exam question pool, we try our best to update the products as fast as possible.

On how many computers can I download Pass4sure software?

You can download Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB of available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine is supported on Windows. Android and iOS software is currently under development.

NSE5_FAZ-7.0 – Fortinet Professional Certification in FortiAnalyzer

FortiAnalyzer emerges as a linchpin within contemporary cybersecurity architectures, orchestrating a symphony of visibility, telemetry, and forensic analysis that transcends conventional logging paradigms. In an era where cyber threats are polymorphic, decentralized, and increasingly obfuscated, the ability to consolidate, correlate, and scrutinize security data becomes indispensable. FortiAnalyzer functions as the cerebral nexus of Fortinet's security ecosystem, rendering opaque network activities into decipherable, actionable intelligence.

The raison d'être of FortiAnalyzer is not merely to archive logs but to metamorphose raw telemetry into strategic insights. Logging, reporting, and analytics constitute the trinity of operational sagacity that enterprises necessitate. Logs capture ephemeral occurrences in real-time, reporting structures synthesize these occurrences into intelligible narratives, and analytics imbue these narratives with prognostic and diagnostic power. This trinity empowers organizations to preempt incursions, orchestrate compliance adherence, and optimize the defensive posture across multifaceted network landscapes.

Architectural Intricacies and Deployment Paradigms

FortiAnalyzer’s architecture epitomizes modularity and scalability. The platform is designed to ingest vast torrents of log data from heterogeneous Fortinet devices, ranging from next-generation firewalls to secure access gateways and endpoint protection appliances. Central to its design is a dual-layered approach: a high-throughput logging engine capable of handling prodigious log volumes and an analytical layer that facilitates deep-dive investigations and cross-correlation of anomalous events.

Deployment scenarios are fluid, accommodating on-premises, cloud-native, and hybrid topologies. Enterprises can leverage FortiAnalyzer as a centralized log repository within an isolated datacenter, as a distributed cluster spanning geospatially disparate locations, or in conjunction with cloud orchestration platforms to harness elastic scaling and enhanced resilience. Integration with Fortinet products is seamless, owing to standardized APIs and native telemetry pipelines, ensuring that log ingestion is both synchronous and non-disruptive.

Logging: The Bedrock of Security Intelligence

Logging, often perceived as a mundane technicality, is, in reality, the bedrock upon which security intelligence is constructed. FortiAnalyzer’s logging capabilities extend beyond rudimentary event capture; they encompass granular data such as session metadata, application-specific attributes, threat signatures, and behavioral anomalies. This granularity permits forensic precision when unraveling sophisticated threat vectors, including polymorphic malware, lateral movement within internal networks, and advanced persistent threats.

Moreover, FortiAnalyzer’s logs are temporally indexed, enabling chronological reconstruction of events. This temporal mapping is invaluable for incident responders, as it permits a stepwise deconstruction of attack sequences, identifies potential pivot points, and elucidates the causative chain of events. Enterprises benefit from predictive insights by analyzing historical log patterns, thereby anticipating vulnerabilities before they are exploited.

Reporting: From Data to Narratives

While logging is the act of archiving, reporting is the art of storytelling through data. FortiAnalyzer provides a plethora of pre-configured report templates, yet the platform’s true potency lies in its capacity for bespoke reporting. Enterprises can construct reports that synthesize multidimensional data into cohesive narratives, tailored to operational teams, compliance auditors, or executive dashboards.

Reports generated by FortiAnalyzer are not static artifacts; they are dynamic, interactive constructs. Users can drill down into anomalous events, filter by severity, device type, or time window, and correlate incidents across geographies. This interactivity transforms raw data into decision-grade intelligence, augmenting situational awareness and empowering swift, informed responses to emerging threats.

Analytics: Extracting Predictive Insight

Analytics is where FortiAnalyzer transcends traditional security tools, converting data repositories into prescient instruments. Through behavioral analytics, anomaly detection, and machine learning integration, the platform can discern subtle deviations from normative patterns that may presage cyber incursions. For instance, an unexpected burst of outbound traffic from a dormant endpoint or anomalous access patterns to sensitive databases can trigger alerts before an actual compromise occurs.

The platform’s analytical engine leverages both heuristic and statistical models, enabling nuanced interpretation of security telemetry. By correlating disparate events across multiple devices and temporal windows, FortiAnalyzer constructs threat topologies that illuminate the modus operandi of adversaries. This empowers security teams to not merely react to incidents but anticipate and neutralize them proactively.

Integration with Fortinet Ecosystem

FortiAnalyzer’s true strength is amplified through its seamless integration with the broader Fortinet ecosystem. It communicates natively with FortiGate, FortiMail, FortiSandbox, and FortiEDR devices, consolidating telemetry into a unified analytical framework. This integration facilitates a synchronized defense strategy, where insights gleaned from firewall events, endpoint telemetry, and sandbox detonation reports coalesce into a comprehensive threat landscape.

The synergy extends to automated workflows: detected anomalies can trigger policy adjustments, quarantine directives, or notifications across devices, minimizing latency between detection and mitigation. This orchestration transforms isolated security apparatuses into a cohesive, adaptive defense architecture.

Initial Setup and System Prerequisites

FortiAnalyzer, as a centralized log management and analytics solution, demands meticulous groundwork for optimal functionality. Before provisioning the appliance, it is crucial to delineate network topology and ascertain IP schema congruity. Device orchestration relies heavily on precise DNS resolution, NTP synchronization, and the calibration of network interfaces. The initial boot sequence reveals a plethora of configuration prompts, including administrative credential establishment and management interface designation, which must be curated with cryptographic robustness in mind. Furthermore, understanding the architectural nuances between virtual, hardware, and cloud instances enables the architect to anticipate resource allocation and logging throughput.

Device Registration and Network Integration

The confluence of devices with FortiAnalyzer is predicated upon secure registration protocols. FortiGate, FortiMail, FortiWeb, and other ecosystem appliances communicate through encrypted channels, necessitating certificate verification and mutual authentication. Each device, when inducted into FortiAnalyzer, contributes event streams that enrich analytical capabilities. The registration procedure entails specifying device identifiers, selecting operational zones, and configuring log forwarding settings. A perspicacious administrator must also anticipate network latency, log volume, and retention periods to prevent bottlenecks in log aggregation. Subtle misconfigurations at this stage may manifest as incomplete data ingestion, thus impairing correlation and forensic analysis.

Log Storage Architecture and Retention Strategy

Optimizing log storage requires a synoptic comprehension of database structuring and indexing mechanisms. FortiAnalyzer leverages a tiered storage model, balancing high-speed storage for real-time analytics against bulk archival repositories. Administrators must meticulously plan retention policies based on regulatory compliance, operational exigencies, and storage economics. Techniques such as log compression, deduplication, and partitioning are indispensable for sustaining storage efficiency while ensuring rapid retrieval. Strategic foresight in this domain mitigates the risk of storage exhaustion, facilitates accelerated querying, and undergirds long-term security auditing requirements.
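As a rough illustration of the retention planning described above, the following sketch estimates disk capacity from daily log volume, retention period, and compression ratio. The function name, its parameters, and the 20% headroom default are assumptions chosen for illustration; they are not FortiAnalyzer settings or vendor sizing guidance.

```python
def required_storage_gb(daily_log_gb, retention_days, compression_ratio, headroom=0.2):
    """Estimate disk capacity (GB) needed to retain compressed logs.

    All inputs are planning assumptions supplied by the administrator.
    """
    if daily_log_gb < 0 or retention_days < 0:
        raise ValueError("volume and retention must be non-negative")
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    # Raw volume over the retention window, reduced by the compression ratio.
    compressed_gb = daily_log_gb * retention_days / compression_ratio
    # Add spare capacity so bursts do not exhaust the disk.
    return compressed_gb * (1 + headroom)


# Hypothetical inputs: 10 GB/day, 90-day retention, 5:1 compression.
estimate = required_storage_gb(10, 90, 5)
print(f"Plan for roughly {estimate:.0f} GB")
```

Changing any one input shows how sensitive the estimate is: doubling the retention period doubles the requirement, while a better compression ratio reduces it proportionally.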

Policy Creation and Granular Access Control

Crafting robust policies entails a precise alignment of organizational objectives, threat models, and regulatory frameworks. FortiAnalyzer enables intricate policy construction encompassing log filters, event categorization, and device-specific rules. The judicious deployment of access control lists ensures that sensitive logs remain impervious to unauthorized inspection. Policies may be parameterized to trigger automated responses, escalate alerts, or aggregate events for longitudinal analysis. The subtle art of policy tuning involves balancing verbosity with conciseness, thereby preserving both operational clarity and analytic acuity.

Event Correlation and Contextual Analysis

FortiAnalyzer’s event correlation engine synthesizes disparate logs into coherent narratives. By juxtaposing seemingly innocuous events, administrators can unearth latent threats and anomalous patterns. Correlation rules can be crafted using temporal, behavioral, and semantic dimensions, transforming raw logs into actionable intelligence. Integrating contextual metadata, such as geolocation, user identity, and device topology, amplifies the precision of threat detection. Continuous refinement of correlation policies fosters a proactive posture, enabling the enterprise to preempt intrusions and operational disruptions with prescient efficacy.
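To make the idea of a temporal correlation rule concrete, here is a minimal sketch that flags any source producing a burst of events inside a sliding time window. It is a generic illustration, not FortiAnalyzer's correlation engine; the `(timestamp, source)` tuple format, the function name, and the sample data are all invented for the example.

```python
from collections import defaultdict, deque


def find_bursts(events, threshold, window_seconds):
    """Return the sources that produced at least `threshold` events
    within any span of `window_seconds`.

    `events` is an iterable of (timestamp_seconds, source) tuples.
    """
    recent = defaultdict(deque)  # source -> timestamps still inside the window
    flagged = set()
    for ts, source in sorted(events):
        window = recent[source]
        window.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) >= threshold:
            flagged.add(source)
    return flagged


# Invented sample: failed-login events as (seconds, source IP).
failed_logins = [
    (0, "10.0.0.5"), (10, "10.0.0.5"), (20, "10.0.0.5"),
    (0, "10.0.0.9"), (100, "10.0.0.9"), (200, "10.0.0.9"),
]
print(find_bursts(failed_logins, threshold=3, window_seconds=60))
```

Both sources generate three events, but only the first does so within 60 seconds, which is the kind of distinction a temporal rule is meant to capture.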

Alerting Mechanisms and Notification Architecture

Timely alerting forms the cornerstone of proactive security management. FortiAnalyzer facilitates multi-tiered notification paradigms, encompassing email, syslog forwarding, SNMP traps, and RESTful API integrations. Each alert can be meticulously calibrated for severity thresholds, recurrence intervals, and suppression criteria to avert alarm fatigue. Advanced configurations may incorporate machine learning-derived baselines, permitting the system to discern aberrations that deviate from normal operational cadence. Crafting an alerting architecture with precision ensures that critical anomalies garner immediate attention while trivial fluctuations remain inconspicuous.
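The interplay of severity thresholds and suppression criteria can be sketched as follows. This is a simplified model under stated assumptions: the class name, the integer severity scale, and the alert keys are hypothetical and do not correspond to any FortiAnalyzer configuration object.

```python
class AlertSuppressor:
    """Decide whether an alert should be sent, based on a severity floor
    and a per-key suppression interval."""

    def __init__(self, min_severity, suppress_seconds):
        self.min_severity = min_severity
        self.suppress_seconds = suppress_seconds
        self._last_sent = {}  # alert key -> timestamp of last notification

    def should_notify(self, key, severity, timestamp):
        if severity < self.min_severity:
            return False  # below the severity threshold
        last = self._last_sent.get(key)
        if last is not None and timestamp - last < self.suppress_seconds:
            return False  # same alert fired too recently
        self._last_sent[key] = timestamp
        return True


suppressor = AlertSuppressor(min_severity=3, suppress_seconds=300)
print(suppressor.should_notify("port-scan:10.0.0.5", severity=4, timestamp=0))
print(suppressor.should_notify("port-scan:10.0.0.5", severity=4, timestamp=120))
print(suppressor.should_notify("port-scan:10.0.0.5", severity=4, timestamp=400))
```

The second call is suppressed because it repeats the same alert within five minutes; the third goes through once the interval has elapsed.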

Log Visualization and Analytic Dashboards

The translation of complex log data into intelligible dashboards is indispensable for operational oversight. FortiAnalyzer provides customizable widgets, heatmaps, and temporal trend graphs that distill voluminous data into comprehensible insights. Analysts can correlate traffic patterns, security incidents, and compliance deviations through visual paradigms, accelerating decision-making processes. Strategic dashboard design emphasizes clarity, prioritization, and the reduction of cognitive load, allowing stakeholders to focus on emergent risks rather than procedural minutiae.

Workflow Optimization and Automation

Operational efficiency is magnified when repetitive tasks are automated through FortiAnalyzer’s scripting and policy orchestration features. Scheduled report generation, bulk device configuration, and automated remediation scripts liberate administrators from manual labor while reducing the probability of human error. Implementing structured workflows that integrate logging, alerting, and incident response cultivates a cohesive operational ecosystem. Meticulous documentation of automated procedures ensures reproducibility, auditability, and knowledge transfer within the security team.

Compliance Auditing and Forensic Capabilities

FortiAnalyzer’s log management architecture is inherently conducive to compliance verification and forensic investigations. Customizable log retention schedules, coupled with immutable storage options, satisfy regulatory mandates across diverse jurisdictions. In forensic scenarios, the ability to trace events with millisecond granularity, coupled with contextual metadata, provides a robust evidentiary chain. Administrators must implement rigorous indexing and search strategies to facilitate rapid incident reconstruction, thereby enhancing investigative efficacy and minimizing organizational exposure to security breaches.

High-Availability Configuration and Redundancy

Ensuring uninterrupted log availability requires meticulous implementation of high-availability (HA) paradigms. FortiAnalyzer supports active-active and active-passive HA configurations, providing resilience against hardware failure, network partitioning, or maintenance windows. Synchronous replication, heartbeat monitoring, and failover orchestration safeguard the integrity of logging operations. A well-engineered HA environment mitigates data loss, enhances performance under peak load, and instills operational confidence, especially in enterprises with stringent uptime requirements.

Integrating Threat Intelligence and External Feeds

Augmenting FortiAnalyzer with external threat intelligence enriches analytical depth and predictive capability. By ingesting curated threat feeds, including emerging malware signatures, phishing heuristics, and vulnerability advisories, administrators can anticipate adversarial activity. Correlation engines can fuse these intelligence inputs with internal logs, facilitating proactive defense measures. Integration requires meticulous mapping of feed structures, normalization of indicators, and the establishment of automated update mechanisms to maintain relevance and accuracy.

Scalability Considerations and Performance Tuning

As the organizational footprint expands, the FortiAnalyzer environment must scale to accommodate increased device density, log volume, and analytic complexity. Performance tuning involves optimizing database indices, configuring log compression algorithms, and balancing processing loads across distributed nodes. Predictive capacity planning, coupled with historical data analysis, informs resource provisioning, ensuring that analytical throughput remains consistent even under peak operational stress. Neglecting scalability considerations can lead to latency, incomplete data ingestion, and diminished situational awareness.

Security Hardening and Access Governance

FortiAnalyzer itself becomes a sensitive asset requiring rigorous security hardening. Administrative interfaces must enforce multifactor authentication, granular role-based access control, and IP-based access restrictions. Encrypting log transmission and storage, coupled with periodic credential rotation, safeguards against exfiltration and insider threats. Implementing audit trails for administrative actions enhances accountability, ensuring that operational modifications are traceable and compliant with internal governance mandates.
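The logic behind an IP-based access restriction can be illustrated with Python's standard `ipaddress` module. This is a conceptual sketch only; the function name and the network ranges are assumptions, and the product applies such restrictions through its own administrative settings rather than through code like this.

```python
import ipaddress


def is_trusted(client_ip, trusted_networks):
    """Return True if `client_ip` falls inside any of the CIDR ranges."""
    address = ipaddress.ip_address(client_ip)
    return any(
        address in ipaddress.ip_network(network)
        for network in trusted_networks
    )


# Hypothetical management ranges.
TRUSTED = ["10.1.0.0/16", "192.168.50.0/24"]
print(is_trusted("10.1.2.3", TRUSTED))
print(is_trusted("203.0.113.7", TRUSTED))
```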

Unraveling the Art of Log Parsing

Log parsing is the meticulous process of transmuting raw, chaotic log entries into structured, intelligible data that can be analyzed with precision. Logs, often voluminous and labyrinthine, conceal invaluable insights amidst seemingly random sequences of timestamps, error codes, and event descriptors. By employing sophisticated parsing mechanisms, one can distill patterns, segregate salient events, and construct a coherent narrative of system behaviors. This process frequently necessitates regular expressions, tokenization, and semantic interpretation to disentangle nested or obfuscated information streams. Mastery of log parsing engenders an unprecedented capacity to anticipate system anomalies before they precipitate operational disruptions.
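A small example may help ground the regular-expression approach mentioned above. The sketch assumes logs arrive as space-separated `key=value` pairs with optional double quotes around values; the sample line and its field names are invented for illustration.

```python
import re

# Matches key=value pairs where the value is double-quoted or a bare token.
PAIR_RE = re.compile(r'(\w+)=(?:"([^"]*)"|(\S+))')


def parse_kv_line(line):
    """Parse one key=value log line into a dict of strings."""
    fields = {}
    for key, quoted, bare in PAIR_RE.findall(line):
        # findall yields '' for whichever alternative did not match.
        fields[key] = quoted if quoted else bare
    return fields


sample = (
    'date=2024-01-15 time=10:32:01 devname="edge-fw-01" '
    'srcip=10.0.0.5 action="deny" msg="blocked by policy"'
)
record = parse_kv_line(sample)
print(record["devname"], record["action"], record["msg"])
```

Once each line is a dictionary, filtering, grouping, and counting become ordinary data operations rather than string manipulation.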

Trend Analysis in Data Streams

Trend analysis transcends mere observation; it is the art of discerning subtle, latent patterns that traverse temporal dimensions. In log analytics, this entails scrutinizing sequences of events to unearth recurrent motifs, cyclical fluctuations, or insidious drift in system performance metrics. Employing statistical frameworks, moving averages, or exponential smoothing techniques enables analysts to prognosticate future behaviors with considerable accuracy. Moreover, incorporating multidimensional analysis—cross-referencing temporal, categorical, and hierarchical facets—enhances the fidelity of insights. When executed with dexterity, trend analysis metamorphoses raw data into predictive intelligence, empowering stakeholders to enact preemptive measures.
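The two smoothing techniques named above can be sketched in a few lines. The function names and the sample event counts are illustrative assumptions; the first value seeds the exponential smoother, which is one common convention among several.

```python
def moving_average(values, window):
    """Simple moving average; one output per full window."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]  # drop the value leaving the window
        if i >= window - 1:
            averages.append(running / window)
    return averages


def exponential_smoothing(values, alpha):
    """Exponentially weighted smoothing; higher alpha tracks recent data more closely."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    for value in values:
        if not smoothed:
            smoothed.append(float(value))  # seed with the first observation
        else:
            smoothed.append(alpha * value + (1 - alpha) * smoothed[-1])
    return smoothed


# Invented hourly event counts.
hourly_events = [1, 2, 3, 4, 5]
print(moving_average(hourly_events, window=3))
print(exponential_smoothing([10, 20], alpha=0.5))
```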

Anomaly Detection in Complex Environments

Anomaly detection is the alchemy of identifying deviations from normative patterns within sprawling datasets. In the domain of log analysis, these anomalies often presage critical system failures, security breaches, or operational inefficiencies. Techniques range from heuristic thresholds to machine learning paradigms that autonomously discern outliers. High-dimensional data may necessitate clustering, principal component analysis, or ensemble learning methods to isolate aberrations with minimal false positives. The subtleties of anomaly detection lie in balancing sensitivity and specificity—too lax a threshold may obscure critical deviations, while excessive rigidity may inundate analysts with trivial alerts.
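The sensitivity trade-off described above shows up even in the simplest heuristic. The sketch below flags values by z-score; the function name and the sample counts are assumptions made for the example, and a production system would likely use a more robust statistic.

```python
from statistics import mean, pstdev


def zscore_outliers(counts, threshold):
    """Return indices whose value lies more than `threshold` standard
    deviations from the mean of `counts`."""
    mu = mean(counts)
    sigma = pstdev(counts)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [i for i, c in enumerate(counts) if abs(c - mu) / sigma > threshold]


# Invented per-minute event counts with one obvious spike.
counts = [10, 11, 9, 10, 12, 10, 11, 95]
print(zscore_outliers(counts, threshold=2.0))
print(zscore_outliers(counts, threshold=3.0))
```

With a threshold of 2.0 the spike at index 7 is flagged; with 3.0 it is missed, because a single extreme value in a short series inflates the standard deviation it is measured against.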

Crafting Custom Reports with Panache

Custom report creation is a nuanced endeavor, synthesizing raw log data into narrative forms tailored to precise operational or strategic objectives. Unlike generic dashboards, bespoke reports emphasize context, granularity, and actionable insights. Analysts can employ dynamic filtering, aggregation, and temporal slicing to highlight pivotal trends, anomalies, and correlations. The inclusion of comparative metrics or anomaly heatmaps further accentuates critical deviations, rendering reports not merely informative but prescriptive. By embracing customization, organizations unlock the capacity to communicate complex operational phenomena lucidly, ensuring stakeholders understand the subtleties of systemic performance.

Automating Reporting Schedules

Automation of reporting schedules transcends convenience; it is an imperative for ensuring the timely dissemination of intelligence across organizational hierarchies. Sophisticated scheduling frameworks allow reports to be generated and distributed at precise intervals, aligned with operational cycles. Automation can encompass conditional triggers, whereby reports are synthesized in response to specific events, thresholds, or anomalies. This mechanization mitigates human latency, fosters consistency, and ensures decision-makers have continuous access to the most current insights. Moreover, integrating alert mechanisms with automated reports amplifies responsiveness, allowing organizations to pivot strategies with alacrity when emergent patterns surface.

Visualization Techniques for Enhanced Comprehension

Data visualization is the cognitive conduit through which complex log datasets are rendered intuitively comprehensible. Beyond mere graphical embellishments, effective visualizations elucidate latent relationships, temporal dynamics, and performance anomalies. Techniques may include heatmaps to illustrate intensity, scatter plots for correlation mapping, or Sankey diagrams to trace event flows. Employing interactivity—drill-downs, filters, and dynamic overlays—enhances analytical agility, enabling stakeholders to traverse from macroscopic trends to granular anomalies seamlessly. Optimal visualization not only augments comprehension but also catalyzes decision-making, transforming voluminous logs into actionable intelligence.

Optimizing Performance with Expansive Datasets

Navigating large datasets necessitates meticulous performance optimization strategies. Indexing, sharding, and data partitioning are instrumental in mitigating latency when querying colossal log repositories. Parallel processing and distributed computing frameworks, such as map-reduce paradigms, facilitate efficient computation across vast data landscapes. Memory management, caching mechanisms, and incremental updates further enhance system responsiveness, ensuring that analytics pipelines remain nimble even under substantial loads. Performance tuning is an iterative endeavor, often requiring empirical profiling, bottleneck identification, and algorithmic refinement to achieve optimal throughput without compromising accuracy or granularity.

Integrating Real-Time Analytics Pipelines

Real-time analytics pipelines represent the zenith of operational intelligence, enabling instantaneous ingestion, processing, and interpretation of log streams. These pipelines demand seamless orchestration of data acquisition, transformation, and visualization modules. Stream processing frameworks, event-driven architectures, and low-latency messaging queues converge to create a synchronous ecosystem where insights emerge contemporaneously with system events. The integration of real-time anomaly detection, trend monitoring, and automated reporting transforms raw telemetry into a living, actionable narrative, allowing organizations to respond proactively rather than reactively to systemic perturbations.

Leveraging Semantic Context in Logs

Embedding semantic context into log analysis elevates it from procedural scrutiny to cognitive interpretation. By associating log entries with domain ontologies, metadata schemas, and contextual hierarchies, analysts can infer not only what occurred but why it occurred. Semantic enrichment aids in disambiguating superficially similar events, clustering related anomalies, and prioritizing actions based on operational relevance. Techniques such as natural language processing, entity recognition, and sentiment analysis—when applied judiciously—can transform mundane log data into a knowledge asset, illuminating relationships, causality, and latent systemic risks previously obscured by raw text.

Predictive Analytics for Preemptive Interventions

Predictive analytics leverages historical log patterns to forecast potential disruptions, enabling preemptive interventions. Regression models, time-series forecasting, and probabilistic simulations coalesce to anticipate performance degradation, system failures, or anomalous activity. Integrating these forecasts with automated alerting mechanisms transforms reactive operations into a proactive paradigm. Beyond mere prediction, sophisticated predictive analytics can prescribe remediation strategies, offering insights into optimal configurations, load balancing, or resource allocation to mitigate emergent risks. By harnessing foresight, organizations can preserve continuity, enhance reliability, and optimize operational efficacy.
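As one hedged example of regression-based forecasting, the sketch below fits a straight line to past observations by ordinary least squares and extrapolates it. The function name and the disk-usage figures are invented; real workloads are rarely this linear, so treat the projection as a first approximation.

```python
def linear_fit(xs, ys):
    """Least-squares slope and intercept for y = slope * x + intercept."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Invented sample: disk usage (percent) observed on days 0..3.
days = [0, 1, 2, 3]
usage = [10, 12, 14, 16]
slope, intercept = linear_fit(days, usage)
print(f"projected usage on day 30: {slope * 30 + intercept:.1f}%")
```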

Deployment Conundrums and Their Arcane Manifestations

In the labyrinthine expanse of modern deployment pipelines, ineffable complications often manifest as subtle perturbations that elude cursory scrutiny. These can emerge from idiosyncratic versioning conflicts, latent dependency obfuscations, or ephemeral network anomalies. Such anomalies, if unrecognized, propagate insidiously through operational strata, catalyzing cascades of system instability. Developers frequently encounter ephemeral misconfigurations that masquerade as standard runtime errors, yet their etiology is often entwined with an amalgamation of infrastructure misalignments and unanticipated code interactions.

The esoteric nature of container orchestration, coupled with the mercurial tendencies of distributed systems, introduces a panoply of edge cases. These edge cases defy conventional wisdom and necessitate meticulous inspection of transactional flows, inter-service latency, and concurrency bottlenecks. The challenge lies in discerning between symptomatic aberrations and the root cause, a task often exacerbated by voluminous log data and asynchronous execution sequences. As deployments scale, even minor discrepancies in environment variables or schema migrations can precipitate disproportionate operational dissonance.

Diagnostic Instruments for Systemic Perspicacity

Effective troubleshooting mandates the employment of sophisticated diagnostic apparatus. Log aggregators and observability frameworks function as cognitive amplifiers, translating cryptic system whispers into interpretable narratives. Time-series analyzers and anomaly detection modules elucidate latent patterns that may presage systemic instability. By harnessing these diagnostic instruments, engineers attain perspicacity into asynchronous event propagation, memory consumption aberrations, and sporadic I/O latency.

Structured log retention strategies are paramount for forensic examination. Employing tiered storage solutions ensures that ephemeral logs are preserved long enough for post-mortem analysis, without encumbering primary storage or impeding system throughput. Curated indices, coupled with semantic tagging, enable rapid filtration of relevant events from voluminous log corpora. This approach mitigates cognitive overload while enhancing the accuracy of root-cause analysis, facilitating expedient remediation of recurrent deployment anomalies.

Performance Tuning Through Subtle Calibration

Optimization within complex ecosystems requires nuanced calibration rather than brute-force adjustments. Profiling tools illuminate computational hot spots, memory leaks, and thread contention, guiding the meticulous redistribution of resources. Fine-tuning garbage collection cycles, request throttling thresholds, and database connection pools can yield substantial improvements in throughput and latency. These interventions, while subtle, forestall systemic degradation and enhance the resilience of mission-critical services.

Load simulation and stress testing constitute essential preemptive methodologies. By subjecting infrastructure to synthetic extremities, latent vulnerabilities are exposed before production exposure. Metrics gathered during these simulations inform adaptive algorithms that regulate resource allocation dynamically, preempting bottlenecks and ensuring continuity under variable operational exigencies. Iterative tuning, guided by empirical telemetry, transforms reactive maintenance into proactive stewardship.

Preemptive Safeguards Against Data Attrition

Data loss represents an existential threat within contemporary information architectures, necessitating rigorous prophylactic measures. Versioned backups, redundant replication across geographically disparate nodes, and continuous data verification routines constitute a triad of defense against inadvertent attrition. Employing checksums and hash-based integrity verification ensures that replicated datasets remain congruent, while snapshot-based recovery provides temporal rollback capabilities in case of corruption.
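Hash-based integrity verification can be sketched with the standard `hashlib` module. The helper names and the sample log chunks are assumptions for illustration; SHA-256 is used here as a common choice, not one the text prescribes.

```python
import hashlib


def digest_chunks(chunks):
    """SHA-256 hex digest of a stream of byte chunks."""
    hasher = hashlib.sha256()
    for chunk in chunks:
        hasher.update(chunk)
    return hasher.hexdigest()


def replica_is_intact(primary_chunks, replica_chunks):
    """Compare digests instead of comparing the data byte by byte."""
    return digest_chunks(primary_chunks) == digest_chunks(replica_chunks)


# Invented data: the second replica differs by a single character.
primary = [b"2024-01-15 login ok\n", b"2024-01-15 logout\n"]
corrupted = [b"2024-01-15 login ok\n", b"2024-01-15 l0gout\n"]
print(replica_is_intact(primary, list(primary)))
print(replica_is_intact(primary, corrupted))
```

Feeding the hasher chunk by chunk keeps memory use flat, so the same approach works for archives far larger than available RAM.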

Redundant architectures, including multi-zone failover configurations and quorum-based consensus protocols, safeguard against both hardware failure and network partitions. Integrating these strategies within automated orchestration pipelines reduces human error, ensuring consistency in execution and reliability in restoration. Continuous monitoring of storage subsystem health, coupled with predictive failure analytics, preempts catastrophic loss events before they materialize.

Observability and Latency Deconstruction

Observability transcends mere monitoring, encapsulating the capacity to infer system states from signals dispersed across heterogeneous subsystems. Trace propagation, event correlation, and dependency mapping are central to discerning the hidden causalities behind latency spikes and throughput irregularities. By instrumenting services with high-fidelity telemetry, engineers acquire granular insight into execution pathways, queue buildup, and asynchronous error propagation.

Analyzing tail latency, rather than merely averages, reveals subtle systemic fragilities. Rare, high-impact delays often portend architectural constraints or inefficient concurrency models. By focusing on percentile distributions and outlier behaviors, optimization efforts target the phenomena most detrimental to user experience. Such a precision-guided methodology converts vast telemetry streams into actionable intelligence, reducing noise and amplifying signal fidelity.
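The gap between averages and tail percentiles is easy to demonstrate. The sketch below uses the nearest-rank percentile definition, one of several in common use; the function name and the latency samples are invented for the example.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of the samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


# Invented latencies in milliseconds: mostly fast, with two slow outliers.
latencies = [12] * 98 + [900, 1500]
print("mean:", sum(latencies) / len(latencies))
print("p50: ", percentile(latencies, 50))
print("p99: ", percentile(latencies, 99))
```

Here the median stays at 12 ms while the 99th percentile is 900 ms, and the mean sits between them and describes neither the typical request nor the slow ones.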

Contingency Planning and Systemic Resilience

Proactive resilience engineering is pivotal for sustaining operational integrity. Scenario modeling, including black-swan event simulations and controlled chaos experiments, cultivates robustness against unpredictable perturbations. By deliberately inducing controlled failures, system dependencies and recovery protocols are stress-tested, revealing latent vulnerabilities that might otherwise remain concealed until critical failures occur.

Adaptive throttling, circuit-breaking mechanisms, and graceful degradation strategies transform potential catastrophes into manageable contingencies. By integrating these mechanisms within orchestration layers, infrastructure can dynamically reconfigure itself to sustain service availability, even under duress. This iterative resilience paradigm fosters a culture of anticipatory maintenance, in which failure modes are understood, mitigated, and continuously refined.
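A circuit breaker, one of the mechanisms named above, can be reduced to a small state machine. The following is a minimal sketch under stated assumptions: the class name, the failure threshold, and the cool-down period are illustrative, and the clock is injectable so the behavior can be exercised without waiting.

```python
import time


class CircuitBreaker:
    """Stop calling a failing dependency until a cool-down has passed."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self):
        """Return True if a call may be attempted now."""
        if self.opened_at is None:
            return True
        # After the cool-down, trial calls are let through (half-open).
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None  # close the circuit again

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()  # open (or re-open) the circuit


# Simulated clock so the example runs instantly.
now = [0.0]
breaker = CircuitBreaker(max_failures=2, reset_after=10.0, clock=lambda: now[0])
breaker.record_failure()
breaker.record_failure()
print(breaker.allow())  # circuit is open
now[0] = 10.0
print(breaker.allow())  # cool-down elapsed, trial call permitted
```

Callers check `allow()` before each request and report the outcome afterwards; a failed trial call re-opens the circuit and restarts the cool-down.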

Integration and Automation with FortiAnalyzer

FortiAnalyzer, a linchpin in cybersecurity operations, serves as a central point for integrating security data streams from many sources. Organizations striving for efficient security postures often encounter an inundation of logs, events, and alerts. FortiAnalyzer goes beyond basic logging by consolidating these disparate data points into a coherent, actionable intelligence repository. Its integration capabilities are pivotal for enterprises seeking both granular visibility and strategic oversight.

Synergizing FortiAnalyzer with SIEM Platforms

FortiAnalyzer's interoperability with Security Information and Event Management (SIEM) platforms empowers organizations to traverse the chasm between raw data and actionable intelligence. By channeling logs into SIEM ecosystems, it enables advanced threat analytics, anomaly detection, and correlation-driven insights. Through structured APIs and native connectors, FortiAnalyzer orchestrates a seamless data continuum, obviating manual ingestion and mitigating latency in threat detection.

Interfacing with Third-Party Security Ecosystems

The FortiAnalyzer ecosystem is not insular; it is architected for pervasive connectivity with third-party tools. Endpoint detection solutions, intrusion detection systems, and vulnerability scanners can all be harmonized with FortiAnalyzer. This interoperability engenders a panoramic view of organizational security, wherein disparate signals coalesce into a singular operational narrative. The malleability of integrations ensures adaptability even as security architectures evolve, fostering resilience in dynamic threat landscapes.

Harnessing APIs for Custom Automation

At the fulcrum of FortiAnalyzer's automation potential lies its robust API framework. These APIs enable bespoke scripts, event-driven workflows, and dynamic configurations, allowing security teams to transcend the drudgery of repetitive manual tasks. From automated report generation to real-time alert triaging, APIs transform FortiAnalyzer into a programmable nexus of operational efficiency. Developers can craft intricate routines that respond to evolving threats with minimal human intervention, cultivating a proactive security posture.

Orchestrating Alerts for Operational Precision

Alert fatigue is a pervasive challenge in modern Security Operations Centers (SOCs). FortiAnalyzer's alert orchestration capabilities mitigate this by implementing priority-based workflows, conditional triggers, and automated escalations. Alerts can be categorized by severity, source, or contextual relevance, ensuring that critical threats receive immediate attention while less pertinent events are processed asynchronously. This stratified approach to alert management enhances decision-making acuity and conserves valuable human resources.
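
Severity-based triage can be sketched with a priority queue. The severity labels, their ranking, and the alert messages below are hypothetical and do not reflect FortiAnalyzer's internal data model.

```python
import heapq
import itertools

# Hypothetical severity labels; a lower rank is handled first.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}

class AlertQueue:
    """Pops alerts by severity, preserving arrival order within the same severity."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker for equal severities

    def push(self, severity, message):
        entry = (SEVERITY_RANK[severity], next(self._counter), severity, message)
        heapq.heappush(self._heap, entry)

    def pop(self):
        _, _, severity, message = heapq.heappop(self._heap)
        return severity, message

    def __len__(self):
        return len(self._heap)

queue = AlertQueue()
queue.push("low", "Policy change on branch firewall")
queue.push("critical", "Outbound traffic to known C2 address")
queue.push("medium", "Repeated failed admin logins")
queue.push("critical", "Malware detected on file server")

triage_order = [queue.pop() for _ in range(len(queue))]
```

Both critical alerts come out first, in the order they arrived, followed by the medium and low entries.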

Automating Repetitive Security Tasks

Repetitive tasks, such as log normalization, event categorization, and compliance reporting, are prime candidates for automation within FortiAnalyzer. By leveraging scripting and built-in automation modules, security personnel can eliminate laborious manual interventions. This not only accelerates operational throughput but also reduces the probability of human error, enhancing overall data fidelity. Automation cultivates a vigilant, continuously responsive security environment, essential in high-velocity threat landscapes.

Scenario-Based Efficiency Enhancements

Consider a mid-sized enterprise managing a labyrinthine network topology. FortiAnalyzer can automatically aggregate logs from firewalls, endpoints, and cloud instances, triggering automated alerts for anomalous activities. Scripts can execute predefined remediation actions, such as isolating compromised endpoints or adjusting firewall policies. This scenario exemplifies how automation amplifies operational efficiency, transforming reactive responses into anticipatory defenses.

Another scenario involves a global corporation with disparate regional SOCs. By integrating FortiAnalyzer with a centralized SIEM, alerts from multiple geographies are correlated and prioritized. Automated workflows distribute critical incidents to relevant teams based on location, expertise, and threat severity. This minimizes response latency and ensures cohesive, organization-wide threat mitigation.

Scripting Options for Tailored Security Workflows

FortiAnalyzer’s scripting ecosystem is replete with options to customize security workflows. Python and RESTful API-based scripts allow intricate rule sets, dynamic data queries, and contextual reporting. Security teams can construct conditional routines that respond to specific threat patterns, automate compliance audits, or synchronize policy enforcement across diverse network segments. These scripting capabilities render FortiAnalyzer a versatile tool for both prescriptive and adaptive security orchestration.

Streamlining Compliance Through Automation

Regulatory compliance is an omnipresent concern for modern enterprises. FortiAnalyzer facilitates automated evidence collection, log retention, and policy verification to align with frameworks such as ISO 27001, NIST, or GDPR. By codifying compliance tasks into repeatable automated workflows, organizations minimize the operational burden of audits and inspections. Automated compliance reduces human error, ensures consistency, and provides a verifiable trail for regulatory scrutiny.

Optimizing Threat Intelligence Integration

FortiAnalyzer serves as a conduit for enriching threat intelligence pipelines. By ingesting feeds from multiple threat intelligence sources, it contextualizes events and alerts within a broader security landscape. Automation scripts can correlate threat indicators with internal network logs, proactively flagging potential compromises. This predictive posture enhances situational awareness and fortifies the enterprise against emerging threats.
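
Correlating threat indicators with internal logs might look like the following sketch. It assumes logs are already parsed into dictionaries with `srcip` and `dstip` keys and that indicators arrive as plain IP addresses and CIDR ranges. The addresses come from reserved documentation ranges.

```python
import ipaddress

def find_ioc_hits(log_entries, indicator_ips, indicator_networks=()):
    """Return (entry, field) pairs where a source or destination address matches an indicator."""
    exact = {ipaddress.ip_address(ip) for ip in indicator_ips}
    networks = [ipaddress.ip_network(net) for net in indicator_networks]
    hits = []
    for entry in log_entries:
        for field in ("srcip", "dstip"):
            value = entry.get(field)
            if value is None:
                continue
            try:
                addr = ipaddress.ip_address(value)
            except ValueError:
                continue  # skip malformed addresses rather than abort the scan
            if addr in exact or any(addr in net for net in networks):
                hits.append((entry, field))
    return hits

logs = [
    {"srcip": "10.0.0.5", "dstip": "198.51.100.7"},
    {"srcip": "10.0.0.6", "dstip": "192.0.2.10"},
    {"srcip": "203.0.113.44", "dstip": "10.0.0.9"},
]
hits = find_ioc_hits(logs, ["198.51.100.7"], ["203.0.113.0/24"])
```

Here the first entry matches on its destination address and the third on its source address. The second entry matches no indicator.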

Enhancing SOC Productivity Through Orchestration

Operational efficiency within Security Operations Centers is magnified when FortiAnalyzer orchestrates tasks across tools, teams, and geographies. By automating incident classification, escalation, and reporting, SOC analysts are liberated to focus on higher-order threat investigations. This orchestration reduces cognitive load, accelerates response cycles, and ensures that human expertise is leveraged where it is most impactful.

Leveraging Machine-Driven Insights

The amalgamation of FortiAnalyzer with machine-driven analytics introduces a dimension of anticipatory intelligence. Automated anomaly detection, heuristic evaluations, and pattern recognition can be programmed through scripts and integrations. These machine-driven insights complement human judgment, enabling a dual-layer defense strategy that is both adaptive and predictive. The convergence of automation and analytics creates an operational ecosystem primed for hyper-resilient security outcomes.

Adaptive Automation in Dynamic Environments

In highly dynamic network environments, static workflows are insufficient. FortiAnalyzer’s automation capabilities adapt to evolving threats, network topologies, and operational contexts. Scripts and APIs can dynamically recalibrate alert thresholds, reroute logs, and initiate targeted responses based on real-time intelligence. This adaptive automation transforms FortiAnalyzer into a continuously learning sentinel, capable of evolving alongside both organizational infrastructure and threat vectors.

Understanding the NSE5_FAZ-7.0 Certification Exam

The NSE5_FAZ-7.0 examination represents a sophisticated foray into network security and firewall administration. This credential transcends rudimentary networking concepts and requires a perspicacious understanding of Fortinet’s FortiOS environment. Candidates embarking on this intellectual odyssey must cultivate an analytical mindset capable of disentangling complex topologies and troubleshooting cryptic configurations. Familiarity with advanced routing paradigms, VPN orchestration, and granular policy implementation is indispensable.

Decoding the Exam Structure

The exam encompasses a myriad of question typologies designed to probe both theoretical acumen and practical dexterity. Questions are typically scenario-based, requiring candidates to apply principles to intricate network configurations. Multiple-choice queries are often augmented with simulations where candidates must manipulate virtual devices, a practice designed to emulate real-world operational exigencies. Time management becomes a crucible for success, as navigating multi-layered scenarios demands meticulous prioritization and focused cognition.

Strategizing for Effective Study

Embarking on a preparatory regimen necessitates a methodical approach. Begin with a comprehensive delineation of the FortiOS documentation, ensuring familiarity with command-line syntax, GUI navigation, and logging paradigms. Employ an iterative study cycle, interleaving theoretical review with hands-on experimentation. Conceptual scaffolding can be enhanced by mnemonic devices, which facilitate retention of policy hierarchies, VPN modalities, and intricate routing tables.

Mastering Practical Labs and Simulations

Practical laboratories are the crucible wherein knowledge transmutes into competence. Establish a virtualized environment using Fortinet’s simulation tools or home lab configurations. Experiment with firewall policies, NAT translation rules, and SSL inspection mechanisms. Simulate multi-site VPN topologies and intricate routing failovers to observe real-time network behavior. These exercises cultivate an intuitive grasp of operational intricacies that purely theoretical study cannot provide.

Leveraging Self-Assessment Techniques

Self-assessment constitutes an indispensable facet of exam readiness. Deploy iterative quizzes to gauge retention and identify cognitive lacunae. Scenario-based exercises, where the candidate configures firewalls under contrived operational constraints, foster adaptive problem-solving capabilities. Maintain a reflective journal to chronicle errors, elucidate misconceptions, and consolidate strategies for resolution. Such metacognitive practices engender both confidence and proficiency, fortifying the candidate against exam-day exigencies.

Navigating VPN and Security Architectures

VPN orchestration and security framework comprehension constitute pivotal competencies for the NSE5_FAZ-7.0 exam. Candidates must internalize site-to-site, client-to-site, and SSL VPN mechanisms, understanding encryption protocols and authentication hierarchies. Firewall policies, including deep packet inspection and threat mitigation paradigms, must be interpreted with precision. Mastery in this domain requires continuous engagement with evolving security exploits and defense methodologies, ensuring readiness for dynamic threat landscapes.

Optimizing Time Management for Exam Scenarios

The exam’s temporal constraints necessitate a disciplined chronometry strategy. Allocate initial minutes to peruse the entire question set, identifying scenarios that demand complex configuration versus those solvable through rapid analysis. Prioritize questions with high point yield and mitigate cognitive fatigue through intermittent mental pauses. Temporal vigilance ensures candidates allocate cognitive resources efficiently, reducing error propensity under pressure.

Advanced Routing and Network Topologies

A granular understanding of advanced routing protocols—OSPF, BGP, and policy-based routing—is imperative. Candidates must visualize network topologies, predict routing convergence, and troubleshoot anomalous packet flows. Configuring redundant paths and interpreting dynamic route propagation are core competencies. Simulated network environments amplify experiential understanding, enabling candidates to internalize best practices for resilient and secure infrastructure deployment.

Continuous Learning and Professional Growth

The NSE5_FAZ-7.0 certification catalyzes not merely credential acquisition but an ongoing intellectual journey. Post-certification, candidates are encouraged to engage in iterative knowledge augmentation, exploring emergent Fortinet functionalities, advanced threat landscapes, and network automation paradigms. Professional growth is accelerated through participation in community forums, scenario-based workshops, and continuous skills reinforcement, ensuring long-term efficacy in dynamic IT ecosystems.

Forensic Investigations and Threat Reconstruction

FortiAnalyzer excels in the realm of forensic cybersecurity, transforming opaque log streams into lucid narratives that reveal the anatomy of an attack. The platform enables analysts to perform meticulous event reconstruction, tracing an adversary’s movement across network nodes, endpoints, and cloud environments. Through its temporal indexing and correlation mechanisms, FortiAnalyzer allows the chronological synthesis of attack sequences, highlighting entry vectors, lateral maneuvers, and exfiltration attempts.

Analysts can employ drill-down queries to isolate specific anomalies, such as unexpected protocol usage, suspicious session durations, or irregular user behavior. This forensic precision is indispensable when investigating advanced persistent threats (APTs), which are often characterized by stealth, persistence, and adaptability. FortiAnalyzer’s capacity to collate and correlate multi-device logs ensures that even obfuscated attack paths can be illuminated, enhancing both reactive and proactive threat mitigation strategies.

Moreover, the platform’s audit trail capabilities provide legal and regulatory assurance. Every investigative step is logged, ensuring that forensic findings are reproducible and defensible in regulatory or judicial scenarios. This layer of accountability strengthens an organization’s governance posture while enhancing operational resilience against sophisticated cyber incursions.

Compliance Reporting and Regulatory Alignment

In modern enterprise environments, compliance is both a legal imperative and a strategic differentiator. FortiAnalyzer is engineered to simplify regulatory adherence by providing customizable reports aligned with industry standards such as GDPR, HIPAA, PCI DSS, and ISO 27001. The platform’s templated reporting modules allow organizations to generate comprehensive evidence of security controls, policy enforcement, and incident handling.

Beyond templated reporting, FortiAnalyzer enables the creation of bespoke compliance dashboards, which visualize adherence metrics in real-time. These dashboards allow executives, auditors, and compliance officers to rapidly identify deviations from mandated practices, ensuring that corrective measures are deployed promptly. By centralizing security data from disparate devices, the platform mitigates the complexity of fragmented reporting, reducing operational overhead while enhancing visibility.

The analytical dimension of FortiAnalyzer further reinforces compliance efforts. Through anomaly detection and trend analysis, organizations can proactively identify potential compliance gaps before they manifest into violations. For example, unusual access attempts to sensitive data repositories or unauthorized configuration changes can trigger alerts, ensuring continuous adherence to regulatory requirements.

Cloud-Native Deployment and Elastic Scalability

FortiAnalyzer transcends traditional on-premises constraints through cloud-native deployment capabilities. Leveraging cloud elasticity, organizations can scale storage, compute, and analytical resources dynamically in response to fluctuating log volumes. This is particularly valuable for global enterprises, where distributed networks generate prodigious amounts of telemetry that must be ingested, normalized, and analyzed in real-time.

Cloud-native FortiAnalyzer deployments provide seamless integration with virtualized Fortinet devices, enabling centralized logging and analysis without the limitations of physical infrastructure. Hybrid deployment models are also supported, allowing organizations to maintain sensitive logs on-premises while offloading volumetric analytics to the cloud. This hybrid architecture optimizes both security and cost-efficiency, ensuring that enterprises can achieve high performance without compromising data sovereignty or compliance requirements.

Elastic scaling also enhances resilience. By leveraging cloud infrastructure, FortiAnalyzer can distribute workloads across multiple nodes, ensuring uninterrupted log ingestion and analytical processing even during peak traffic periods or large-scale cyber incidents. The platform’s ability to handle surges in telemetry enables enterprises to maintain uninterrupted situational awareness and threat detection capabilities.

Behavioral Analytics and Anomaly Detection

One of FortiAnalyzer’s most compelling capabilities lies in behavioral analytics. Traditional rule-based detection systems rely on predefined signatures, which can be circumvented by sophisticated adversaries. In contrast, behavioral analytics identifies deviations from established baselines, detecting novel threats without relying on explicit signatures.

FortiAnalyzer constructs behavioral baselines across multiple dimensions, including user activity, network traffic patterns, device interactions, and application usage. Machine learning algorithms continuously refine these baselines, enabling the detection of subtle deviations that may indicate insider threats, compromised accounts, or lateral movement within networks. For example, a user accessing sensitive financial records at atypical hours or transferring data to unfamiliar endpoints may trigger high-priority alerts, even in the absence of known malware signatures.
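
The baseline-and-deviation idea can be illustrated with a simple z-score test. This is a deliberately basic statistical stand-in, not the machine learning the platform itself may use, and the traffic figures are invented.

```python
from statistics import mean, pstdev

def is_anomalous(history, observed, threshold=3.0):
    """Flag a value lying more than `threshold` standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return observed != mu  # a perfectly flat baseline makes any change notable
    return abs(observed - mu) / sigma > threshold

# Hypothetical daily outbound transfer volume (MB) for one user over a week.
daily_mb = [100, 110, 95, 105, 90, 100, 100]

normal_day = is_anomalous(daily_mb, 104)
exfil_like_day = is_anomalous(daily_mb, 500)
```

A day at 104 MB sits well inside the baseline, while 500 MB is flagged. In practice, separate baselines per user, per hour of day, and per destination would reduce false positives.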

This approach enhances predictive security. By identifying anomalous behaviors early, FortiAnalyzer enables preemptive mitigation, reducing the likelihood of large-scale data breaches. Furthermore, analysts can correlate behavioral anomalies with contextual data from other Fortinet devices, providing a multi-dimensional view of emerging threats and facilitating more precise incident response.

Threat Intelligence Integration

FortiAnalyzer does not operate in isolation; it synergizes with global threat intelligence frameworks to enrich log data with contextual insights. By integrating external threat feeds and Fortinet’s proprietary intelligence, the platform enhances the detection of known indicators of compromise (IoCs) and tactics, techniques, and procedures (TTPs) associated with advanced cyber adversaries.

This integration allows for automated threat enrichment. When a log entry indicates suspicious activity, FortiAnalyzer can cross-reference it against threat intelligence databases, providing real-time insights into potential adversaries, attack vectors, and malware signatures. Security teams benefit from accelerated triage and investigation, reducing dwell time and enhancing overall response efficacy.

Moreover, threat intelligence integration enables proactive defense. FortiAnalyzer can identify emerging threats targeting similar industries or geographies, allowing organizations to implement preemptive measures. This predictive capability transforms security from a reactive function into a strategic operational advantage.

Operational Optimization and Security Orchestration

Beyond threat detection, FortiAnalyzer enhances operational efficiency through security orchestration and automated workflows. The platform can trigger actions based on analytical insights, such as modifying firewall rules, initiating endpoint quarantines, or escalating incidents to security operations center (SOC) personnel. By automating repetitive tasks, FortiAnalyzer reduces response latency and minimizes human error, freeing analysts to focus on strategic threat hunting and investigation.

Operational dashboards provide real-time visibility into security posture, highlighting metrics such as event volume, threat severity, and incident resolution time. This level of transparency supports continuous improvement, enabling organizations to fine-tune security policies, optimize device configurations, and allocate resources effectively.

Security orchestration also facilitates cross-device collaboration. For example, an anomaly detected on a FortiGate firewall can trigger coordinated actions across FortiEDR endpoints and FortiMail systems. This synchronization ensures that threats are neutralized holistically rather than in isolation, reinforcing a unified defense framework.

Multi-Tenancy and Enterprise Segmentation

FortiAnalyzer’s architecture supports multi-tenancy, allowing large organizations and managed security service providers (MSSPs) to segregate data, dashboards, and reporting by department, client, or geographic region. This capability is crucial for enterprises with complex organizational structures or regulatory requirements that mandate strict data separation.

Segmentation extends to access controls, ensuring that only authorized personnel can view or manipulate specific datasets. This granular control enhances both security and compliance, preventing inadvertent exposure of sensitive information while facilitating tailored insights for different operational units. Multi-tenancy also streamlines reporting, enabling each segment to receive customized analytical outputs without compromising overall visibility or coherence.

Data Normalization and Enrichment

The sheer volume and diversity of log data present a significant challenge for enterprises. FortiAnalyzer addresses this through robust data normalization and enrichment capabilities. Incoming telemetry from disparate devices is parsed, standardized, and categorized, ensuring consistent interpretation across the entire infrastructure.

Enrichment adds contextual value to logs by appending metadata, such as geolocation, threat classification, device type, and user profile. This process transforms raw data into actionable intelligence, enabling faster correlation, anomaly detection, and incident response. Normalization and enrichment also enhance analytical accuracy, reducing false positives and providing security teams with more precise insights into actual threats.
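
A minimal sketch of normalization followed by enrichment, assuming logs arrive as space-separated `key=value` pairs with optional double quotes. The sample line, the device inventory, and the added `site` and `device_type` fields are illustrative assumptions.

```python
import shlex

def parse_kv_log(line):
    """Parse a key=value log line into a dict; quoted values may contain spaces."""
    fields = {}
    for token in shlex.split(line):
        key, sep, value = token.partition("=")
        if sep:
            fields[key] = value
    return fields

def enrich(fields, device_inventory):
    """Add a combined timestamp and any inventory metadata known for the reporting device."""
    enriched = dict(fields)
    if "date" in fields and "time" in fields:
        enriched["timestamp"] = f'{fields["date"]}T{fields["time"]}'
    enriched.update(device_inventory.get(fields.get("devname"), {}))
    return enriched

# Hypothetical inventory keyed by device name.
inventory = {"FGT-Branch-01": {"site": "branch-01", "device_type": "firewall"}}

sample = ('date=2024-01-15 time=10:30:00 devname="FGT-Branch-01" type="traffic" '
          'srcip=10.0.0.5 dstip=198.51.100.7 action="deny" msg="Denied by policy"')

record = enrich(parse_kv_log(sample), inventory)
```

`shlex.split` handles the quoting here, but it would need replacing for logs whose values contain unbalanced quotes or apostrophes.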

Historical Analysis and Trend Forecasting

FortiAnalyzer’s archival capabilities enable longitudinal analysis, offering insight into historical security trends. By examining past incidents, event volumes, and anomaly patterns, enterprises can identify systemic vulnerabilities, recurring attack vectors, and seasonal threat fluctuations.

Trend forecasting leverages this historical data to anticipate future threats. Machine learning models analyze temporal patterns, user behaviors, and network interactions to predict potential security incidents. This foresight empowers organizations to preemptively adjust policies, fortify defenses, and allocate resources to areas of heightened risk, transforming data archives into strategic foresight tools.
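
To make the forecasting idea tangible, the sketch below fits a least-squares line to historical counts and extrapolates it. A straight-line trend is a much simpler technique than the machine learning models mentioned above and is used here purely for illustration. The weekly counts are invented.

```python
def linear_forecast(values, steps_ahead=1):
    """Fit y = intercept + slope * x by least squares over x = 0..n-1 and extrapolate."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept + slope * (n - 1 + steps_ahead)

# Hypothetical weekly counts of blocked intrusion attempts.
weekly_blocked = [100, 120, 140, 160]
next_week = linear_forecast(weekly_blocked)
two_weeks_out = linear_forecast(weekly_blocked, steps_ahead=2)
```

Because the sample data grows by exactly 20 per week, the projection continues that line. Real telemetry is noisier and often seasonal, so a linear fit should be treated as a first approximation only.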

Real-Time Alerting and Incident Response

FortiAnalyzer excels at converting analytical insights into immediate operational action. Real-time alerting mechanisms notify security personnel of anomalies, policy violations, and threat signatures with minimal latency. Alerts are prioritized based on severity, enabling SOC teams to focus on high-impact incidents first.

Integrated response workflows allow alerts to trigger automated or semi-automated remediation steps, such as isolating compromised devices, blocking suspicious IP addresses, or initiating forensic logging. This rapid response capability is vital in minimizing dwell time, containing threats, and mitigating potential damage from security breaches.

Initial Setup and System Prerequisites

FortiAnalyzer's efficacy is predicated upon the assiduous establishment of a robust foundation. The appliance, whether physical, virtual, or cloud-based, requires a precise configuration of network parameters, time synchronization, and system-level authentication. Administrators should meticulously delineate IP schemas, VLAN segregation, and routing tables to avoid inadvertent packet loss or suboptimal log routing. NTP configuration is not merely perfunctory but is essential for correlating event timestamps across distributed devices. Misalignment in system clocks may yield incoherent analytics, complicating incident reconstruction.

Equally critical is the establishment of cryptographically resilient administrative credentials. Password entropy should be augmented with periodic rotation policies and multifactor authentication. Early configuration steps also involve defining management interfaces and segregating them from production traffic to prevent inadvertent exposure. Additionally, understanding hardware-specific idiosyncrasies, such as SSD wear leveling, CPU saturation thresholds, and memory buffer allocation, contributes to optimal system longevity and consistent log ingestion.

Device Registration and Network Integration

Device onboarding is a delicate interplay of security and network engineering. FortiAnalyzer ingests event streams from an array of devices, each with unique log schemas and transmission protocols. FortiGate firewalls, FortiMail gateways, FortiWeb application firewalls, and other endpoints must be registered using secure channels, typically leveraging SSL/TLS certificates to ensure authenticity. During registration, administrators assign device identifiers and categorize them within operational zones, enabling coherent policy mapping and reporting.

The subtleties of log forwarding protocols, such as UDP, TCP, or TLS-encrypted syslog, must be accounted for. UDP delivery is unacknowledged, so lost packets mean silently lost events, whereas TCP-based transport retransmits them. Network latency and packet fragmentation can impede the timely arrival of events, especially in geographically dispersed deployments. Moreover, administrators should anticipate peak log throughput, particularly during attack simulations or high-volume operational periods, and configure buffering or queuing mechanisms accordingly. Failure to integrate devices accurately can result in partial log ingestion, which severely undermines the fidelity of event correlation and subsequent forensic analysis.

Log Storage Architecture and Retention Strategy

The art of log storage in FortiAnalyzer is a sophisticated endeavor that balances immediacy, durability, and compliance. Logs are voluminous, and the appliance employs tiered storage methodologies, optimizing SSDs or high-speed NVMe drives for recent events while relegating archival data to cost-efficient, high-capacity drives. Strategic partitioning, compression, and deduplication are paramount to maintain performance without sacrificing retention integrity.

Administrators must architect retention schedules aligned with both regulatory mandates and operational requirements. For instance, PCI DSS requires audit logs to be retained for at least one year, with the most recent three months immediately available for analysis, whereas incident-specific forensic logs might be preserved for longer durations in immutable storage. Utilizing incremental backup schemes and deduplicated snapshots prevents storage sprawl and ensures rapid log retrieval. It is prudent to simulate storage consumption under various attack scenarios to avoid saturation, which can precipitate dropped events and compromise incident investigation fidelity.
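
Storage consumption for a given retention schedule can be estimated with simple arithmetic. The log rate, average log size, compression ratio, and headroom below are placeholder assumptions and should be replaced with measured values from the actual deployment.

```python
SECONDS_PER_DAY = 86_400

def required_storage_gb(logs_per_second, avg_log_bytes, retention_days,
                        compression_ratio=1.0, headroom=0.0):
    """Estimate stored volume in GB (10^9 bytes) for a sustained log rate and retention period."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    raw_bytes = logs_per_second * avg_log_bytes * SECONDS_PER_DAY * retention_days
    stored_bytes = raw_bytes / compression_ratio * (1 + headroom)
    return stored_bytes / 1e9

# Assumed workload: 2,000 logs/s at 500 bytes each, kept for 365 days,
# compressed 5:1, with 20% headroom for bursts.
estimate_gb = required_storage_gb(2000, 500, 365, compression_ratio=5.0, headroom=0.2)
```

Under these assumptions the raw stream is 86.4 GB per day, and the one-year requirement comes to roughly 7.6 TB after compression and headroom. Rerunning the estimate with a burst rate in place of the sustained rate shows how quickly an attack scenario could exhaust capacity.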

Policy Creation and Granular Access Control

FortiAnalyzer’s policy framework allows for precise regulation of log handling, event categorization, and access control. Policy creation begins with the identification of operational priorities and potential threat vectors. Event filters are established to segregate logs based on device type, severity, or operational relevance. Complex rules may incorporate conditional logic, enabling dynamic adaptation to evolving network patterns.

Access control within the policy framework is critical. Role-based access control (RBAC) can delineate visibility and modification rights, ensuring that sensitive data remains impervious to unauthorized personnel. Policies may also trigger automated actions, such as alert generation or incident escalation, based on pre-defined criteria. Administrators must strike a delicate balance between verbosity and conciseness; overly permissive policies can inundate analysts with irrelevant data, whereas excessively restrictive rules may obscure critical anomalies.
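
The role-based model can be sketched as a permission lookup combined with a data-scope check. The role names, permission strings, and domain labels are hypothetical and do not correspond to FortiAnalyzer's built-in administrator profiles.

```python
from dataclasses import dataclass

# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "viewer": {"logs:read"},
    "analyst": {"logs:read", "reports:read", "reports:create"},
    "admin": {"logs:read", "reports:read", "reports:create", "policy:write"},
}

@dataclass(frozen=True)
class User:
    name: str
    role: str
    domains: frozenset  # data partitions this user may see

def is_allowed(user, action, domain):
    """Permit an action only if the role grants it and the domain is within the user's scope."""
    in_scope = domain in user.domains
    granted = action in ROLE_PERMISSIONS.get(user.role, set())
    return in_scope and granted

alice = User("alice", "analyst", frozenset({"emea"}))
```

Checking scope and permission together means an analyst can create reports for their own region but cannot read another region's logs or change policy anywhere.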

Event Correlation and Contextual Analysis

Event correlation transforms raw logs into actionable intelligence. FortiAnalyzer synthesizes temporal, behavioral, and contextual data points, constructing narratives that reveal hidden threats or operational irregularities. For instance, a series of failed login attempts from geographically disparate locations can be correlated to identify credential stuffing attacks. Contextual metadata, including IP geolocation, device type, and user identity, enhances correlation accuracy, enabling more precise detection of anomalous activity.
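
The failed-login example can be expressed as a sliding-window correlation rule. The event tuple layout, thresholds, and country codes are assumptions for illustration. A production rule would also have to handle out-of-order events and shared accounts.

```python
from collections import defaultdict, deque

def flag_distributed_failures(events, window_seconds=300, min_failures=5, min_countries=3):
    """Flag accounts with many failed logins from several countries inside one time window.

    `events` is an iterable of (timestamp_seconds, username, country, success), sorted by time.
    """
    recent = defaultdict(deque)  # username -> deque of (timestamp, country) failures
    flagged = set()
    for ts, user, country, success in events:
        if success:
            continue
        window = recent[user]
        window.append((ts, country))
        # Drop failures that have aged out of the window.
        while window and ts - window[0][0] > window_seconds:
            window.popleft()
        countries = {c for _, c in window}
        if len(window) >= min_failures and len(countries) >= min_countries:
            flagged.add(user)
    return flagged

events = sorted(
    [(t, "alice", c, False) for t, c in [(0, "US"), (20, "DE"), (40, "BR"), (60, "US"), (80, "CN")]]
    + [(t, "bob", "US", False) for t in (5, 25, 45, 65, 85)]
    + [(t, "carol", c, False) for t, c in [(0, "US"), (1800, "DE"), (3600, "BR"), (5400, "CN"), (7200, "FR")]]
)
suspicious = flag_distributed_failures(events)
```

Only `alice` is flagged: `bob` fails repeatedly from a single country, and `carol`'s failures are spread too far apart in time to fall inside one window.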

Administrators should develop correlation rules iteratively, validating them against historical data to minimize false positives. Incorporating adaptive thresholds based on normal operational baselines allows the system to distinguish between benign anomalies and genuine threats. Furthermore, the correlation engine can integrate external threat intelligence feeds, mapping known malicious indicators against internal logs to proactively mitigate risk.

Alerting Mechanisms and Notification Architecture

Alerting is the linchpin of proactive security operations. FortiAnalyzer supports intricate notification mechanisms, including SMTP-based email, SNMP traps, syslog forwarding, and RESTful API callbacks. Alerts can be finely tuned by severity, recurrence, and suppression parameters, minimizing alert fatigue while ensuring critical incidents receive immediate attention.
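
Suppression of recurring alerts can be sketched as a per-key cool-down. The key format and the ten-minute window are arbitrary choices for this example.

```python
class AlertSuppressor:
    """Allow one notification per alert key within a suppression window."""

    def __init__(self, window_seconds=600):
        self.window_seconds = window_seconds
        self._last_sent = {}  # alert key -> time of last notification

    def should_notify(self, key, now):
        last = self._last_sent.get(key)
        if last is not None and now - last < self.window_seconds:
            return False  # same alert fired recently, so stay quiet
        self._last_sent[key] = now
        return True

suppressor = AlertSuppressor(window_seconds=600)
decisions = [
    suppressor.should_notify("bruteforce:10.0.0.5", now=0),
    suppressor.should_notify("bruteforce:10.0.0.5", now=120),
    suppressor.should_notify("malware:fileserver", now=130),
    suppressor.should_notify("bruteforce:10.0.0.5", now=700),
]
```

The repeat at 120 seconds is suppressed, the unrelated alert goes through, and the original alert notifies again once the window has passed.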

Advanced alerting paradigms may leverage machine learning-derived baselines to identify subtle deviations from expected patterns, such as anomalous protocol usage or abnormal bandwidth consumption. Multi-channel alert delivery ensures redundancy, so that notifications persist even if one communication pathway fails. Administrators must also define escalation hierarchies, ensuring that unresolved alerts are automatically propagated to higher-level stakeholders or incident response teams.
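
An escalation hierarchy reduces to a table of age thresholds. The roles and timings below are hypothetical and would be set by each organization's incident response policy.

```python
# Hypothetical escalation ladder: (minimum unacknowledged age in seconds, recipient).
# Steps must be listed in ascending order of age.
ESCALATION_STEPS = [
    (0, "on-call analyst"),
    (15 * 60, "SOC lead"),
    (60 * 60, "incident response manager"),
]

def escalation_target(raised_at, now, acknowledged, steps=ESCALATION_STEPS):
    """Return who should currently own an alert, or None once it has been acknowledged."""
    if acknowledged:
        return None
    age = now - raised_at
    target = None
    for min_age, role in steps:
        if age >= min_age:
            target = role
    return target
```

A scheduler that evaluates this function periodically for every open alert, and notifies whenever the target changes, gives the automatic propagation described above.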

Log Visualization and Analytic Dashboards

FortiAnalyzer’s dashboarding capabilities are essential for transforming extensive log data into comprehensible insights. Customizable widgets, heatmaps, temporal trend graphs, and tabular summaries enable analysts to rapidly discern operational anomalies, security incidents, and compliance deviations. Strategic visualization emphasizes clarity, prioritization, and minimization of cognitive load, allowing stakeholders to focus on emergent threats rather than procedural minutiae.

Dashboard design should also consider audience-specific requirements. Executives may require high-level summary metrics, whereas security analysts necessitate granular visibility into event sequences and device-specific trends. Integrating drill-down capabilities allows users to transition seamlessly from macro-level overviews to micro-level forensic analysis, enhancing operational agility and incident response efficiency.

Workflow Optimization and Automation

FortiAnalyzer’s scripting and automation capabilities enable administrators to transcend manual task execution. Scheduled reports, bulk device configuration, and automated remediation scripts reduce human error while conserving operational resources. Automation workflows can encompass log ingestion, correlation, alerting, and incident response, forming a cohesive, repeatable operational ecosystem.

Documenting these automated workflows is essential for reproducibility, auditability, and knowledge transfer within the security team. Administrators should also implement monitoring mechanisms to validate automation efficacy and detect anomalies in execution, ensuring that automated processes do not inadvertently introduce vulnerabilities or operational gaps.

Compliance Auditing and Forensic Capabilities

FortiAnalyzer is well suited to compliance auditing and forensic investigations. Immutable log storage, customizable retention schedules, and granular search capabilities help satisfy diverse regulatory requirements. Administrators can trace events by timestamp, reconstructing complex attack sequences or operational anomalies with forensic rigor.

Effective auditing necessitates meticulous indexing, tagging, and metadata enrichment to facilitate rapid retrieval. Integration with external compliance tools or SIEM platforms can further enhance visibility and reporting capabilities. By maintaining a structured, auditable log ecosystem, organizations can substantiate regulatory adherence while expediting incident response and forensic analysis.

High-Availability Configuration and Redundancy

High-availability (HA) paradigms in FortiAnalyzer ensure uninterrupted log availability and operational resilience. Configurations may employ active-active or active-passive clustering, with synchronous replication and heartbeat monitoring to safeguard data integrity. Failover orchestration ensures minimal disruption during hardware failures, network partitions, or maintenance activities.

HA deployment requires careful planning, including load balancing, network path redundancy, and capacity provisioning. Administrators should simulate failover scenarios to validate resilience, verifying that log ingestion, correlation, and alerting remain operational under adverse conditions. Effective HA design minimizes operational risk and instills confidence in the reliability of centralized log management.

Integrating Threat Intelligence and External Feeds

Incorporating external threat intelligence augments FortiAnalyzer’s predictive capabilities. Curated feeds provide real-time indicators of compromise, malware signatures, phishing heuristics, and vulnerability advisories. By integrating these feeds, administrators can correlate external threat indicators with internal events, enhancing situational awareness and preemptive mitigation.

Feed integration involves mapping disparate data structures, normalizing indicators, and establishing automated update mechanisms to maintain accuracy. Analysts should evaluate feed relevance and reliability continuously, ensuring that the system responds to credible threats without succumbing to noise from low-quality intelligence sources.
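
The normalization step mentioned above can be sketched as follows. This is a minimal illustration, assuming two feeds that use different field names (`type`/`value` and `kind`/`indicator`); those field names are hypothetical and do not correspond to any particular feed format or FortiAnalyzer API.

```python
import ipaddress
from typing import Dict, Iterable, Set, Tuple


def normalize_indicator(raw: Dict[str, str]) -> Tuple[str, str]:
    """Map one feed record onto a canonical (type, value) pair.

    The accepted field names are assumptions made for this example.
    """
    kind = (raw.get("type") or raw.get("kind") or "").strip().lower()
    value = (raw.get("value") or raw.get("indicator") or "").strip()
    if kind in ("ip", "ipv4", "ipv6"):
        # ip_address() raises ValueError on malformed input.
        return ("ip", str(ipaddress.ip_address(value)))
    if kind in ("domain", "fqdn"):
        return ("domain", value.lower().rstrip("."))
    if kind in ("md5", "sha1", "sha256", "hash"):
        return ("hash", value.lower())
    raise ValueError(f"unsupported indicator type: {kind!r}")


def merge_feeds(*feeds: Iterable[Dict[str, str]]) -> Set[Tuple[str, str]]:
    """Normalize and de-duplicate indicators across several feeds."""
    merged: Set[Tuple[str, str]] = set()
    for feed in feeds:
        for record in feed:
            try:
                merged.add(normalize_indicator(record))
            except ValueError:
                continue  # skip malformed or unsupported records
    return merged
```

Storing indicators as canonical pairs means the same address or domain reported by two feeds is kept once, which reduces noise before correlation.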

Scalability Considerations and Performance Tuning

Scaling FortiAnalyzer to accommodate organizational growth necessitates deliberate resource planning. Increased device density, log volume, and analytic complexity require optimization of database indices, compression algorithms, and processing load distribution. Historical log analysis informs predictive capacity planning, enabling administrators to anticipate future demand and provision resources accordingly.

Performance tuning also involves monitoring system health metrics, including CPU utilization, memory consumption, storage latency, and network throughput. Proactive adjustment of buffer sizes, indexing strategies, and log retention policies ensures consistent operational performance, even under peak log ingestion conditions or during large-scale attack simulations.

Security Hardening and Access Governance

FortiAnalyzer, as a repository of sensitive operational data, must itself be fortified against intrusion and misuse. Multifactor authentication, granular RBAC, IP-based access controls, and encrypted log transmission constitute fundamental security measures. Administrators should implement periodic credential rotation, audit trails, and activity monitoring to mitigate insider threats and ensure accountability.

Hardening extends to system-level parameters, including disabling unused services, applying security patches promptly, and enforcing configuration baselines. Continuous security assessment, including vulnerability scanning and penetration testing, reinforces FortiAnalyzer’s resilience against sophisticated adversarial attempts, preserving both log integrity and organizational trust.

Advanced Reporting and Custom Analytics

Beyond dashboards, FortiAnalyzer enables granular, customizable reporting that can be tailored for compliance, operational insight, or executive briefings. Administrators may leverage SQL-like queries, dynamic report parameters, and automated scheduling to disseminate actionable intelligence across the organization.
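
To make the idea of a SQL-style report query concrete, the sketch below runs an aggregate over a small in-memory SQLite table. The table name `traffic_log` and its columns are assumptions chosen for the example, and the code does not reproduce FortiAnalyzer's own dataset syntax; it only shows the kind of grouping and ranking such a query performs.

```python
import sqlite3

# In-memory table standing in for a traffic log; schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE traffic_log (srcip TEXT, action TEXT, sentbyte INTEGER)"
)
conn.executemany(
    "INSERT INTO traffic_log VALUES (?, ?, ?)",
    [
        ("10.0.0.5", "accept", 1200),
        ("10.0.0.5", "accept", 800),
        ("10.0.0.9", "deny", 0),
        ("10.0.0.7", "accept", 5000),
    ],
)


def top_talkers(connection, action, limit):
    """Rank source addresses by total bytes sent for a given action."""
    query = """
        SELECT srcip, SUM(sentbyte) AS total
        FROM traffic_log
        WHERE action = ?
        GROUP BY srcip
        ORDER BY total DESC
        LIMIT ?
    """
    return connection.execute(query, (action, limit)).fetchall()
```

The `action` and `limit` arguments play the role of dynamic report parameters: the same query serves different audiences by changing only the bound values.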

Custom analytics can incorporate historical trend analysis, anomaly detection, and predictive modeling. By synthesizing long-term log patterns with operational data, analysts can identify latent vulnerabilities, optimize network configurations, and preemptively mitigate risk. Advanced reporting fosters data-driven decision-making and strengthens the organization’s security posture over time.

Incident Response Integration and Automation

FortiAnalyzer’s integration with incident response frameworks enhances the timeliness and effectiveness of threat mitigation. Event triggers can automatically initiate predefined response workflows, including firewall policy adjustments, quarantine actions, or alert escalation.

Automated playbooks reduce response latency, ensure consistency, and minimize human error. Administrators should rigorously test these integrations to confirm that automated interventions align with organizational policy and operational expectations. By embedding FortiAnalyzer into the incident response lifecycle, organizations can transform raw log data into rapid, actionable security operations.

Multi-Tenancy and Organizational Segmentation

In large enterprises or managed service provider environments, multi-tenancy and organizational segmentation are crucial. FortiAnalyzer allows the segregation of logs, policies, and dashboards across distinct organizational units, preserving privacy and operational autonomy.

Segmentation involves defining role hierarchies, access privileges, and policy scopes. Administrators must ensure that inter-tenant visibility is strictly controlled while enabling centralized oversight where necessary. Properly implemented, multi-tenancy enhances operational scalability and governance without compromising security or data integrity.

Behavioral Analytics and Anomaly Detection

FortiAnalyzer increasingly leverages behavioral analytics to identify deviations from normative operational patterns. Machine learning models can profile user activity, network traffic, and device behavior, detecting subtle indicators of compromise that traditional rule-based correlation might overlook.

Anomaly detection facilitates early identification of insider threats, lateral movement, and zero-day exploits. Administrators must calibrate these models using historical baselines and continuously refine thresholds to balance sensitivity against false positives. Behavioral insights complement traditional log analysis, enriching the organization’s defensive repertoire.
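
The trade-off between sensitivity and false positives can be seen in a very simple baseline check. The sketch below uses a z-score against historical values, which is a stand-in technique chosen for illustration and not a description of the models FortiAnalyzer uses; the default threshold of 3.0 is likewise an assumption.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(baseline: Sequence[float], observed: float,
                 threshold: float = 3.0) -> bool:
    """Flag a value whose z-score against the baseline exceeds the threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

Lowering `threshold` catches smaller deviations at the cost of more false positives, which is the calibration decision the paragraph above describes.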

Advanced Log Parsing Strategies

Beyond conventional tokenization and regular expressions, advanced log parsing incorporates hierarchical pattern recognition and contextual inference. Logs generated by distributed systems often contain multi-layered structures, where events from disparate subsystems intertwine temporally and semantically. By employing recursive parsing frameworks, one can isolate nested log segments, identify causality chains, and reconstruct system narratives with remarkable fidelity. Pattern matching augmented with fuzzy logic allows analysts to detect variations of similar events, even when formatting inconsistencies or sporadic anomalies are present. This approach is particularly crucial in heterogeneous environments where logs from diverse platforms, middleware, and microservices converge.
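
One way to approximate the fuzzy matching described above is to mask the variable parts of a message and then compare the remaining templates by similarity. The sketch below uses Python's standard `difflib.SequenceMatcher`; the masking rules and the 0.85 similarity cutoff are assumptions made for the example.

```python
import re
from difflib import SequenceMatcher


def mask_variables(message: str) -> str:
    """Replace IPv4 addresses and numbers so only the template remains."""
    message = re.sub(r"\b\d{1,3}(?:\.\d{1,3}){3}\b", "<ip>", message)
    return re.sub(r"\d+", "<n>", message).lower()


def same_event(a: str, b: str, min_ratio: float = 0.85) -> bool:
    """Treat two messages as the same event type if their templates are similar."""
    ratio = SequenceMatcher(None, mask_variables(a), mask_variables(b)).ratio()
    return ratio >= min_ratio
```

With this approach, two login-failure messages that differ only in source address or capitalization collapse to the same template, while unrelated messages fall below the cutoff.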

Temporal Pattern Recognition in Logs

Temporal pattern recognition focuses on discerning sequences and rhythms embedded in log entries. Beyond static trend analysis, it involves detecting repetitive cycles, phase shifts, and periodic anomalies that may indicate underlying systemic stressors. Techniques such as autocorrelation, spectral analysis, and wavelet transformations reveal hidden periodicities that conventional aggregation methods might overlook. These methods can uncover subtle precursors to failures, such as intermittent latency spikes, fluctuating resource consumption, or cyclical error bursts. The sophistication of temporal analysis lies in its capacity to reveal micro-patterns, offering a predictive lens into system behavior across short and extended time horizons.
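
Autocorrelation, the first technique named above, can be sketched in a few lines. The input is assumed to be a series of per-interval event counts (for example, errors per minute); the function names are illustrative.

```python
from typing import Sequence


def autocorrelation(series: Sequence[float], lag: int) -> float:
    """Sample autocorrelation of the series at the given lag."""
    n = len(series)
    mu = sum(series) / n
    variance = sum((x - mu) ** 2 for x in series)
    if variance == 0 or not 0 < lag < n:
        return 0.0
    covariance = sum(
        (series[i] - mu) * (series[i + lag] - mu) for i in range(n - lag)
    )
    return covariance / variance


def dominant_period(series: Sequence[float], max_lag: int) -> int:
    """Return the lag (1..max_lag) with the strongest autocorrelation."""
    return max(range(1, max_lag + 1),
               key=lambda lag: autocorrelation(series, lag))
```

A series with an error burst every fourth interval produces its highest autocorrelation at lag 4, which is how a cyclical pattern hidden in aggregate counts can be surfaced.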

Multivariate Anomaly Detection

Anomalies seldom occur in isolation; they often manifest as intricate interactions among multiple variables. Multivariate anomaly detection models these interdependencies, using statistical correlation, covariance analysis, or multidimensional clustering to detect deviations that are invisible when variables are examined independently. For instance, an unusual combination of CPU utilization, disk I/O latency, and network throughput might signal a critical bottleneck that single-metric monitoring would miss. Advanced approaches incorporate ensemble learning, neural networks, or Bayesian inference to improve the robustness of detection, minimizing both false positives and overlooked anomalies.
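
The covariance-based approach mentioned above can be illustrated with the Mahalanobis distance. To keep the sketch short and dependency-free it handles only two metrics, with the 2x2 covariance matrix inverted by hand; a real deployment would use more variables and a linear algebra library. The metric pairing is an assumption for the example.

```python
from math import sqrt
from typing import Sequence, Tuple

Point = Tuple[float, float]


def mahalanobis_2d(samples: Sequence[Point], point: Point) -> float:
    """Mahalanobis distance of a point from a two-metric historical sample."""
    n = len(samples)
    mx = sum(x for x, _ in samples) / n
    my = sum(y for _, y in samples) / n
    # Sample variances and covariance.
    sxx = sum((x - mx) ** 2 for x, _ in samples) / (n - 1)
    syy = sum((y - my) ** 2 for _, y in samples) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in samples) / (n - 1)
    det = sxx * syy - sxy ** 2
    if det <= 0:
        raise ValueError("covariance matrix is singular")
    dx, dy = point[0] - mx, point[1] - my
    # Quadratic form d^T S^-1 d with the 2x2 inverse written out.
    d2 = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
    return sqrt(d2)
```

If two metrics normally rise and fall together, a reading where one is high and the other low scores a large distance even though each value on its own lies within its usual range.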

Semantic Log Analysis

Semantic log analysis transcends syntactic parsing by incorporating meaning and context into evaluation. Natural language processing techniques can decipher unstructured log messages, categorize them, and extract actionable intelligence. Entity recognition can isolate components, processes, or error codes, while sentiment or severity scoring highlights critical events. By mapping log semantics onto operational ontologies, analysts can contextualize events within business processes, allowing strategic decision-making to be informed by operational realities. Semantic enrichment transforms logs from a passive repository into an interpretive knowledge base, enabling nuanced insights and more informed interventions.

Automated Report Customization

Reports can be elevated from static summaries to dynamic instruments of insight through automation. Customization involves not only selecting relevant metrics but also tailoring visualization modalities, temporal granularity, and comparative baselines. For example, a report focusing on system stability might integrate rolling averages, anomaly heatmaps, and trend projections, while a security-oriented report could highlight failed authentications, privilege escalations, and intrusion attempts. Automating the customization process ensures consistency and timeliness while allowing for contextual adjustments, such as changing focus depending on current operational priorities or incident severity.

Interactive Dashboards for Log Intelligence

Interactive dashboards provide a hands-on interface for exploring log data. Unlike static reports, dashboards allow users to drill into specific metrics, filter event types, and dynamically adjust temporal windows. Widgets such as sliders, dropdowns, and heatmaps enable multidimensional analysis, revealing hidden correlations and temporal trends. Coupled with real-time updates, interactive dashboards allow teams to respond to emergent anomalies quickly. Effective dashboards balance visual appeal with cognitive clarity, ensuring that operational personnel can assimilate complex log patterns rapidly without being overwhelmed by data density.

Scaling Analysis for Expansive Datasets

Massive datasets necessitate more than basic optimizations; they demand scalable architectures. Partitioning logs by time, source, or event type reduces query latency, while distributed, cluster-based computation frameworks enable parallel analysis. Data compression and incremental storage techniques ensure that archives remain accessible without incurring excessive overhead. Furthermore, metadata tagging accelerates searches, enabling targeted retrieval of relevant logs. Scaling also involves algorithmic refinements: approximate querying, streaming aggregation, and probabilistic data structures allow analyses to remain performant even as dataset volume grows rapidly.
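
A Bloom filter is one example of the probabilistic data structures mentioned above. The sketch below is a minimal version built on SHA-256; the bit-array size and number of hash functions are arbitrary assumptions, and a production filter would size them from the expected item count and acceptable false-positive rate.

```python
import hashlib


class BloomFilter:
    """Set-membership test with no false negatives and rare false positives."""

    def __init__(self, size_bits: int = 1024, num_hashes: int = 3):
        self.size = size_bits
        self.num_hashes = num_hashes
        self.bits = 0  # a Python int used as a bit array

    def _positions(self, item: str):
        # Derive several bit positions by salting the hash input.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.size

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits |= 1 << pos

    def __contains__(self, item: str) -> bool:
        return all((self.bits >> pos) & 1 for pos in self._positions(item))
```

Such a filter can answer "has this source address been seen before?" in constant memory, at the cost of occasionally answering yes for an address that was never added.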

Real-Time Event Stream Processing

Real-time processing shifts the emphasis from retrospective analytics to immediate intelligence. Event streams can be ingested, normalized, and processed continuously using low-latency architectures. Stream processing frameworks apply filters, enrich events with contextual metadata, and compute metrics on the fly, delivering actionable insights with minimal delay. Integration with automated alerts allows operational teams to respond quickly to deviations, mitigating risks before they escalate. Real-time analytics also supports predictive intervention, as current patterns feed into forecasting models and enable proactive system adjustments.
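
Computing a metric continuously over a stream often comes down to a sliding window. The sketch below counts events in the most recent window and reports when the count exceeds a threshold; the class name, window length, and threshold are illustrative assumptions, and events are assumed to arrive in timestamp order.

```python
from collections import deque


class SlidingWindowCounter:
    """Count events within a trailing time window and flag bursts."""

    def __init__(self, window_seconds: float, threshold: int):
        self.window = window_seconds
        self.threshold = threshold
        self.timestamps = deque()

    def observe(self, ts: float) -> bool:
        """Record an event at time ts; return True if the window is over threshold."""
        self.timestamps.append(ts)
        # Evict events that have fallen out of the trailing window.
        while self.timestamps and self.timestamps[0] <= ts - self.window:
            self.timestamps.popleft()
        return len(self.timestamps) > self.threshold
```

For example, a counter created with a 60-second window and a threshold of 3 returns `True` on the fourth failed login inside one minute and resets naturally once the older events age out.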

Advanced Visualization Techniques

Visualization is both an analytical and cognitive instrument. Beyond standard charts, sophisticated methods such as Sankey diagrams, chord diagrams, and multivariate scatter plots elucidate complex relationships and interactions among system components. Temporal heatmaps reveal event densities, while interactive graphs allow dynamic exploration of dependencies and sequences. Visualization can also employ adaptive scaling, where data resolution adjusts according to focus or zoom level, preserving clarity even when navigating terabytes of log data. By transforming abstract metrics into interpretable visuals, these techniques enhance situational awareness and facilitate strategic decision-making.

Optimizing Query Performance

Efficient querying is crucial for timely log analysis. Techniques such as index optimization, query caching, and partition pruning dramatically reduce retrieval latency. Employing columnar storage formats or compressed data structures minimizes I/O overhead, while parallelized query execution leverages multi-core and distributed architectures to accelerate computation. Query optimization also involves pre-aggregating common metrics, utilizing approximate algorithms for exploratory analyses, and intelligently caching intermediate results. By refining the interplay between data structures and query execution strategies, organizations can interrogate expansive logs rapidly without compromising analytic depth.
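
Partition pruning, one of the techniques listed above, can be sketched with a toy layout in which logs are stored as one partition per calendar day. The layout and function name are assumptions for illustration; the point is that partitions outside the requested date range are skipped without reading their rows.

```python
from datetime import date
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]


def query_range(partitions: Dict[date, List[Row]], start: date, end: date,
                predicate: Callable[[Row], bool]) -> Tuple[List[Row], int]:
    """Return matching rows and the number of partitions actually scanned."""
    scanned = 0
    matches: List[Row] = []
    for day, rows in partitions.items():
        if day < start or day > end:
            continue  # pruned: rows in this partition are never touched
        scanned += 1
        matches.extend(row for row in rows if predicate(row))
    return matches, scanned
```

Returning the scanned-partition count alongside the results makes the benefit visible: a one-day query over a year of daily partitions reads a single partition instead of hundreds.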

Integrating Predictive Models