Product Screenshots

Frequently Asked Questions

How does your testing engine works?

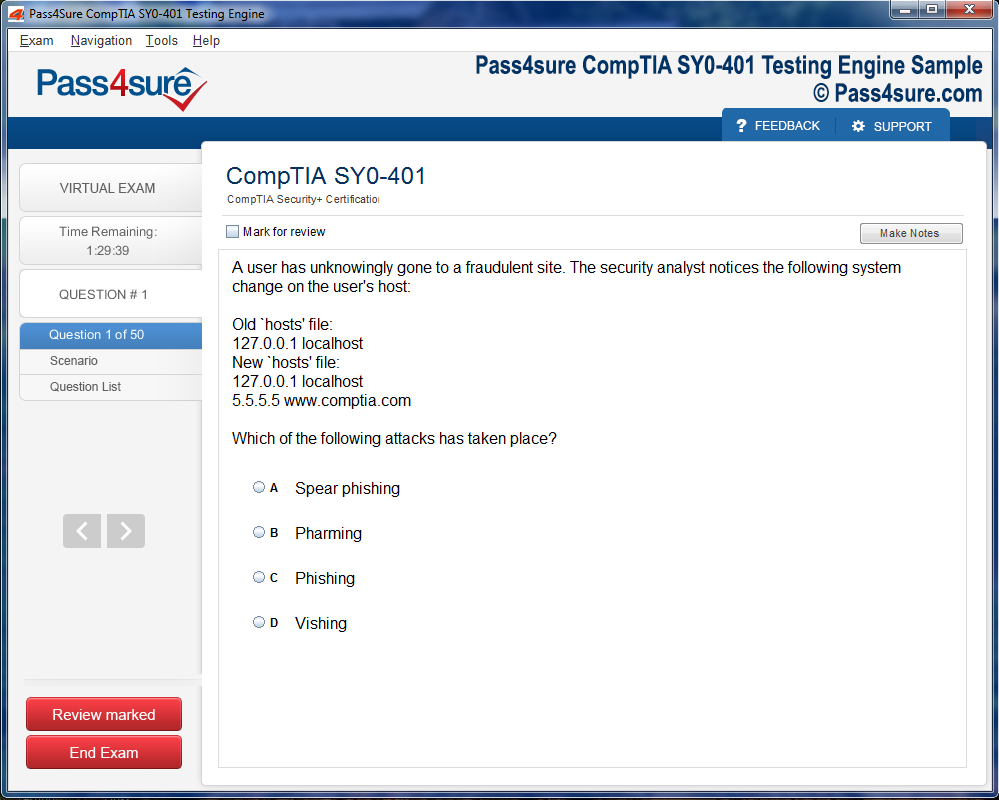

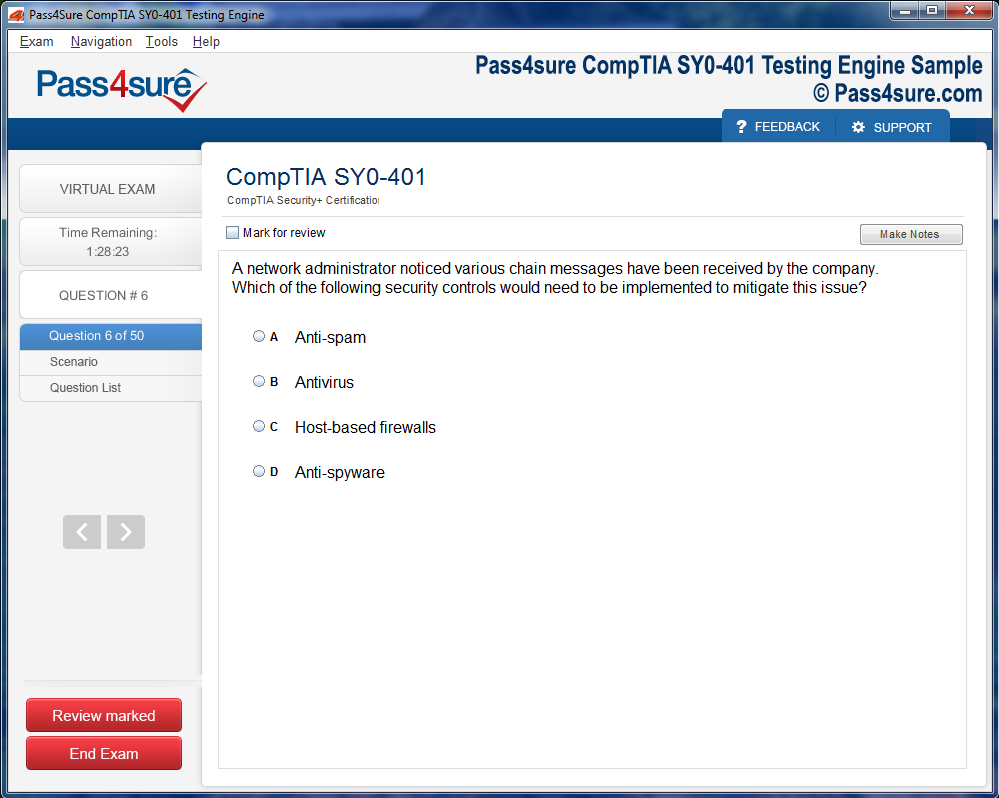

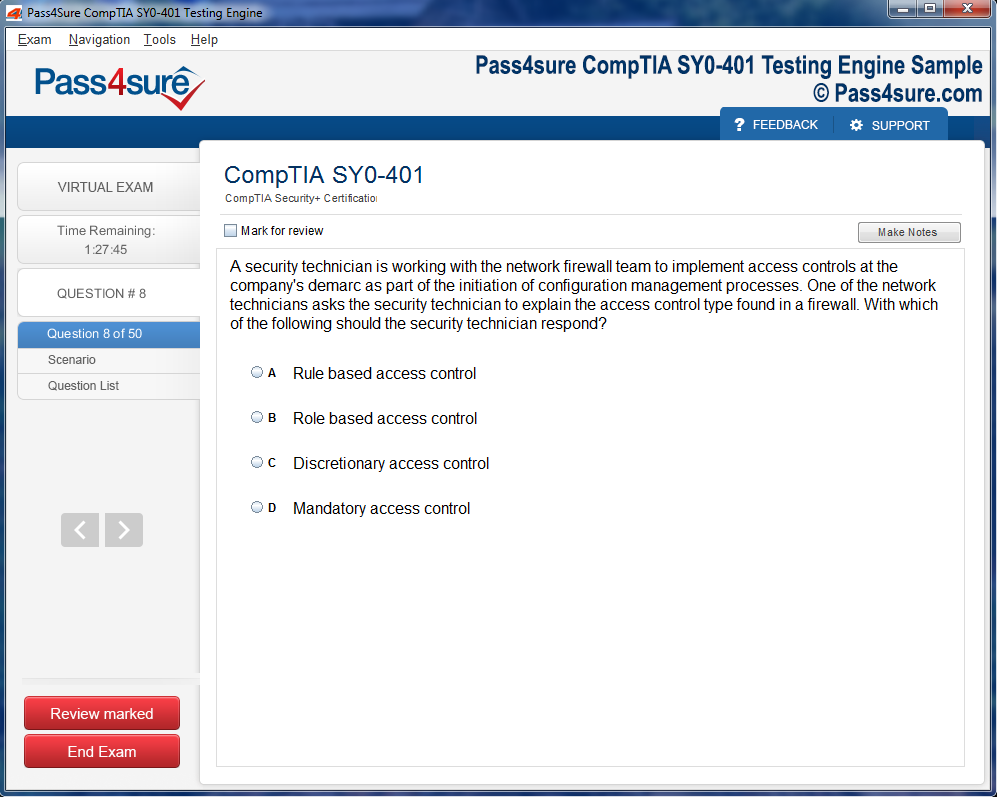

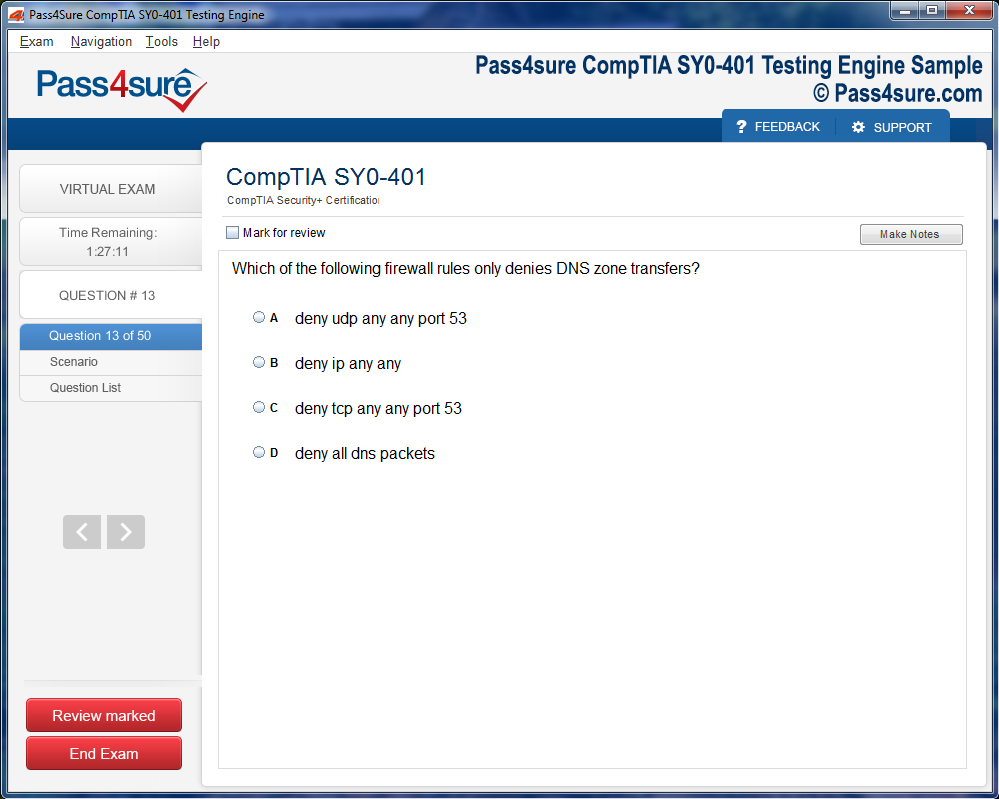

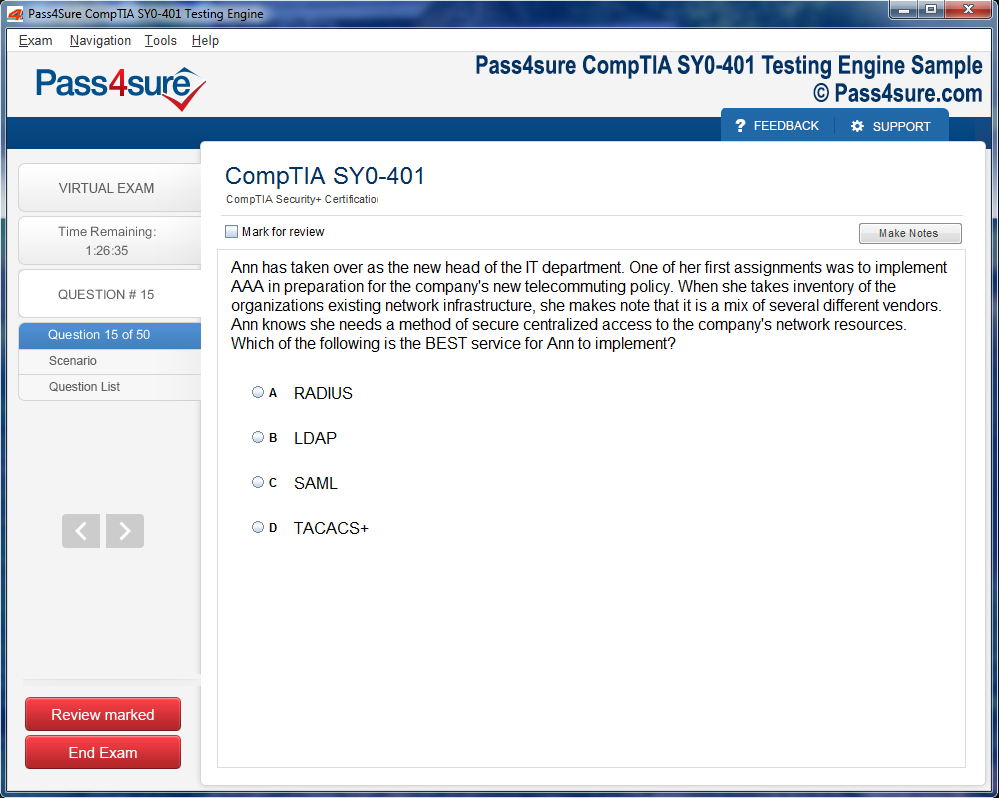

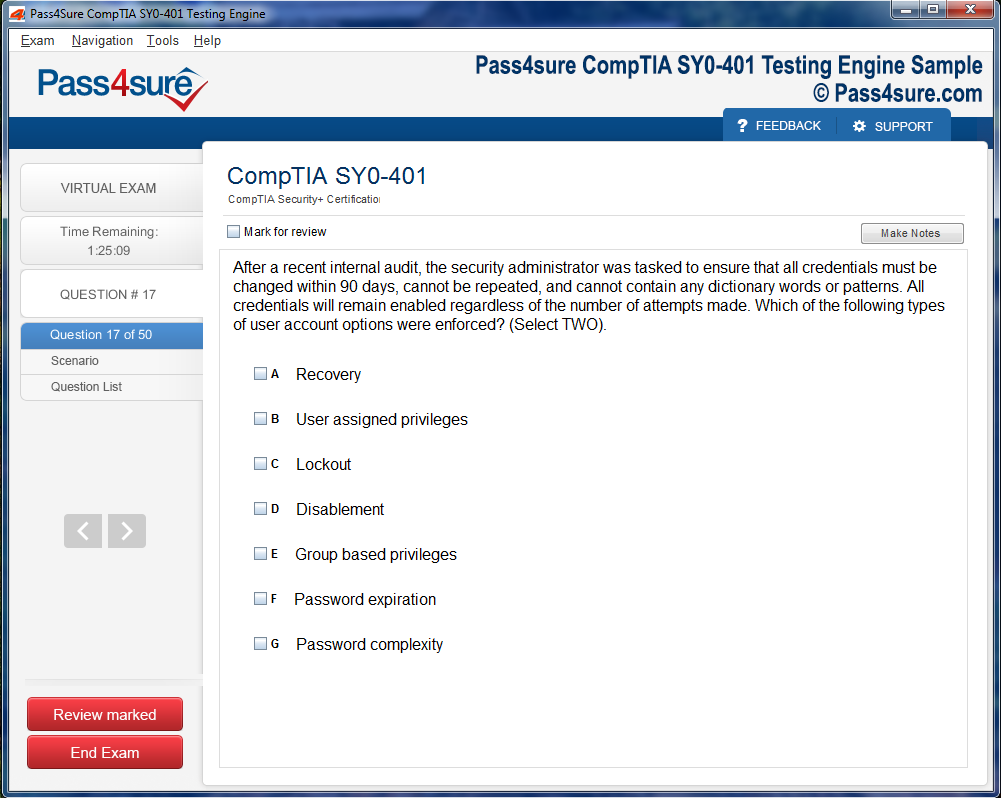

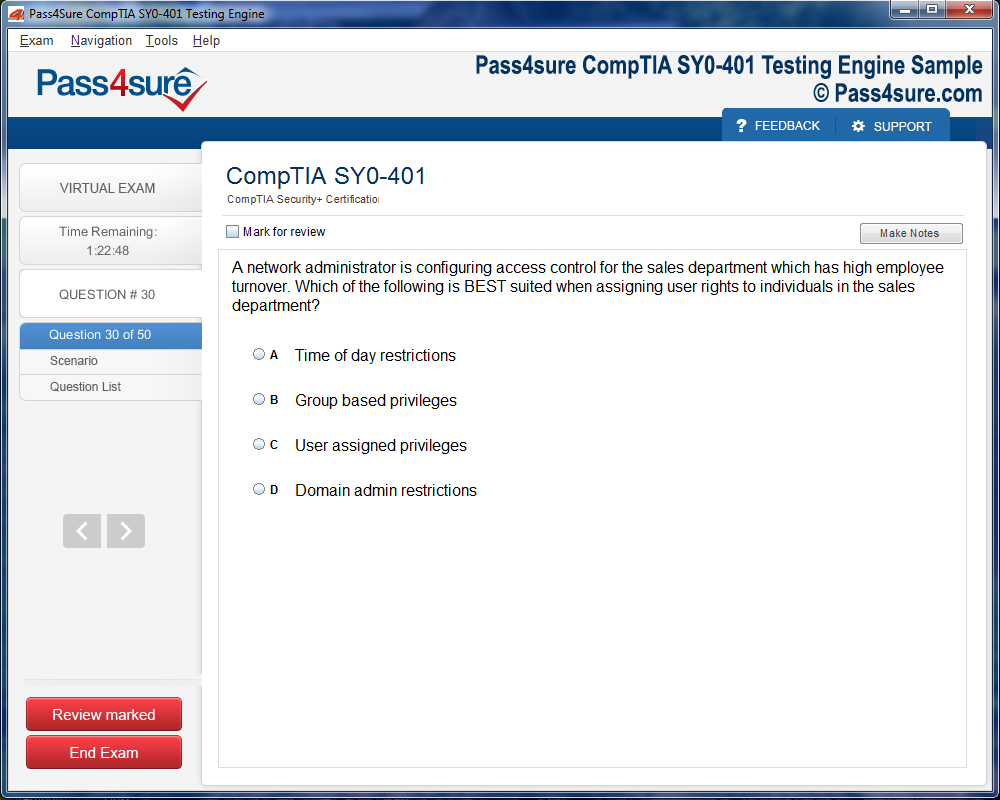

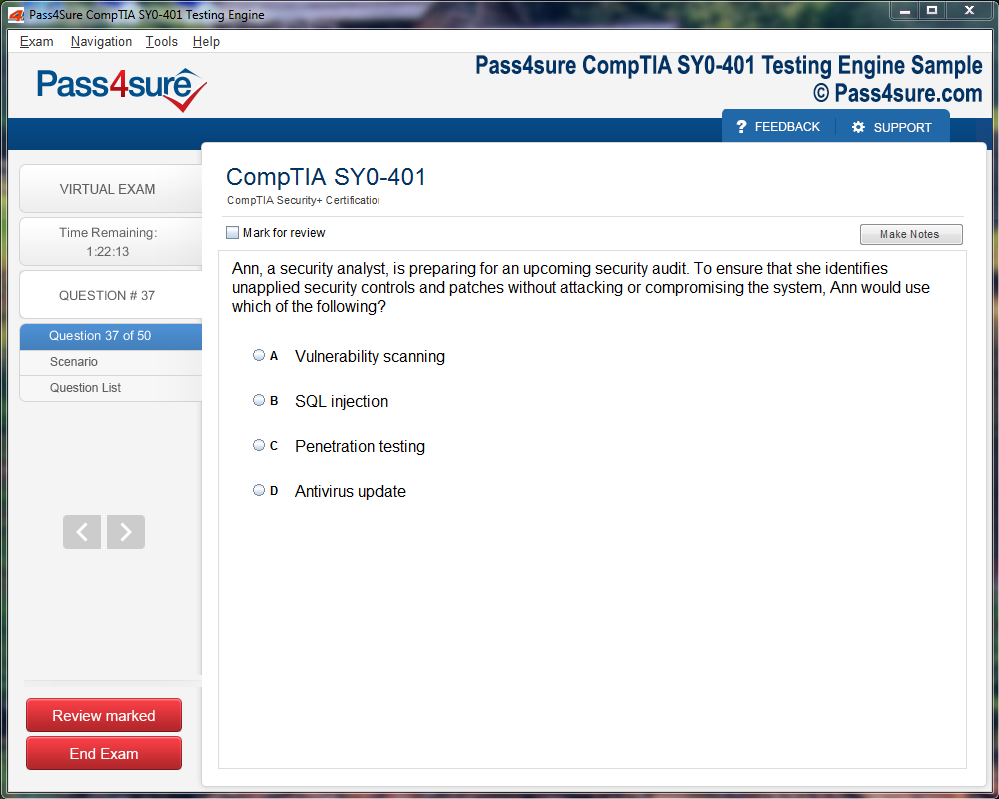

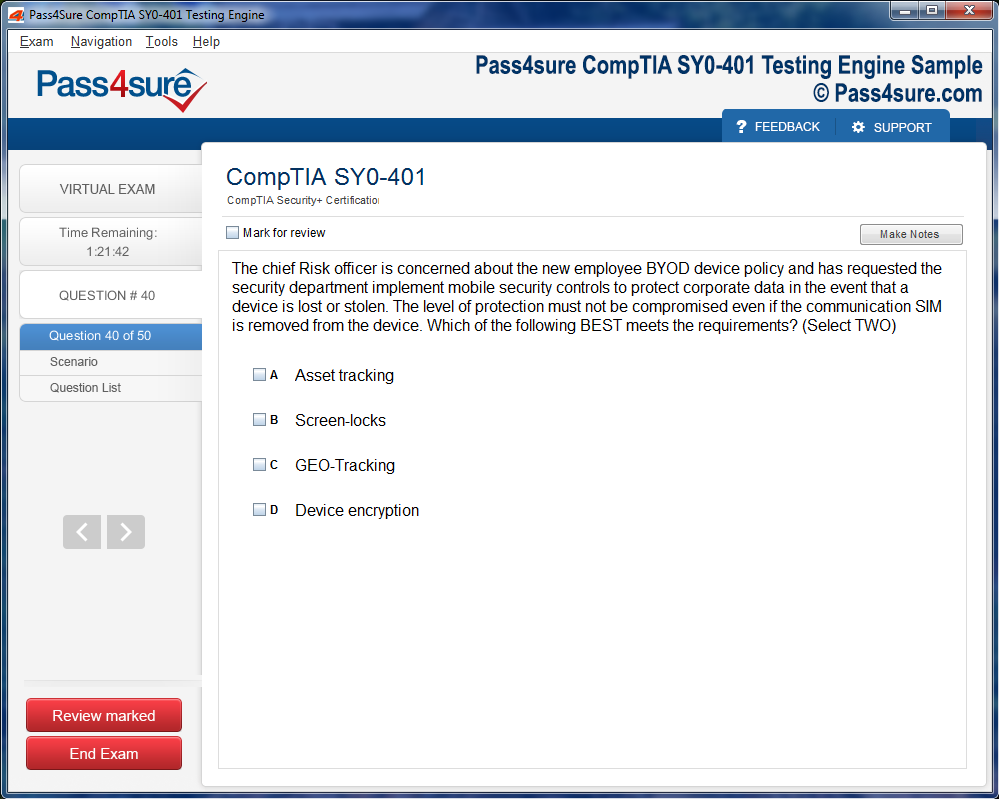

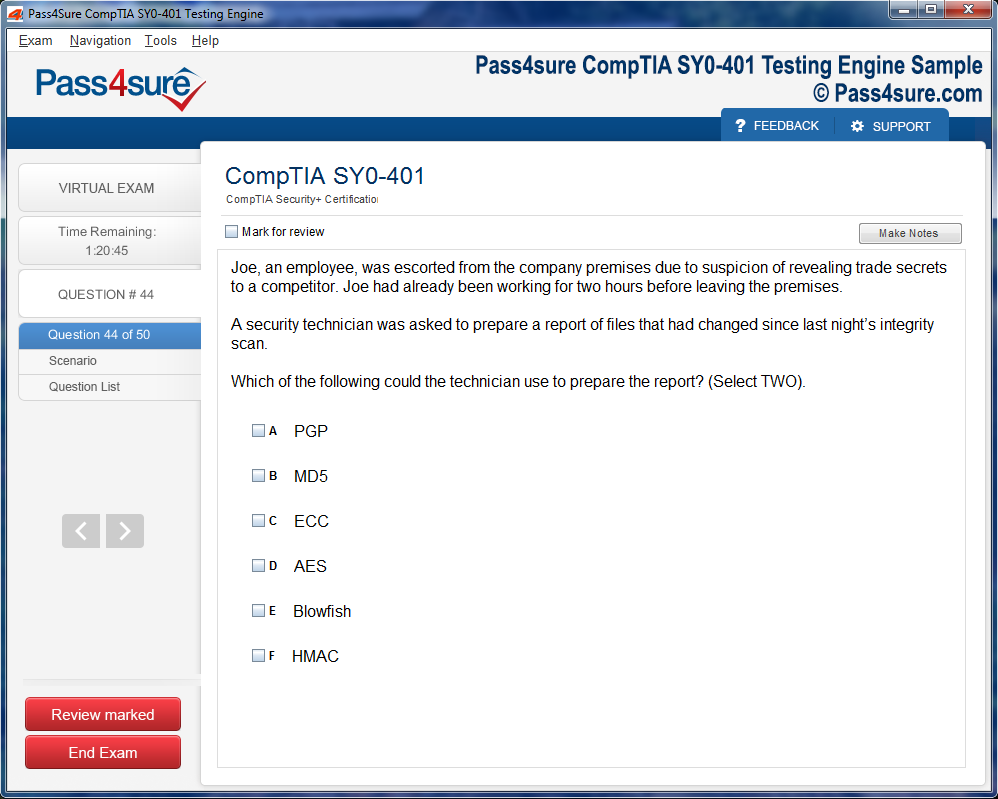

Once download and installed on your PC, you can practise test questions, review your questions & answers using two different options 'practice exam' and 'virtual exam'. Virtual Exam - test yourself with exam questions with a time limit, as if you are taking exams in the Prometric or VUE testing centre. Practice exam - review exam questions one by one, see correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products are valid for 90 days from the date of purchase. During those 90 days, any updates to the products, including but not limited to new questions and changes made by our editing team, are automatically downloaded onto your computer so that you always have the latest exam prep materials.

Can I renew my product when it has expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes that the different vendors make to their actual question pools. As soon as we learn of a change to an exam question pool, we do our best to update the products as quickly as possible.

How many computers can I download Pass4sure software on?

You can download the Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB of available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine runs on Windows. Android and iOS versions are currently under development.

Your Guide to Mastering 4A0-112 Nokia Certification

Exploring advanced network topologies unveils a realm where intricacy meets strategic foresight. Nokia networks employ a multitude of topological arrangements, each designed to optimize performance and reliability. Mesh architectures, with their labyrinthine interconnections, ensure resilience by offering multiple pathways for data traversal. Star and hybrid topologies, conversely, balance simplicity with scalability, mitigating points of failure while preserving operational efficiency. Understanding the selection criteria for these architectures requires an appreciation of traffic flow patterns, latency considerations, and redundancy imperatives that govern contemporary network environments.
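The trade-off between mesh resilience and star simplicity can be made concrete by counting links. The sketch below is illustrative only: the function names are made up for this example, and it assumes a full mesh (every node linked to every other node) and a star in which one of the nodes acts as the hub.

```python
def full_mesh_links(nodes: int) -> int:
    """Links needed so every node connects directly to every other node."""
    return nodes * (nodes - 1) // 2


def star_links(nodes: int) -> int:
    """Links needed when every non-hub node connects only to the hub."""
    return max(nodes - 1, 0)


for n in (4, 10, 50):
    print(f"{n} nodes: full mesh = {full_mesh_links(n)} links, "
          f"star = {star_links(n)} links")
```

The mesh link count grows quadratically with the number of nodes, which is why full meshes tend to be reserved for small cores, while the star grows linearly but depends on a single hub.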

The subtle nuances of hierarchical designs also merit scrutiny. Core, distribution, and access layers orchestrate the seamless passage of packets, with each layer possessing distinct responsibilities. Core layers emphasize high-speed transport, distribution layers manage aggregation and policy enforcement, and access layers serve as the interface with end devices. This hierarchical stratification not only enhances performance but also simplifies troubleshooting by localizing potential fault domains. Candidates preparing for the 4A0-112 certification must internalize these architectural principles, recognizing the symbiosis between topology, protocol selection, and network resilience.

Mastering Routing and Switching Dynamics

Routing and switching constitute the lifeblood of Nokia networking infrastructure, dictating how data navigates complex digital landscapes. Mastery of static and dynamic routing mechanisms illuminates the decision-making processes that govern path selection. OSPF and IS-IS are link-state interior gateway protocols that handle routing within a single routing domain, while BGP is a path-vector protocol that exchanges routes between autonomous systems; each has its own convergence characteristics and scalability profile. Understanding metric computation, route prioritization, and loop avoidance strategies empowers candidates to engineer networks that are both efficient and robust.
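Link-state protocols such as OSPF and IS-IS choose paths by running a shortest-path-first calculation over link metrics. The following is a minimal sketch of that idea using Dijkstra's algorithm; the router names and metric values in `TOPOLOGY` are hypothetical, and a real implementation also handles areas or levels, equal-cost paths, and much more.

```python
import heapq


def shortest_paths(graph, source):
    """Dijkstra's algorithm: lowest total metric from source to each node."""
    dist = {source: 0}
    prev = {}
    heap = [(0, source)]
    while heap:
        cost, node = heapq.heappop(heap)
        if cost > dist.get(node, float("inf")):
            continue  # stale heap entry, a cheaper path was already found
        for neighbor, link_cost in graph.get(node, {}).items():
            new_cost = cost + link_cost
            if new_cost < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_cost
                prev[neighbor] = node
                heapq.heappush(heap, (new_cost, neighbor))
    return dist, prev


def path_to(prev, source, target):
    """Rebuild the hop list by walking predecessors (target must be reachable)."""
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return path[::-1]


# Hypothetical four-router topology; values are link metrics.
TOPOLOGY = {
    "R1": {"R2": 10, "R3": 100},
    "R2": {"R1": 10, "R3": 10, "R4": 100},
    "R3": {"R1": 100, "R2": 10, "R4": 10},
    "R4": {"R2": 100, "R3": 10},
}

dist, prev = shortest_paths(TOPOLOGY, "R1")
print(dist["R4"], path_to(prev, "R1", "R4"))
```

In this topology the three-hop path through R2 and R3 wins because its summed metric (30) is lower than either two-hop alternative (110).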

Switching paradigms, including VLAN segmentation, trunking, and spanning tree protocols, provide granular control over data flow within local segments. Layer 2 switches forward frames based on MAC addresses, while Layer 3 switches add IP routing in the same device, combining fast forwarding with routing intelligence. Comprehending the interplay between switching fabrics and routing engines is crucial for optimizing throughput while minimizing latency and congestion. This domain demands meticulous attention to detail, as subtle misconfigurations can propagate inefficiencies across the network fabric.

Integrating Security and Compliance Mechanisms

Network security in Nokia architectures extends beyond conventional firewall deployment. Candidates must cultivate a deep understanding of access control models, encryption methodologies, and intrusion detection mechanisms. Role-based access ensures that users interact only with resources pertinent to their responsibilities, while encryption fortifies data integrity and confidentiality across transport layers. Security auditing and logging mechanisms provide visibility into anomalous behaviors, enabling proactive mitigation of potential threats.

Compliance with regulatory standards further complicates network management, necessitating awareness of data retention policies, privacy mandates, and sector-specific guidelines. Integrating security seamlessly with operational protocols ensures uninterrupted service delivery while maintaining regulatory alignment. This dual focus on defense and compliance transforms candidates into holistic network stewards capable of safeguarding complex telecommunications ecosystems.

Embracing Automation and Orchestration

The advent of software-defined networking and network automation has revolutionized Nokia infrastructures, embedding intelligence into operational workflows. Automation frameworks enable preemptive configuration, reducing manual intervention and human error. Orchestration platforms facilitate dynamic provisioning, scaling network resources according to demand patterns with precision and agility. Candidates who internalize these paradigms gain a strategic advantage, understanding how automation enhances fault tolerance, accelerates deployment cycles, and optimizes resource utilization.

Programmable interfaces and APIs offer granular control over network elements, bridging the gap between abstract design and practical deployment. By leveraging automation tools, administrators can enforce consistency across distributed environments, minimizing configuration drift and enhancing predictability. The convergence of programmability with operational oversight defines a new era of network mastery, aligning seamlessly with the competencies assessed in the 4A0-112 certification.
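Configuration drift can be detected by comparing the intended state with what a device actually reports. The sketch below is a simplified illustration that treats configuration as a flat dictionary; the keys and values are hypothetical, and real tooling would compare structured data retrieved through a management API.

```python
def config_drift(intended: dict, running: dict) -> dict:
    """Return {key: (intended_value, running_value)} for every mismatch.

    A key missing on one side is reported with None for that side.
    """
    drift = {}
    for key in intended.keys() | running.keys():
        want = intended.get(key)
        have = running.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical intended and running settings for one interface.
INTENDED = {"mtu": 9000, "admin-state": "enable", "description": "core uplink"}
RUNNING = {"mtu": 1500, "admin-state": "enable", "ntp-server": "192.0.2.10"}

for key, (want, have) in sorted(config_drift(INTENDED, RUNNING).items()):
    print(f"{key}: intended={want!r} running={have!r}")
```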

Navigating Protocol Stacks and Layered Architectures

A thorough grasp of the OSI and TCP/IP models is indispensable for Nokia network professionals. Each layer, from the physical medium to application services, embodies specific responsibilities that collectively govern data flow. Understanding encapsulation, decapsulation, and header analysis allows candidates to pinpoint anomalies with surgical precision. Layered abstractions facilitate modular troubleshooting, enabling focused interventions without disrupting broader network operations.
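Decapsulation and header analysis can be illustrated by unpacking the fixed 20-byte IPv4 header. This is a minimal sketch: the sample packet is synthetic, its checksum field is left at zero and is not verified, and IP options are skipped rather than parsed.

```python
import socket
import struct


def parse_ipv4_header(packet: bytes) -> dict:
    """Decode the fixed part of an IPv4 header and return the payload."""
    if len(packet) < 20:
        raise ValueError("an IPv4 header is at least 20 bytes")
    (ver_ihl, _tos, total_length, _ident, _flags_frag,
     ttl, protocol, _checksum, src, dst) = struct.unpack(
        "!BBHHHBBH4s4s", packet[:20])
    header_len = (ver_ihl & 0x0F) * 4  # IHL is counted in 32-bit words
    return {
        "version": ver_ihl >> 4,
        "header_length": header_len,
        "total_length": total_length,
        "ttl": ttl,
        "protocol": protocol,  # 6 = TCP, 17 = UDP
        "source": socket.inet_ntoa(src),
        "destination": socket.inet_ntoa(dst),
        "payload": packet[header_len:total_length],
    }


# Synthetic packet: 20-byte header followed by a 4-byte payload.
SAMPLE = struct.pack(
    "!BBHHHBBH4s4s",
    0x45, 0, 24, 1, 0, 64, 6, 0,
    socket.inet_aton("192.0.2.1"), socket.inet_aton("198.51.100.7"),
) + b"DATA"

print(parse_ipv4_header(SAMPLE))
```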

The interactions between transport, network, and data link layers reveal subtle interdependencies that influence latency, throughput, and error handling. Candidates benefit from simulating traffic scenarios, examining protocol behavior under varying load conditions, and interpreting diagnostic metrics. This analytical proficiency translates directly into enhanced problem-solving skills, ensuring readiness for complex operational challenges inherent to the 4A0-112 curriculum.

Exploring Emerging Networking Paradigms

Contemporary Nokia networks increasingly embrace paradigms that transcend conventional architectures. Software-defined wide-area networks, network slicing, and virtualization introduce flexibility and efficiency previously unattainable in static designs. These innovations allow networks to adapt to fluctuating workloads, prioritize critical services, and isolate traffic domains with surgical accuracy. Candidates who engage deeply with these concepts develop an anticipatory mindset, capable of preempting challenges and implementing solutions that align with evolving technological landscapes.

The integration of cloud-native principles with traditional networking further expands the candidate's horizon. Hybrid deployments necessitate mastery over both on-premises and cloud orchestration, ensuring seamless interoperability across heterogeneous environments. This synthesis of foundational knowledge with avant-garde techniques epitomizes the aspirational expertise cultivated through the 4A0-112 certification journey.

Optimizing Performance and Reliability

Performance optimization in Nokia networks transcends mere throughput enhancement; it encompasses latency reduction, jitter control, and packet loss minimization. Techniques such as traffic shaping, quality of service prioritization, and congestion avoidance ensure that critical applications receive preferential treatment without compromising overall network efficiency. Understanding the interplay between hardware capabilities and protocol efficiencies enables precise tuning of network parameters.

Reliability is fortified through redundancy mechanisms, failover strategies, and proactive monitoring. Link aggregation, backup paths, and load-balancing schemes distribute traffic intelligently, mitigating the impact of potential failures. Candidates are encouraged to simulate fault scenarios, analyze system responses, and refine configurations to achieve resilient infrastructures capable of sustaining high availability under diverse conditions.

Harnessing Monitoring and Troubleshooting Proficiency

Effective network management relies on continuous monitoring and diagnostic acuity. Nokia platforms provide extensive telemetry, including interface statistics, flow analytics, and event logs, which empower administrators to detect and resolve issues proactively. Tools for real-time visualization and historical analysis facilitate pattern recognition, anomaly detection, and predictive maintenance.

Troubleshooting extends beyond reactive measures; it encompasses strategic planning and iterative refinement. By dissecting packet captures, evaluating routing convergence, and scrutinizing protocol interactions, candidates develop an investigative mindset crucial for operational excellence. This competency bridges theoretical understanding with practical execution, reinforcing the foundational expertise essential for 4A0-112 certification mastery.

Harnessing Network Automation and Orchestration

Network automation has transcended mere convenience to become a fulcrum of operational agility in contemporary Nokia networks. Automation frameworks streamline configuration, deployment, and remediation tasks that once demanded painstaking manual intervention. Orchestration layers further empower engineers to choreograph complex workflows, integrating disparate systems into a cohesive, responsive network fabric.

The efficacy of automation relies on precise scripting and an intimate understanding of network protocols. YANG models and NETCONF interfaces exemplify the mechanisms through which configuration intent is communicated and enacted. Harnessing these tools reduces error susceptibility, accelerates service provisioning, and fortifies consistency across sprawling infrastructures. Additionally, automation introduces predictive capabilities, where network behavior can be anticipated and preemptive adjustments enacted to avoid degradation or downtime.
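To show what configuration intent looks like on the wire, the sketch below builds a NETCONF `edit-config` request with Python's standard XML library. It assumes the standard IETF `ietf-interfaces` YANG model purely for illustration; a given device may expose a vendor-specific model instead, and the interface name, description, and `build_edit_config` helper are hypothetical. Nothing is sent to a device here.

```python
import xml.etree.ElementTree as ET

NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
# Assumption: the device supports the IETF interfaces model.
IF_NS = "urn:ietf:params:xml:ns:yang:ietf-interfaces"


def build_edit_config(interface: str, description: str,
                      message_id: str = "101") -> str:
    """Return an <edit-config> RPC that sets an interface description
    in the candidate datastore."""
    rpc = ET.Element(f"{{{NETCONF_NS}}}rpc", {"message-id": message_id})
    edit = ET.SubElement(rpc, f"{{{NETCONF_NS}}}edit-config")
    target = ET.SubElement(edit, f"{{{NETCONF_NS}}}target")
    ET.SubElement(target, f"{{{NETCONF_NS}}}candidate")
    config = ET.SubElement(edit, f"{{{NETCONF_NS}}}config")
    interfaces = ET.SubElement(config, f"{{{IF_NS}}}interfaces")
    entry = ET.SubElement(interfaces, f"{{{IF_NS}}}interface")
    ET.SubElement(entry, f"{{{IF_NS}}}name").text = interface
    ET.SubElement(entry, f"{{{IF_NS}}}description").text = description
    return ET.tostring(rpc, encoding="unicode")


print(build_edit_config("1/1/1", "core uplink"))
```

Because the payload is generated from a function rather than typed by hand, the same template can be applied to many interfaces, which is where the consistency benefit described above comes from.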

Orchestration expands this vision by enabling holistic management across multi-domain environments. Tasks such as service chaining, policy enforcement, and traffic engineering can be programmatically synchronized, yielding a network that is both adaptive and resilient. Engineers versed in these paradigms cultivate the dexterity to deploy complex topologies efficiently, transforming network administration into a strategic, insight-driven endeavor.

Fortifying Network Security and Resilience

Security within Nokia networks extends beyond mere perimeter defense; it encompasses a layered, proactive methodology designed to anticipate and neutralize multifaceted threats. Intrusion detection systems, next-generation firewalls, and microsegmentation techniques coalesce to provide comprehensive protection. The implementation of security zones and access control policies ensures that sensitive data remains insulated, even within a highly dynamic topology.

Resilience complements security by emphasizing continuity and recoverability. High-availability architectures, redundant pathways, and failover mechanisms collectively safeguard against operational disruption. Techniques such as link aggregation and multiprotocol redundancy ensure that a single point of failure does not compromise network integrity. Moreover, resilience planning requires rigorous scenario testing and capacity forecasting, allowing engineers to respond swiftly to exigent circumstances.

A nuanced comprehension of threat vectors, from distributed denial-of-service attacks to protocol-specific exploits, empowers network specialists to proactively defend their infrastructures. This dual focus on security and resilience cultivates a network ecosystem that is both robust and agile, aligning with the expectations of enterprise-grade deployments and critical telecommunications frameworks.

Elevating Performance Monitoring and Analytics

Sophisticated performance monitoring constitutes the nervous system of a high-functioning network. Collecting telemetry data through SNMP, IPFIX, and syslog feeds provides visibility into traffic patterns, latency anomalies, and device performance. Advanced analytics platforms convert this deluge of raw data into actionable intelligence, illuminating trends that can guide optimization efforts and capacity planning.

Key performance indicators extend beyond basic throughput and error rates, encompassing jitter, packet loss, and end-to-end latency measurements. Correlating these metrics across multi-domain topologies reveals systemic bottlenecks and potential points of degradation before they manifest as service interruptions. Furthermore, predictive analytics leverage historical datasets to forecast traffic surges, informing preemptive adjustments to routing policies and bandwidth allocation.
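These indicators can be derived from a series of probe results. The sketch below is a simplified illustration: it assumes round-trip times in milliseconds with `None` marking a lost probe, and it defines jitter as the mean absolute difference between consecutive received samples, which is one common simplification rather than the smoothed estimator used by RTP.

```python
from statistics import mean


def link_kpis(rtts_ms):
    """Summarise probe results: average latency, jitter, and loss."""
    if not rtts_ms:
        raise ValueError("at least one probe result is required")
    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "avg_latency_ms": mean(received) if received else None,
        "jitter_ms": mean(deltas) if deltas else 0.0,
        "loss_pct": loss_pct,
    }


# Hypothetical probe series; the third probe was lost.
print(link_kpis([20.0, 22.0, None, 21.0, 25.0]))
```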

Network engineers who master these monitoring methodologies gain the foresight to maintain optimal service levels while minimizing operational overhead. This analytical rigor transforms network management from reactive troubleshooting to strategic stewardship, ensuring that infrastructure operates with both efficiency and foresight.

Implementing Virtualization and Cloud Integration

The advent of virtualization has catalyzed a paradigm shift in network architecture. Nokia networks increasingly leverage virtualized routing and switching instances, decoupling logical functions from physical infrastructure. This abstraction fosters flexibility, allowing multiple isolated network domains to operate concurrently on shared hardware without interference.

Integration with cloud services further extends the network’s versatility. Hybrid deployments, spanning on-premises and cloud-based resources, necessitate sophisticated orchestration and connectivity strategies. Virtual private networks, overlay tunnels, and cloud interconnect protocols ensure seamless interoperability, enabling enterprises to scale dynamically while maintaining security and performance standards.

Virtualized environments also introduce novel operational considerations. Resource contention, virtual instance provisioning, and dynamic traffic steering require vigilant monitoring and adaptive configuration strategies. Mastery of these concepts equips engineers to design networks that are not only agile but also economically and operationally efficient, aligning with the demands of modern digital enterprises.

Advancing Troubleshooting and Proactive Maintenance

In complex Nokia ecosystems, troubleshooting extends beyond mere problem identification. Engineers employ root-cause analysis methodologies, tracing issues through intricate protocol interactions and multi-layered topologies. Leveraging diagnostic tools, log correlation, and packet-level inspection, specialists can isolate anomalies with surgical precision, minimizing service impact.

Proactive maintenance amplifies this discipline by anticipating failures before they occur. Predictive algorithms assess component wear, traffic stress, and configuration drift, prompting preemptive interventions. This approach transforms network stewardship into a proactive science, reducing downtime and optimizing operational reliability.

The cultivation of these competencies requires not only technical acumen but also analytical intuition. Engineers who internalize these principles enhance their ability to sustain high-performance environments, ensuring that network infrastructure remains resilient, responsive, and strategically aligned.

Fortifying Network Perimeters with Strategic Vigilance

In contemporary telecommunications ecosystems, fortifying the network perimeter transcends mere implementation of firewalls. It encompasses an orchestrated strategy of layered defenses, integrating intrusion detection systems with adaptive response protocols. Proactive reconnaissance, coupled with continuous anomaly analytics, cultivates an anticipatory posture, transforming potential threats into manageable contingencies. Administrators must cultivate an intuition for subtle irregularities in traffic patterns, enabling preemptive mitigation before incursions materialize.

The integration of micro-segmentation architectures enhances resilience by isolating sensitive nodes and critical applications from lateral threats. Each segment functions as a controlled enclave, with policy-driven access and dynamic monitoring. This approach mitigates cascading failures during breaches, preserving operational integrity while limiting exposure to nefarious actors.

Cryptographic Integrity and Confidentiality

Encryption remains the linchpin of network confidentiality. On its own it hides content; paired with message authentication, for example an authenticated-encryption mode or digital signatures, it also protects integrity and verifies the sender. End-to-end encryption protocols must be meticulously implemented so that data traversing public and private channels stays protected. Modern algorithms, such as elliptic-curve constructions and post-quantum schemes, provide robust defenses against evolving threat paradigms.

Key management strategies form a critical adjunct to cryptographic deployments. Rotational keying, hierarchical trust models, and automated revocation systems ensure that cryptographic assets remain uncompromised, even under sustained adversarial scrutiny. These measures synergize to create a resilient communication environment where sensitive exchanges retain their integrity, fostering confidence across the organizational network spectrum.

Proactive Threat Intelligence and Behavioral Analytics

Predictive threat intelligence transforms reactive security postures into anticipatory mechanisms. By leveraging historical attack vectors and emergent threat signatures, administrators cultivate an environment of vigilance, wherein anomalies are discerned in near-real-time. Behavioral analytics provide a granular lens into network dynamics, discerning deviations from established baselines with remarkable acuity.
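One simple way to flag a deviation from an established baseline is a z-score test. The sketch below is illustrative only: the baseline values and the threshold of three standard deviations are assumptions, and production behavioral analytics use far richer models.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, threshold=3.0):
    """Flag observed if it lies more than `threshold` standard deviations
    from the baseline mean. Needs at least two baseline samples."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical baseline: connections per minute from one host.
BASELINE = [100, 102, 98, 101, 99, 100, 103, 97]

print(is_anomalous(BASELINE, 104))  # within normal variation
print(is_anomalous(BASELINE, 110))  # well outside the baseline
```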

Machine learning algorithms amplify these capabilities, detecting subtle indicators of compromise that elude conventional monitoring tools. Automated orchestration of alerts and mitigative actions ensures that response times are minimized, reducing the window of opportunity for attackers. Consequently, organizations develop a self-healing network fabric capable of dynamically adapting to evolving threat landscapes without sacrificing performance or user experience.

Endpoint Hardening and Access Governance

Endpoints, as ingress points into the network, represent both operational utility and potential vulnerability. Rigorous hardening protocols—including multifactor authentication, device attestation, and policy-enforced configurations—transform endpoints from liability into fortified nodes. Continuous auditing of endpoint integrity ensures that compromised devices are promptly quarantined, preventing propagation of malicious activity.

Access governance complements endpoint security by enforcing principle-of-least-privilege policies. Role-based and attribute-driven access controls limit exposure, ensuring that users interact only with requisite resources. Dynamic session monitoring and behavioral profiling further refine these controls, enabling administrators to revoke or escalate privileges in response to risk indicators.

Resilient Incident Response and Forensic Acumen

Incident response transcends operational reaction, evolving into a disciplined methodology of forensic investigation and strategic recovery. The construction of playbooks, aligned with both regulatory frameworks and organizational risk appetite, enables methodical containment and eradication of threats.

Forensic examination of network artifacts—including packet captures, log repositories, and configuration snapshots—reveals both the mechanics of the intrusion and latent vulnerabilities. This intelligence informs iterative improvements in security posture, transforming reactive experiences into proactive enhancements. The synergy between forensic acumen and operational resilience fosters a culture of continuous learning, reducing recurrence probability and strengthening network trustworthiness.

Compliance and Ethical Stewardship

Maintaining adherence to regulatory frameworks represents both a legal mandate and an ethical imperative. Structured compliance programs ensure alignment with data protection statutes, cybersecurity mandates, and industry-specific standards. Beyond formal obligations, this adherence fosters transparency and accountability, elevating organizational credibility.

Auditing mechanisms, coupled with automated compliance monitoring, allow administrators to demonstrate due diligence, detect deviations, and remediate gaps proactively. Ethical stewardship, underpinned by comprehensive security awareness initiatives, cultivates a workforce attuned to the nuances of risk, ultimately fortifying both human and technological components of the network infrastructure.

Intricacies of Network Latency

Network latency is often the silent saboteur of seamless communication, manifesting as imperceptible delays that cumulatively degrade performance. Understanding its multifaceted origins—from propagation delays in fiber optics to processing bottlenecks within routers—is essential for 4A0-112 practitioners. Tools such as traceroute and ping, when employed with meticulous attention to packet path anomalies, unveil latency hot spots that might otherwise remain undetected. Network architects must cultivate an anticipatory mindset, correlating latency patterns with peak traffic behaviors to anticipate congestion before it escalates.
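Propagation delay in fiber sets a floor on latency that no tuning can remove. The sketch below estimates it from distance; the refractive index of 1.468 is an assumed typical value for silica fiber, and the figure excludes queuing, serialization, and processing delays.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in a vacuum


def fiber_propagation_delay_ms(distance_km: float,
                               refractive_index: float = 1.468) -> float:
    """One-way propagation delay over optical fiber, in milliseconds."""
    velocity_m_s = SPEED_OF_LIGHT_M_S / refractive_index
    return distance_km * 1000 / velocity_m_s * 1000


for km in (100, 1000, 5000):
    print(f"{km} km: about {fiber_propagation_delay_ms(km):.2f} ms one way")
```

As a rule of thumb this works out to roughly 5 ms per 1000 km one way, so a measured round-trip time far above twice that figure points to queuing or processing delay rather than distance.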

The Art of Packet Dissection

Packet analysis is not merely a technical procedure but a cognitive exercise in forensic networking. Each frame and datagram carries a story of traversal, error, and transformation. Mastery of protocol analyzers like Wireshark allows administrators to decode packet headers, sequence numbers, and flag fields with unprecedented granularity. By interpreting these microcosms of network activity, candidates can discern hidden misconfigurations, unauthorized intrusions, or inefficient routing pathways. This meticulous packet scrutiny serves as the foundation of an optimized, resilient network infrastructure.
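The fields a protocol analyzer displays can also be decoded by hand, which helps when interpreting a capture. The following sketch unpacks the fixed part of a TCP header; the sample segment is synthetic (a SYN toward port 179, the BGP port) and TCP options are not parsed.

```python
import struct

# Flag bits in the low byte of the 16-bit offset/flags field.
TCP_FLAGS = [
    (0x01, "FIN"), (0x02, "SYN"), (0x04, "RST"), (0x08, "PSH"),
    (0x10, "ACK"), (0x20, "URG"), (0x40, "ECE"), (0x80, "CWR"),
]


def parse_tcp_header(segment: bytes) -> dict:
    """Decode ports, sequence numbers, and flags from a TCP header."""
    if len(segment) < 20:
        raise ValueError("a TCP header is at least 20 bytes")
    src_port, dst_port, seq, ack, offset_flags, window = struct.unpack(
        "!HHIIHH", segment[:16])
    return {
        "src_port": src_port,
        "dst_port": dst_port,
        "seq": seq,
        "ack": ack,
        "header_length": (offset_flags >> 12) * 4,  # offset is in 32-bit words
        "flags": [name for bit, name in TCP_FLAGS if offset_flags & bit],
        "window": window,
    }


# Synthetic SYN segment: data offset 5 words, SYN flag set.
SAMPLE_SYN = struct.pack(
    "!HHIIHHHH", 49152, 179, 1000, 0, (5 << 12) | 0x02, 65535, 0, 0)

print(parse_tcp_header(SAMPLE_SYN))
```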

Dynamic Load Balancing Mechanisms

Network optimization transcends troubleshooting by incorporating mechanisms that distribute workloads intelligently. Dynamic load balancing ensures that no single node or path is overwhelmed, promoting equilibrium across complex topologies. Techniques like hash-based distribution, least-connection routing, and adaptive algorithms enable networks to self-regulate in real time. Professionals who internalize these mechanisms cultivate the dexterity to enhance throughput without compromising latency, a competency directly aligned with 4A0-112 proficiency.
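Two of the techniques named above can be sketched in a few lines. This is an illustration under assumptions: the flow tuple layout, path names, and connection counts are hypothetical, and SHA-256 stands in for whatever hash function real forwarding hardware uses.

```python
import hashlib


def hash_based_choice(flow, paths):
    """Pick a path from a hash of the flow identifiers, so every packet of
    the same flow follows the same path and stays in order."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return paths[int.from_bytes(digest[:8], "big") % len(paths)]


def least_connection_choice(active_connections):
    """Pick the target currently serving the fewest connections."""
    return min(active_connections, key=active_connections.get)


PATHS = ["link-a", "link-b", "link-c"]
FLOW = ("192.0.2.1", "198.51.100.7", 49152, 179, "tcp")

print(hash_based_choice(FLOW, PATHS))
print(least_connection_choice({"srv-1": 12, "srv-2": 3, "srv-3": 7}))
```

Hash-based distribution is stateless and keeps a flow on one path, but it can load links unevenly when a few flows dominate; least-connection selection adapts to load at the cost of tracking state.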

Predictive Analytics in Network Health

Emergent technologies now permit predictive insight into network performance, leveraging historical metrics to forecast anomalies. By analyzing patterns in throughput fluctuations, packet loss, and jitter variations, administrators can anticipate failures before they materialize. Predictive models harnessing machine learning can identify subtle precursors to congestion or hardware degradation, transforming reactive troubleshooting into proactive orchestration. This forward-looking paradigm exemplifies the caliber of expertise expected of a 4A0-112 aspirant.

Strategic Traffic Shaping

Traffic shaping is an artful orchestration of data flow, calibrated to prioritize critical operations while mitigating bandwidth saturation. By delineating traffic classes, setting bandwidth ceilings, and enforcing policy-driven queuing, administrators sculpt network performance to precise operational specifications. Understanding the interplay between latency-sensitive applications and bulk transfers allows for judicious allocation, ensuring mission-critical services maintain continuity even under high demand. This mastery of flow control signifies a deep, operational fluency in network stewardship.
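A bandwidth ceiling with a burst allowance is commonly modelled as a token bucket. The sketch below is a minimal version that only decides whether a packet conforms; a shaper would queue non-conforming packets until enough tokens accumulate, while a policer would drop or re-mark them. The rate, burst size, and timestamps are hypothetical, and time is passed in explicitly to keep the example deterministic.

```python
class TokenBucket:
    """Token bucket: tokens (bytes) refill at a fixed rate up to a burst cap."""

    def __init__(self, rate_bps: float, burst_bytes: float):
        self.rate_bytes_s = rate_bps / 8
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = 0.0

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet conforms at time `now` (seconds)."""
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.rate_bytes_s)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False


bucket = TokenBucket(rate_bps=8000, burst_bytes=1500)  # 1000 bytes per second
for t in (0.0, 0.5, 1.5):
    print(t, bucket.allow(1500, now=t))
```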

Automated Monitoring and Alerting

In contemporary network environments, automation is indispensable. Deploying intelligent monitoring systems that continuously analyze link status, interface errors, and protocol anomalies allows administrators to respond with immediacy. Automated alerts configured for nuanced thresholds can distinguish between benign fluctuations and genuine threats, streamlining incident response and reducing human error. The integration of these monitoring paradigms reinforces the robustness of network architecture, reflecting the advanced competencies assessed in 4A0-112 evaluations.
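One way to separate benign fluctuations from genuine problems is to require several consecutive breaches before raising an alert and a lower value before clearing it. The sketch below illustrates that hysteresis idea; the class name, thresholds, and sample values are hypothetical.

```python
class HysteresisAlert:
    """Raise after N consecutive samples at or above `raise_at`;
    clear only once a sample falls to `clear_at` or below."""

    def __init__(self, raise_at: float, clear_at: float, consecutive: int = 3):
        self.raise_at = raise_at
        self.clear_at = clear_at
        self.consecutive = consecutive
        self.active = False
        self._streak = 0

    def update(self, value: float) -> bool:
        """Feed one sample and return whether the alert is active."""
        if not self.active:
            self._streak = self._streak + 1 if value >= self.raise_at else 0
            if self._streak >= self.consecutive:
                self.active = True
                self._streak = 0
        elif value <= self.clear_at:
            self.active = False
        return self.active


# Hypothetical interface error counts per polling interval.
alert = HysteresisAlert(raise_at=100, clear_at=50, consecutive=3)
for sample in (10, 120, 20, 110, 130, 140, 80, 40):
    print(sample, alert.update(sample))
```

The single spike of 120 is ignored, the sustained run of 110, 130, 140 raises the alert, and the alert stays active at 80 until the value drops to 40.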

Resilience through Redundancy

Optimization extends beyond performance into reliability. Network redundancy—through parallel links, failover configurations, and clustering—fortifies systems against unforeseen disruptions. Administrators must balance redundancy with efficiency, avoiding superfluous pathways while maintaining fault-tolerant designs. Understanding the stochastic nature of hardware failures and traffic spikes informs the construction of resilient networks capable of sustaining service continuity under diverse conditions.
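The balance between redundancy and efficiency can be quantified with a basic availability calculation. The sketch below assumes link failures are independent, which is optimistic when links share a conduit, chassis, or power feed; the 99% per-link availability is a hypothetical figure.

```python
def parallel_availability(link_availability: float, links: int) -> float:
    """Availability of a group that works if at least one link is up,
    assuming independent failures."""
    return 1 - (1 - link_availability) ** links


def annual_downtime_minutes(availability: float) -> float:
    """Expected downtime per 365-day year, in minutes."""
    return (1 - availability) * 365 * 24 * 60


for n in (1, 2, 3):
    a = parallel_availability(0.99, n)
    print(f"{n} link(s): availability {a:.6f}, "
          f"about {annual_downtime_minutes(a):.1f} min/year")
```

Each added link removes less downtime than the one before it, which is the quantitative reason for avoiding superfluous pathways.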

Fine-Tuning Routing Protocols

Routing protocols constitute the cerebral cortex of network infrastructure, governing the flow of information across myriad nodes. Fine-tuning protocol parameters, from metric weights to convergence timers, allows administrators to optimize routing decisions dynamically. Understanding the subtleties of OSPF, IS-IS, and BGP behaviors, including route flapping mitigation and path preference hierarchies, equips 4A0-112 candidates with the capability to engineer networks that are both performant and stable. These adjustments are subtle yet potent, directly influencing overall network efficiency.
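As one example of a metric weight, OSPF implementations commonly derive an interface cost by dividing a configurable reference bandwidth by the interface bandwidth. The sketch below illustrates that calculation; the reference values used are assumptions for the example, so check the defaults on the platform in question.

```python
def ospf_cost(interface_bps: int, reference_bps: int = 100_000_000_000) -> int:
    """Cost = reference bandwidth / interface bandwidth, never below 1.

    The 100 Gb/s default here is an assumption for illustration only.
    """
    return max(1, reference_bps // interface_bps)


GBPS = 1_000_000_000
for speed in (1, 10, 100, 400):
    print(f"{speed} Gb/s -> cost {ospf_cost(speed * GBPS)}")

# Raising the reference bandwidth lets faster links be told apart.
print(ospf_cost(400 * GBPS, reference_bps=1_000 * GBPS))
```

With a 100 Gb/s reference, 100 Gb/s and 400 Gb/s links both receive a cost of 1, so the protocol cannot prefer the faster one; adjusting the reference bandwidth is a typical tuning step for that reason.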

The Confluence of Security and Performance

Effective network optimization does not occur in isolation from security. Packet inspection, anomaly detection, and firewall rule harmonization ensure that performance gains do not compromise protective measures. Administrators must reconcile throughput objectives with intrusion prevention, maintaining a delicate equilibrium where both security and performance are maximized. Mastery of this confluence is emblematic of the advanced analytical thinking expected of professionals targeting 4A0-112 certification.

The Evolution of Network Cognition

Network ecosystems have undergone a profound metamorphosis, transitioning from static infrastructures to cognitive frameworks that respond to stimuli with unprecedented agility. This evolution hinges on the assimilation of artificial intelligence and predictive algorithms into the fabric of network operations. By harnessing these sophisticated paradigms, engineers orchestrate data flows with meticulous precision, preempting anomalies before they manifest as disruptions. Cognitive networking reframes traditional paradigms, transforming reactive troubleshooting into anticipatory, self-optimizing processes.

The integration of autonomous decision-making agents amplifies operational dexterity, enabling networks to reconfigure dynamically under fluctuating load conditions. This capability transcends conventional automation, manifesting as an intricate ballet of interdependent mechanisms that coalesce into harmonized, resilient infrastructures.

Telemetry-Driven Insight

Telemetry has emerged as the fulcrum of modern orchestration, furnishing granular visibility into network behavior and performance metrics. Beyond rudimentary monitoring, contemporary telemetry systems provide context-rich insights that illuminate latent inefficiencies and potential bottlenecks. By aggregating and analyzing real-time data streams, these systems facilitate predictive maintenance and optimize resource allocation, enhancing service continuity.

This telemetry-driven insight underpins the strategic orchestration of network resources, empowering operators to synchronize traffic flows, calibrate bandwidth allocation, and implement adaptive policies with surgical accuracy. The fusion of data science with networking engenders a proactive operational paradigm, wherein decisions are informed by empirical foresight rather than reactive necessity.

Programmable Network Fabric

Programmability lies at the nexus of automation and orchestration, enabling the translation of abstract policies into tangible network configurations. Through APIs, configuration scripts, and intent-based interfaces, operators can dictate network behavior with unparalleled granularity. Programmable fabrics eschew rigidity, fostering an environment where modifications propagate seamlessly, minimizing latency and operational friction.

Such malleability proves indispensable in multi-cloud environments, where heterogeneity and dynamic resource demands necessitate agile, responsive infrastructures. By orchestrating these programmable elements, networks achieve a state of fluidity, adapting to fluctuating workloads and evolving application landscapes without compromising stability.

Machine Learning in Orchestration

Machine learning has transcended its nascent applications, becoming a cornerstone of advanced orchestration. By assimilating historical data, models discern patterns and infer predictive behaviors, enabling preemptive mitigation of network congestion, latency spikes, and configuration anomalies. Reinforcement learning agents iteratively refine routing and policy decisions, achieving near-autonomous operational optimization.

The symbiosis of machine learning and orchestration yields a network that evolves organically, continuously optimizing performance and resilience. Engineers equipped with mastery of these paradigms navigate complex infrastructures with surgical precision, anticipating challenges before they manifest and deploying remedies instantaneously.

Service Choreography

Orchestration extends beyond mere automation, encompassing the choreography of services across heterogeneous domains. This entails the alignment of compute, storage, and networking elements to deliver cohesive, end-to-end service experiences. Service choreography ensures that interdependent components operate in concert, maintaining performance SLAs while optimizing resource utilization.

By employing abstraction layers and declarative configurations, orchestration platforms empower operators to manage intricate topologies with simplicity and efficacy. The resultant networks exhibit both elasticity and stability, capable of assimilating new services or decommissioning legacy elements without operational perturbation.

Resilience Through Predictive Analytics

Predictive analytics serve as the vanguard of resilient network design. By modeling potential failure scenarios and simulating performance trajectories, operators preemptively reinforce vulnerable nodes and optimize redundancy strategies. This foresight reduces unplanned downtime, enhances user experience, and safeguards critical applications against environmental and operational perturbations.

In tandem with orchestration, predictive analytics enable self-healing networks that detect anomalies, isolate faults, and execute corrective measures autonomously. The network thus becomes not merely a conduit for data but a system capable of protecting itself and adapting under duress.

Cross-Domain Integration

Modern orchestration transcends singular network domains, embracing multi-layered, cross-domain integration. Coordinating diverse subsystems—ranging from traditional routing fabrics to virtualized overlays—requires seamless interoperation and unified policy enforcement. Such integration mitigates silos, reduces operational complexity, and enables holistic visibility across sprawling infrastructures.

By unifying these domains, orchestration platforms facilitate coherent service delivery, dynamic scaling, and efficient lifecycle management. Engineers leverage these integrated views to optimize capacity, streamline fault detection, and deploy services with unprecedented alacrity.

Intent-Based Networking Paradigm

Intent-based networking epitomizes the zenith of orchestration sophistication. By specifying desired outcomes rather than procedural configurations, operators delegate the translation into actionable network policies to intelligent orchestration engines. These engines interpret, validate, and implement intents across the infrastructure, continuously reconciling outcomes with objectives.

This paradigm diminishes human error, accelerates deployment cycles, and fosters adaptive infrastructures capable of responding to emergent requirements autonomously. Operators transition from configuration artisans to strategic overseers, guiding networks toward operational excellence with minimal manual intervention.
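The reconciliation step described above can be sketched in a few lines. This is a minimal illustration, not any vendor's engine: the dictionary-based state model and the `reconcile` and `apply_actions` names are assumptions made for the example.

```python
def reconcile(intent: dict, observed: dict) -> list:
    """Compare desired state (intent) with observed state and list the fixes."""
    actions = []
    for key in sorted(intent):
        if key not in observed:
            actions.append(("create", key, intent[key]))
        elif observed[key] != intent[key]:
            actions.append(("update", key, intent[key]))
    # Anything present on the network but absent from the intent is removed.
    for key in sorted(observed.keys() - intent.keys()):
        actions.append(("delete", key, observed[key]))
    return actions


def apply_actions(observed: dict, actions: list) -> dict:
    """Return the state that results from applying the actions (hypothetical)."""
    state = dict(observed)
    for operation, key, value in actions:
        if operation == "delete":
            state.pop(key, None)
        else:
            state[key] = value
    return state


# Hypothetical intent and observed state for three VLANs.
intent = {"vlan10": {"mtu": 1500}, "vlan20": {"mtu": 9000}}
observed = {"vlan10": {"mtu": 1400}, "vlan30": {"mtu": 1500}}
plan = reconcile(intent, observed)
```

Running the comparison repeatedly, and applying the resulting plan each time, is what keeps outcomes aligned with objectives; once the states match, the plan is empty.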

Immersive Conceptual Familiarization

Attaining mastery in the 4A0-112 certification demands profound conceptual familiarization. Candidates must cultivate a granular understanding of intricate networking paradigms, including protocol orchestration, routing intricacies, and fault-tolerant architectures. Engaging deeply with these concepts creates a cognitive scaffolding that underpins advanced problem-solving abilities and enhances retention through mental visualization of network topologies and data flow sequences.

Experiential Learning in Controlled Environments

Practical immersion is paramount for internalizing theoretical knowledge. Simulated environments, virtual labs, and emulators serve as cognitive playgrounds where aspirants experiment with configurations, troubleshoot anomalies, and test performance under varying network loads. This experiential methodology promotes synaptic reinforcement, ensuring that theoretical frameworks translate seamlessly into actionable skills during real-world network deployments.

Strategic Cognitive Segmentation

Dividing the syllabus into cohesive cognitive segments optimizes assimilation and reduces mental fatigue. Employing temporal partitioning allows focused attention on individual networking modules while maintaining continuity across interrelated topics. Conceptual chunking, reinforced by mnemonic aids and iterative review cycles, fortifies long-term retention, allowing candidates to recall intricate processes under the temporal pressure of examination settings.

Analytical Simulation Exercises

Analytical simulations serve as crucibles for honing diagnostic acumen. Candidates engage with scenario-based problem sets that mimic operational anomalies, latency fluctuations, and protocol conflicts. Through iterative experimentation and reflective analysis, aspirants develop anticipatory reasoning, enabling rapid identification and rectification of network discrepancies in both virtual and physical infrastructures.

Integrative Study Alliances

Collaboration through integrative study alliances enhances comprehension and cognitive flexibility. Dialogue with peers in focused cohorts encourages the exchange of diverse methodologies, troubleshooting heuristics, and optimization strategies. Collective inquiry fosters a multifaceted perspective, empowering candidates to approach complex network scenarios with adaptable strategies and a nuanced understanding of systemic interdependencies.

Temporal Discipline and Revision Cadence

Structured temporal discipline is vital for navigating the extensive curriculum efficiently. Establishing a deliberate cadence for study, practical application, and revision consolidates learning while mitigating cognitive saturation. Periodic review sessions, interspersed with reflective exercises and self-assessment evaluations, embed neural pathways that enhance long-term retention and cognitive agility in problem-solving contexts.

Technological Vigilance and Trend Assimilation

Remaining attuned to technological evolutions enriches preparation for 4A0-112 certification. Awareness of emergent protocols, hardware innovations, and industry benchmarks cultivates a dynamic understanding of network ecosystems. This vigilant engagement ensures that candidates not only memorize static content but also develop a forward-looking proficiency that aligns with contemporary professional exigencies.

Cognitive Endurance through Iterative Challenges

Sustaining cognitive endurance is essential for managing the complexity of advanced networking scenarios. Iterative exposure to progressively intricate challenges, coupled with reflective analysis, enhances mental resilience and fortifies strategic thinking. This enduring practice ensures that candidates approach the certification examination with both intellectual stamina and procedural confidence.

Synthesis of Theoretical and Practical Dimensions

The confluence of theoretical rigor and practical dexterity epitomizes effective preparation. Synthesizing abstract concepts with hands-on implementation bridges cognitive gaps and fosters integrative intelligence. This dual-focused approach ensures that aspirants emerge not only as adept problem solvers but also as innovators capable of optimizing network performance in dynamic and demanding environments.

Cognitive Calibration through Mock Evaluations

Mock evaluations serve as critical instruments for calibrating knowledge and performance under simulated examination conditions. Engaging with timed assessments, adaptive question banks, and scenario-based inquiries enables candidates to refine pacing, enhance accuracy, and cultivate strategic prioritization. This cognitive calibration fortifies confidence and mitigates examination-related anxiety, ensuring measured and effective responses under evaluative pressure.

Neurocognitive Enhancement Techniques

Optimizing neural pathways can significantly amplify retention and problem-solving capacity. Candidates can employ visualization exercises that map network topologies, protocol interactions, and fault resolutions in mental schematics. Cognitive rehearsal of configuration scenarios, combined with spaced repetition, engrains complex sequences into long-term memory. This mental orchestration enhances synaptic efficiency, ensuring rapid recall under examination pressure.

Iterative Scenario Deconstruction

Breaking down intricate networking scenarios into elemental components facilitates granular comprehension. By isolating variables such as bandwidth constraints, routing loops, or device hierarchies, candidates gain clarity on causal relationships. Deconstructive analysis, when repeated across multiple case studies, cultivates a sophisticated diagnostic lens, enabling practitioners to predict and preempt network anomalies with confidence.

Adaptive Troubleshooting Frameworks

Developing adaptive troubleshooting frameworks is essential for mastering dynamic network environments. Candidates should integrate heuristic strategies with algorithmic reasoning to tackle diverse challenges. This synthesis encourages flexible cognition, allowing rapid recalibration of methods when conventional approaches falter. Over time, this adaptability transforms reactive problem-solving into anticipatory network governance.

Cognitive Resilience in High-Stakes Simulation

Endurance under high-pressure conditions is a pivotal determinant of success. Engaging with high-stakes simulation exercises fosters cognitive resilience, mitigating stress-induced performance degradation. Incorporating incremental difficulty in practice environments cultivates tolerance to complex problem sets, ensuring that aspirants retain clarity and analytical precision even when confronted with unfamiliar configurations or cascading network failures.

Holistic Network Ecosystem Comprehension

A profound understanding of the holistic network ecosystem transcends rote memorization. Candidates should internalize the interconnectivity between layers, the synergy of protocols, and the emergent behaviors of networked devices. Appreciating these systemic relationships fosters strategic insight, enabling optimized decision-making in real-world deployments, where changes in one subsystem may propagate unforeseen consequences across the entire architecture.

Metacognitive Reflection and Self-Assessment

Regular metacognitive reflection is instrumental in identifying strengths, weaknesses, and cognitive biases. Candidates should systematically evaluate their problem-solving approaches, time management strategies, and conceptual understanding. Self-assessment not only consolidates knowledge but also cultivates self-awareness, allowing aspirants to recalibrate study methods dynamically and optimize learning efficacy for maximum examination readiness.

Integrative Lab-Based Experimentation

Advanced preparation mandates prolonged engagement with lab-based experimentation. Beyond routine configurations, candidates should explore stress-testing protocols, simulating node failures, latency spikes, and security breaches. This proactive experimentation deepens practical comprehension and exposes subtleties in protocol behavior that are rarely addressed in theoretical study, cultivating a robust, experience-driven expertise.

Interdisciplinary Knowledge Application

Integrating insights from adjacent domains such as cybersecurity, cloud orchestration, and data analytics can enrich networking comprehension. Candidates who understand how auxiliary technologies interact with core network functions gain strategic leverage. This interdisciplinary approach sharpens analytical acuity, allowing candidates to design resilient, optimized network architectures that account for cross-domain interactions.

Algorithmic Optimization Practice

Algorithmic proficiency is central to mastering complex routing and traffic management. Candidates should engage with exercises that optimize path selection, bandwidth allocation, and latency reduction. By modeling and simulating algorithmic adjustments, aspirants refine their computational thinking, which is critical for anticipating bottlenecks and implementing scalable, efficient solutions in production-grade networks.

Cognitive Load Management Strategies

Effective preparation hinges on managing cognitive load to prevent burnout and enhance assimilation. Techniques such as distributed practice, dual coding of theoretical and practical content, and strategic interleaving of challenging modules reduce mental strain. Proper cognitive load management ensures sustained attention, deeper comprehension, and a steady pace toward mastery of the multifaceted 4A0-112 syllabus.

Scenario-Driven Conceptual Synthesis

Merging abstract concepts with scenario-based applications reinforces holistic understanding. Candidates should routinely craft hypothetical network problems, integrating multiple subsystems, routing protocols, and redundancy mechanisms. Synthesizing solutions encourages cross-referential reasoning, promoting a cohesive mental framework where theoretical knowledge seamlessly translates into actionable network strategies.

Dynamic Feedback Loops

Implementing dynamic feedback loops enhances learning efficiency. Candidates can engage in iterative exercises where outcomes are critically analyzed and strategies are adjusted in real time. Feedback loops accelerate the identification of misconceptions, refine procedural accuracy, and cultivate a mindset attuned to continuous improvement, which is essential for navigating the rapidly evolving landscape of modern networking technologies.

Resilient Knowledge Mapping

Creating resilient knowledge maps transforms fragmented information into structured, retrievable frameworks. Candidates can employ mind mapping, layered schematics, and annotated flowcharts to visualize protocol hierarchies, device interdependencies, and fault propagation pathways. These cognitive artifacts serve as persistent reference points, reducing recall latency and reinforcing the integration of complex concepts.

Advanced Troubleshooting Simulations

Immersive troubleshooting simulations expose candidates to cascading failures, hybrid protocol interactions, and emergent anomalies. By engaging with multi-dimensional challenges that blend security, performance, and redundancy considerations, aspirants cultivate holistic problem-solving dexterity. These simulations mirror operational pressures and foster the analytical agility required for rapid, precise intervention in live network environments.

Temporal Spacing of Revision Cycles

Implementing temporal spacing in revision cycles significantly enhances retention. Candidates should stagger review sessions over increasing intervals, interleaving related concepts and revisiting high-complexity modules. This temporal distribution leverages the spacing effect, ensuring that critical knowledge is consolidated into long-term memory and readily accessible for both examination and practical application.

Proactive Risk Anticipation

Strategic preparation involves anticipating potential network risks and failure modes. Candidates should cultivate a proactive mindset that evaluates latency, congestion, protocol incompatibilities, and security vulnerabilities before they manifest. Anticipatory analysis strengthens decision-making under uncertainty, a skill that distinguishes proficient network engineers from those reliant solely on reactive troubleshooting.

Cognitive Integration of Protocol Ecosystems

Understanding the synergistic interplay among protocol ecosystems is critical. Candidates should explore how routing, signaling, and transport protocols influence one another under varied conditions. Integrating this knowledge fosters an ecosystemic perspective, enabling practitioners to design resilient architectures, optimize traffic flows, and predict systemic impacts with precision and foresight.

Reflective Performance Journals

Maintaining reflective performance journals allows candidates to track progress, document anomalies, and articulate insights from practical exercises. Journaling consolidates experiential learning and encourages self-directed improvement. This practice sharpens analytical clarity and embeds iterative refinement into the preparation process, fostering a culture of disciplined mastery and intellectual resilience.

Simulation of Unforeseen Network Anomalies

Exposure to unforeseen network anomalies during preparation cultivates adaptive intelligence. Candidates should simulate rare events, protocol conflicts, and cascading failures to develop contingency strategies. This deliberate engagement with atypical scenarios equips aspirants with the agility to maintain operational continuity under unpredictable conditions, enhancing confidence and problem-solving efficacy.

Synergistic Knowledge Networks

Collaborative learning extends beyond individual study, forming synergistic knowledge networks. Candidates who interact with mentors, peers, and cross-disciplinary professionals benefit from diverse perspectives, heuristics, and optimization strategies. These networks accelerate conceptual integration, encourage innovative problem-solving, and cultivate a professional ethos attuned to the collaborative nature of modern network engineering.

Autonomous Network Topologies

The emergence of autonomous network topologies has redefined traditional infrastructure paradigms. In these self-governing architectures, nodes communicate seamlessly, adapting dynamically to fluctuating loads and unexpected failures. Autonomy enables decentralized decision-making, allowing each element to optimize routing, bandwidth allocation, and latency mitigation independently while maintaining cohesion across the system.

This paradigm reduces dependency on centralized control, creating networks that thrive under complexity. Engineers orchestrating these topologies leverage algorithms that balance local optimization with global performance objectives, ensuring robust and scalable architectures.

Dynamic Policy Enforcement

Dynamic policy enforcement has emerged as a cornerstone of next-generation orchestration frameworks. Unlike static configurations, dynamic policies adapt in real time to traffic patterns, security threats, and service demands. These policies govern access control, quality of service, and resource allocation with precision, reducing bottlenecks and promoting equitable distribution of network capacity.

Through continuous telemetry analysis, orchestration engines reconcile policy intent with observed behaviors, automatically adjusting configurations to align with overarching operational objectives. The result is a network ecosystem that embodies both intelligence and flexibility.

Service Velocity Optimization

Service velocity optimization addresses the pressing demand for accelerated deployment of applications and services. By automating provisioning, scaling, and lifecycle management, orchestration platforms minimize latency between requirement identification and service activation. This velocity fosters business agility, enabling rapid experimentation, deployment of innovative solutions, and instantaneous adaptation to market shifts.

Operators employ automated pipelines, workflow engines, and template-driven deployment strategies to synchronize multi-domain components, achieving consistent and predictable service delivery across heterogeneous infrastructures.

Intent Translation Mechanisms

At the heart of advanced orchestration lies the translation of abstract intents into tangible configurations. Intent translation mechanisms interpret high-level business objectives, convert them into executable policies, and enforce them across network elements. These mechanisms leverage semantic models, rule-based engines, and machine learning algorithms to reconcile intent with operational constraints, delivering both precision and scalability.

By abstracting procedural details, intent translation empowers operators to focus on strategic objectives rather than manual configuration minutiae, fostering a culture of innovation and proactive network management.

Adaptive Security Orchestration

Security orchestration has evolved from reactive defenses to adaptive, anticipatory frameworks. Leveraging real-time telemetry, predictive analytics, and threat intelligence, orchestration platforms detect anomalies, isolate compromised segments, and deploy countermeasures autonomously. This adaptability ensures that networks remain resilient against emerging attack vectors without compromising performance or service continuity.

By embedding security into automated workflows, organizations achieve a unified posture that balances operational agility with robust threat mitigation. Engineers orchestrate security responses that scale seamlessly with evolving network topologies, creating ecosystems that are both intelligent and hard to compromise.

Resource Convergence Strategies

Resource convergence strategies harmonize compute, storage, and networking assets to maximize efficiency. Orchestration platforms analyze utilization patterns, predict future demand, and dynamically allocate resources to maintain optimal performance. This convergence reduces wastage, lowers operational costs, and ensures that critical applications receive priority access to infrastructure.

By employing predictive heuristics and adaptive scheduling, operators orchestrate resources with surgical precision, enabling networks to accommodate peaks in demand without service degradation.

Event-Driven Automation

Event-driven automation enhances responsiveness by triggering workflows based on predefined conditions or spontaneous occurrences. Whether it is a spike in network traffic, a device failure, or a security alert, event-driven mechanisms activate corrective actions instantaneously, ensuring minimal disruption and maximum efficiency.

This automation paradigm relies on intelligent event correlation, contextual analysis, and rapid decision-making, transforming networks from passive conduits into active, self-optimizing ecosystems that respond with agility and foresight.
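A minimal sketch of the trigger-and-respond pattern follows. The `EventBus` class, the event names, and the handler text are all hypothetical; production platforms add event correlation, persistence, and retries that are omitted here.

```python
from collections import defaultdict


class EventBus:
    """Route events to the handlers registered for their type."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_type, handler):
        """Register a handler for one event type."""
        self._handlers[event_type].append(handler)

    def emit(self, event_type, payload):
        """Run every handler for this event type and collect the results."""
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


def reroute(event):
    # Hypothetical corrective action for a failed link.
    return "reroute traffic away from " + event["interface"]


bus = EventBus()
bus.on("link_down", reroute)
results = bus.emit("link_down", {"interface": "eth1"})
```

Events with no registered handler simply produce an empty result list, so unknown conditions do not interrupt the workflow.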

Multi-Layer Orchestration Frameworks

Multi-layer orchestration frameworks integrate diverse infrastructure layers—physical, virtual, and software-defined—into cohesive management planes. These frameworks facilitate end-to-end visibility, coordinated provisioning, and seamless policy enforcement, bridging the gaps between siloed subsystems.

Operators leverage abstraction, service modeling, and standardized APIs to ensure consistent orchestration across layers, enabling rapid deployment of complex services and maintaining operational continuity in highly dynamic environments.

Predictive Capacity Management

Predictive capacity management empowers networks to anticipate future demand and preemptively adjust resources. Through advanced analytics and historical trend assessment, orchestration engines forecast traffic patterns, potential congestion points, and resource bottlenecks. Proactive adjustments mitigate latency, prevent service degradation, and maintain optimal throughput.

By integrating predictive capabilities into orchestration workflows, operators achieve continuous alignment between capacity and demand, fostering networks that operate at peak efficiency while minimizing over-provisioning and waste.
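As one simple illustration of trend-based forecasting, the sketch below fits a least-squares line to equally spaced utilization samples and extrapolates it. The `linear_forecast` name and the sample values are assumptions; real platforms typically use richer models that account for seasonality.

```python
def linear_forecast(samples, steps_ahead):
    """Extrapolate a least-squares trend line fitted to equally spaced samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(samples))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # The last observed sample sits at x = n - 1.
    return intercept + slope * (n - 1 + steps_ahead)


# Hypothetical weekly peak utilization in Mbps.
weekly_peak_mbps = [10, 20, 30, 40]
forecast = linear_forecast(weekly_peak_mbps, steps_ahead=2)
```

Comparing the forecast against link capacity gives an early signal of when an upgrade or a reallocation is likely to be needed.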

Cognitive Fault Remediation

Cognitive fault remediation represents the evolution of network resilience. Leveraging artificial intelligence, orchestration platforms detect subtle anomalies, diagnose root causes, and implement corrective actions autonomously. These systems learn from prior incidents, refining their response strategies to improve accuracy and speed over time.

This intelligent remediation reduces downtime, enhances user experience, and minimizes manual intervention. Operators benefit from networks that not only respond to faults but also evolve in their capacity to prevent recurrence, creating self-healing environments.

Cross-Platform Integration

Cross-platform integration ensures that orchestration platforms can unify disparate technologies, protocols, and vendor solutions. By establishing standardized interfaces and abstraction layers, networks achieve interoperability without compromising functionality or security. This integration reduces complexity, streamlines management, and facilitates seamless deployment of multi-vendor solutions.

Operators can orchestrate end-to-end services across heterogeneous ecosystems, maintaining consistent performance, policy adherence, and operational visibility regardless of underlying infrastructure diversity.

Autonomous Scaling Algorithms

Autonomous scaling algorithms allow networks to adjust dynamically to fluctuating workloads. Leveraging telemetry, predictive analytics, and machine learning, these algorithms trigger horizontal or vertical scaling of resources in real time. This ensures uninterrupted service delivery, maintains performance SLAs, and optimizes infrastructure utilization.

By embedding scaling intelligence into orchestration frameworks, operators achieve elasticity at unprecedented speeds, adapting to surges in demand without manual intervention or pre-defined thresholds.
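One common way to express a horizontal scaling decision is a proportional rule: scale the instance count by the ratio of observed to target utilization, then clamp the result. The sketch below assumes that rule; the function name, the bounds, and the percentages are illustrative only.

```python
import math


def desired_instances(current, observed_util_pct, target_util_pct,
                      min_instances=1, max_instances=10):
    """Proportional scaling rule, clamped to configured bounds."""
    raw = math.ceil(current * observed_util_pct / target_util_pct)
    return max(min_instances, min(max_instances, raw))


# Four instances running at 90% utilization against a 60% target.
scale_out = desired_instances(4, 90, 60)
```

The clamp matters in practice: without an upper bound, a telemetry glitch that reports extreme utilization would request an unbounded number of instances.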

Workflow Intelligence Engines

Workflow intelligence engines orchestrate complex sequences of automated actions across multiple network domains. These engines leverage decision logic, conditional triggers, and dependency mapping to ensure that tasks execute in the correct sequence and with precise timing. By embedding contextual awareness into workflows, orchestration platforms can respond to dynamic conditions, optimizing service delivery and operational efficiency.

This intelligence transforms networks into adaptive entities capable of handling intricate processes autonomously, freeing engineers to focus on strategic innovation rather than routine management.
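Dependency mapping of this kind is often modeled as a directed acyclic graph and resolved with a topological sort. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later); the task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it can start.
workflow = {
    "allocate_vlan": set(),
    "configure_switch": {"allocate_vlan"},
    "configure_firewall": {"allocate_vlan"},
    "activate_service": {"configure_switch", "configure_firewall"},
}


def execution_order(dependencies):
    """Return one valid order in which the tasks can run."""
    return list(TopologicalSorter(dependencies).static_order())


order = execution_order(workflow)
```

If the dependency map contains a cycle, `static_order()` raises `graphlib.CycleError`, which lets a workflow engine reject an impossible plan before executing anything.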

Policy-Driven Traffic Shaping

Policy-driven traffic shaping allows operators to enforce performance, security, and compliance objectives in real time. Orchestration platforms analyze traffic patterns, classify flows, and apply dynamic policies that prioritize critical applications, mitigate congestion, and maintain predictable performance. These mechanisms enable networks to adapt instantaneously to changing demands while adhering to business priorities.

By embedding policy enforcement within automated workflows, operators ensure consistent and reliable service delivery across diverse applications and environments.
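A token bucket is one widely used building block for rate enforcement. The sketch below is a simplified version that takes explicit timestamps so its behavior is deterministic; the class name and the rate and burst values are assumptions. It only reports whether a packet conforms, whereas a true shaper would queue nonconforming packets instead of rejecting them.

```python
class TokenBucket:
    """Admit packets while tokens remain; tokens refill at a fixed rate."""

    def __init__(self, rate_bytes_per_s, burst_bytes):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = 0.0

    def allow(self, size_bytes, now):
        """Return True if a packet of this size conforms at time `now`."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False


# Hypothetical policy: 1000 bytes per second with a 1500-byte burst.
bucket = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
first = bucket.allow(1500, now=0.0)
second = bucket.allow(100, now=0.0)
third = bucket.allow(500, now=0.5)
```

The burst size controls how much traffic can pass back to back, while the rate sets the long-term average, so the two parameters can be tuned independently per traffic class.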

AI-Augmented Orchestration

AI-augmented orchestration enhances traditional frameworks by infusing predictive and prescriptive intelligence into decision-making processes. Machine learning models analyze historical and real-time data, identify optimization opportunities, and suggest actionable improvements. These AI capabilities accelerate deployment, enhance fault tolerance, and improve resource allocation efficiency.

Operators leverage AI augmentation to create networks that learn, adapt, and evolve autonomously, achieving operational excellence without reliance on exhaustive manual oversight.

Jitter Analysis and Mitigation

Jitter, the capricious variation in packet arrival times, is a subtle adversary in high-performance networks. Unlike latency, which manifests as a perceivable delay, jitter disrupts timing-sensitive applications such as VoIP, video conferencing, and financial transaction systems. Understanding the stochastic properties of jitter requires a combination of statistical modeling and real-time monitoring. Techniques such as buffer management, packet prioritization, and adaptive queuing enable administrators to smooth temporal disparities, ensuring consistent quality of service. Advanced practitioners employ jitter histograms and moving-average algorithms to forecast and preempt fluctuations, creating networks that operate with surgical precision.
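As a concrete instance of the moving-average approach, the sketch below implements the interarrival jitter estimator defined in RFC 3550 for RTP, which smooths the change in transit time between consecutive packets with a gain of 1/16. The function name and the timestamps are illustrative; times are in milliseconds.

```python
def interarrival_jitter(send_times, recv_times):
    """Running jitter estimate in the style of RFC 3550, section 6.4.1."""
    jitter = 0.0
    for i in range(1, len(send_times)):
        # Difference in transit time between packet i and packet i - 1.
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16
    return jitter


# Packets sent every 20 ms and received with a constant 5 ms delay.
steady = interarrival_jitter([0, 20, 40, 60], [5, 25, 45, 65])
# The second packet arrives 16 ms later than its spacing predicts.
bursty = interarrival_jitter([0, 20], [5, 41])
```

A constant delay, however large, produces zero jitter; only variation in delay moves the estimate, which is why jitter and latency need to be tracked separately.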

Granular Quality of Service Design

Quality of Service (QoS) is the linchpin of network optimization, encompassing a spectrum of techniques that prioritize traffic, manage congestion, and ensure equitable resource allocation. Modern QoS strategies employ intricate classification rules, mapping specific traffic types to distinct queues and service levels. By calibrating weight assignments and bandwidth reservations, administrators guarantee that latency-sensitive applications maintain integrity while bulk data transfers proceed without disruption. Understanding the interplay of DiffServ markings, traffic policing, and shaping mechanisms allows candidates to engineer networks that are both resilient and agile, reflecting the analytical rigor expected in 4A0-112 certification.
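The classification and weighting steps can be sketched as two lookups. The DSCP code points below (46 for Expedited Forwarding, 34 for AF41, 0 for default) are standard values, but the queue names and weights are assumptions chosen for illustration, not a recommended policy.

```python
# Standard DSCP code points mapped to hypothetical queue names.
DSCP_TO_QUEUE = {
    46: "voice",        # EF, Expedited Forwarding
    34: "video",        # AF41
    0: "best_effort",   # default
}

# Hypothetical relative weights for a weighted scheduler.
QUEUE_WEIGHTS = {"voice": 5, "video": 3, "best_effort": 2}


def classify(dscp):
    """Map a DSCP value to a queue; unknown markings fall back to best effort."""
    return DSCP_TO_QUEUE.get(dscp, "best_effort")


def bandwidth_shares(link_mbps):
    """Split link capacity across queues in proportion to their weights."""
    total = sum(QUEUE_WEIGHTS.values())
    return {queue: link_mbps * weight / total
            for queue, weight in QUEUE_WEIGHTS.items()}


shares = bandwidth_shares(100)
```

Under congestion, each queue is guaranteed roughly its share; when a queue is idle, a work-conserving scheduler would let the others borrow its capacity.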

Intelligent Congestion Management

Congestion is an inevitable phenomenon in high-density networks, but its impact can be mitigated through intelligent algorithms and adaptive policies. Techniques such as random early detection, active queue management, and congestion avoidance protocols enable routers to proactively manage buffer utilization. Sophisticated approaches integrate traffic prediction models, allowing for dynamic bandwidth allocation that anticipates surges rather than reacting to them. This anticipatory management transforms networks from reactive entities into self-regulating systems, elevating both performance and reliability.
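The core of random early detection can be sketched as a drop probability that rises linearly between two thresholds on the averaged queue length. This is a simplified version: it omits the packet-count adjustment used in the original RED algorithm, and the thresholds and weight shown are hypothetical.

```python
def update_average(avg, sample, weight=0.002):
    """Exponentially weighted moving average of the queue length."""
    return (1 - weight) * avg + weight * sample


def red_drop_probability(avg_queue, min_th, max_th, max_p):
    """Probability of dropping an arriving packet for a given average queue."""
    if avg_queue < min_th:
        return 0.0  # queue is short: never drop
    if avg_queue >= max_th:
        return 1.0  # queue is long: always drop
    return max_p * (avg_queue - min_th) / (max_th - min_th)


# Hypothetical thresholds in packets.
midpoint = red_drop_probability(avg_queue=40, min_th=20, max_th=60, max_p=0.1)
```

Dropping a small fraction of packets early signals TCP senders to slow down before the buffer overflows, which avoids the synchronized losses that tail drop tends to cause.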

Multilayer Troubleshooting Methodologies

Advanced network troubleshooting transcends surface-level diagnostics, employing multilayer methodologies that span physical, data link, network, and application layers. At the physical layer, meticulous inspection of cabling, signal integrity, and electromagnetic interference is essential. Data link analysis encompasses VLAN integrity, frame errors, and switch port behaviors. Network layer evaluation includes routing table verification, protocol compliance, and path stability, while application-layer assessment scrutinizes end-to-end service availability and packet fidelity. This comprehensive, multilayered approach empowers administrators to isolate anomalies with unparalleled precision, aligning with the expertise required for 4A0-112 success.

Advanced Flow Analysis

Flow analysis, the systematic examination of data movement patterns, reveals nuanced insights into network efficiency and vulnerability. By aggregating flow statistics from NetFlow or sFlow collectors, administrators can identify excessive chatter, asymmetric routing, and potential bottlenecks. Temporal correlation of flows uncovers recurring patterns that may indicate misconfiguration, inefficient application behavior, or security risks. Mastery of flow analysis enables proactive intervention, ensuring optimal utilization of resources while preserving latency-sensitive performance metrics.
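A minimal sketch of flow aggregation follows. It assumes flow records have already been exported and parsed into `(source, destination, bytes)` tuples; the record layout and the `top_talkers` name are hypothetical and do not reflect any collector's actual API.

```python
from collections import Counter


def top_talkers(flows, n):
    """Total bytes per source address, highest first."""
    totals = Counter()
    for src, _dst, nbytes in flows:
        totals[src] += nbytes
    return totals.most_common(n)


# Hypothetical parsed flow records.
flows = [
    ("10.0.0.1", "10.0.0.9", 500),
    ("10.0.0.2", "10.0.0.9", 200),
    ("10.0.0.1", "10.0.0.8", 700),
]
heaviest = top_talkers(flows, 1)
```

The same aggregation keyed on `(source, destination)` pairs, compared in both directions, is one way to spot asymmetric traffic between two hosts.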

Proactive Fault Isolation

Proactive fault isolation is a paradigm shift from reactive troubleshooting to anticipatory problem-solving. Utilizing historical logs, event correlation, and anomaly detection, network professionals can identify latent issues before they manifest as critical outages. Predictive fault models leverage machine learning algorithms to infer the likelihood of device failure, interface saturation, or routing instability. This foresight enhances uptime, reduces operational overhead, and demonstrates a level of strategic network stewardship aligned with 4A0-112 competencies.

Temporal Traffic Profiling

Understanding network behavior requires more than instantaneous snapshots; it necessitates temporal traffic profiling. By analyzing traffic volumes, protocol usage, and session dynamics over extended periods, administrators can identify cyclical congestion patterns, peak usage intervals, and anomalous spikes. Temporal profiling informs capacity planning, enabling network architects to scale infrastructure judiciously and anticipate resource requirements with scientific accuracy. This analytical discipline underscores the importance of data-driven decision-making in network optimization.
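To illustrate, the sketch below groups throughput samples by hour of day and reports the busiest hour. The `(hour, mbps)` sample layout and the function names are assumptions; real profiling would also separate weekdays from weekends and track percentiles rather than means.

```python
from collections import defaultdict


def hourly_profile(samples):
    """Average throughput per hour of day from (hour, mbps) samples."""
    buckets = defaultdict(list)
    for hour, mbps in samples:
        buckets[hour].append(mbps)
    return {hour: sum(values) / len(values)
            for hour, values in sorted(buckets.items())}


def peak_hour(profile):
    """Hour of day with the highest average throughput."""
    return max(profile, key=profile.get)


# Hypothetical samples collected over several days.
samples = [(9, 100), (9, 300), (14, 500), (14, 700), (2, 50)]
profile = hourly_profile(samples)
busiest = peak_hour(profile)
```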

Adaptive Bandwidth Allocation

Adaptive bandwidth allocation involves dynamically adjusting link capacities based on real-time demand and application criticality. Unlike static provisioning, which risks underutilization or saturation, adaptive strategies harness metrics such as throughput, latency, and packet loss to reassign resources fluidly. Techniques may include link aggregation, dynamic routing policies, or software-defined network interventions. Administrators who master adaptive allocation cultivate networks that respond intelligently to varying workloads, embodying the proactive agility required for 4A0-112 certification.
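
The idea of reassigning capacity by demand and criticality can be illustrated with a weighted max-min fair allocation. This is a sketch of one well-known sharing policy, not a description of how any particular router or SDN controller behaves; the traffic classes, weights, and the `allocate` function are assumptions made for the example.

```python
def allocate(capacity, demands, weights):
    """Weighted max-min fair split of capacity among traffic classes.

    demands and weights are dicts keyed by class name. A class never
    receives more than it demands; leftover capacity is redistributed
    to the remaining classes in proportion to their weights.
    """
    alloc = {name: 0.0 for name in demands}
    active = {name for name, demand in demands.items() if demand > 0}
    remaining = float(capacity)
    while active and remaining > 1e-9:
        total_weight = sum(weights[name] for name in active)
        share = {name: remaining * weights[name] / total_weight for name in active}
        satisfied = {name for name in active if share[name] >= demands[name]}
        if not satisfied:
            # Nobody can be fully served: hand out the weighted shares and stop.
            for name in active:
                alloc[name] = share[name]
            break
        for name in satisfied:
            alloc[name] = float(demands[name])
            remaining -= demands[name]
        active -= satisfied
    return alloc


if __name__ == "__main__":
    print(allocate(
        100,
        {"voice": 10, "video": 60, "bulk": 100},
        {"voice": 3, "video": 2, "bulk": 1},
    ))
```

In this example voice and video are fully served and bulk traffic receives what is left, so re-running the function as measured demand changes yields the dynamic behavior the paragraph describes.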

Anomaly Detection and Behavioral Analytics

Network anomalies often precede catastrophic failures or security incidents, making behavioral analytics a cornerstone of advanced troubleshooting. By defining baselines for normal network behavior and continuously monitoring deviations, administrators can pinpoint suspicious activity, misconfigurations, or impending performance degradation. Machine learning-enhanced systems analyze flow patterns, protocol deviations, and session anomalies to provide early warning indicators. This fusion of analytics and proactive intervention elevates network reliability and demonstrates a sophisticated understanding of operational resilience.
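
Baseline-and-deviation monitoring can be sketched with a simple z-score test. The threshold of three standard deviations and the function name are assumptions for illustration; production systems typically use richer models and seasonal baselines.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observations, threshold=3.0):
    """Return (index, value) for observations far from the baseline mean.

    baseline needs at least two samples. A value is flagged when it lies
    more than `threshold` sample standard deviations from the mean.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return [(i, x) for i, x in enumerate(observations) if x != mu]
    return [
        (i, x)
        for i, x in enumerate(observations)
        if abs(x - mu) / sigma > threshold
    ]


if __name__ == "__main__":
    baseline_mbps = [100, 102, 98, 101, 99]
    print(find_anomalies(baseline_mbps, [100, 103, 120]))
```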

Protocol Convergence Optimization

Protocol convergence, the process by which routing protocols synchronize their knowledge across a network, has a profound impact on performance and stability. Delayed convergence can prolong packet loss, transient routing loops, and latency spikes. Tuning hello and dead timers and optimizing link-state update propagation shortens failure detection and resynchronization, although overly aggressive timers can trigger false failure detection and cause instability of their own. Experts leverage simulation tools and emulated environments to test convergence strategies under varied conditions, verifying that networks remain resilient to topological changes or hardware failures.

Hierarchical Network Segmentation

Segmentation, both physical and logical, is a powerful mechanism to improve network manageability, performance, and fault containment. Hierarchical segmentation—using a combination of core, distribution, and access layers—reduces the propagation of broadcast traffic, isolates congestion, and enhances security. By implementing virtual routing and forwarding, VLAN segmentation, and micro-segmentation for sensitive workloads, administrators can construct networks that are modular, scalable, and resistant to systemic failures. This strategic structuring reflects the advanced design principles expected in 4A0-112 mastery.

Microburst Detection and Remediation

Microbursts, transient but intense surges in packet transmission, can overwhelm buffers and degrade network performance even when average utilization looks nominal. Detecting microbursts requires high-resolution monitoring and specialized metrics that capture sub-second traffic variations, because counters averaged over seconds or minutes hide them. Remediation strategies may include packet pacing, buffer tuning, and additional link capacity, so that bursts are absorbed without impacting latency-sensitive flows. Professionals who internalize microburst dynamics achieve a level of precision that separates competent operators from true optimization experts.
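
To make the gap between average and sub-second utilization concrete, the sketch below buckets packets into 1 ms windows and flags any window that exceeds a fraction of line rate. The input format (integer microsecond timestamps and packet sizes in bytes), the 1 Gbps link speed, and the 90% threshold are all assumptions chosen for the example.

```python
from collections import defaultdict


def find_microbursts(packets, window_us=1000, link_bps=1_000_000_000, fraction=0.9):
    """Return indices of windows whose traffic exceeds fraction of line rate.

    packets is an iterable of (timestamp_us, size_bytes) with integer
    microsecond timestamps.
    """
    bits_per_window = defaultdict(int)
    for ts_us, size_bytes in packets:
        bits_per_window[ts_us // window_us] += size_bytes * 8
    # Bits the link can carry in one window, scaled by the alert fraction.
    limit_bits = link_bps * window_us / 1_000_000 * fraction
    return sorted(w for w, bits in bits_per_window.items() if bits > limit_bits)


if __name__ == "__main__":
    # 80 full-size packets inside the first millisecond, then light traffic.
    burst = [(i * 10, 1500) for i in range(80)]
    quiet = [(5000 + i * 100, 1500) for i in range(10)]
    print(find_microbursts(burst + quiet))
```

Over the whole 6 ms sample the link averages under 20% utilization, yet the first millisecond runs at 96% of line rate, which is exactly the pattern that coarse counters miss.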

Cross-Layer Performance Correlation

True network optimization involves correlating performance metrics across layers to derive holistic insights. For instance, packet loss observed at the transport layer may originate from buffer overflows at the data link layer or congestion at the network layer. Cross-layer analysis integrates these observations, enabling administrators to target root causes rather than superficial symptoms. This comprehensive perspective enhances decision-making, reduces troubleshooting cycles, and ensures sustainable performance improvements aligned with 4A0-112 expertise.

Emergent Automation Strategies

The evolution of network automation has introduced emergent strategies that combine policy-driven orchestration with intelligent remediation. Administrators leverage software-defined networking (SDN) controllers, automation scripts, and self-healing mechanisms to streamline repetitive tasks and respond dynamically to environmental changes. These systems analyze traffic patterns, predict failures, and implement corrective actions autonomously, reducing human error and operational latency. Mastery of these emergent strategies equips candidates to manage modern networks with exceptional efficiency.

Latency-Sensitive Application Optimization

Certain applications, such as high-frequency trading platforms, cloud gaming, and VoIP systems, exhibit extreme sensitivity to latency and jitter. Optimizing networks for these workloads requires precise scheduling, traffic prioritization, and protocol tuning. Techniques may include edge computing, application-aware routing, and careful tuning of packet coalescing, which trades added delay for throughput and is often reduced or disabled on latency-critical paths. Professionals adept in these optimizations ensure critical services function seamlessly under diverse network conditions, demonstrating nuanced expertise relevant to 4A0-112 certification.

Redundant Path Engineering

Redundant path engineering is more than duplicating links; it requires strategic analysis of path diversity, load distribution, and failure probability. By designing multiple routes that share no links, nodes, or physical conduits, administrators mitigate single points of failure while preserving efficiency. Real-time path selection algorithms, coupled with intelligent failover protocols, help maintain connectivity during hardware outages or congestion events. This engineering sophistication enhances both resilience and performance, hallmarks of advanced network stewardship.
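
The effect of path diversity on failure probability can be estimated with the standard series and parallel availability formulas. The sketch assumes component failures are independent, which holds only when paths share no common element; the availability figures are made up for illustration.

```python
from math import prod


def series(availabilities):
    """Availability of a path whose components must all be up."""
    return prod(availabilities)


def parallel(availabilities):
    """Availability of redundant paths where any one being up is enough.

    Assumes the paths fail independently of each other.
    """
    return 1 - prod(1 - a for a in availabilities)


if __name__ == "__main__":
    # Two links in series per path, each 99% available.
    single_path = series([0.99, 0.99])
    print(f"one path:  {single_path:.4f}")
    print(f"two paths: {parallel([single_path, single_path]):.6f}")
```

If both paths ride the same fiber duct, the independence assumption fails and the real availability is closer to that of a single path, which is why non-overlapping routes matter more than the raw number of links.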

Adaptive Zero-Trust Architectures

Zero-trust architecture has emerged as a cornerstone in resilient network design, emphasizing the principle of never assuming trust. Every access request, internal or external, is rigorously evaluated against dynamic policy engines. Adaptive authentication, contextual access evaluation, and continuous risk scoring converge to form a robust security lattice.

In this paradigm, micro-segmentation extends beyond traditional boundaries, creating dynamic trust zones that recalibrate based on behavioral cues. The network continuously interrogates device posture, user intent, and transaction anomalies to grant, restrict, or revoke access. This fluid model limits the impact of compromised credentials and minimizes lateral threat propagation.

Integration of AI-driven decision-making enhances zero-trust effectiveness. Algorithms evaluate historical access patterns, environmental factors, and threat intelligence feeds to anticipate suspicious behaviors. This anticipatory mechanism allows for proactive risk mitigation, elevating organizational resilience and safeguarding sensitive digital assets.
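
A heavily simplified sketch of continuous risk scoring follows. Every signal, point value, and threshold here is a hypothetical choice made for illustration; a real policy engine would draw on far more context and would be tuned to the organization's risk appetite.

```python
from dataclasses import dataclass


@dataclass
class AccessContext:
    device_compliant: bool   # device posture check passed
    mfa_passed: bool         # strong authentication completed
    new_location: bool       # request comes from an unfamiliar location
    anomaly: float           # behavioral anomaly, 0.0 (normal) to 1.0


def risk_score(ctx):
    """Return an integer risk score from 0 (low) to 100 (high)."""
    score = 0
    if not ctx.device_compliant:
        score += 40
    if not ctx.mfa_passed:
        score += 30
    if ctx.new_location:
        score += 10
    score += round(20 * ctx.anomaly)
    return min(score, 100)


def decide(ctx, step_up_at=30, deny_at=60):
    """Map the score to allow, step-up authentication, or deny."""
    score = risk_score(ctx)
    if score >= deny_at:
        return "deny"
    if score >= step_up_at:
        return "step_up"
    return "allow"


if __name__ == "__main__":
    print(decide(AccessContext(True, True, False, 0.1)))
    print(decide(AccessContext(True, False, False, 0.5)))
    print(decide(AccessContext(False, False, False, 0.0)))
```

Because the decision is recomputed for every request, a session that starts as "allow" can be pushed to "step_up" or "deny" the moment device posture or behavior changes.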

Quantum-Resistant Cryptography

Emerging quantum computational capabilities necessitate the evolution of cryptographic protocols. Widely deployed public-key algorithms such as RSA and elliptic-curve cryptography, which resist known classical attacks, could be broken by a sufficiently large quantum computer. Nokia networks are increasingly integrating quantum-resistant algorithms to future-proof communication channels.

Lattice-based encryption, hash-based signatures, and multivariate polynomial systems offer formidable defenses against quantum adversaries. Implementation requires meticulous attention to key management, computational overhead, and interoperability. By embedding these algorithms within network devices and software-defined infrastructure, organizations achieve a balance between cutting-edge security and operational efficiency.
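
To show why hash-based signatures depend only on the strength of a hash function, here is a sketch of a Lamport one-time signature built on SHA-256. It is an educational example, not what any vendor ships: the function names are hypothetical, each key pair may sign exactly one message, and standardized schemes use more compact constructions with careful key and state management.

```python
import hashlib
import secrets


def keygen():
    """Create a one-time key pair: 256 pairs of secrets and their hashes."""
    sk = [[secrets.token_bytes(32) for _ in range(2)] for _ in range(256)]
    pk = [[hashlib.sha256(s).digest() for s in pair] for pair in sk]
    return sk, pk


def _digest_bits(message):
    """Return the 256 bits of SHA-256(message), most significant bit first."""
    digest = hashlib.sha256(message).digest()
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message):
    """Reveal one secret per digest bit. Never reuse sk for a second message."""
    return [sk[i][bit] for i, bit in enumerate(_digest_bits(message))]


def verify(pk, message, signature):
    """Check that each revealed secret hashes to the matching public value."""
    bits = _digest_bits(message)
    if len(signature) != len(bits):
        return False
    return all(
        hashlib.sha256(secret).digest() == pk[i][bit]
        for i, (bit, secret) in enumerate(zip(bits, signature))
    )


if __name__ == "__main__":
    sk, pk = keygen()
    sig = sign(sk, b"config v1")
    print(verify(pk, b"config v1", sig), verify(pk, b"config v2", sig))
```

The signature here is 8 KiB and the public key 16 KiB, a small-scale view of the key-management and overhead trade-offs the paragraph mentions.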

Autonomous Threat Mitigation

Modern networks demand autonomous systems capable of detecting, analyzing, and neutralizing threats without human intervention. Automated orchestration engines leverage real-time telemetry, behavioral analytics, and threat intelligence to enact precise countermeasures.

These systems identify subtle anomalies, such as microsecond fluctuations in traffic flows or unexpected protocol deviations, that may signal reconnaissance or infiltration attempts. Automated containment strategies, including dynamic routing adjustments, virtual segmentation, and instant policy enforcement, neutralize threats before they develop into disruptive incidents.

Machine learning models continuously refine detection rules by learning from historical events, creating an adaptive defense fabric that evolves with the threat landscape. The outcome is a self-healing network capable of mitigating emergent risks with minimal operational disruption.

Threat Hunting and Proactive Forensics

Threat hunting represents a paradigm shift from passive monitoring to active investigation. Skilled analysts probe network environments to uncover latent threats, following subtle indicators of compromise that evade automated systems.

Behavioral baselining, anomaly detection, and advanced query frameworks enable hunters to identify hidden adversarial activities. These operations often uncover dormant malware, stealthy lateral movements, or misconfigurations that could evolve into severe breaches.

Proactive forensics complements threat hunting by enabling detailed reconstruction of attack vectors. Packet-level captures, log correlations, and memory forensics provide a comprehensive view of the adversary’s methodology. This intelligence informs adaptive security policies, fortifies defenses, and enhances organizational readiness against future incursions.

Supply Chain Risk Management

Network security extends beyond internal infrastructures to encompass the supply chain ecosystem. Dependencies on third-party vendors, cloud service providers, and hardware manufacturers introduce vectors for potential compromise.

Rigorous vetting of partners, continuous monitoring of software and hardware integrity, and contractual security obligations mitigate these risks. Threat intelligence sharing among trusted industry consortia enhances visibility into emergent vulnerabilities, ensuring that supply chain disruptions do not compromise core operations.

Auditing and verification protocols, including code reviews, firmware validation, and penetration testing, provide additional assurance. By integrating supply chain risk management into the broader security framework, organizations cultivate holistic resilience, safeguarding operations from multifaceted threats.

Behavioral Biometrics and Identity Assurance

Traditional authentication mechanisms often falter against sophisticated social engineering or credential theft. Behavioral biometrics, which analyzes patterns such as typing cadence, mouse movement, and device interaction, introduces an additional layer of identity assurance.

Continuous authentication validates user behavior throughout a session, detecting deviations that may indicate compromise. This approach minimizes reliance on static credentials, reducing the attack surface while enhancing user experience.

Combined with contextual risk analysis, behavioral biometrics ensures that access decisions are both dynamic and adaptive, aligning security enforcement with real-world usage patterns. Integration into network access protocols strengthens defense mechanisms without imposing undue friction on legitimate users.

Advanced Endpoint Detection and Response

Endpoints, as the interface between users and networks, require sophisticated monitoring. Advanced Endpoint Detection and Response (EDR) solutions collect high-fidelity telemetry from endpoints, including process execution, file changes, registry modifications, and network activity.

Machine learning algorithms identify deviations from normative behavior, flagging suspicious activity in near real-time. Automated response mechanisms can isolate compromised devices, terminate malicious processes, or enforce quarantine policies to prevent escalation.

EDR systems also support historical analysis, enabling organizations to reconstruct attack chains and identify systemic vulnerabilities. This continuous improvement loop fosters resilient endpoints that not only defend against immediate threats but also contribute to long-term network security intelligence.

Cyber Deception and Honeypot Strategies

Proactive defense extends into deception strategies designed to mislead adversaries. Honeypots, honeytokens, and decoy networks divert attackers from critical assets while simultaneously gathering intelligence on attack methodologies.

By simulating vulnerable environments, cyber deception mechanisms induce adversaries to reveal tactics, techniques, and procedures (TTPs). This intelligence informs security policy adjustments, enhances incident response, and strengthens overall defensive postures.

The implementation of deception requires careful orchestration to maintain realism without compromising operational continuity. When executed effectively, these strategies provide a dual benefit: delaying malicious actors and equipping administrators with actionable insights for strategic hardening.
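
A honeytoken can be as simple as a decoy account name that no legitimate user or service ever uses, so any authentication attempt with it is worth an alert. The sketch below assumes a hypothetical event format (dicts with `user` and `src` keys) and illustrative function names.

```python
import secrets


def make_honeytoken(prefix="svc-backup"):
    """Generate a plausible-looking decoy account name."""
    return f"{prefix}-{secrets.token_hex(4)}"


def honeytoken_hits(auth_events, honeytokens):
    """Return every authentication event that used a decoy account."""
    return [event for event in auth_events if event["user"] in honeytokens]


if __name__ == "__main__":
    decoy = make_honeytoken()
    events = [
        {"user": "alice", "src": "10.0.0.5"},
        {"user": decoy, "src": "10.0.0.9"},
    ]
    for hit in honeytoken_hits(events, {decoy}):
        print(f"ALERT: decoy account {hit['user']} used from {hit['src']}")
```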

Cloud Security Orchestration

The proliferation of cloud services necessitates a reevaluation of security orchestration. Hybrid and multi-cloud environments introduce complex visibility challenges, requiring dynamic policy enforcement, workload segmentation, and continuous compliance monitoring.

Automation plays a pivotal role in cloud security orchestration. Continuous configuration checks, API-driven policy application, and real-time threat analytics ensure that workloads remain isolated, encrypted, and resilient against both internal misconfigurations and external threats.

Integration with identity management systems, access control policies, and encryption frameworks ensures that data integrity and confidentiality are maintained across diverse cloud platforms. Organizations achieve a cohesive security posture capable of adapting to fluid operational demands without compromising protection.

Network Resilience and Redundancy

Robust security architecture is inseparable from network resilience. Redundant pathways, failover mechanisms, and dynamic load balancing ensure that operations continue uninterrupted, even under stress or attack.