Product Screenshots

Frequently Asked Questions

How does your testing engine work?

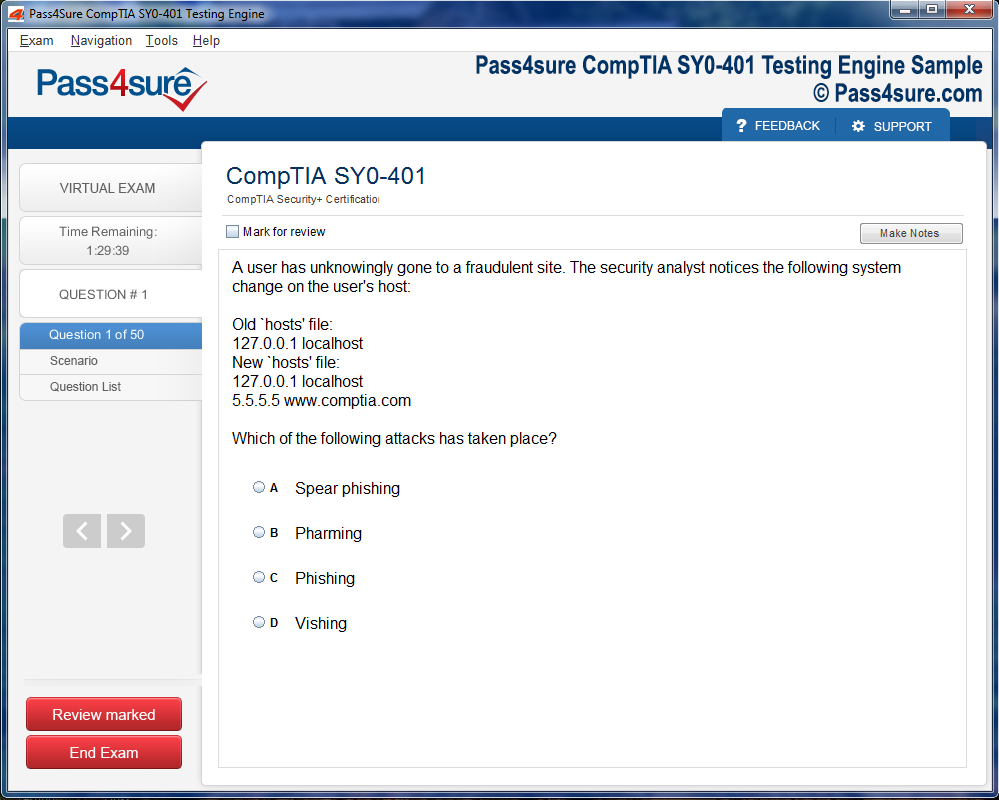

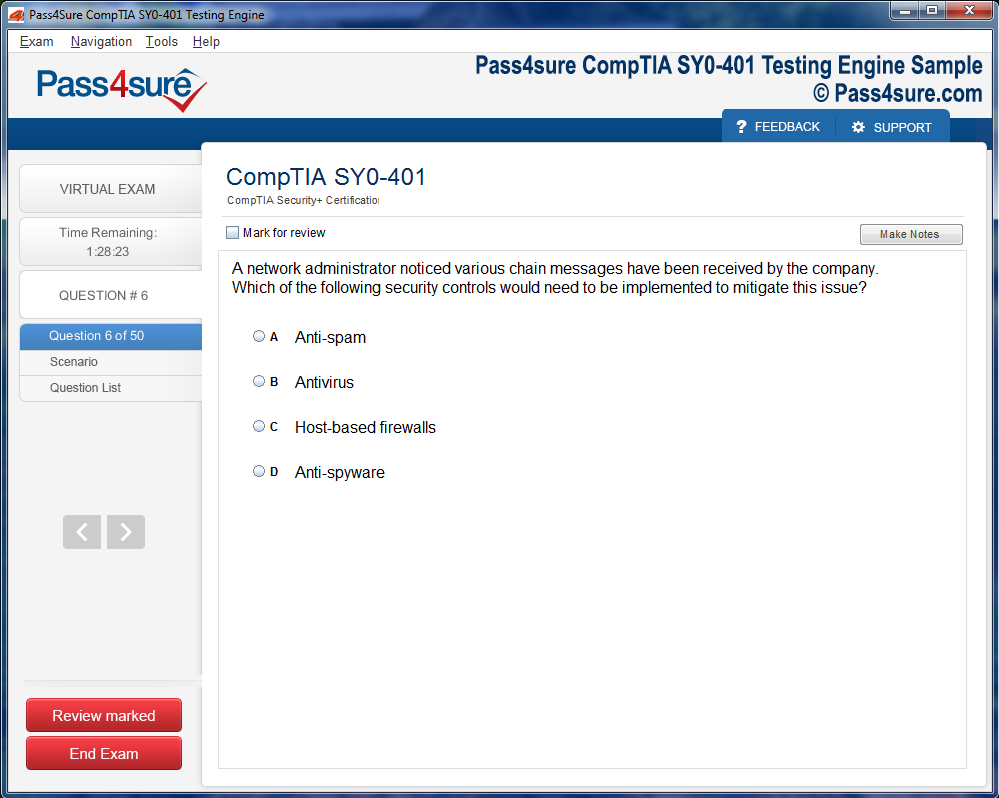

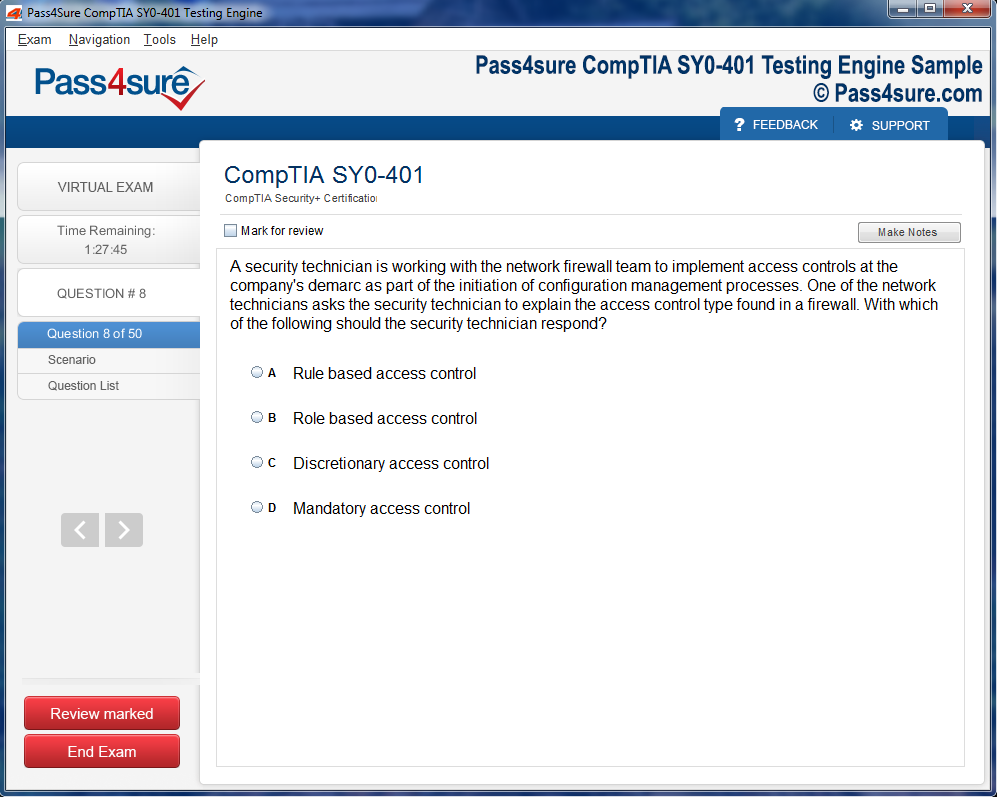

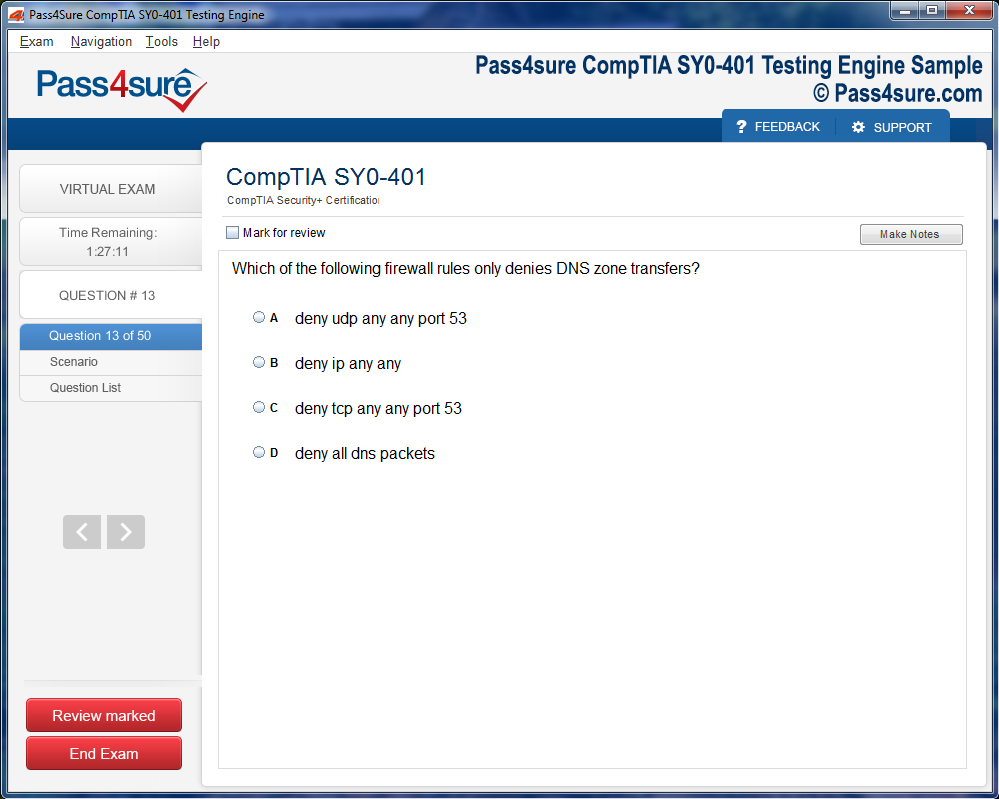

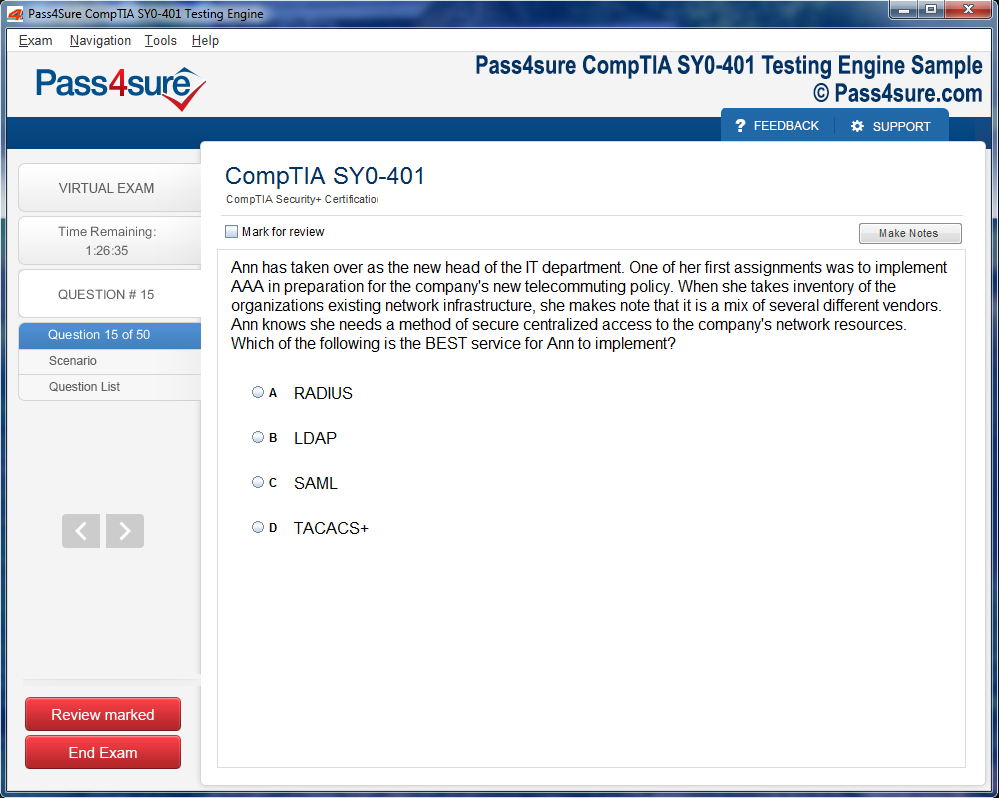

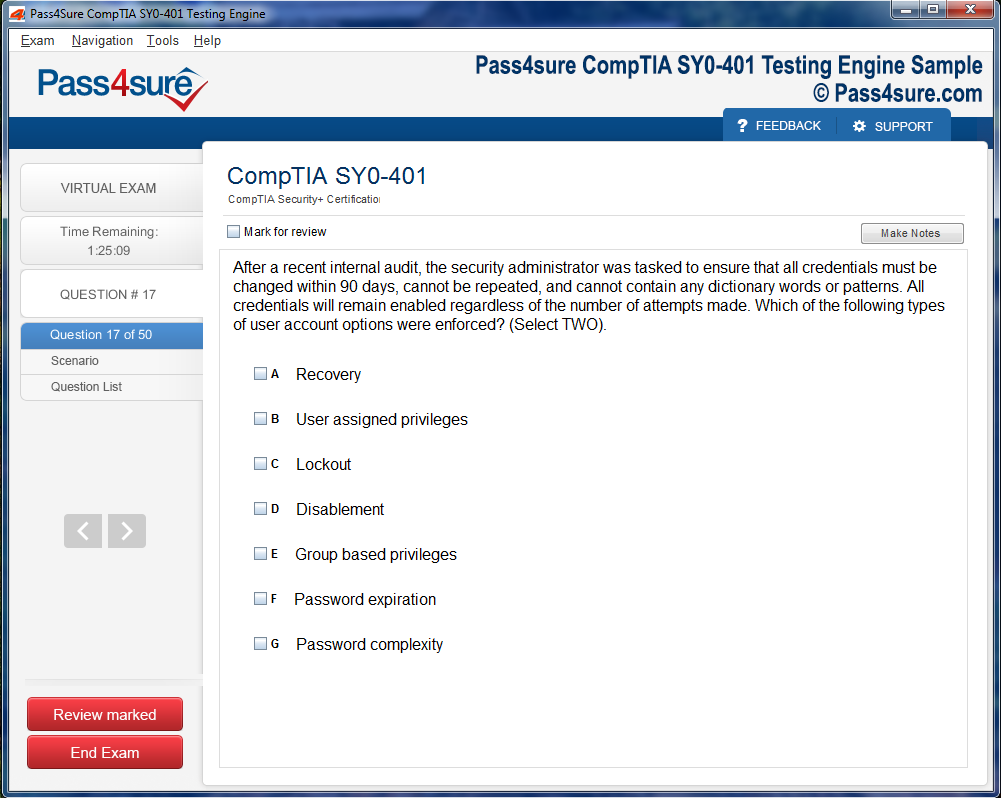

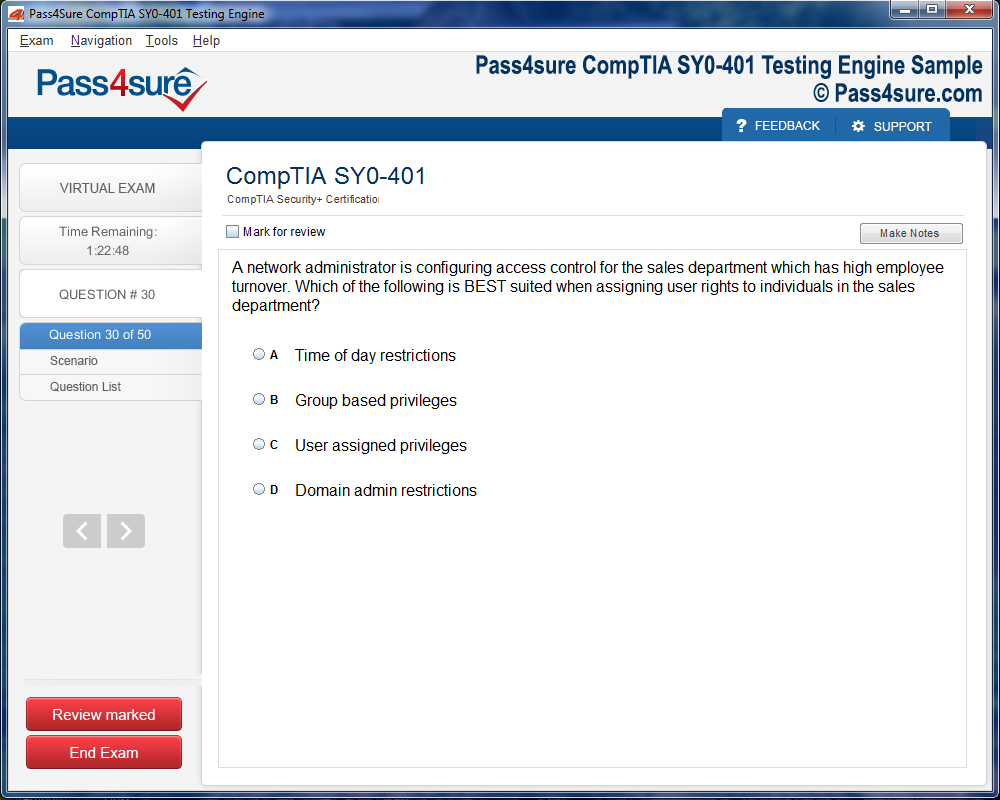

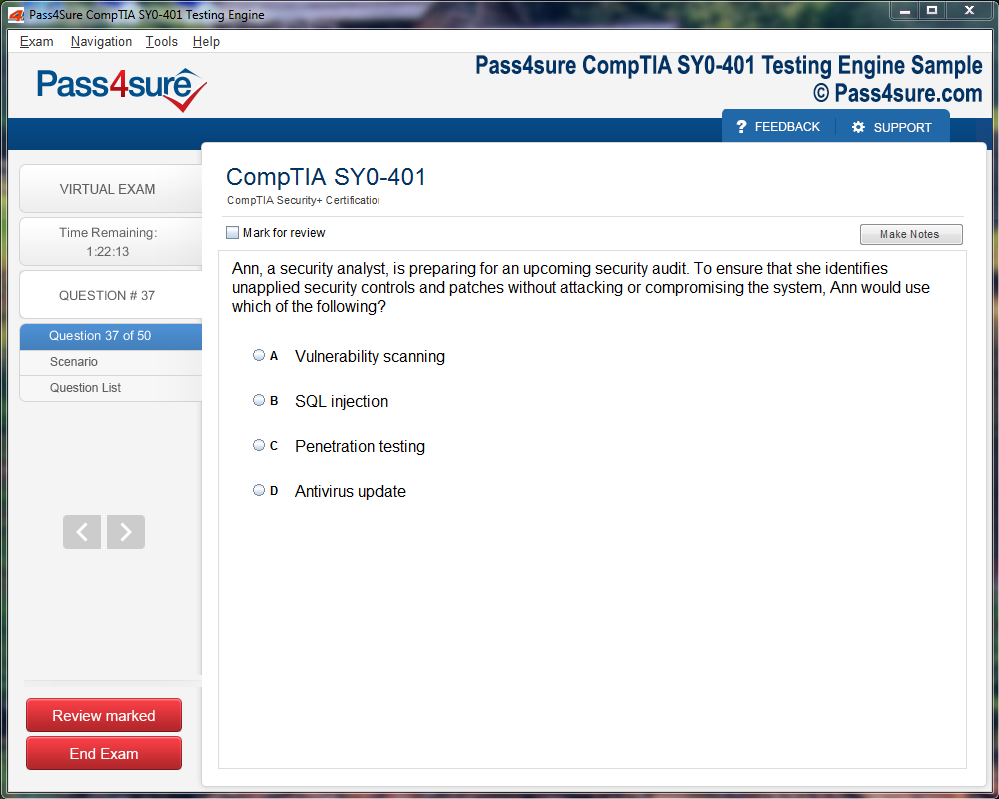

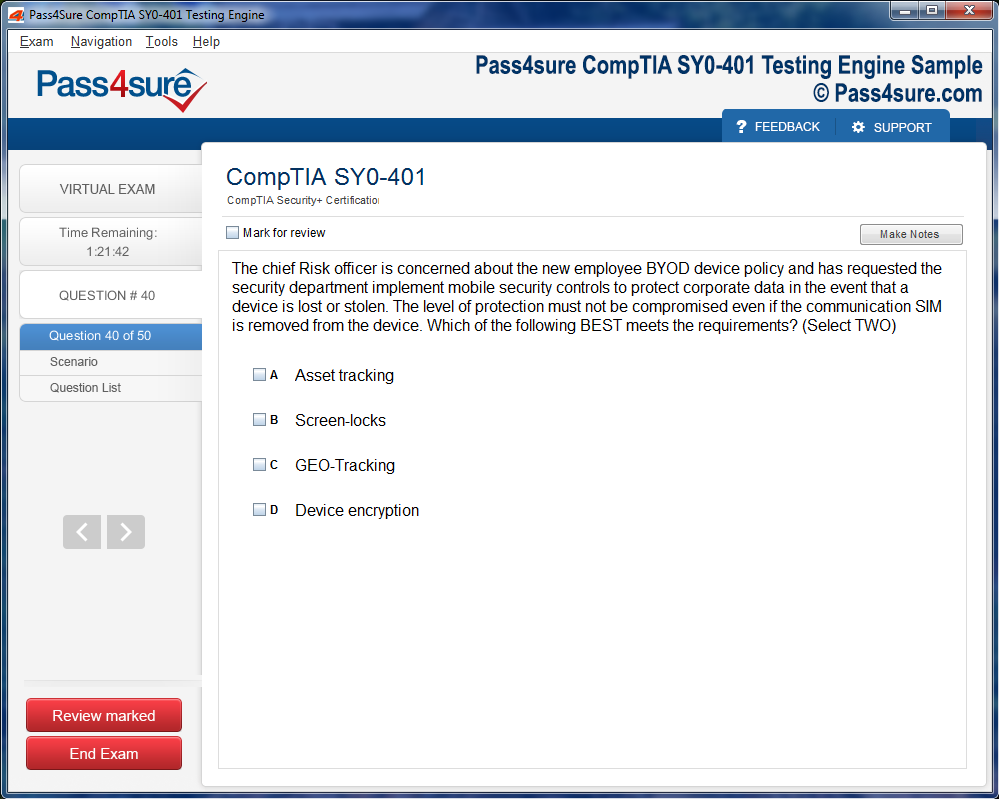

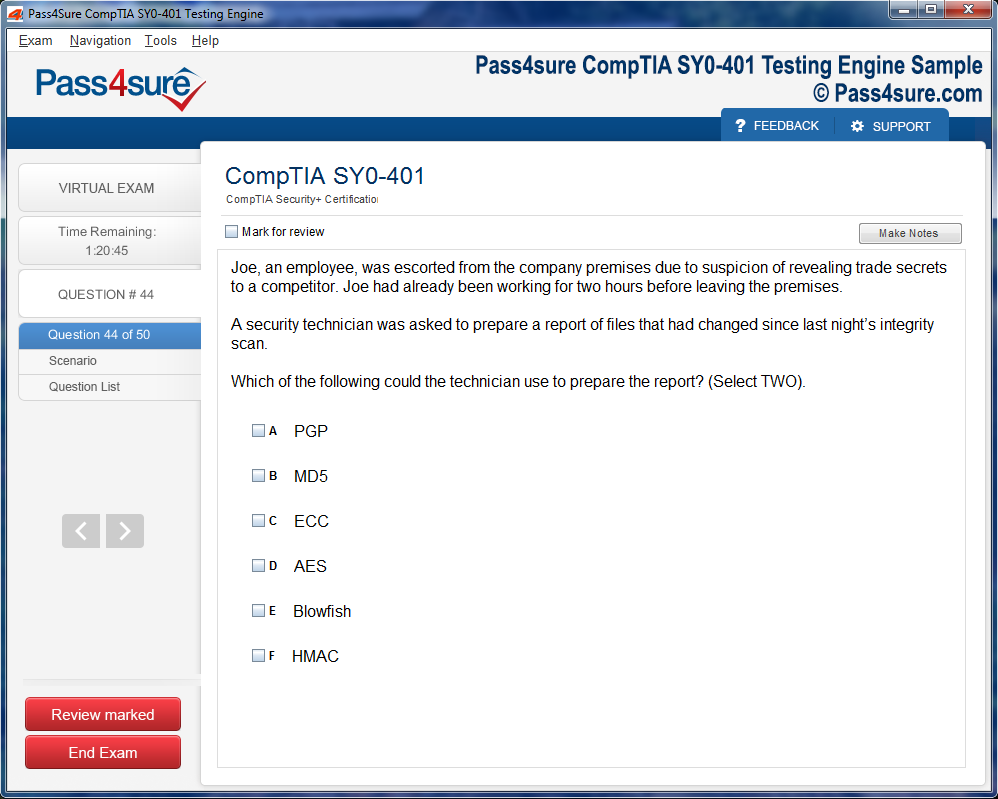

Once downloaded and installed on your PC, the testing engine lets you practise test questions and review your questions and answers in two modes, 'Practice Exam' and 'Virtual Exam'. Virtual Exam: test yourself with exam questions under a time limit, as if you were taking the exam in a Prometric or VUE testing centre. Practice Exam: review exam questions one by one and see the correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products have a validity of 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions or updates and changes by our editing team, will be automatically downloaded to your computer so that you get the latest exam prep materials during those 90 days.

Can I renew my product when it has expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes that the different vendors make to the actual question pool. As soon as we learn of a change in the exam question pool, we do our best to update the products as quickly as possible.

How many computers can I download Pass4sure software on?

You can download the Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB of available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine is supported on Windows. Android and iOS software is currently under development.

Complete Nokia 4A0-M05 Certification Guide: Cloud Packet Core Essentials

At the nucleus of contemporary mobile networks lies the cloud packet core, a modular, virtualized ecosystem orchestrated to handle data flow, policy enforcement, and mobility management with unprecedented flexibility. Unlike monolithic legacy cores, cloud packet cores deconstruct traditional network functions into discrete, virtualized nodes, each with clearly delineated responsibilities. Control plane nodes orchestrate signaling, authentication, and session management, while user plane nodes carry subscriber traffic with agility and minimal latency. This architectural demarcation enables independent scaling, high fault tolerance, and dynamic resource optimization. Aspirants for the Nokia 4A0-M05 exam must internalize not only the constituent components but also the symbiotic interactions that sustain seamless operation under fluctuating network conditions.

Virtualized Network Functions and Orchestration Dynamics

The transformation of physical network functions into virtualized instances is the cornerstone of cloud packet core efficacy. Virtualization abstracts service logic from hardware, enabling elasticity and rapid deployment. Nodes may be instantiated across distributed data centers, allowing operators to tailor resources to real-time demand. Orchestration coordinates this ecosystem, governing deployment, scaling, service chaining, and fault mitigation. Understanding orchestration requires recognizing its several roles: it aligns virtualized resources with traffic patterns, enforces policy adherence, and anticipates potential disruptions. For exam candidates, mastering orchestration principles is crucial, as scenario-based questions frequently test operational decision-making under simulated network stress.

Subscriber Lifecycle and Context Management

Subscriber management within a cloud packet core is a multifaceted undertaking, encompassing registration, authentication, mobility handling, and session termination. Each subscriber session encapsulates a constellation of data, from policy rules to real-time context, requiring precise tracking to ensure uninterrupted service. Control plane nodes maintain session integrity, while user plane nodes dynamically follow data flows. The 4A0-M05 exam emphasizes the candidate’s ability to navigate this lifecycle, particularly in scenarios involving handovers, network congestion, or partial node failures. Conceptual fluency with subscriber state orchestration ensures resilience, minimizes service disruption, and optimizes resource allocation across virtualized nodes.
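One way to picture the lifecycle described above is as a small state machine. The sketch below is illustrative only: the state names, the event names, and the `next_state` and `run` helpers are assumptions made for this example, not terms taken from Nokia documentation or the exam blueprint.

```python
from enum import Enum


class SessionState(Enum):
    IDLE = "idle"
    REGISTERED = "registered"
    AUTHENTICATED = "authenticated"
    ACTIVE = "active"
    TERMINATED = "terminated"


# Allowed (state, event) -> next state pairs. Hypothetical and simplified.
TRANSITIONS = {
    (SessionState.IDLE, "register"): SessionState.REGISTERED,
    (SessionState.REGISTERED, "authenticate"): SessionState.AUTHENTICATED,
    (SessionState.AUTHENTICATED, "establish_session"): SessionState.ACTIVE,
    # A handover moves the context between nodes but keeps the session up.
    (SessionState.ACTIVE, "handover"): SessionState.ACTIVE,
    (SessionState.ACTIVE, "terminate"): SessionState.TERMINATED,
}


def next_state(state, event):
    """Return the state reached from `state` on `event`, or raise ValueError."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(
            f"event {event!r} is not valid in state {state.name}"
        ) from None


def run(events, state=SessionState.IDLE):
    """Apply a sequence of events in order and return the final state."""
    for event in events:
        state = next_state(state, event)
    return state
```

Calling `run(["register", "authenticate", "establish_session", "handover", "terminate"])` walks a subscriber through the full lifecycle, while an out-of-order event such as a handover before registration raises `ValueError`.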

Mobility and Seamless Handover Strategies

In a cloud packet core, mobility is more than geographic movement; it is a complex choreography of signaling, routing, and session persistence. As subscribers traverse different radio access nodes, the packet core must execute seamless handovers, ensuring minimal latency and uninterrupted service. Control plane nodes propagate session context, while user plane nodes reroute data dynamically. Candidates must comprehend the interdependent mechanisms that facilitate this dance, including context caching, predictive routing, and preemptive resource allocation. The 4A0-M05 exam often presents mobility anomalies, challenging aspirants to trace signaling pathways, diagnose disruptions, and implement corrective orchestration strategies.

Policy Frameworks and QoS Enforcement

Policy enforcement in cloud packet cores transcends static rulesets; it embodies a dynamic, context-aware framework that shapes user experience, traffic prioritization, and resource utilization. Enforcement points, embedded within virtualized nodes, enable operators to apply granular, subscriber-specific policies, responsive to temporal, locational, or behavioral stimuli. Understanding the hierarchy of Quality of Service parameters, traffic shaping mechanisms, and policy chaining is essential for candidates. Exam scenarios may probe the candidate’s proficiency in resolving policy conflicts, optimizing throughput, or adapting enforcement mechanisms to emergent network conditions, reinforcing the criticality of both theoretical and operational mastery.
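Resolving a policy conflict usually comes down to deciding which of several matching rules wins. The following is a minimal sketch of one possible approach, assuming that each rule carries a numeric precedence and that the lowest value wins. The `PolicyRule` fields, the rule names, and the `select_rule` helper are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass
class PolicyRule:
    name: str
    precedence: int      # assumption of this sketch: lower value wins
    conditions: dict     # subscriber attribute -> required value
    max_mbps: int        # bandwidth cap applied when the rule is selected


def select_rule(rules, context):
    """Return the matching rule with the lowest precedence value, or None."""
    matching = [
        rule for rule in rules
        if all(context.get(key) == value for key, value in rule.conditions.items())
    ]
    return min(matching, key=lambda rule: rule.precedence, default=None)


# Hypothetical rule set: a catch-all default plus two more specific rules.
RULES = [
    PolicyRule("default", 100, {}, 50),
    PolicyRule("roaming-cap", 20, {"roaming": True}, 10),
    PolicyRule("premium-peak", 10, {"tier": "premium", "peak_hour": True}, 200),
]
```

A roaming premium subscriber at peak hour matches all three rules, and `select_rule` returns `premium-peak` because it has the lowest precedence value. A real deployment may resolve such conflicts differently, so treat the ordering here as one design choice among several.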

Resiliency, Redundancy, and Fault Mitigation

The cloud packet core’s resilience is derived from intelligent orchestration rather than mere hardware duplication. Virtualized instances provide ephemeral redundancy, automatically scaled or redistributed to mitigate failures. Candidates must appreciate the propagation dynamics of node failures and the orchestrated strategies employed to preserve service continuity. Automated failover, load balancing, and resource reallocation collectively enhance reliability, but misconfigurations or orchestration delays can precipitate cascading disruptions. Exam questions often simulate high-stress network conditions, requiring aspirants to apply analytical reasoning and problem-solving heuristics to restore operational equilibrium while preserving latency-sensitive traffic flows.

Monitoring, Analytics, and Proactive Operations

Operational excellence in cloud packet core networks is contingent upon meticulous monitoring and analytics. Latency, jitter, throughput, session success rates, and handover efficiency provide diagnostic insights, guiding operational decisions. Virtualized environments facilitate centralized aggregation of these metrics, enabling proactive issue detection and preemptive intervention. Candidates must develop the acumen to interpret metric anomalies, correlate them with potential orchestration or configuration errors, and propose mitigative actions. The 4A0-M05 exam frequently incorporates scenario-based analytics challenges, testing the candidate’s ability to translate metric interpretation into operational optimization strategies.
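Interpreting metric anomalies can be made concrete with a simple statistical check. The sketch below flags samples that deviate strongly from a baseline window using a z-score. It is a generic technique chosen for illustration, not a method named in the exam material, and the function name, baseline size, and threshold are assumptions.

```python
from statistics import mean, stdev


def find_anomalies(samples, baseline_size=5, threshold=3.0):
    """Return indexes of samples that deviate from the baseline window.

    The first `baseline_size` samples define normal behaviour. A later
    sample is flagged when it lies more than `threshold` standard
    deviations away from the baseline mean.
    """
    if baseline_size < 2 or len(samples) < baseline_size:
        raise ValueError("need at least two baseline samples")
    baseline = samples[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for index in range(baseline_size, len(samples)):
        deviation = abs(samples[index] - mu)
        if sigma == 0:
            # A perfectly flat baseline: any change at all is suspicious.
            if deviation > 0:
                flagged.append(index)
        elif deviation / sigma > threshold:
            flagged.append(index)
    return flagged


# Hypothetical latency samples in milliseconds with one obvious spike.
latency_ms = [20, 21, 19, 20, 22, 21, 20, 95, 21]
spikes = find_anomalies(latency_ms)
```

In practice the flagged index would then be correlated with orchestration or configuration events around the same time, which is the interpretive step the exam scenarios emphasize.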

Security Paradigms in Virtualized Core Networks

Virtualization introduces a nuanced security landscape, demanding vigilance at multiple layers. Authentication protocols, encryption schemas, access controls, and secure orchestration mechanisms collectively safeguard the integrity of cloud packet cores. Candidates must grasp both theoretical frameworks and practical enforcement strategies, recognizing potential vulnerabilities such as unauthorized access, misconfigured nodes, and exposed interfaces. Exam scenarios often evaluate the candidate’s capability to harmonize security imperatives with operational continuity, testing their ability to implement robust defenses without compromising latency, throughput, or service availability.

Interworking with External Networks

The cloud packet core’s functionality is inseparable from its interconnection with external networks, including internet gateways, interconnects, and application servers. Data routing, policy enforcement, and QoS assurance are paramount to maintain service integrity across these interfaces. Candidates must comprehend topological, protocol-driven, and policy-based interactions, recognizing how variations in routing paths, gateway latency, or policy misalignment impact end-user experience. The 4A0-M05 exam may present complex interconnect scenarios requiring candidates to optimize routing, reconcile policy conflicts, and sustain operational efficiency under varied traffic conditions.

Dynamic Scaling and Elastic Resource Management

The inherent volatility of mobile traffic necessitates dynamic scaling capabilities within cloud packet cores. Virtualized nodes are instantiated, scaled, or decommissioned according to real-time demand, ensuring optimal resource utilization. Candidates must master the orchestration triggers and thresholds that govern these elastic operations, understanding the potential consequences of misconfiguration such as congestion, dropped sessions, or inefficient computational utilization. Exam scenarios often challenge aspirants to design scaling strategies that balance performance, cost-efficiency, and fault resilience, testing both conceptual understanding and operational dexterity.
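The triggers and thresholds mentioned above can be sketched as a small decision function. This example assumes a single utilization figure per instance and separate scale-out and scale-in thresholds, so that the instance count does not oscillate when load hovers around one value. All names and default values are hypothetical.

```python
def desired_instances(current, utilization,
                      scale_out_at=0.8, scale_in_at=0.3,
                      min_instances=1, max_instances=10):
    """Return the instance count to run next, given average utilization (0..1).

    The gap between `scale_in_at` and `scale_out_at` is deliberate: inside
    that band the count is left unchanged (hysteresis).
    """
    if utilization > scale_out_at:
        return min(current + 1, max_instances)
    if utilization < scale_in_at:
        return max(current - 1, min_instances)
    return current
```

For example, `desired_instances(2, 0.9)` asks for a third instance, while `desired_instances(2, 0.5)` leaves the count at two. Setting the two thresholds too close together is one of the misconfigurations that can cause repeated scale-out and scale-in cycles.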

Automation and DevOps Integration

Cloud packet core operations increasingly integrate automation and DevOps principles to achieve rapid, reliable service deployment. Automated configuration management, continuous integration workflows, and orchestration-driven resource allocation reduce manual intervention while enhancing scalability and resilience. Candidates must understand how automation interfaces with virtualized network functions, policy enforcement mechanisms, and monitoring systems. The 4A0-M05 exam evaluates the aspirant’s ability to leverage these tools to optimize service delivery, troubleshoot anomalies, and maintain compliance with operational best practices.

Service Chaining and Multi-Tenant Environments

Service chaining enables the sequential application of multiple network functions to subscriber traffic, supporting diverse services and differentiated experiences. In multi-tenant environments, this capability is critical for isolating traffic, enforcing policies, and optimizing resource allocation. Candidates must grasp the interplay between service chains, virtualized nodes, and orchestration workflows, understanding how misalignment can lead to latency degradation or policy violation. Exam scenarios often simulate multi-tenant deployments, requiring candidates to design chains that maximize efficiency, preserve security, and maintain service continuity.
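As a rough illustration of service chaining, the sketch below models each network function as a plain Python function and applies a per-tenant list of them in order. The function names, the packet fields, the tenant names, and the translated address are all hypothetical and stand in for real virtualized functions.

```python
def firewall(packet):
    """Drop Telnet traffic (port 23); pass everything else unchanged."""
    return None if packet["dst_port"] == 23 else packet


def nat(packet):
    """Rewrite the source address to a documentation-range public address."""
    return {**packet, "src_ip": "203.0.113.1"}


def mark_priority(packet):
    """Tag the packet so that later stages can prioritize it."""
    return {**packet, "priority": "high"}


def apply_chain(chain, packet):
    """Run the packet through each function in order; None means dropped."""
    for function in chain:
        packet = function(packet)
        if packet is None:
            return None
    return packet


# Each tenant gets its own chain, which keeps tenant policies isolated.
CHAINS = {
    "tenant-a": [firewall, nat],
    "tenant-b": [firewall, mark_priority, nat],
}
```

Order matters: placing `firewall` first means dropped packets never consume NAT resources, which is the kind of efficiency trade-off a chain design question might probe.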

Edge Integration and Low-Latency Applications

The proliferation of low-latency applications, such as augmented reality, autonomous systems, and real-time analytics, underscores the importance of edge integration within cloud packet cores. Edge nodes extend the reach of virtualized functions closer to subscribers, minimizing latency and optimizing throughput. Candidates must understand how edge deployments interface with central core nodes, manage session continuity, and enforce localized policies. The 4A0-M05 exam may challenge aspirants to architect edge-integrated solutions, balancing latency reduction, resource distribution, and orchestration complexity.

Troubleshooting and Root Cause Analysis

Effective management of cloud packet cores necessitates advanced troubleshooting capabilities. Candidates must develop the ability to trace signaling flows, identify performance bottlenecks, diagnose orchestration errors, and reconcile policy conflicts. Root cause analysis extends beyond symptomatic mitigation; it requires comprehension of interdependent node behaviors, virtualized workflows, and orchestration dynamics. The exam often presents layered fault scenarios, evaluating the candidate’s analytical rigor, operational foresight, and methodological reasoning in restoring network equilibrium.

Quality Assurance and SLA Compliance

Maintaining quality of service and adherence to service-level agreements is paramount in cloud packet core operations. Monitoring mechanisms, orchestration policies, and resource allocation strategies collectively ensure compliance with latency, throughput, and reliability standards. Candidates must understand how to leverage monitoring data to validate SLA adherence, preemptively adjust configurations, and optimize subscriber experiences. Exam scenarios may simulate SLA violations, compelling aspirants to propose corrective interventions while balancing operational efficiency and resource constraints.
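Validating SLA adherence from monitoring data often means comparing a percentile of a metric against a target. The sketch below uses the nearest-rank percentile method, assuming a hypothetical SLA expressed as "the p-th percentile latency must not exceed a limit". The helper names and the sample data are illustrative only.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def sla_met(latencies_ms, p, limit_ms):
    """True when the p-th percentile latency is within the limit."""
    return percentile(latencies_ms, p) <= limit_ms
```

With 100 samples ranging from 1 ms to 100 ms, `sla_met(samples, 95, 100)` holds, while a stricter target such as `sla_met(samples, 99, 50)` does not. Averages can hide exactly the tail behaviour that an SLA is meant to bound, which is why a percentile is used here.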

Orchestration Challenges in Multi-Domain Networks

Modern mobile networks often span multiple administrative and technological domains, including 4G, 5G, and converged cloud environments. Orchestration in such contexts becomes a multidimensional challenge, requiring harmonization of policies, scaling strategies, and fault mitigation across heterogeneous infrastructures. Candidates must understand inter-domain dependencies, potential conflicts, and mitigation techniques to ensure uninterrupted service. The 4A0-M05 exam frequently incorporates multi-domain orchestration scenarios, evaluating the candidate’s capacity to reason across diverse network topologies, technologies, and operational constraints.

Real-Time Analytics and Predictive Operations

Proactive management in cloud packet core networks increasingly relies on real-time analytics and predictive operations. Machine learning and AI-driven algorithms analyze traffic patterns, subscriber behavior, and node performance to anticipate congestion, predict faults, and optimize resource allocation. Candidates must appreciate the conceptual underpinnings of predictive orchestration, including anomaly detection, trend analysis, and automated remediation. Exam questions may challenge aspirants to design predictive frameworks, interpret analytics outputs, and integrate these insights into operational strategies for sustained network performance.
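Trend analysis, one of the building blocks named above, can be illustrated without any machine learning library. The sketch below fits a straight line to recent utilization samples and estimates how many more sampling intervals remain before the trend reaches a limit. It is a deliberately simple stand-in for the predictive models an operator might use, and every name in it is an assumption.

```python
import math


def fit_line(values):
    """Least-squares fit of y = slope * x + intercept, with x = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def samples_until(values, limit):
    """Estimate the number of future samples before the trend reaches `limit`.

    Returns 0 when the fitted trend is already at or above the limit, and
    None when the trend is flat or falling and never reaches it.
    """
    slope, intercept = fit_line(values)
    current = slope * (len(values) - 1) + intercept
    if current >= limit:
        return 0
    if slope <= 0:
        return None
    return math.ceil((limit - current) / slope)
```

A result of 3 for utilization samples `[10, 20, 30, 40]` and a limit of 70 would suggest scaling out within the next three intervals. Real traffic is rarely linear, so an estimate like this is a prompt for investigation rather than a guarantee.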

Integration with Cloud Ecosystems and Public Infrastructure

Cloud packet core deployments often interface with broader cloud ecosystems and public infrastructure, leveraging shared resources, virtualized data centers, and orchestration platforms. Candidates must comprehend the complexities of multi-cloud integration, including network function placement, latency considerations, security enforcement, and orchestration synchronization. The 4A0-M05 exam may present scenarios requiring candidates to optimize cross-cloud deployments, reconcile policy divergences, and ensure service continuity across distributed infrastructures.

Network Slicing and Differentiated Services

Network slicing represents a critical innovation in cloud packet core design, enabling operators to create virtualized, logically isolated networks tailored to specific service requirements. Each slice may have distinct policies, throughput guarantees, and security parameters. Candidates must understand slice orchestration, resource allocation, and performance monitoring within multi-slice environments. Exam scenarios often simulate complex slice deployments, requiring candidates to balance resource efficiency, service differentiation, and subscriber experience across multiple concurrent slices.
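Resource allocation across slices can be sketched as a two-step calculation: honour each slice's guaranteed share first, then divide the spare capacity by weight. This is only one possible allocation scheme, assumed here for illustration. The slice names, the units, and the `allocate` helper are hypothetical.

```python
def allocate(capacity, slices):
    """Split `capacity` across slices.

    `slices` maps a slice name to a (guaranteed, weight) pair. Every slice
    receives its guarantee, and leftover capacity is shared in proportion
    to weight. Raises ValueError when the guarantees alone exceed capacity.
    """
    guaranteed_total = sum(guaranteed for guaranteed, _ in slices.values())
    if guaranteed_total > capacity:
        raise ValueError("guarantees exceed available capacity")
    spare = capacity - guaranteed_total
    total_weight = sum(weight for _, weight in slices.values())
    result = {}
    for name, (guaranteed, weight) in slices.items():
        extra = spare * weight / total_weight if total_weight else 0
        result[name] = guaranteed + extra
    return result


# Hypothetical slices sharing 1000 Mbps of user plane throughput.
shares = allocate(1000, {"video": (400, 3), "iot": (200, 1)})
```

Here the 400 Mbps left after the guarantees is split 3:1, giving the `video` slice 700 Mbps and the `iot` slice 300 Mbps. Rejecting a configuration whose guarantees exceed capacity is a simple form of admission control.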

Adaptive Traffic Management and Congestion Control

The dynamic nature of mobile network traffic necessitates adaptive traffic management strategies. Virtualized nodes, orchestration frameworks, and policy enforcement mechanisms collectively regulate congestion, prioritize critical flows, and maintain service quality. Candidates must grasp the algorithms, thresholds, and orchestration interventions that sustain optimal traffic conditions. The 4A0-M05 exam may present congestion scenarios, evaluating the candidate’s proficiency in diagnosing bottlenecks, reallocating resources, and implementing adaptive control measures to preserve operational stability.
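A token bucket is one widely used algorithm for regulating traffic against a threshold. The source does not name a specific algorithm, so the sketch below is offered as a generic example rather than as the mechanism a particular packet core uses. Time is passed in explicitly to keep the behaviour easy to follow, and the class and parameter names are assumptions.

```python
class TokenBucket:
    """Allow bursts up to `capacity` while limiting the long-run rate."""

    def __init__(self, rate, capacity):
        self.rate = rate            # tokens added per second
        self.capacity = capacity    # maximum tokens the bucket can hold
        self.tokens = capacity      # start full, so an initial burst is allowed
        self.last = 0.0             # timestamp of the previous call

    def allow(self, size, now):
        """Return True and spend tokens if `size` units may pass at time `now`."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size <= self.tokens:
            self.tokens -= size
            return True
        return False


# Hypothetical limiter: 100 units per second with bursts of up to 200.
bucket = TokenBucket(rate=100, capacity=200)
first = bucket.allow(150, now=0.0)    # fits in the initial burst
second = bucket.allow(100, now=0.0)   # only 50 tokens remain
third = bucket.allow(100, now=1.0)    # one second of refill makes room
```

The two parameters map onto the trade-off discussed above: `rate` bounds sustained load, while `capacity` decides how much short-lived burstiness is tolerated before traffic is held back.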

Advanced Troubleshooting Paradigms in Packet Core Networks

Troubleshooting within cloud packet core networks transcends simple fault rectification; it is an intricate cognitive exercise, blending deductive reasoning, systemic analysis, and predictive inference. Candidates must approach alarms, logs, and performance anomalies as cryptic narratives, each signal revealing fragments of the underlying systemic state. Decoding these narratives requires both a meticulous eye for detail and an appreciation of the network’s holistic orchestration.

Effective troubleshooting demands the synthesis of multiple data streams. Temporal analysis of throughput fluctuations, correlation between policy enforcement anomalies, and recognition of cascading failures are all essential components. Candidates adept at this multidimensional evaluation can anticipate failure propagation, mitigate service degradation, and propose interventions that optimize both immediate resolution and long-term network resilience.

In complex scenarios, the network behaves as a dynamic system, its elements interacting in non-linear and sometimes counterintuitive ways. Candidates must internalize these interactions, developing mental models that allow rapid hypothesis testing, scenario simulation, and operational foresight. This form of anticipatory troubleshooting not only enhances examination performance but also cultivates an intrinsic intuition for real-world network management.

Interpretive Mastery of Mobility Events

Mobility events, including handovers, session redirection, and roaming transitions, represent a critical axis of complexity in cloud packet core networks. Candidates must understand the subtleties of session continuity, signaling behavior, and latency implications during these transitions. Each mobility event embodies a delicate balance between control plane orchestration and user plane efficiency.

The interpretive mastery of mobility phenomena extends beyond rote memorization of handover types. Candidates must analyze the ramifications of resource allocation, policy enforcement, and node load, predicting emergent behaviors under stress or high-density scenarios. Understanding mobility events as dynamic, context-dependent occurrences cultivates an anticipatory mindset, enabling candidates to propose proactive solutions during high-stakes examinations and operational crises alike.

Strategic Cognitive Mapping for Policy Optimization

Policy optimization is an exercise in strategic cognitive mapping, wherein candidates reconcile system objectives with operational constraints. Policies are rarely isolated; they interact with session lifecycles, orchestration algorithms, and subscriber management protocols. Misalignment can produce unforeseen bottlenecks, degrade quality of service, or induce security vulnerabilities.

Candidates must cultivate the ability to visualize policy landscapes, anticipate systemic interactions, and simulate potential outcomes. This requires abstract reasoning, scenario extrapolation, and an intuitive grasp of causality within distributed networks. Mastery of policy optimization transforms a candidate’s approach from reactive configuration to proactive orchestration, enhancing both examination efficacy and operational acumen.

Analytical Forensics of Traffic Surges

Traffic surges, whether due to seasonal demand, viral application usage, or sudden subscriber influx, present a formidable challenge in packet core networks. Analytical forensics of these surges demands meticulous examination of load distribution, node capacity, and session orchestration. Candidates must discern not only the immediate impact on throughput and latency but also latent consequences for redundancy, policy compliance, and security enforcement.

This analytical approach requires multi-layered reasoning. By evaluating patterns of congestion, identifying potential choke points, and predicting emergent bottlenecks, candidates can devise mitigation strategies that balance scalability, performance, and reliability. The ability to interpret surges as complex systemic phenomena, rather than isolated anomalies, distinguishes proficient candidates from those reliant on superficial troubleshooting.

Cognitive Synthesis of Alarm Systems and Event Correlation

Alarm systems in cloud packet core networks are repositories of operational intelligence, each alert a potential clue to systemic health. Candidates must develop a cognitive synthesis, integrating disparate alarms into coherent narratives that illuminate underlying network conditions. Event correlation transforms isolated signals into predictive insights, enabling proactive mitigation before service degradation occurs.

This requires a nuanced understanding of interdependencies, thresholds, and signal hierarchies. Candidates who cultivate interpretive agility can discern patterns across nodes, sessions, and subscriber behaviors, translating abstract indicators into actionable intelligence. This skill set is invaluable in both the examination context and real-world operations, where rapid, accurate decision-making determines both performance and reliability.
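A very simple form of event correlation is to group alarms that occur close together in time, on the assumption that they may share a cause. The sketch below does only that. The alarm tuple layout, the node names, and the five-second window are hypothetical, and real correlation engines also use topology and alarm hierarchies.

```python
def correlate(alarms, window=5.0):
    """Group alarms so that consecutive alarms in a group are at most `window` seconds apart.

    Each alarm is a (timestamp, node, message) tuple. Groups are returned
    in chronological order.
    """
    groups = []
    for alarm in sorted(alarms):
        timestamp = alarm[0]
        if groups and timestamp - groups[-1][-1][0] <= window:
            groups[-1].append(alarm)
        else:
            groups.append([alarm])
    return groups


# Hypothetical alarm feed: two related alarms, then an unrelated one.
alarms = [
    (2.0, "control-plane-1", "session setup failures"),
    (0.0, "user-plane-1", "link down"),
    (30.0, "user-plane-2", "high cpu"),
]
incidents = correlate(alarms)
```

The first group pairs the link failure with the session setup failures that follow two seconds later, hinting that the second alarm is a symptom rather than a separate fault.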

Dynamic Orchestration in Multi-Domain Environments

Multi-domain orchestration represents the apex of operational complexity in modern packet core networks. Candidates must navigate the interplay between core functions, edge deployments, and external network interfaces, ensuring seamless coordination across diverse topologies. Each domain exhibits distinct operational parameters, resource constraints, and policy priorities, yet they converge to deliver a unified service experience.

Strategic reasoning in this context requires both abstraction and precision. Candidates must anticipate inter-domain conflicts, predict cascading effects of configuration changes, and orchestrate resource allocation with foresight. Mastery of multi-domain orchestration transforms the candidate’s approach from reactive problem-solving to proactive, system-wide strategic planning.

Scenario-Based Scaling and Load Balancing

Scaling within cloud packet core networks is a dynamic, context-dependent process. Candidates must reconcile immediate demand spikes with long-term resource optimization, balancing load across user plane functions, policy engines, and orchestration nodes. Effective load balancing requires anticipatory reasoning, predicting where bottlenecks may emerge and how traffic distribution will evolve over time.

Scenario-based practice enhances this understanding. Candidates can simulate high-density traffic, node failures, and policy conflicts to observe emergent behaviors. By internalizing these experiences, they develop an intuitive grasp of scaling mechanisms, learning to orchestrate resources, mitigate congestion, and preserve service quality under both routine and extreme conditions.
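For scenario practice, it can help to simulate a load-balancing decision directly. The sketch below places each new session on the node with the lowest utilization relative to its capacity. It is a minimal example of one balancing strategy, and the node names and capacities are hypothetical.

```python
def assign_sessions(capacity, session_count):
    """Distribute sessions across nodes, always choosing the least-utilized node.

    `capacity` maps a node name to the number of sessions it can hold.
    Returns a mapping of node name to the number of sessions assigned.
    """
    load = {node: 0 for node in capacity}
    for _ in range(session_count):
        candidates = [node for node in capacity if load[node] < capacity[node]]
        if not candidates:
            raise RuntimeError("all nodes are at capacity")
        target = min(candidates, key=lambda node: load[node] / capacity[node])
        load[target] += 1
    return load
```

With capacities of 100 and 50, three sessions land as two on the larger node and one on the smaller. Removing a node from `capacity` and rerunning the function is a quick way to explore how a node failure shifts load onto the survivors.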

Adaptive Interpretation of Subscriber Profiles

Subscriber profiles encapsulate critical operational intelligence, influencing policy application, session management, and quality of service prioritization. Candidates must interpret these profiles with adaptive acuity, discerning subtle variations that impact orchestration, throughput, and security.

Adaptive interpretation extends beyond static analysis. Candidates must consider mobility patterns, historical usage trends, and potential anomalies. This dynamic approach enables proactive adjustments, whether through policy refinement, session migration, or resource reallocation. Mastery of subscriber intelligence enhances operational efficiency and informs strategic decision-making in both exam scenarios and practical deployments.

Cognitive Calibration for Multi-Step Problem Solving

Examination scenarios frequently present multi-step problems, where each solution step impacts subsequent outcomes. Candidates must calibrate their cognition, maintaining situational awareness while progressing through sequential challenges. This requires iterative reasoning, error anticipation, and strategic prioritization.

Cognitive calibration is both a mental discipline and a strategic methodology. Candidates learn to segment complex problems into manageable sub-tasks, evaluating each against operational objectives, policy constraints, and systemic interdependencies. By cultivating this skill, candidates navigate exam scenarios with precision and confidence, translating abstract theory into effective, practical solutions.

Interpreting Redundancy and Failover Mechanisms

Redundancy and failover mechanisms are pillars of network resilience, ensuring continuity in the face of node failures, traffic surges, or orchestration anomalies. Candidates must understand these mechanisms both conceptually and operationally, analyzing how alternate pathways, replication strategies, and automated failover processes preserve service integrity.

Interpretive mastery involves predicting the systemic ramifications of component failures, evaluating the efficacy of redundancy protocols, and recommending proactive optimizations. Candidates who internalize these principles cultivate an anticipatory mindset, capable of designing networks that are both robust and adaptive, while excelling in examination scenarios that test these competencies.
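Automated failover can be reduced, for study purposes, to a single question: which instance should be active right now? The sketch below answers it with a heartbeat timeout and a fixed preference order. Both are assumptions made for this example, as are the instance names and the `pick_active` helper.

```python
def pick_active(instances, last_heartbeat, now, timeout=3.0):
    """Return the first instance, in preference order, with a recent heartbeat.

    `last_heartbeat` maps an instance name to the time its latest heartbeat
    arrived. An instance with no recorded heartbeat is treated as failed.
    Returns None when no instance is healthy.
    """
    for name in instances:
        seen = last_heartbeat.get(name, float("-inf"))
        if now - seen <= timeout:
            return name
    return None


# Hypothetical pair: the primary went silent at t=10, the standby is alive.
preference = ["primary", "standby"]
heartbeats = {"primary": 10.0, "standby": 19.5}
active = pick_active(preference, heartbeats, now=20.0)
```

The timeout embodies a trade-off: a short value detects failures quickly but risks switching over on a brief delay, while a long value keeps traffic on a dead instance for longer.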

Holistic Evaluation of KPI Trends

Key performance indicators are not merely metrics; they are interpretive instruments that reveal the network’s operational vitality. Candidates must evaluate KPI trends holistically, discerning patterns, anomalies, and emerging risks. Each KPI—whether latency, throughput, session success rate, or policy compliance—interacts with others, forming a multidimensional matrix of network health.

Holistic evaluation requires both analytical rigor and cognitive synthesis. Candidates must integrate historical data, predictive modeling, and scenario simulation to derive actionable insights. This interpretive approach transforms KPIs from static numbers into dynamic guides for both examination problem-solving and operational excellence.

Strategic Integration of Security and Performance Objectives

Modern packet core networks operate at the nexus of security, performance, and scalability. Candidates must integrate these objectives strategically, ensuring that throughput maximization and latency reduction do not compromise integrity, and that policy enforcement aligns with operational and regulatory mandates.

Strategic integration involves both anticipatory reasoning and systemic insight. Candidates evaluate potential conflicts, simulate policy outcomes, and prioritize interventions based on holistic operational objectives. This approach fosters a nuanced understanding of the delicate equilibrium between performance and protection, critical for both exam success and real-world network stewardship.

The paradigm shift from hardware-centric architectures to cloud-native packet core systems signifies more than a technological evolution; it reflects a fundamental rethinking of mobile network design. Legacy networks, bounded by rigid physical infrastructures, often imposed static limitations on scaling and service innovation. In contrast, cloud packet cores are built around elasticity and dynamic orchestration. The transition is not merely a migration of functionalities but a change in operational philosophy, requiring a new approach to resource allocation, fault mitigation, and service agility. Exam candidates must internalize this shift, as theoretical knowledge alone is insufficient without an appreciation of its operational ramifications.

Virtualization and the Ethereal Plane of Network Functions

Virtualization lies at the nucleus of cloud packet core design, transforming conventional network functions into ephemeral entities unbound by physical constraints. Control plane elements, once tethered to discrete hardware appliances, are now instantiated on demand across data centers. The user plane, which carries actual subscriber traffic, can scale out dynamically as network demand fluctuates. Understanding the interplay between virtualized nodes, orchestration layers, and service chaining is paramount for operational efficacy. The 4A0-M05 exam probes candidates’ ability to conceptualize these interactions, ensuring that the abstraction of virtualization does not obscure the tangible implications for throughput, latency, and fault tolerance.

Subscriber Cognition and Contextual Continuity

Central to the efficacy of cloud packet core networks is the meticulous management of subscriber context. Each session embodies a mosaic of authentication credentials, mobility patterns, and policy enforcements that must be carefully preserved. The separation of control and user planes facilitates the continuity of this context across dynamic topologies, yet it also introduces the potential for transient inconsistencies if orchestration fails. Candidates must grasp the intricacies of session establishment, context handover, and subscriber state persistence. Scenario-based queries in the 4A0-M05 exam often challenge aspirants to diagnose disruptions in subscriber continuity, emphasizing the significance of comprehensive operational understanding.

Orchestration as a Neural Conductor

Orchestration in cloud packet core networks functions analogously to a neural conductor, coordinating disparate virtualized functions to produce harmonious service delivery. It governs instantiation, scaling, policy application, and fault mitigation across a complex constellation of nodes. Automated workflows, policy-driven templates, and service chaining constitute the primary mechanisms through which orchestration exerts its influence. Exam candidates must not only identify these components but also elucidate their interdependencies, understanding how misconfigurations or delayed responses propagate through the network fabric. Mastery of orchestration principles ensures operational resilience and underpins the candidate’s ability to navigate high-complexity scenarios with precision.

Mobility Management and the Dance of Data

The dynamism of subscriber mobility introduces a perpetual choreography within the packet core. As users traverse geographical domains, control plane nodes maintain session continuity, while user plane nodes adapt routing paths to preserve data integrity and minimize latency. This dance necessitates seamless handovers, intelligent routing, and preemptive context transfer mechanisms. Candidates must appreciate that mobility management extends beyond mere signaling; it encapsulates predictive resource allocation, proactive fault avoidance, and real-time monitoring. Exam scenarios often interrogate the candidate’s capacity to trace mobility flows, identify performance bottlenecks, and optimize handover strategies under conditions of network flux.

Policy Enforcement in the Virtual Dominion

Policy enforcement within a cloud packet core transcends rudimentary access control; it constitutes a sophisticated, multi-dimensional framework that shapes subscriber experience, resource prioritization, and service quality. Virtualized enforcement points imbue operators with the capacity to implement granular policies dynamically, reacting to temporal, locational, or behavioral triggers. The 4A0-M05 exam frequently evaluates the candidate’s discernment in aligning policy objectives with network performance imperatives, probing their understanding of QoS hierarchies, traffic shaping, and subscriber-specific constraints. Effective policy orchestration is thus a crucible of both technical mastery and strategic foresight.
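Traffic shaping is easier to reason about with a concrete mechanism in hand. The following is a minimal sketch of a token bucket, one common building block for rate enforcement. The class name, the byte-based units, and the explicit `now` argument are assumptions made for illustration; they are not taken from the exam material or from any vendor implementation.

```python
class TokenBucket:
    """Illustrative token bucket: tokens are bytes, refilled at a fixed rate."""

    def __init__(self, rate_bytes_per_s, burst_bytes):
        self.rate = rate_bytes_per_s      # sustained rate allowed by the policy
        self.capacity = burst_bytes       # largest burst the policy tolerates
        self.tokens = burst_bytes         # start with a full bucket
        self.last = 0.0                   # timestamp of the previous check (seconds)

    def allow(self, size_bytes, now):
        """Return True if a packet of size_bytes conforms at time `now`."""
        elapsed = now - self.last
        self.last = now
        # Refill for the elapsed time, never beyond the burst capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False


bucket = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
decisions = [
    bucket.allow(1500, now=0.0),   # uses the whole initial burst
    bucket.allow(500, now=0.25),   # only 250 bytes refilled so far
    bucket.allow(500, now=0.5),    # 500 bytes available by now
]
```

What happens to a non-conforming packet is a policy choice: a shaper would queue it until enough tokens accumulate, whereas a policer would drop or re-mark it.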

Resiliency and Redundancy in Ephemeral Networks

Reliability in cloud packet core networks is no longer solely a function of duplicated hardware; it also emerges from dynamic redundancy, automated failover, and intelligent resource redistribution. The ephemeral nature of virtualized instances necessitates sophisticated monitoring and predictive mitigation strategies. Candidates must comprehend how node failures propagate, the mechanisms by which orchestration mitigates service disruption, and the subtleties of load balancing across geographically dispersed infrastructure. Exam questions often simulate cascading failures, challenging aspirants to deploy analytical reasoning and operational heuristics to restore service continuity while limiting the impact on latency and throughput.

Analytical Vigilance and Metrics Interpretation

Operational proficiency demands the cultivation of analytical vigilance. Latency, jitter, session success rate, handover efficiency, and throughput constitute more than mere metrics; they represent diagnostic signals, guiding the operator through the labyrinthine complexity of virtualized networks. Candidates must decipher these metrics within the context of orchestration workflows, resource scaling thresholds, and policy enforcement dynamics. The 4A0-M05 exam often presents candidates with performance anomalies, requiring precise interpretation of metric trends to identify root causes and propose remediation strategies.

Security in the Cloud Paradigm

The virtualization inherent in cloud packet core networks introduces a nuanced security landscape, replete with potential vulnerabilities at every interface and orchestration point. Authentication protocols, encryption schemas, access control matrices, and secure orchestration mechanisms collectively fortify the network’s integrity. Candidates must not only recognize threats but also conceptualize proactive mitigations that harmonize security with service continuity. Exam scenarios may probe the aspirant’s aptitude in identifying misconfigurations, detecting unauthorized access attempts, or optimizing security policies without compromising performance.

Interfacing with External Networks

The packet core’s efficacy is contingent upon its seamless integration with external networks. Internet gateways, interconnects, and application servers constitute the conduits through which subscriber traffic flows. Effective routing, policy enforcement, and QoS assurance are imperative to preserve service integrity. Candidates must understand the topological, protocol-driven, and policy-based interactions that facilitate these interfaces. The 4A0-M05 exam may present intricate scenarios requiring the candidate to optimize routing paths, reconcile policy conflicts, or evaluate the impact of interconnect latency on user experience.

Dynamic Scaling and Elastic Resource Management

Traffic in mobile networks is inherently capricious, fluctuating across temporal, geographical, and behavioral dimensions. Cloud packet core architectures leverage elasticity to instantiate, scale, and decommission virtual nodes in real time. Candidates must master the triggers, thresholds, and orchestration strategies that govern dynamic scaling, appreciating how improper configuration can precipitate congestion, dropped sessions, or inefficient computational utilization. The exam challenges aspirants to reconcile theoretical knowledge with scenario-driven problem solving, demanding both foresight and analytical dexterity.

Cloud Packet Core: An Exposition of Virtualized Network Architecture

The cloud packet core represents a veritable lattice of interdependent virtualized nodes, a nexus through which subscriber data, signaling, and policy enforcement converge. Understanding this architecture transcends rote memorization, demanding a synthesis of modular interactions, dynamic orchestration, and high-throughput operational intelligence. Within its virtual confines, three cardinal layers orchestrate the delicate ballet of connectivity: the control plane, the user plane, and the orchestration layer. Each serves a discrete function while remaining symbiotically entwined with the others, manifesting a resilient, adaptable, and high-performing network edifice.

Control Plane Dynamics and Functionalities

At the helm of signaling and session governance lies the control plane, a cerebral hub where authentication, policy enforcement, and mobility management coalesce. Within its dominion, entities such as the mobility management entity, policy control function, and subscriber data repositories operate in seamless synchrony, exchanging session contexts, signaling messages, and authentication tokens. Each interaction must be meticulously calibrated; misconfigurations propagate instability, undermining mobility continuity and policy adherence. Candidates examining this domain must cultivate an intuitive grasp of inter-node synergies, the subtleties of signaling sequences, and the imperatives of policy application to ensure a fault-tolerant and subscriber-centric ecosystem.

The control plane's architecture exemplifies modular elegance, wherein nodes can be instantiated, scaled, or migrated independently. Such modularity confers not only operational flexibility but also facilitates targeted fault recovery. When mobility events traverse cell boundaries or diverse radio access technologies, the control plane orchestrates seamless handovers, synchronizing subscriber context while upholding stringent policy and security mandates. The interplay between signaling flows and state maintenance is intricate, requiring acute comprehension of message orchestration, session lifecycle management, and authentication reciprocity.

User Plane Throughput and Data Continuity

Where the control plane commands, the user plane executes. It channels the torrent of subscriber data—voice, video, and internet traffic—between endpoints and external networks. This bifurcation of control and data facilitates independent scaling, enabling user plane nodes to be meticulously optimized for latency reduction, throughput maximization, and jitter minimization. In this realm, the architecture demonstrates its acumen for high-volume traffic, resilient redundancy, and intelligent load balancing. Understanding interface dynamics between control and user planes is paramount; misalignments precipitate session drops, throughput bottlenecks, or policy discrepancies, eroding the network’s reliability and subscriber satisfaction.

User plane architecture is inherently designed for horizontal scalability, wherein traffic surges trigger on-demand instantiation of additional nodes. This elasticity embodies the quintessence of cloud-native design, allowing dynamic augmentation of capacity without perturbing ongoing sessions. Redundancy protocols, failover algorithms, and real-time load distribution are interwoven to uphold continuous data flow under duress. Candidates exploring this domain must assimilate the nuances of throughput optimization, node-level failover orchestration, and the ramifications of user plane latency on service-level agreements.

Orchestration Layers and Virtual Network Functionality

The orchestration layer is the cerebral cortex of the cloud packet core, coordinating deployment, configuration, and scaling of virtualized network functions. Orchestrators employ service templates, chaining policies, and automated recovery procedures to maintain operational continuity. The orchestration ecosystem leverages abstraction to decouple resources from functions, enabling adaptive allocation, intelligent scaling, and automated failover. Candidates navigating this landscape must grasp the intricacies of service chaining, node instantiation workflows, and automated policy application, as these competencies directly correlate with both operational efficacy and examination evaluation metrics.

Orchestration is more than mere automation; it embodies a symbiotic blend of policy-driven governance and elastic resource distribution. Templates define node behavior, service chains delineate inter-node traffic flow, and automated recovery protocols safeguard continuity under fault conditions. Such sophistication permits networks to fluidly respond to fluctuating traffic, geographic subscriber density shifts, or emergent mobility patterns. Orchestration also encapsulates lifecycle management, ensuring virtual functions are instantiated, scaled, or retired with minimal human intervention while maintaining stringent policy and security fidelity.

Node Interfaces and Protocol Interoperability

The interfaces connecting nodes form the sinews of the packet core, facilitating communication and signaling propagation across planes. Standardized protocols such as GTP, Diameter, PFCP, and S1AP (the protocol carried on the S1 control plane interface, often written S1-C or S1-MME) delineate precise message flows between control and user plane entities, policy functions, and gateways. Mastery of these protocols requires comprehension of session initiation, routing mechanisms, error handling, and state maintenance. The correct interpretation of interface behaviors ensures interoperability, mitigates session disruptions, and optimizes overall network responsiveness. Candidates must internalize these flows to diagnose faults effectively, configure nodes precisely, and anticipate anomalies arising from misalignment or protocol violation.
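To make one of these message formats tangible, here is a minimal sketch that builds and parses the mandatory 8-byte GTP-U (GTPv1 user plane) header around a payload. It reflects my understanding of the basic header layout (flags, message type, length, tunnel endpoint identifier) and deliberately ignores optional fields and extension headers. The function names are hypothetical, and the sketch is a study aid rather than a protocol implementation.

```python
import struct

GTPU_FLAGS = 0x30   # version 1, protocol type GTP, no optional fields present
GTPU_GPDU = 0xFF    # G-PDU: the message type that carries a user packet
HEADER_FMT = "!BBHI"  # flags, message type, length, TEID (network byte order)


def build_gtpu(teid, payload):
    """Prefix payload with a basic GTP-U header for the given tunnel (TEID)."""
    # The length field counts the bytes that follow the mandatory 8-byte header.
    header = struct.pack(HEADER_FMT, GTPU_FLAGS, GTPU_GPDU, len(payload), teid)
    return header + payload


def parse_gtpu(packet):
    """Return (teid, payload) from a packet that uses only the basic header."""
    if len(packet) < 8:
        raise ValueError("packet shorter than the mandatory GTP-U header")
    flags, msg_type, length, teid = struct.unpack(HEADER_FMT, packet[:8])
    if flags >> 5 != 1:
        raise ValueError("not a GTP version 1 packet")
    if flags & 0x07:
        raise ValueError("optional header fields are not handled in this sketch")
    if msg_type != GTPU_GPDU:
        raise ValueError("only G-PDU messages are handled in this sketch")
    return teid, packet[8:8 + length]


packet = build_gtpu(0x1234, b"abc")
teid, payload = parse_gtpu(packet)
```

The TEID is what lets a user plane node map an arriving packet to the right subscriber session, which is why a mismatched or stale TEID typically shows up as dropped user traffic rather than as a signaling error.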

Interface design is as much art as science. Latency-sensitive interactions necessitate minimal message overhead, intelligent queue management, and resilient retry logic. Each protocol embodies both operational semantics and error-resilience patterns, permitting dynamic session continuity even under transient network impairments. Understanding the interstitial mechanics of these flows enables network architects to preemptively resolve potential bottlenecks, optimize signaling efficiency, and maintain high availability across diverse deployment topologies.

Security Architecture and Operational Integrity

Security within the cloud packet core transcends mere encryption; it is an omnipresent lattice integrated into each layer. Control plane nodes enforce authentication, policy compliance, and signaling integrity, while user plane nodes manage encrypted traffic and secure routing. Orchestration layers embed access controls, audit trails, and proactive monitoring to preserve the integrity of virtualized functions. The confluence of security and operational reliability necessitates rigorous comprehension of threat surfaces, misconfiguration ramifications, and protocol hardening strategies. Candidates must appreciate that security is not ancillary but integral to both fault tolerance and service continuity.

Security paradigms extend beyond passive defense. Authentication sequences, encryption algorithms, and policy enforcement protocols operate in concert with orchestration to provide end-to-end resilience. Control plane nodes validate subscriber identities and session legitimacy, whereas user plane entities shield payload integrity from interception or modification. The orchestration layer enforces governance policies, monitors anomalous behaviors, and triggers automated remediation when deviations are detected. Understanding this tripartite synergy empowers candidates to conceptualize a secure, resilient, and policy-compliant network.

High Availability and Resilience Strategies

The cloud packet core is predicated on continuity; redundancy, dynamic failover, and automated recovery mechanisms underpin its resilience. Nodes are instantiated in clusters, replicated across geographies, and synchronized to maintain state coherence. Fault tolerance protocols ensure that node failures do not cascade into service interruptions, with backup strategies, replication schedules, and failover logic woven into the operational fabric. Candidates must internalize the methodologies for achieving high availability, the principles of state synchronization, and the mechanics of automated recovery within virtualized environments.

Resilience is multi-dimensional. It encompasses hardware redundancy, software failover algorithms, and orchestration-driven load redistribution. Networks dynamically adjust to traffic spikes, hardware malfunctions, or software anomalies, maintaining uninterrupted service delivery. Mastery of these mechanisms equips network professionals to anticipate failures, implement proactive mitigation, and ensure seamless subscriber experiences across varying operational contexts.

Scalability and Elastic Network Functioning

Scalability is intrinsic to cloud packet core design, permitting dynamic augmentation of virtual nodes in response to traffic flux. Mobile networks exhibit temporal, spatial, and behavioral variability, necessitating elastic architectures capable of sustaining peak loads without impairing ongoing sessions. Orchestration policies dictate scaling triggers, migration protocols, and resource reallocation strategies. Candidates must comprehend the underpinnings of elasticity, the orchestration mechanics that facilitate dynamic instantiation, and the implications for throughput, latency, and session fidelity.

Elasticity is manifested in automated scaling, predictive resource allocation, and policy-governed instantiation of virtual functions. The network adapts not only to volume surges but also to temporal traffic patterns, geographic concentration of subscribers, and emergent service demands. Understanding these dynamics enables architects to optimize capacity, preempt congestion, and maintain stringent service-level objectives even under unpredictable operational conditions.

Architectural Optimization and Performance Implications

The architecture of the cloud packet core directly influences performance metrics such as latency, throughput, handover success, and signaling efficiency. Strategic node placement, interface design, and orchestration workflows optimize operational outcomes. Candidates must correlate architectural decisions with empirical performance insights, leveraging metrics to refine deployment strategies, minimize signaling overhead, and enhance subscriber experience. Optimizing architecture requires a holistic comprehension of the interplay between control plane logic, user plane throughput, orchestration elasticity, and inter-node interface fidelity.

Optimization is both proactive and iterative. Network architects monitor traffic patterns, identify congested nodes, and recalibrate orchestration policies to sustain performance objectives. Performance analytics guide these adjustments, keeping latency low, throughput high, and signaling efficient. In practice, such optimization translates into enhanced subscriber satisfaction, operational cost savings, and resilient, high-performing cloud packet core deployments.

Configuration Paradigms in Cloud Packet Core Networks

The orchestration of cloud packet core elements necessitates a profound understanding of intricate configuration paradigms. Nodes are not isolated entities; each instantiation combines virtualized resources, policy templates, and operational rules that must agree with those of its neighbors. The adept administrator must navigate inter-node topologies, ensuring that signaling conduits, control channels, and user plane pathways are meticulously aligned. Misalignment can precipitate latent performance anomalies that ripple through the network fabric.

Deployment Dynamics and Virtual Instantiation

Deployment within cloud ecosystems is a choreography of virtual machines, containers, and ephemeral instances. Templates dictate not only resource allocation but also connectivity fidelity and compliance with service-level stipulations. The deployment lifecycle is an odyssey, commencing with node instantiation, proceeding through dynamic configuration, scaling in response to traffic exigencies, and culminating in judicious decommissioning. The sagacious operator appreciates the subtleties of orchestration sequences, ensuring that each node emerges fully synchronized with its network cohort.

Subscriber Lifecycle and Data Fidelity

Subscriber management transcends basic authentication and registration; it is the meticulous curation of digital identities, contextualized session information, and individualized policy parameters. Nodes serve as custodians of subscriber profiles, mediating access privileges and service entitlements. The interplay between subscriber repositories, policy control nodes, and mobility management entities demands perspicacity. Erroneous provisioning can engender authentication lapses, session fragmentation, or inadvertent policy breaches, undermining both service integrity and user confidence.

Session Topology and Continuity Assurance

Session configuration encompasses the meticulous orchestration of IP allocation, bearer path determination, and routing policies. Control plane nodes act as sentinels, harmonizing with user plane counterparts to preserve session continuity during mobility transitions. Understanding session lifecycle management—from inception to modification, mobility handling, and termination—is indispensable. Each session represents a delicate equilibrium of voice, video, and data streams; misconfiguration can fracture this equilibrium, resulting in perceptible degradation of user experience.

Mobility Schemes and Handover Precision

Mobility management is an intricate lattice interwoven with configuration and session governance. Nodes must accommodate handover thresholds, tracking area delineations, and session timers tailored to spatial and temporal traffic dynamics. Mastery of intra-technology and inter-technology mobility, particularly LTE to 5G handovers, is requisite. Configuring nodes with precision mitigates dropped sessions, signaling congestion, and unnecessary retransmissions, thereby safeguarding continuity in high-velocity environments.

Security Constructs and Policy Enforcement

Security configuration is an indispensable vector of operational integrity. Nodes must enforce authentication schemas, cryptographic protocols, and access policies consistently across virtualized domains. The vigilant administrator ensures that signaling remains impervious to interception, subscriber data is protected, and policy compliance is omnipresent. Misconfigurations can catalyze vulnerabilities, service interruptions, or regulatory infractions, highlighting the imperative for methodical and anticipatory security design.

Orchestration and Workflow Synthesis

Orchestration constitutes the fulcrum of configuration agility. Automated workflows, template-driven deployments, and service chaining empower rapid instantiation, scaling, and real-time updates. Comprehension of orchestration mechanisms enables operators to deftly manipulate resource allocation, synchronize node deployment, and enforce policies coherently. Scenario exercises often simulate surges in demand, necessitating rapid orchestration recalibration to ensure seamless service delivery.

Performance Metrics and Optimization Heuristics

Monitoring and performance tuning are inseparable from configuration management. KPI thresholds, traffic steering priorities, and load balancing protocols are not static—they require dynamic adjustments to preserve service quality. Understanding the implications of configuration alterations on throughput, latency, session persistence, and overall subscriber satisfaction is vital. Operators must cultivate a nuanced acumen, interpreting telemetry to refine operational parameters proactively rather than reactively.

Documentation and Knowledge Continuity

Comprehensive documentation of configuration and deployment practices is a cornerstone of operational continuity. Maintaining precise records of parameter settings, node software versions, and the rationale for modifications provides an invaluable reference for troubleshooting, auditing, and replication of successful configurations. In professional practice, documentation functions as both a heuristic guide and an institutional memory, ensuring that operational excellence persists despite personnel transitions.

Adaptive Deployment in High-Density Scenarios

High-density deployments necessitate anticipatory configuration strategies. Nodes must scale elastically in response to transient load spikes, while policy frameworks adapt to maintain equitable resource allocation. Cognitive load balancing, preemptive session routing, and intelligent handover prioritization exemplify the advanced techniques required to maintain seamless service continuity. Candidates should assimilate these paradigms to understand how high-density deployment scenarios are managed effectively.

Inter-Nodal Coordination and Signaling Efficiency

The efficiency of inter-nodal communication underpins network resilience. Nodes must maintain synchronized session tables, real-time policy enforcement, and seamless signaling across control and user planes. The adept practitioner orchestrates inter-nodal dialogue to minimize latency, prevent congestion, and optimize resource utilization. Understanding the mechanics of signaling propagation and state synchronization is essential for both operational excellence and examination preparedness.

The Imperative of Fault Cognizance in Cloud Packet Cores

Fault cognizance constitutes the bedrock of resilient cloud packet core architectures. In an ecosystem pervaded by virtualization and ephemeral network slices, latent anomalies can cascade into systemic instability. The judicious interpretation of alarms, key performance indicators, and operational logs forms the nexus through which network custodians apprehend aberrations. Familiarity with alarm hierarchies, severity stratifications, and propagation vectors is indispensable for orchestrating swift, calibrated interventions that forestall service disruptions.

KPI Exegesis and Latent Trend Analysis

Metrics serve as the cartography of network health. Latency fluctuations, throughput aberrations, session establishment success rates, handover efficacy, and signaling expediency converge to narrate the network’s operational saga. By deciphering the tapestry of these indicators, practitioners anticipate congestion loci and preemptively recalibrate resources. The perspicacity to correlate KPI deviations with latent causative agents—be they node incapacitations, configuration incongruities, or interface aberrations—empowers the operator to act with preemptive precision.
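One simple way to turn a KPI series into a diagnostic signal is to compare each new sample against a short rolling baseline. The sketch below flags samples that sit far from the mean of the preceding window. The window length, the threshold of three standard deviations, and the sample latency values are all illustrative assumptions, not recommended operating values.

```python
from statistics import mean, stdev


def kpi_deviations(samples, window=5, z_threshold=3.0):
    """Return indices of samples that deviate strongly from the prior window."""
    flagged = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all is a deviation.
            if samples[i] != mu:
                flagged.append(i)
            continue
        if abs(samples[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical latency readings in milliseconds, with one obvious spike.
latency_ms = [20, 21, 19, 20, 22, 21, 20, 95, 21, 20]
spikes = kpi_deviations(latency_ms)
```

Note that the spike itself inflates the baseline for the samples that follow it, so a real monitoring pipeline would usually exclude flagged samples from later baselines or use a more robust statistic.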

Diagnostic Apparatus and Analytical Instrumentation

Intricate diagnostic apparatus undergirds effective fault remediation. Protocol analyzers illuminate signaling trajectories, while network management platforms delineate topological stress points. Virtualization dashboards provide synoptic views of node comportment under dynamic load conditions. The ability to synthesize these data streams into coherent causal narratives is paramount; a surge in session establishment failures may denote control plane saturation, whereas attenuation in user plane throughput can presage resource exhaustion or misrouted traffic.
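The two symptom-to-cause associations named above can be written down as a tiny rule table, which is a useful habit when rehearsing exam scenarios. The following sketch is only that: the function name, the input measures, and both thresholds are hypothetical, and the returned strings are hypotheses to investigate rather than confirmed diagnoses.

```python
def candidate_causes(setup_failure_rate, throughput_ratio,
                     failure_limit=0.05, throughput_floor=0.8):
    """Map two observed symptoms to the hypotheses suggested in the text.

    setup_failure_rate: fraction of session establishment attempts that fail.
    throughput_ratio:   current user plane throughput divided by its baseline.
    """
    causes = []
    if setup_failure_rate > failure_limit:
        causes.append("control plane saturation")
    if throughput_ratio < throughput_floor:
        causes.append("user plane resource exhaustion or misrouted traffic")
    return causes


healthy = candidate_causes(setup_failure_rate=0.01, throughput_ratio=0.97)
signaling_storm = candidate_causes(setup_failure_rate=0.20, throughput_ratio=0.95)
both = candidate_causes(setup_failure_rate=0.20, throughput_ratio=0.50)
```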

Methodical Troubleshooting and Node Interdependence

Structured troubleshooting transcends mere reactive intervention; it is a cerebral methodology. It encompasses meticulous data aggregation, isolation of affected nodes, hypothesis validation, and the execution of corrective stratagems. Scenario-driven rehearsal inculcates analytical rigor, enhancing both operational dexterity and examination preparedness. Awareness of the intricate interdependencies among nodes and hierarchical strata is crucial, as perturbations in one segment may reverberate throughout the network lattice.

Proactive Vigilance and Predictive Prognostication

Proactivity in monitoring metamorphoses fault management from a remedial practice into a prescient endeavor. Virtualized nodes, dynamic resource orchestration, and automated analytic engines enable anticipatory interventions that circumvent service degradation. Understanding the interplay of these mechanisms allows practitioners to invoke automated remediation protocols before transient anomalies evolve into critical failures, cultivating a resilient and self-adaptive network environment.

Security-Infused Fault Recognition

Network aberrations do not always arise from benign causes; security intrusions often masquerade as performance anomalies. Unauthorized ingress, misaligned security policies, or compromised nodes may trigger alarms that mimic conventional faults. Cognizance of this interplay between cybersecurity and fault management ensures that mitigation strategies preserve operational integrity while fortifying defense postures.

Archival Discipline and Knowledge Codification

Documentation is not a perfunctory task; it is an intellectual repository of experiential learning. Recording the genesis of alarms, the diagnostic reasoning employed, corrective measures instituted, and resultant outcomes creates a compendium of recurring scenarios. Such archival discipline enhances future troubleshooting acuity and provides a reference scaffold for complex operational contingencies.

The Synthesis of Analytical Acumen and Operational Dexterity

Fault management in cloud packet cores is not a rote exercise but an intricate synthesis of analytical reasoning, tool mastery, and systematic methodology. By integrating KPI exegesis, alarm interpretation, diagnostic instrumentation, and proactive surveillance, network custodians optimize service reliability. This cerebral orchestration fosters a network ecosystem that is both robust against perturbations and agile in resource adaptation.

Optimization Paradigms in Cloud Packet Core Networks

Optimization within cloud packet core networks necessitates a symphonic understanding of resource orchestration. Network architects must navigate the labyrinthine pathways of load distribution, dynamic resource provisioning, session routing, and traffic prioritization to achieve peak efficiency. The nuanced interplay between throughput maximization and latency minimization requires a perspicacious comprehension of algorithmic traffic modulation and predictive congestion management. Latency jitter, often imperceptible in sporadic trials, can cascade into catastrophic service degradation if not preemptively mitigated through intelligent routing heuristics.

Dynamic resource allocation forms the backbone of optimized cloud packet cores, wherein computational, storage, and network resources are fluidly apportioned in response to real-time demand fluctuations. The confluence of proactive analytics and reactive adjustments ensures that ephemeral spikes do not compromise Quality of Service. Load balancing extends beyond mere redistribution, encompassing predictive mechanisms that leverage historical traffic telemetry and machine learning to preempt congestion. In essence, optimization transforms network infrastructure from a static pipeline into a living, adaptive organism, attuned to its own fluctuating operational tempo.

Elastic Scaling and Virtualized Node Management

Scaling within cloud packet cores transcends rudimentary capacity augmentation, evolving into a finely tuned orchestration of virtualized entities. Network nodes, once rigidly fixed in topology, now inhabit a virtualized landscape where instantiation, migration, and decommissioning occur fluidly. Orchestration platforms choreograph this ballet, responding to traffic surges with anticipatory instantiations while retracting dormant nodes to conserve resources. The art of scaling mandates a meticulous comprehension of trigger thresholds, hysteresis parameters, and orchestration workflows that prevent oscillatory instability in node deployment.
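The role of hysteresis is easiest to see in a small simulation. In the sketch below, a node is added only when per-node load exceeds a high threshold and removed only when it falls below a distinctly lower one; the gap between the two is the hysteresis band. The thresholds, the one-node-per-step policy, and the load figures (expressed in units of one node's capacity) are assumptions chosen for illustration.

```python
def next_node_count(current, load_per_node,
                    scale_out_at=0.80, scale_in_at=0.40,
                    min_nodes=1, max_nodes=10):
    """Decide the node count for the next interval using two thresholds."""
    if load_per_node > scale_out_at and current < max_nodes:
        return current + 1
    if load_per_node < scale_in_at and current > min_nodes:
        return current - 1
    # Inside the hysteresis band: leave the deployment unchanged.
    return current


def simulate(total_loads, start_nodes=2):
    """Replay a sequence of total load values and record the node count."""
    nodes = start_nodes
    history = []
    for total in total_loads:
        nodes = next_node_count(nodes, total / nodes)
        history.append(nodes)
    return history


# Load rises to 1.8 node-capacities, holds, then falls back to 1.0.
history = simulate([1.0, 1.8, 1.8, 1.8, 1.0])
```

With a single threshold of 0.80 used for both directions, the same load of 1.8 would bounce between two nodes (0.90 per node, scale out) and three nodes (0.60 per node, scale in) on every interval, which is the oscillatory instability the two-threshold design avoids.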

Virtualization enables an unprecedented granularity of control. Nodes may be ephemeral, yet they carry the full functional gravitas of their physical counterparts. Candidates must internalize the subtle implications of scaling strategies, such as the risk of resource contention during concurrent instantiation or the latency penalty associated with inter-zone migrations. Beyond mere operational pragmatism, scaling embodies a philosophical shift in network architecture: the capacity to mold infrastructure in real time according to ephemeral demand patterns, achieving a harmony between efficiency, resilience, and agility.

Traffic Prioritization and Session Routing Intricacies

Effective session routing within cloud packet cores is an intricate symphony of decision-making, informed by a multitude of parameters ranging from subscriber profile to application criticality. Traffic prioritization serves as the fulcrum upon which Quality of Service pivots, dictating which packets traverse the network with minimal impedance. Sophisticated prioritization algorithms employ multifactorial metrics, weighing latency sensitivity, bandwidth consumption, and service level agreement mandates. Subtle misalignments in prioritization policies can yield disproportionate network inefficiencies, underscoring the necessity for perspicacious traffic engineering.
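A strict-priority queue is the simplest concrete form of prioritization and a helpful reference point for more elaborate schemes. The sketch below uses Python's `heapq` module; the class name and the convention that a lower number means higher priority are choices made for this example only, and the traffic labels are invented.

```python
import heapq
import itertools


class PriorityScheduler:
    """Strict-priority queue: lower priority number is served first."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps FIFO order per class

    def enqueue(self, priority, packet):
        heapq.heappush(self._heap, (priority, next(self._order), packet))

    def dequeue(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


scheduler = PriorityScheduler()
scheduler.enqueue(9, "web-1")
scheduler.enqueue(2, "voice-1")
scheduler.enqueue(9, "web-2")
scheduler.enqueue(2, "voice-2")

served = [scheduler.dequeue() for _ in range(len(scheduler))]
```

Strict priority can starve low-priority traffic under sustained load, which is one way a small misalignment in prioritization policy produces a disproportionate effect; weighted schemes trade some latency for fairness.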

Session routing, in tandem, orchestrates the spatial and temporal distribution of user flows across network nodes. Techniques such as least-loaded path selection, weighted path assignment, and adaptive rerouting coalesce to maintain equilibrium in network utilization. The dynamic nature of routing in virtualized environments introduces complexity, as nodes may be instantiated or decommissioned on-demand. Candidates must grasp how ephemeral node availability intersects with routing stability, ensuring that session continuity is maintained even amidst volatile infrastructure states.
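Two of the techniques named above, least-loaded selection and weighted assignment, can be sketched in a few lines. The node names, utilization figures, and weights below are hypothetical, and the functions operate on plain dictionaries rather than on any real orchestration API.

```python
import random


def least_loaded(utilization):
    """Pick the node with the lowest current utilization (0.0 to 1.0)."""
    return min(utilization, key=utilization.get)


def weighted_choice(weights, rng):
    """Pick a node at random, in proportion to its configured weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


utilization = {"upf-a": 0.7, "upf-b": 0.3, "upf-c": 0.5}
target = least_loaded(utilization)

# A node that is being drained can be given weight 0 so it gets no new sessions.
rng = random.Random(42)
picks = {weighted_choice({"upf-a": 3, "upf-b": 1, "upf-c": 0}, rng)
         for _ in range(200)}
```

Setting a weight to zero before decommissioning a node is one way to reconcile on-demand node removal with routing stability: existing sessions continue while no new ones are steered to it.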

Security Imperatives in Dynamic Environments

Security in cloud packet core networks is not a monolithic bulwark but an ever-evolving tapestry woven into optimization and scaling. Authentication frameworks, encryption schemas, access control matrices, and secure orchestration protocols converge to safeguard subscriber data and network integrity. The ephemeral nature of virtualized nodes introduces a unique attack surface, wherein nodes may exist briefly yet carry sensitive payloads or control plane responsibilities. An insecure configuration applied during node instantiation or migration is replicated with every new instance, spreading the same vulnerability across the network fabric.

Network architects must internalize the cascading consequences of misapplied security policies. Access control inconsistencies, weak encryption protocols, or lax orchestration authentication can compromise the entire system, particularly during periods of rapid scaling. Security must therefore be tightly interwoven with operational procedures, ensuring that each virtualized instance inherits the full spectrum of protections automatically. Beyond technical measures, security awareness encompasses anticipatory threat modeling, scenario-based drills, and vigilant telemetry monitoring, forging a proactive posture against emergent risks.

Energy-Aware Orchestration Strategies

Energy efficiency emerges as a compelling frontier in cloud packet core management, intertwining economic prudence with ecological stewardship. Dynamically powering nodes up or down based on real-time demand diminishes operational expenditure while curtailing environmental impact. Orchestration strategies that integrate energy consumption metrics with load balancing and scaling decisions create a multi-dimensional optimization problem, where performance, cost, and sustainability coalesce.

The challenge lies in harmonizing service continuity with energy curtailment. Abrupt node deactivation may impair user sessions, while overly conservative approaches erode potential energy savings. Candidates must internalize the principles of energy-aware orchestration, encompassing predictive load forecasting, temporal load smoothing, and intelligent resource consolidation. The judicious application of these strategies transforms cloud packet cores into not only agile and secure networks but also environmentally conscientious infrastructures.
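The consolidation trade-off described above can be made concrete with a small sketch. It assumes identical nodes, sessions that can be moved freely between them, and a hypothetical utilization cap (`max_util`) that preserves headroom; all names and numbers are illustrative.

```python
import math


def min_nodes_needed(total_sessions, capacity, max_util=0.7, min_nodes=1):
    """Smallest node count that keeps every node at or below max_util."""
    usable_per_node = capacity * max_util
    return max(min_nodes, math.ceil(total_sessions / usable_per_node))


def plan_power_down(loads, capacity, max_util=0.7, min_nodes=1):
    """Return the names of nodes that could be drained and powered down.

    loads maps node name -> active sessions. The least-loaded nodes are
    drained first so that the fewest sessions have to be migrated.
    """
    needed = min_nodes_needed(sum(loads.values()), capacity, max_util, min_nodes)
    surplus = len(loads) - needed
    if surplus <= 0:
        return []
    return sorted(loads, key=loads.get)[:surplus]


night_loads = {"node-a": 50, "node-b": 10, "node-c": 20}
print(plan_power_down(night_loads, capacity=100))
```

Lowering `max_util` makes the plan more conservative (fewer nodes powered down, more headroom for surges), while raising it saves more energy at the cost of less slack, which is exactly the tension between continuity and savings.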

Scenario-Based Practice for Conceptual Synthesis

Practical mastery of optimization, scaling, and security emerges most effectively through scenario-based exercises. Candidates benefit from iterative engagement with simulated network conditions, adjusting parameters, orchestrating scaling decisions, and applying security policies under variable traffic loads. Such exercises illuminate the interdependencies among performance metrics, revealing subtle trade-offs that theoretical study alone cannot convey. The documentation of outcomes fosters reflective learning, consolidating an understanding of cause-and-effect relationships within complex systems.

Scenario-based practice also cultivates intuition regarding emergent phenomena, such as congestion propagation, orchestration race conditions, or security-policy conflicts. Candidates develop a nuanced perception of network behavior, recognizing patterns that indicate imminent instability or inefficiency. By embedding these experiences into cognitive frameworks, practitioners acquire not merely procedural knowledge but strategic foresight, enabling adaptive responses in real-world operational environments.

Interplay Between Latency, Throughput, and Resource Utilization

Latency, throughput, and resource utilization form a triad of metrics whose optimization requires delicate balancing. Excessive emphasis on throughput may engender latency spikes, while stringent latency constraints can underutilize available resources. Cloud packet core networks demand a holistic approach, wherein traffic shaping, resource scheduling, and prioritization policies are harmonized to achieve maximal network efficacy. Candidates must apprehend the emergent properties of network performance, recognizing that localized adjustments may have global repercussions across the virtualized fabric.
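One way to see why pushing utilization too high causes latency spikes is the textbook M/M/1 queue, where the mean time a packet spends in the system is 1 / (service rate - arrival rate). The sketch below uses that formula purely as a simplified model; real packet core nodes are not single M/M/1 queues, and the rates are made-up figures.

```python
def mm1_mean_delay(service_rate, arrival_rate):
    """Mean time in system (seconds) for an M/M/1 queue.

    Rates are in packets per second. The queue is only stable while the
    arrival rate stays below the service rate.
    """
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable when arrival rate >= service rate")
    return 1.0 / (service_rate - arrival_rate)


SERVICE_RATE = 1000.0  # hypothetical node capacity, packets per second

for utilization in (0.5, 0.9, 0.99):
    delay_ms = mm1_mean_delay(SERVICE_RATE, SERVICE_RATE * utilization) * 1000
    print(f"utilization {utilization:.0%}: mean delay {delay_ms:.1f} ms")
```

In this model, delay rises slowly at moderate utilization and very steeply as utilization approaches 100%, so the last few percent of throughput are bought with a disproportionate latency cost.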

Predictive analytics and machine learning offer potent tools for navigating this complex interplay. By modeling traffic patterns, node availability, and session demands, orchestration platforms can anticipate stress points and preemptively redistribute loads. This foresight mitigates the risk of cascading failures and ensures that service quality remains consistent under fluctuating conditions. Mastery of these principles requires not only technical acumen but a systems-level mindset attuned to the dynamic choreography of modern cloud packet cores.
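As a deliberately simple stand-in for the predictive models mentioned above, the sketch below smooths recent session counts with an exponentially weighted moving average (EWMA) and flags when the smoothed value exceeds a headroom threshold. Production platforms would likely use richer models; the function names, `alpha`, and `headroom` are assumptions made for illustration.

```python
def ewma_forecast(samples, alpha=0.5):
    """Exponentially weighted moving average of the samples.

    Higher alpha reacts faster to recent values; lower alpha smooths more.
    """
    if not samples:
        raise ValueError("need at least one sample")
    level = samples[0]
    for value in samples[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


def should_scale_ahead(samples, capacity, headroom=0.8, alpha=0.5):
    """True when the smoothed load exceeds the chosen share of capacity."""
    return ewma_forecast(samples, alpha) > headroom * capacity


recent_sessions = [400, 520, 610, 700, 820]  # made-up measurements
print(ewma_forecast(recent_sessions))
print(should_scale_ahead(recent_sessions, capacity=800))
```

Note that an EWMA lags behind a steadily rising trend, so a real predictor would usually add a trend or seasonality term; the point here is only the pattern of acting on a forecast rather than on the latest raw sample.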

Adaptive Orchestration Workflows

Adaptive orchestration combines optimization, scaling, and security into cohesive operational procedures. Orchestration workflows govern the lifecycle of virtualized nodes, redistribute load, enforce security policies, and align energy consumption with demand. The sophistication of these workflows determines the network’s resilience and agility, turning reactive administration into anticipatory management.

Candidates must comprehend the architecture of orchestration engines, including policy enforcement mechanisms, dependency resolution, and failure-handling routines. Adaptive workflows are predicated on continuous feedback loops, monitoring system metrics and dynamically adjusting node behavior. This iterative control structure ensures that cloud packet cores remain robust against unpredictable traffic surges, security threats, and resource constraints. Mastery of adaptive orchestration represents the pinnacle of operational proficiency in contemporary network management.
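The feedback loop described above can be sketched as a threshold controller with hysteresis: scale out above one utilization level, scale in below a lower one, and do nothing in between so the system does not oscillate. The thresholds, limits, and function names are hypothetical and not taken from any specific orchestration engine.

```python
def scaling_decision(utilization, replicas, scale_out_at=0.8, scale_in_at=0.4,
                     min_replicas=1, max_replicas=10):
    """Return the new replica count for one iteration of the control loop."""
    if utilization > scale_out_at and replicas < max_replicas:
        return replicas + 1
    if utilization < scale_in_at and replicas > min_replicas:
        return replicas - 1
    return replicas  # inside the dead band: leave the system alone


def run_loop(offered_load, per_replica_capacity, replicas=1):
    """Feed a series of load measurements through the controller."""
    history = []
    for load in offered_load:
        utilization = load / (replicas * per_replica_capacity)
        replicas = scaling_decision(utilization, replicas)
        history.append(replicas)
    return history


# Made-up load samples: a surge followed by a quiet period.
print(run_loop([50, 90, 170, 170, 60, 60], per_replica_capacity=100))
# prints [1, 2, 3, 3, 2, 1]
```

The gap between `scale_out_at` and `scale_in_at` is the design choice worth noticing: if the two thresholds were equal, small fluctuations around that value would trigger constant instantiation and teardown.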

Navigating the Labyrinth of Cloud Packet Core Architecture

Cloud packet core architecture is a layered arrangement of nodes, virtualized functions, and orchestrated pathways, and it demands more than superficial familiarity. Each element, whether the control plane, the user plane, or a policy management node, depends on the others to deliver intelligence, redundancy, and agility. Mastery of these constructs requires not rote memorization but a clear understanding of how the components interact.

Operators must cultivate an ability to envision traffic flux across the network, predicting congestion points and potential bottlenecks with anticipatory acuity. Architectural diagrams, while schematic, conceal labyrinthine dependencies that manifest only under stress, surge, or partial failure. Analytical reasoning thus becomes an indispensable tool, allowing candidates to navigate the convolutions of network design with both foresight and strategic improvisation.

Cognitive Approaches to Scenario-Based Reasoning

Scenario-based reasoning elevates preparation from theoretical recitation to pragmatic application. Examiners often contrive situations simulating node failure, abrupt mobility handovers, or anomalous throughput behavior. Candidates confronted with these contingencies must demonstrate adaptive cognition, unraveling multi-tiered complications with methodical precision.

Analytical foresight entails hypothesizing potential cascading effects, evaluating latency implications, and discerning subtle discrepancies in session continuity. This is not a mere academic exercise; rather, it cultivates a mental schema capable of predicting network behavior under duress. Candidates who integrate pattern recognition, probabilistic reasoning, and contextual awareness are best poised to dissect the underlying causality of complex events.

Embedding Case Study Insights into Operational Proficiency

Case studies are more than illustrative anecdotes; they constitute a repository of tacit knowledge, bridging the chasm between textbook comprehension and operational dexterity. Exam preparation enriched by these narratives equips candidates with a repertoire of strategic responses, whether orchestrating node migrations, recalibrating policy frameworks, or optimizing subscriber throughput.

These documented deployments reveal subtle interdependencies often invisible in abstract diagrams. Candidates can extrapolate lessons on redundancy design, fault isolation, and multi-domain orchestration, enhancing their ability to propose cogent, resilient solutions during high-stakes scenarios. The cognitive synergy between study and practice engenders both confidence and dexterity, fostering a mindset oriented toward holistic network stewardship.

Strategizing Temporal Resource Allocation

Time allocation is central to both exam performance and network management. Candidates should devote the most attention to high-yield domains, adjusting the intensity of study according to how comfortable they already are with the architectural, procedural, and operational topics. Time management in the exam mirrors traffic orchestration within the network: latency, throughput, and prioritization must all be balanced to achieve optimal performance.

A methodical cadence—alternating between deep-dive analysis, scenario simulation, and metric interpretation—enhances cognitive elasticity. Candidates who cultivate temporal awareness while engaging with complex topologies gain a meta-perspective, discerning not only how components function in isolation but also how they converge to yield emergent behaviors across distributed systems.

Analytical Decoding of Performance Metrics

Performance metrics are the language in which a network reports its health, conveying details that casual observation misses. Interpreting them means translating numerical indicators into an account of what the network is actually doing. Through careful analysis, candidates can detect latent congestion, gradual degradation in packet processing, or inefficiencies in policy enforcement that might otherwise escape attention.

This analytical acumen extends beyond static interpretation; it encompasses trend extrapolation, predictive anomaly detection, and correlation of disparate data streams. Candidates who cultivate this interpretive agility can anticipate systemic stress points, propose corrective interventions, and validate optimizations with empirical rigor, rendering them formidable problem solvers within both examination and operational milieus.
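A basic form of the anomaly detection mentioned above is a rolling z-score: each new sample is compared with the mean and standard deviation of the window that precedes it. The sketch below uses only the Python standard library; the window size, threshold, and latency figures are arbitrary illustrative choices.

```python
import statistics


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate strongly from the preceding window."""
    anomalies = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mean = statistics.fmean(baseline)
        stdev = statistics.pstdev(baseline)
        if stdev == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            if samples[i] != mean:
                anomalies.append(i)
            continue
        if abs(samples[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies


latency_ms = [10, 11, 10, 12, 11, 10, 45, 11]  # made-up measurements
print(find_anomalies(latency_ms))  # prints [6]
```

One limitation is visible in the example: once the spike enters the baseline window it inflates the standard deviation, so samples immediately after an anomaly are judged more leniently. Median-based statistics are a common way to reduce that effect.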

Immersive Simulation and Lab-Based Experiential Learning

Hands-on simulation constitutes the crucible of experiential learning, transforming theoretical abstraction into tactile familiarity. Engaging with lab environments, whether through node instantiation, session orchestration, or policy manipulation, engenders a kinesthetic understanding of network dynamics. Candidates become attuned to the subtle interplays of scaling, load balancing, and fault recovery.

Within these controlled microcosms, errors evolve into instructive phenomena rather than sources of frustration. Iterative experimentation fosters procedural fluency, reinforcing both retention and strategic reasoning. The cognitive scaffolding developed through immersive practice allows candidates to navigate unfamiliar exam scenarios with confidence, drawing upon internalized operational logic rather than superficial memorization.

Navigating Fault Management and Resilience Engineering

Fault management is not merely a technical requisite; it is a cognitive discipline, demanding anticipatory foresight and judicious prioritization. Candidates must understand the taxonomy of network failures, from transient session interruptions to systemic orchestration anomalies, and evaluate remediation strategies with both efficacy and expediency.

Resilience engineering extends this concept, emphasizing proactive configuration, redundancy optimization, and policy-driven mitigation. The interplay of recovery mechanisms, alarm interpretation, and contingency orchestration exemplifies the holistic mindset essential for high-stakes decision-making. Candidates who internalize these principles demonstrate not only technical proficiency but also strategic acumen, capable of mitigating cascading failures before they manifest in critical performance degradation.

Strategic Interpretation of Policy Enforcement Mechanisms

Policy enforcement in modern cloud packet core networks is a nuanced interplay of regulation, prioritization, and contextual adaptation. Candidates must comprehend the symbiotic relationships between subscriber profiles, traffic categorization, and dynamic rule application. Misalignment of policy rules can induce unintended throttling, suboptimal routing, or service degradation.

A strategic approach necessitates analytical foresight, enabling candidates to simulate policy interactions and predict their operational ramifications. This skill is particularly salient in exam scenarios, where nuanced trade-offs between quality of service, resource allocation, and network security are tested. Mastery of policy enforcement transcends mere configuration, requiring a conceptual synthesis of operational strategy, systemic foresight, and tactical execution.
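Simulating policy interactions can start with something as small as a first-match rule table. The sketch below shows how rule ordering alone can cause unintended throttling; the `Rule` fields, tier names, and rate limits are hypothetical and do not reflect any particular product's policy model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int                    # lower value is evaluated first
    subscriber_tier: Optional[str]   # None matches any tier
    traffic_class: Optional[str]     # None matches any traffic class
    max_mbps: int


def select_rule(rules, tier, traffic_class):
    """Return the first rule, in priority order, that matches the flow."""
    for rule in sorted(rules, key=lambda r: r.priority):
        tier_ok = rule.subscriber_tier in (None, tier)
        class_ok = rule.traffic_class in (None, traffic_class)
        if tier_ok and class_ok:
            return rule
    return None


# Misaligned ordering: the generic video cap is evaluated before the gold rule.
misaligned = [Rule(10, None, "video", 5), Rule(20, "gold", None, 100)]
# Corrected ordering: the gold rule takes precedence.
corrected = [Rule(5, "gold", None, 100), Rule(10, None, "video", 5)]

print(select_rule(misaligned, "gold", "video").max_mbps)  # prints 5
print(select_rule(corrected, "gold", "video").max_mbps)   # prints 100
```

Both rule sets contain the same two rules; only the priorities differ. Running candidate flows through a table like this before deployment is a cheap way to predict which rule will actually win.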

Integrating Security Paradigms into Holistic Network Management

Security topics within the scope of the 4A0-M05 exam extend beyond conventional firewalls and encryption protocols, encompassing proactive threat anticipation, anomaly detection, and policy-aligned mitigation. Candidates must understand the relationship between performance optimization and security resilience, ensuring that latency minimization and throughput enhancement do not compromise the network’s integrity.

Understanding attack vectors, authentication orchestration, and access control intricacies is imperative. Candidates who develop a security-conscious mindset are able to anticipate vulnerabilities, engineer resilient architectures, and adaptively respond to emergent threats. This perspective transforms security from a reactive obligation into an integral component of strategic network management.

Cultivating Cognitive Resilience and Exam Adaptability

Examination success is as much a test of cognitive resilience as technical knowledge. Candidates encounter complex, multi-step problems under temporal constraints, necessitating calm deliberation, iterative reasoning, and adaptive strategy. Stress management techniques—focused breathing, deliberate pacing, and scenario visualization—fortify the candidate against cognitive fatigue and performance erosion.

Adaptability is paramount; the ability to pivot between conceptual frameworks, recalibrate assumptions, and integrate cross-domain knowledge distinguishes exceptional candidates from merely proficient ones. By cultivating mental flexibility, candidates can navigate unforeseen scenarios, resolve ambiguities, and formulate coherent solutions with confidence and precision.

Optimizing Subscriber Management and Quality Assurance

Subscriber management in cloud packet core networks is a multidimensional construct, intertwining identification, authentication, policy enforcement, and performance assurance. Candidates must reconcile competing priorities, ensuring seamless service delivery while maintaining system integrity. Understanding session lifecycles, mobility patterns, and data throughput characteristics enables candidates to propose optimization strategies that balance efficiency, scalability, and quality of experience.

Quality assurance extends beyond reactive monitoring; it encompasses anticipatory analysis, trend detection, and proactive remediation. Candidates proficient in these domains can interpret subscriber behavior patterns, identify systemic inefficiencies, and recommend policy adjustments that enhance operational reliability and user satisfaction.

Holistic Comprehension of Network Orchestration and Scaling