Exam Code: CCD-410

Exam Name: Cloudera Certified Developer for Apache Hadoop (CCDH)

Certification Provider: Cloudera

Corresponding Certification: CCDH

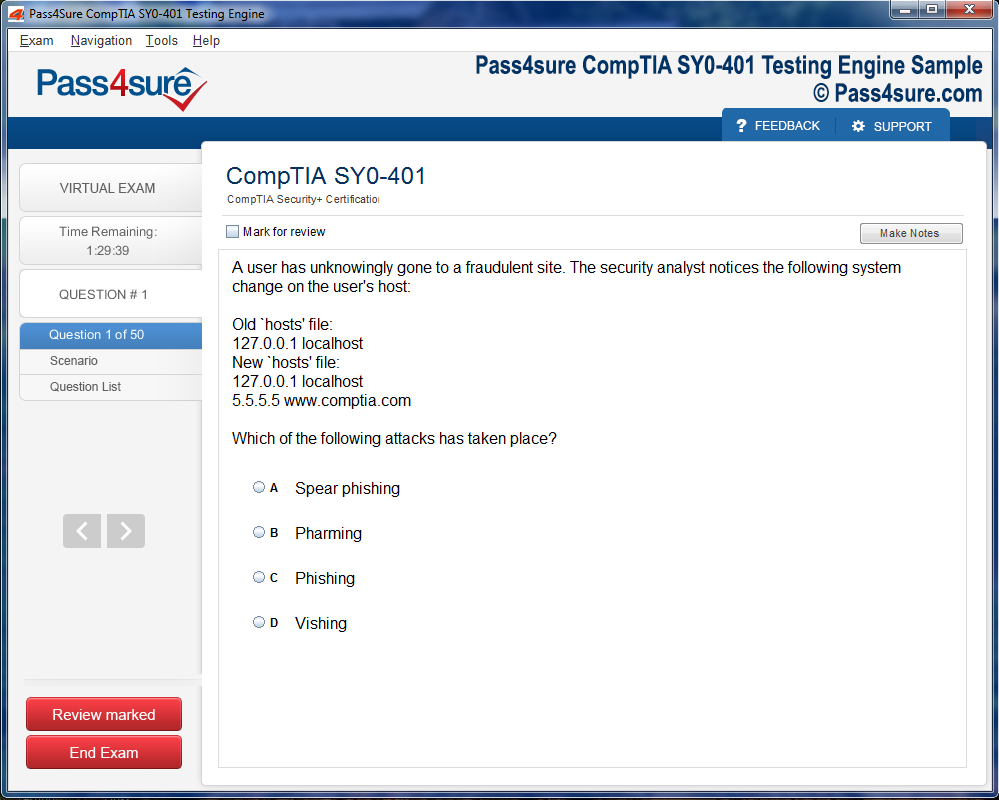

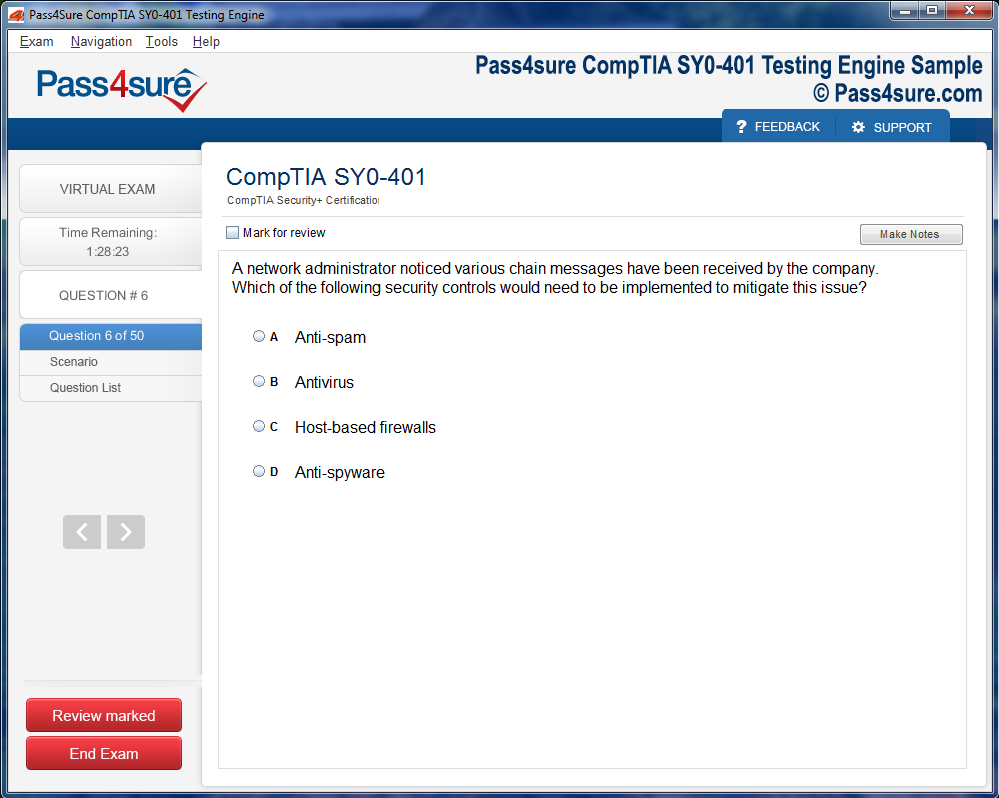

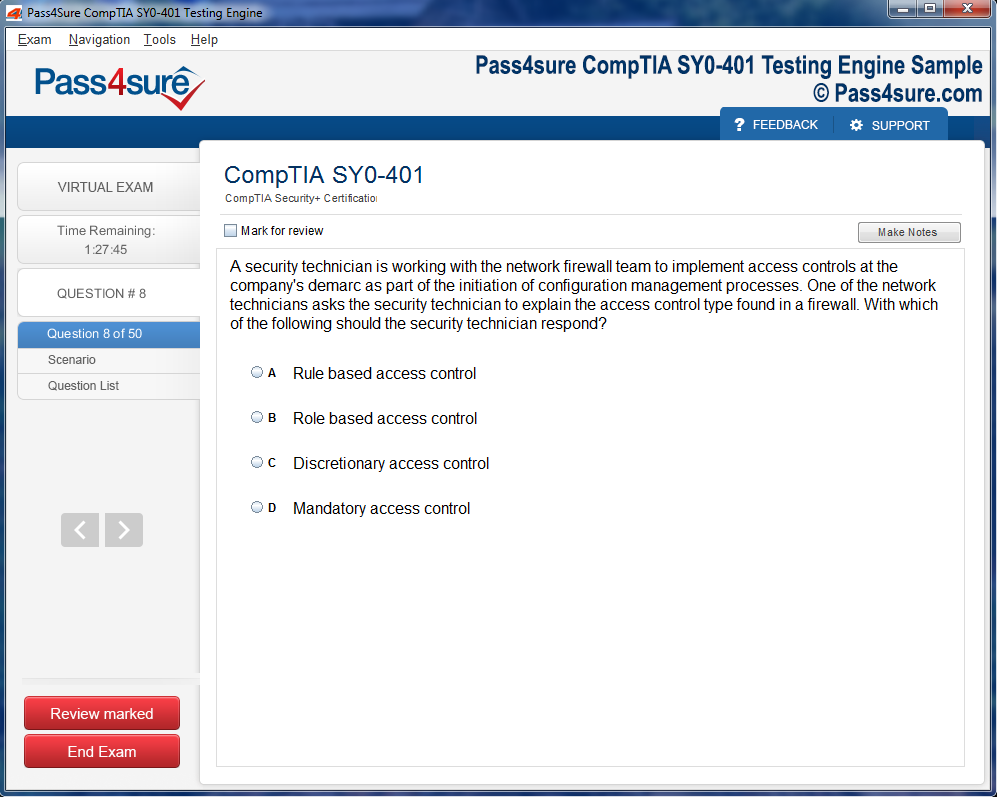

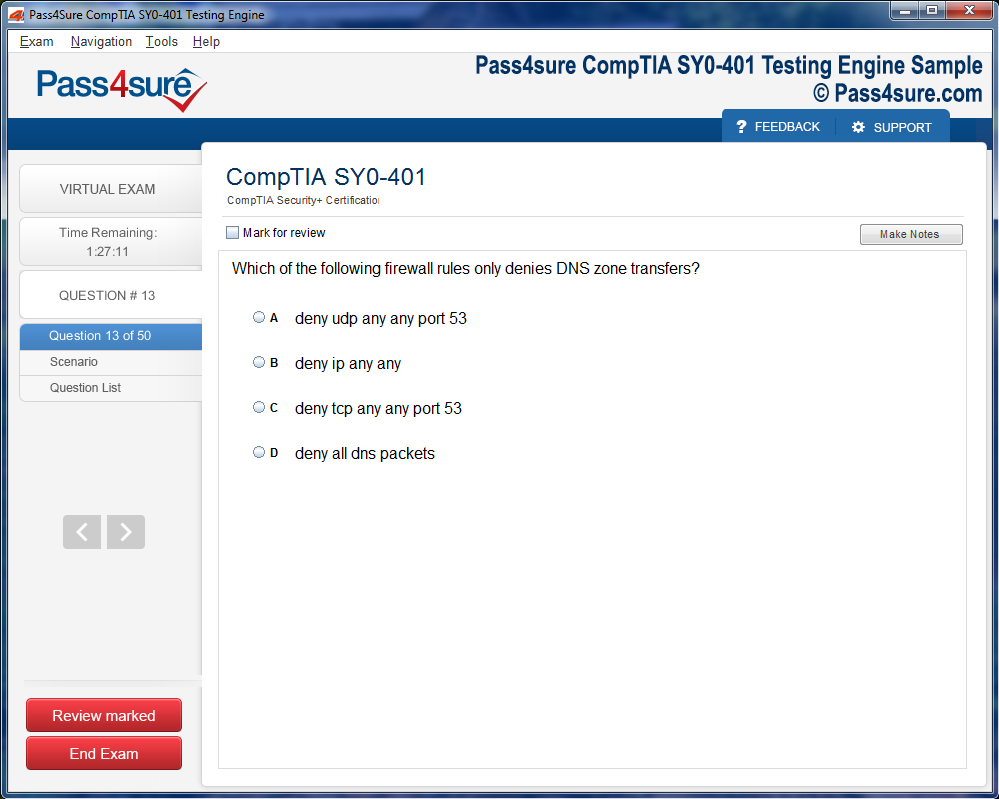

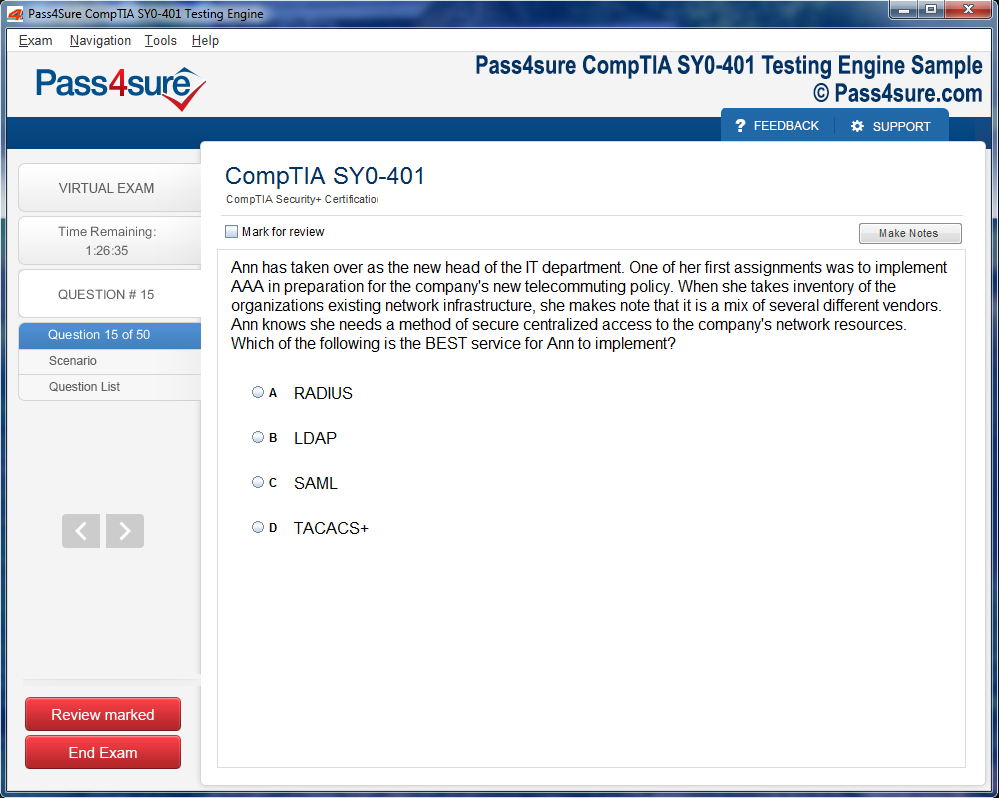

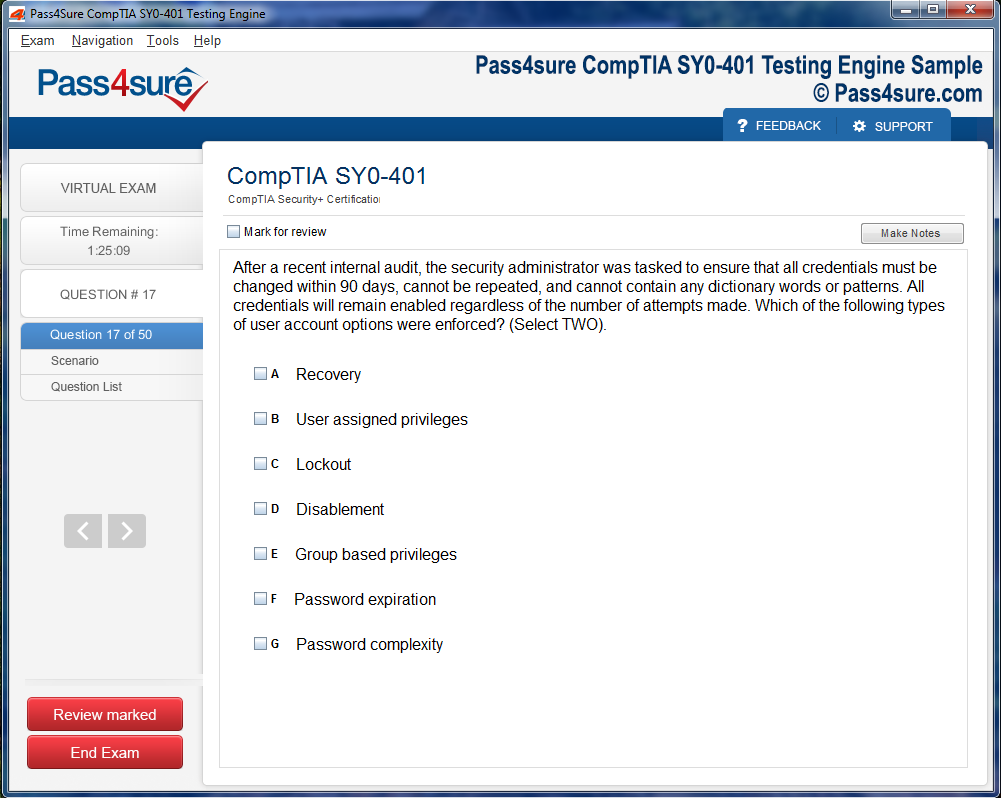

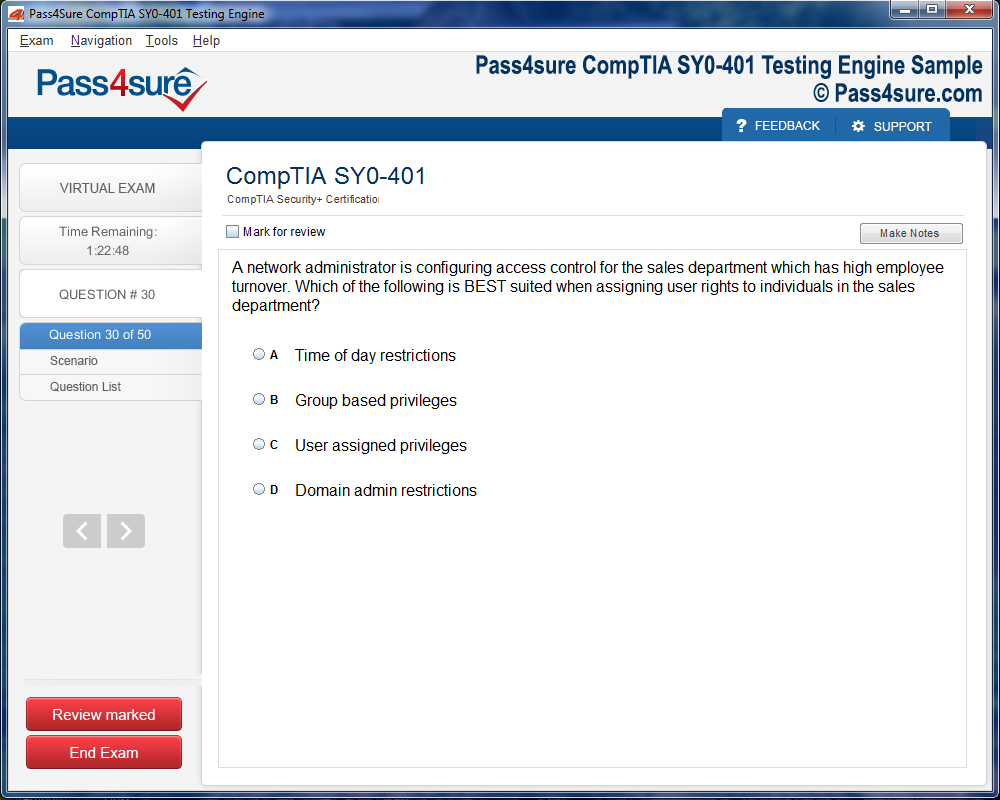

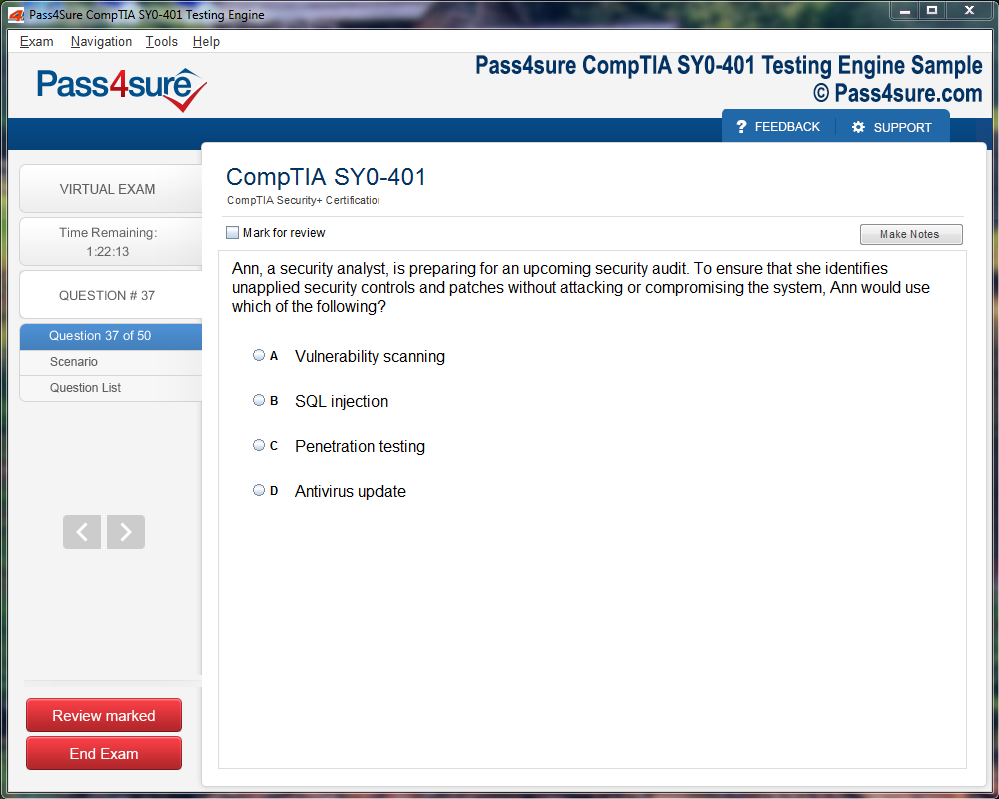

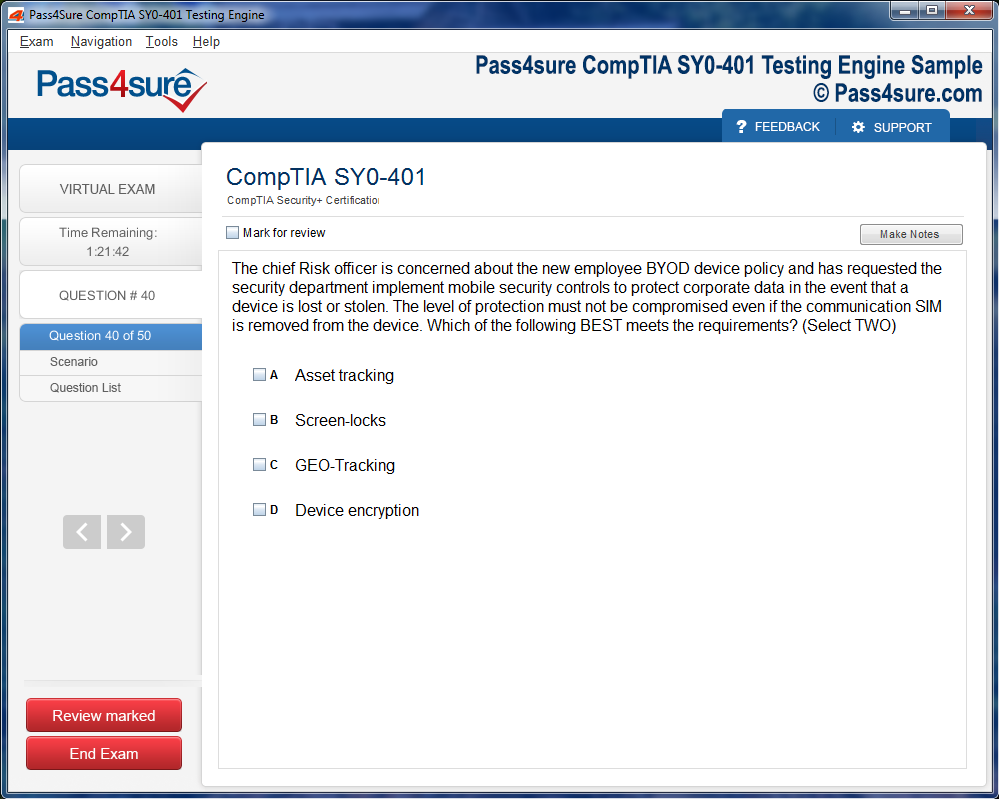

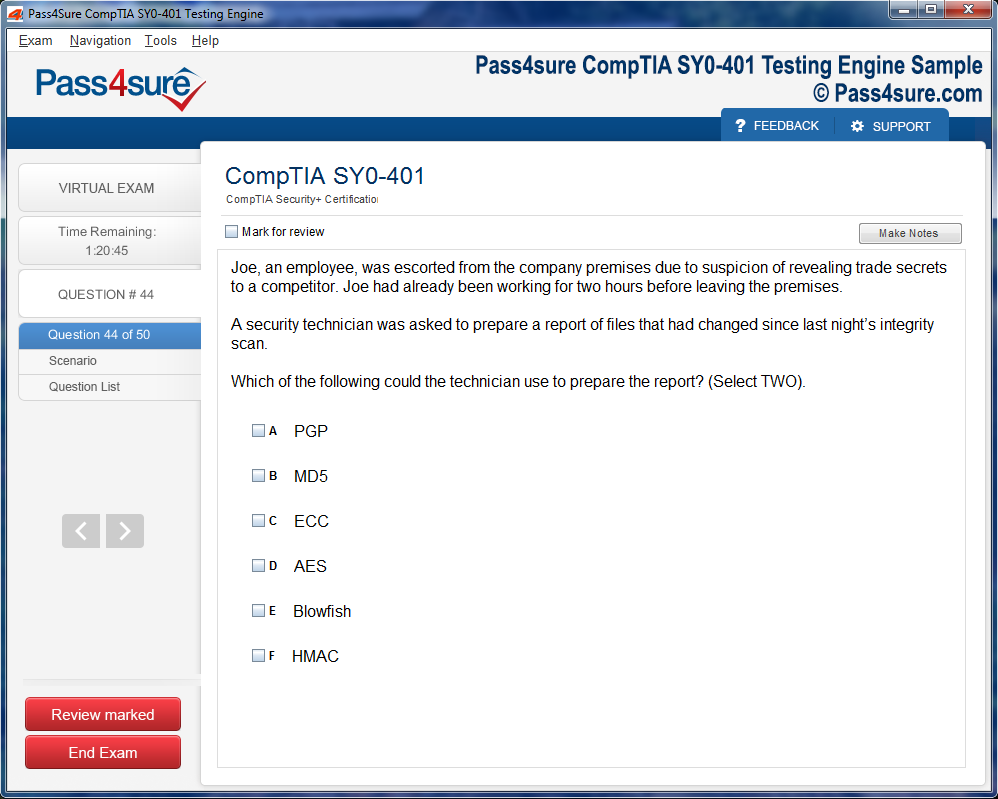

Product Screenshots

Frequently Asked Questions

How does your testing engine work?

Once downloaded and installed on your PC, you can practise test questions and review your questions & answers using two different options: 'practice exam' and 'virtual exam'. Virtual Exam - test yourself with exam questions with a time limit, as if you are taking exams in the Prometric or VUE testing centre. Practice exam - review exam questions one by one, see correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to Member's Area where you can login and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products have a validity of 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions, or updates and changes by our editing team, will be automatically downloaded onto your computer to make sure that you get the latest exam prep materials during those 90 days.

Can I renew my product when it's expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes in the actual pool of questions by different vendors. As soon as we know about a change in the exam question pool, we try our best to update the products as fast as possible.

How many computers can I download Pass4sure software on?

You can download the Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB of available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine is supported on Windows. Android and iOS software is currently under development.

Mastering CCD-410: Your Guide to Cloudera Certified Developer Certification

Venturing into the labyrinthine world of Cloudera development necessitates an embrace of complexity and nuance. The CCD-410 certification is not merely a credential; it is a crucible where one hones the dexterity required to navigate distributed architectures, orchestrate data pipelines, and cultivate an acumen for high-velocity, voluminous datasets. At its nucleus lies Hadoop, whose dual pillars of HDFS and MapReduce define the scaffolding upon which modern data ecosystems are constructed. HDFS ensures redundancy through replication and provides resilience against nodal failures, while MapReduce injects a functional programming paradigm into distributed computation, demanding both conceptual comprehension and tactical proficiency.
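
The replication point above comes down to simple arithmetic, sketched here in Python. The 128 MB block size and replication factor of 3 are assumed values chosen for illustration, and `hdfs_footprint` is a hypothetical helper, not part of any Hadoop API.

```python
import math

def hdfs_footprint(file_size_mb, block_size_mb=128, replication=3):
    """Return (number of blocks, total block replicas, raw MB stored)."""
    blocks = math.ceil(file_size_mb / block_size_mb)
    replicas = blocks * replication
    # The final block is not padded, so raw usage is size x replication.
    raw_mb = file_size_mb * replication
    return blocks, replicas, raw_mb

blocks, replicas, raw_mb = hdfs_footprint(1000)
print(blocks, replicas, raw_mb)
```

A 1000 MB file under these assumptions splits into 8 blocks, held as 24 block replicas, and consumes 3000 MB of raw cluster storage.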

Understanding Hadoop’s intricate mechanisms is an intellectual endeavor that extends beyond rudimentary familiarity. The interplay of block size optimization, replication strategies, and nodal allocation creates a dynamic environment where latency and throughput must be balanced with exacting precision. MapReduce further complicates this terrain with a multi-phase lifecycle, where mappers, combiners, and reducers form an interconnected choreography. The CCD-410 exam probes this understanding through scenarios that require nuanced judgment, challenging aspirants to reconcile theoretical knowledge with operational pragmatics.
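
The mapper, combiner, and reducer phases mentioned above can be imitated in a few lines of plain Python. This is a single-process sketch of the data flow only, not Hadoop's Java API; all function names are illustrative.

```python
from collections import Counter, defaultdict

def mapper(line):
    # Emit (word, 1) for every word in the input line.
    return [(word.lower(), 1) for word in line.split()]

def combiner(pairs):
    # Pre-sum counts on the map side to shrink what gets shuffled.
    local = Counter()
    for word, n in pairs:
        local[word] += n
    return list(local.items())

def shuffle(all_pairs):
    # Group values by key, as the framework does between map and reduce.
    grouped = defaultdict(list)
    for word, n in all_pairs:
        grouped[word].append(n)
    return grouped

def reducer(word, counts):
    return word, sum(counts)

splits = ["to be or not to be", "to do is to be"]
combined = [pair for s in splits for pair in combiner(mapper(s))]
result = dict(reducer(w, c) for w, c in shuffle(combined).items())
print(result)
```

Without the combiner the two splits would emit 11 pairs into the shuffle; with it they emit 8, which is the kind of saving that matters once the pairs cross the network.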

Navigating Cloudera’s Ecosystem

Cloudera’s ecosystem is a sprawling constellation of tools, each calibrated for specific functions yet synergistic in orchestration. Hive abstracts data interrogation into a familiar SQL-like syntax, translating declarative statements into execution plans that exploit MapReduce or Tez engines. Impala, by contrast, thrives on immediacy, delivering low-latency, interactive querying without launching batch jobs. Pig scripts distill complex transformations into succinct, readable instructions, while Spark leverages in-memory computation to speed up iterative operations. Mastery of CCD-410 entails discerning the optimal instrument for each task, appreciating not merely syntax but the contextual advantages and constraints of each framework.

Equally critical is an intimate familiarity with data serialization and storage paradigms. Developers must navigate an array of formats—text, CSV, JSON, Avro, ORC, Parquet—each bearing unique implications for performance, schema evolution, and storage efficiency. The judicious selection of a data format impacts not only execution speed but the maintainability and scalability of entire pipelines. Serialization frameworks like Avro ensure consistency across producers and consumers, a subtlety often woven into exam scenarios that test applied judgment rather than rote recall.

Orchestrating Workflows and Optimizing Performance

The orchestration of data pipelines is akin to conducting a symphony, where each task must perform in harmony with dependencies, conditional branches, and failure contingencies. Tools such as Oozie allow developers to choreograph these sequences with sophistication, ensuring resilience, fault tolerance, and scalability. The CCD-410 exam tests one’s ability to devise strategies for job scheduling, error handling, and dependency management, demanding both analytical acuity and experiential insight.
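
Dependency management of this kind reduces to ordering a directed acyclic graph. The sketch below uses Python's standard `graphlib` module (Python 3.9+) as a stand-in for a scheduler; the task names are hypothetical and nothing here is Oozie-specific.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical names).
dependencies = {
    "ingest": set(),
    "clean": {"ingest"},
    "aggregate": {"clean"},
    "export": {"aggregate"},
    "report": {"aggregate"},
}

# static_order() yields every task after all of its prerequisites.
order = list(TopologicalSorter(dependencies).static_order())
print(order)
```

`export` and `report` have no dependency on each other, so a real scheduler would be free to run them in parallel once `aggregate` finishes.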

Error detection, debugging, and performance tuning constitute a further dimension of professional dexterity. Distributed systems are inherently capricious, with node failures, task latency, and resource contention posing constant challenges. Proficiency involves interpreting logs, diagnosing bottlenecks, and adjusting parameters such as memory allocation, parallelism, and shuffle behavior. Memorization of configurations is insufficient; understanding the rationale behind each setting allows developers to reason through scenarios that mirror real-world exigencies, a skill the CCD-410 implicitly values.

Programming Paradigms for Distributed Systems

Programming languages form the backbone of practical mastery. Java underpins Hadoop, while Python and Scala dominate Spark environments. Beyond syntactic fluency, aspirants must internalize functional programming paradigms, lazy evaluation, and partitioning strategies to craft efficient, maintainable code. Pig scripting demands comprehension of dataflows and transformation optimizations, while Spark jobs necessitate judicious selection of actions and transformations. The CCD-410 rewards the ability to translate conceptual frameworks into robust implementations that navigate the vagaries of distributed processing with finesse.

Cognitive agility is indispensable. Exam scenarios often present multifaceted problems, requiring candidates to weigh trade-offs between performance, reliability, and maintainability. Decisions such as whether to pre-aggregate data in Hive or compute it dynamically in Spark test not just knowledge, but analytical foresight. Developing this evaluative mindset parallels the decision-making demands of enterprise-scale environments, where efficiency and correctness must coexist.

Experiential Learning and Practical Immersion

Immersive, hands-on experience is the fulcrum upon which theoretical understanding pivots into mastery. Constructing a personal Cloudera environment, experimenting with synthetic or real datasets, and executing end-to-end pipelines cultivates an intuitive grasp of system behavior. Running Spark jobs, tuning Hive queries, exploring Pig scripts, and simulating failure scenarios fosters a tactile understanding that reading alone cannot impart. The CCD-410 exam, while structured as multiple-choice and practical exercises, subtly privileges candidates who have traversed these practical terrains and internalized lessons beyond abstraction.

Hive as the Alchemical Forge of Data

In the labyrinthine corridors of Cloudera’s ecosystem, Hive emerges as an alchemical forge where raw datasets are transmuted into actionable insights. Unlike conventional SQL engines tethered to relational databases, Hive imbues queries with an aura of scalability, metamorphosing them into executable plans that dance across a distributed cluster. Understanding Hive requires an appreciation of the invisible machinery beneath its veneer: MapReduce, Tez, and the orchestration of multiple stages that translate declarative commands into concrete computation. The subtle art of partitioning, akin to slicing a colossal tome into digestible chapters, and bucketing, which meticulously arranges data into manageable fragments, forms the bedrock of performance optimization. Mastery demands an almost symbiotic relationship with the data: a developer must anticipate column cardinalities, skewed distributions, and join sizes before a single query executes.
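
Partitioning and bucketing can be pictured as a two-level layout: a partition value selects a directory, and a hash of the bucketing column selects a file within it. The Python sketch below mimics that layout in memory. The column names are hypothetical, and CRC32 is only a stand-in for whatever hash function Hive actually applies.

```python
import zlib
from collections import defaultdict

NUM_BUCKETS = 4

def bucket_for(user_id):
    # Stable hash so the same key always lands in the same bucket.
    return zlib.crc32(user_id.encode("utf-8")) % NUM_BUCKETS

rows = [
    {"dt": "2024-01-01", "user_id": "u1", "amount": 10},
    {"dt": "2024-01-01", "user_id": "u2", "amount": 5},
    {"dt": "2024-01-02", "user_id": "u1", "amount": 7},
    {"dt": "2024-01-03", "user_id": "u3", "amount": 2},
]

# Layout: partition (dt) -> bucket number -> rows.
table = defaultdict(lambda: defaultdict(list))
for row in rows:
    table[row["dt"]][bucket_for(row["user_id"])].append(row)

def scan(dt):
    # Partition pruning: only the requested partition is read.
    return [r for bucket in table[dt].values() for r in bucket]

print(scan("2024-01-01"))
```

A query filtered on `dt` touches one partition instead of the whole table, and rows for the same `user_id` always share a bucket, which is what makes bucketed joins and sampling cheaper.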

Hive’s interaction with various data formats elevates its versatility. Columnar formats such as ORC and Parquet function as crystalline lenses, allowing analytical operations to pierce selectively through dimensions of information while minimizing I/O expenditure. Row-based formats like JSON and CSV, though less sophisticated in performance, offer a canvas for rapid prototyping. A sophisticated practitioner balances the trade-offs between speed, storage efficiency, and query complexity, a calculus often tested through scenarios requiring nuanced decisions rather than rote syntax recollection.

Impala: The Crescendo of Low-Latency Analytics

Impala occupies a liminal space between batch processing and real-time analytical sorcery. Its daemon-based architecture eschews the episodic overhead of MapReduce in favor of persistent, memory-resident execution, producing a cadence of queries that is both immediate and fluid. The choice between Hive and Impala is a deliberation in temporal economics: Hive excels in the grandiose orchestration of bulk transformations, while Impala thrives in nimble interrogations of data for instantaneous decision-making. An intimate understanding of execution engines, caching mechanisms, and concurrency patterns allows a practitioner to exploit Impala’s latency-sensitive design to its fullest potential. Running the same query across both engines illuminates the interplay of resource allocation, in-memory computation, and execution strategy, offering a prism through which data behaviors are observed and optimized.

Impala’s efficacy also relies on a profound comprehension of metadata management. Its interactions with Hive Metastore, column statistics, and partition metadata determine the efficiency of query planning. Candidates who grasp these subtleties can navigate complex queries with a discerning eye, recognizing latent bottlenecks and preemptively structuring datasets for maximum throughput. The mastery here is both cerebral and empirical, necessitating a habitual engagement with trial, observation, and iterative refinement.

Pig: The Cartographer of Dataflows

Pig diverges from the declarative traditions of Hive and Impala, offering a scripting paradigm where dataflows are sketched as sequential operations. Its language, Pig Latin, is built from operators such as LOAD, FILTER, and FOREACH that read like a sequence of plain instructions, yet beneath lies a potent mechanism for orchestrating multifaceted ETL processes. Pig’s potency is unveiled when contending with datasets that demand convoluted transformations: filtering, joining, aggregating, and reshaping are performed in a singular, cohesive script that abstracts away the arduous choreography of individual MapReduce jobs. This fluency in dataflow architecture fosters agility in manipulating sprawling datasets, a skill that CCD-410 scenarios often probe through exercises requiring restructured workflows and performance-aware modifications.

Optimization in Pig is an art of reconfiguration, a matter of reordering operations and minimizing intermediate outputs. Recognizing the subtle cost of each transformation step, and orchestrating them to reduce memory footprint and execution latency, distinguishes the novice from the adept. Pig’s interaction with file formats, like that of Hive and Impala, adds a further layer of sophistication, compelling practitioners to choose between the immediacy of row-oriented storage and the analytical efficiency of columnar schemas.
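
One common reordering is to filter before joining, so the join handles fewer rows. The Python sketch below illustrates the idea with a naive in-memory hash join; it is not Pig Latin, the data is made up, and Pig's own optimizer may apply rewrites of this kind automatically.

```python
def join(left, right, key):
    # Naive hash join: index the right side, then probe with the left.
    index = {}
    for r in right:
        index.setdefault(r[key], []).append(r)
    return [{**l, **r} for l in left for r in index.get(l[key], [])]

orders = [{"cust": c, "total": t} for c, t in
          [("a", 5), ("a", 50), ("b", 500), ("c", 7), ("c", 900)]]
customers = [{"cust": "a", "region": "EU"},
             {"cust": "b", "region": "US"},
             {"cust": "c", "region": "EU"}]

# Plan 1: join everything, then filter.
joined = join(orders, customers, "cust")
late = [r for r in joined if r["total"] >= 100]

# Plan 2: filter first so the join sees fewer rows.
big_orders = [o for o in orders if o["total"] >= 100]
early = join(big_orders, customers, "cust")

print(len(joined), len(early), late == early)
```

Both plans return the same two rows, but the first materializes five joined rows as an intermediate result while the second produces only two.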

Harmonizing Data Formats Across the Triad

A nuanced understanding of file formats functions as a lingua franca across Hive, Impala, and Pig. The judicious selection between row-based and columnar storage profoundly impacts both computational efficiency and storage overhead. Columnar formats act as scalpel-like instruments, excising only the requisite dimensions for analysis, whereas row-based formats offer the pliability necessary for ad hoc transformations and flexible ingestion pipelines. The perspicacious engineer anticipates how these choices reverberate across joins, aggregations, and partitioning schemes, ensuring that pipelines remain robust, scalable, and performant under the weight of growing datasets.
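
The difference between the two layouts shows up when a query needs a single column. The following Python sketch counts how many field values each layout has to touch to sum one column; the sample records are invented, and real formats such as Parquet or ORC add encoding and metadata not modelled here.

```python
rows = [
    {"id": 1, "country": "DE", "amount": 10.0, "note": "first order"},
    {"id": 2, "country": "FR", "amount": 4.5, "note": "gift"},
    {"id": 3, "country": "DE", "amount": 7.5, "note": "repeat customer"},
]

# Columnar layout: one list per column instead of one dict per row.
columns = {name: [r[name] for r in rows] for name in rows[0]}

def total_row_store(rows):
    # Every field of every row is read, even though only one is needed.
    touched = sum(len(r) for r in rows)
    return sum(r["amount"] for r in rows), touched

def total_column_store(columns):
    # Only the 'amount' column is read.
    values = columns["amount"]
    return sum(values), len(values)

print(total_row_store(rows), total_column_store(columns))
```

Both layouts give a total of 22.0, but the row layout touches 12 values and the columnar layout touches 3. The gap widens with every extra column the query does not need.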

File format mastery also entails cognizance of compression algorithms, serialization overheads, and interoperability across tools. Selecting an inappropriate format can cascade inefficiencies throughout a pipeline, exacerbating latency, memory contention, and disk I/O. Conversely, an informed choice amplifies throughput, reduces computational drag, and enhances the interpretability of intermediate datasets, a competence consistently interrogated through CCD-410’s scenario-based examinations.

Performance Tuning as an Esoteric Craft

Beyond syntax lies the realm of performance tuning, where Hive, Impala, and Pig metamorphose from instruments into extensions of a practitioner’s analytical intuition. Memory allocation, parallelism, and execution strategy form the tripod of optimization. Hive practitioners manipulate execution engines, join algorithms, and MapReduce task sizing with the precision of a master artisan. Impala’s tuning revolves around cache management, query concurrency, and thread allocation, demanding a balance between immediacy and resource saturation. Pig requires an orchestration of dataflows to minimize intermediate artifacts, judiciously leveraging combiners and reducers to streamline execution.

The craft of tuning extends beyond individual tools; it encompasses a holistic comprehension of cluster behavior, disk I/O patterns, network latencies, and the stochastic nature of distributed execution. Candidates adept in this esoteric art intuitively discern inefficiencies, preempt contention, and sculpt queries that marry algorithmic elegance with computational pragmatism.

Scenario-Based Analytical Dexterity

The crucible of CCD-410 is scenario-based analysis, a testing ground for both technical knowledge and applied judgment. Candidates encounter datasets swelling exponentially, slow-running ETL jobs, and convoluted joins that strain conventional wisdom. In these scenarios, the capacity to synthesize understanding of partitioning, bucketing, file formats, and engine selection becomes paramount. Hands-on exercises—spinning up sample tables, executing experimental queries, and iterating optimizations—transform theoretical knowledge into practiced intuition. The scenarios simulate the dynamism of production environments, demanding analytical dexterity and the ability to architect resilient pipelines under duress.

Integration Within the Cloudera Cosmos

Hive, Impala, and Pig do not exist in isolation; they inhabit a cosmos entwined with HDFS, Oozie, and Spark. The interdependencies dictate not only data movement but also orchestration and scheduling. A robust data engineer navigates these relationships with foresight, integrating Pig scripts into Oozie workflows, funneling transformed datasets into Hive tables, and leveraging Impala for near-real-time dashboards. This synthesis ensures data pipelines are not merely functional but elegant, maintainable, and extensible, reflecting an understanding that transcends individual tool mastery and enters the realm of holistic architecture.

Unveiling Spark’s In-Memory Alchemy

In the labyrinthine expanse of modern data engineering, Spark emerges as a fulcrum of high-velocity computation, transmuting raw information into actionable insights with remarkable celerity. Unlike archaic batch-oriented paradigms, Spark orchestrates an intricate ballet of distributed datasets in memory, circumventing disk-bound latency. Its cognitive architecture, predicated on Resilient Distributed Datasets, manifests fault-tolerant, horizontally scalable pipelines capable of handling gargantuan data volumes. CCD-410 aspirants must not merely code with Spark but internalize its cognitive schema to decipher the subtleties of iterative transformations and lineage dependency graphs.

RDDs: The Quintessence of Distributed Cognition

Resilient Distributed Datasets, or RDDs, encapsulate the arcane essence of Spark’s efficiency. Each RDD represents a distributed, immutable collection, enabling fault-tolerant computation through lineage tracking. Transformations, performed lazily, encode operations without immediate execution, preserving computational resources while allowing dynamic optimization. Actions catalyze the metamorphosis of abstract transformations into tangible results, demanding a meticulous understanding of execution triggers. Mastery of RDDs necessitates fluency in map, filter, flatMap, reduceByKey, and their nuanced interplays, as well as insight into task partitioning and memory locality.
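
Lazy transformations and eager actions can be demonstrated without Spark by using Python generators. This is only an analogy: generators are single-use and carry no lineage, and the `traced_map` and `traced_filter` helpers are hypothetical.

```python
log = []

def traced_map(fn, items):
    # Generator: nothing runs until a consumer pulls values.
    for item in items:
        log.append(f"map {item}")
        yield fn(item)

def traced_filter(pred, items):
    for item in items:
        log.append(f"filter {item}")
        if pred(item):
            yield item

# "Transformations": building the pipeline executes no work.
pipeline = traced_filter(lambda x: x % 2 == 0,
                         traced_map(lambda x: x * 3, range(4)))
work_before_action = len(log)

# "Action": consuming the pipeline triggers the computation.
result = list(pipeline)
print(work_before_action, result, len(log))
```

The log is empty until `list(pipeline)` runs, at which point all eight steps execute and the result `[0, 6]` appears. In Spark, `collect` or `count` plays the role that `list` plays here.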

The Elegance of DataFrames and Datasets

Beyond the granular realm of RDDs lies the more sophisticated stratum of DataFrames and Datasets. DataFrames, with their tabular abstraction, emulate relational paradigms while benefiting from Spark’s Catalyst optimizer, which transmutes declarative queries into highly efficient execution plans. Datasets augment this paradigm with type safety and compile-time guarantees, minimizing semantic errors and fostering code reliability. For CCD-410, understanding the trade-offs between RDDs, DataFrames, and Datasets is crucial; the judicious selection among these constructs can dictate the performance and scalability of production pipelines.

Partitioning and Shuffling: Navigating Performance Topography

In distributed computation, partitioning is both an art and a science, sculpting how data fragments traverse nodes and converge during processing. Imbalanced partitions engender skew, stymying parallelism and precipitating performance degradation. Shuffling, an operation of redistribution across partitions during joins or aggregations, constitutes a notorious bottleneck if mismanaged. Practitioners must discern the optimal granularity of partitions, manipulate coalescing and repartitioning strategies, and scrutinize task execution metrics to maintain equilibrium across clusters. The acumen to diagnose skewed workloads epitomizes the proficiency expected from CCD-410 candidates.
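
Skew is easy to reproduce in miniature. The Python sketch below hash-partitions a dataset dominated by one hot key, then spreads that key with a salt. As a simplifying assumption the salt is added to the hash rather than appended to the key, purely so the outcome is easy to reason about; the partition count and key names are arbitrary.

```python
import zlib
from collections import Counter

NUM_PARTITIONS = 4

def partition_of(key, salt=0):
    return (zlib.crc32(key.encode("utf-8")) + salt) % NUM_PARTITIONS

def partition_sizes(keyed_rows):
    sizes = Counter(partition_of(k, s) for k, s in keyed_rows)
    return [sizes.get(p, 0) for p in range(NUM_PARTITIONS)]

# One hot key dominates the dataset.
keys = ["hot"] * 900 + [f"k{i}" for i in range(100)]
skewed = partition_sizes((k, 0) for k in keys)

# Salting: rotate a salt for the hot key so its rows spread out.
salted = partition_sizes(
    (k, i % NUM_PARTITIONS if k == "hot" else 0)
    for i, k in enumerate(keys)
)

print(max(skewed), max(salted))
```

Without salting one partition holds at least 900 of the 1000 rows, so one task does nearly all the work. With salting the hot key contributes exactly 225 rows to each partition. The cost is a second aggregation step to merge the partial results for the salted key.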

Symbiosis with Cloudera Ecosystem

Spark’s prowess is magnified when seamlessly interwoven with the Cloudera ecosystem. Data ingestion from HDFS, Hive, or live streaming sources amplifies its versatility, allowing hybrid pipelines that merge batch, interactive, and real-time computation. Spark SQL offers declarative querying of structured datasets, while Structured Streaming renders temporal dataflows tractable. Such integrations underscore Spark’s flexibility, demanding both practical competence and architectural foresight from aspirants. Scenario-based questions frequently probe the candidate’s ability to assemble coherent pipelines that reconcile diverse data modalities.

Optimization and Memory Cognition

The crucible of Spark performance is memory management. Persisting frequently accessed datasets, judicious selection of storage levels, and evasion of redundant recomputation are cornerstones of efficient design. The internal alchemy of Catalyst and Tungsten orchestrates query plan refinement and code generation, abstracting complex optimizations from the end user while rewarding those who apprehend the underlying mechanisms. In CCD-410, questions often transcend mere syntactic correctness, probing conceptual comprehension of execution efficiency and resource utilization.
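
The cost of redundant recomputation can be shown with a counter. This Python sketch is a conceptual stand-in for persisting a dataset; `expensive_transform` is a hypothetical costly stage and no Spark API is involved.

```python
calls = {"expensive": 0}

def expensive_transform(data):
    # Stand-in for a costly stage; counts how often it really runs.
    calls["expensive"] += 1
    return [x * x for x in data]

data = list(range(5))

# Without caching: each downstream result recomputes the stage.
total = sum(expensive_transform(data))
largest = max(expensive_transform(data))
uncached_calls = calls["expensive"]

# With caching: compute once, keep the result, reuse it.
calls["expensive"] = 0
cached = expensive_transform(data)
total_c, largest_c = sum(cached), max(cached)
cached_calls = calls["expensive"]

print(uncached_calls, cached_calls, total_c, largest_c)
```

Two downstream results cost two runs of the stage without caching and one run with it. The trade-off is that the cached result occupies memory for as long as it is kept.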

Error Mitigation and Observability

Robust Spark deployments confront the inevitability of failure: executor crashes, straggler tasks, and data inconsistencies are quotidian realities. Mastery entails not only debugging but constructing resilient architectures via checkpointing, DAG analysis, and log scrutiny. The Spark UI offers a window into execution topology, enabling practitioners to pinpoint bottlenecks and preempt anomalies. Candidates proficient in observability techniques distinguish themselves, demonstrating the capability to maintain reliable, fault-tolerant pipelines at scale.

Constructing Transformative Pipelines

Practical acumen is honed through the deliberate construction of transformative pipelines. Reading from heterogeneous sources, orchestrating intricate transformations, executing joins, and persisting results encapsulate the quintessential Spark workflow. Exposure to both batch and streaming paradigms fosters intuition regarding engine selection and performance trade-offs. Comparative exercises involving Hive or MapReduce illuminate the superior performance and flexibility afforded by in-memory computation, cementing the practitioner’s strategic discernment.

Strategic Cognition in High-Velocity Workflows

Beyond technical dexterity, Spark proficiency demands strategic cognition. CCD-410 assesses the ability to select optimal constructs, anticipate computational bottlenecks, and scale solutions across clusters. The successful candidate internalizes patterns of workload optimization, reconciling theoretical knowledge with empirical experimentation. This cognitive synthesis underpins effective decision-making in real-world data engineering scenarios, where voluminous and variegated datasets challenge conventional paradigms of processing.

Iterative Refinement and Benchmarking

Iterative refinement embodies the spirit of high-performance Spark engineering. By instrumenting pipelines, analyzing stage-level metrics, and benchmarking transformations, practitioners cultivate a granular understanding of system behavior. CCD-410 aspirants should embrace a culture of empirical validation, iteratively enhancing pipelines to balance throughput, latency, and resource consumption. This methodology engenders not only examination success but enduring competence in designing resilient, scalable data solutions.

The Sublime Architecture of Data Orchestration

In contemporary data ecosystems, the sheer magnitude of raw information demands more than computational prowess; it necessitates orchestrated choreography. Oozie emerges as a sentinel within this domain, providing a lattice of control over sprawling data pipelines. Its capacity to coordinate multifaceted workflows allows practitioners to traverse the labyrinthine corridors of ingestion, transformation, and analytical processing without succumbing to chaos. For aspirants navigating CCD-410, the conceptual scaffolding of workflow orchestration is a crucible for both understanding and ingenuity.

XML as the Lexicon of Workflow Logic

Workflow definition within Oozie relies upon XML, an austere yet potent notation that codifies each procedural step. Beyond mere syntax, XML functions as a cognitive map, guiding developers through dependencies, conditional execution, and error contingencies. The disciplined rigor of writing XML encourages foresight and preemptive troubleshooting, which is indispensable in large-scale pipelines. CCD-410 candidates benefit from mastering this lexicon, not as rote memorization, but as a language to articulate complex data choreography with precision.
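
To see how such a definition encodes control flow, the Python sketch below parses a deliberately simplified workflow fragment and follows its success path. The fragment omits the XML namespace and the action bodies, so it illustrates the `ok`/`error` transition structure only and is not a deployable definition; the action names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Simplified, namespace-free fragment: action bodies are omitted,
# so this is NOT a deployable workflow definition.
WORKFLOW_XML = """
<workflow-app name="demo-wf">
  <start to="clean"/>
  <action name="clean">
    <ok to="aggregate"/>
    <error to="fail"/>
  </action>
  <action name="aggregate">
    <ok to="end"/>
    <error to="fail"/>
  </action>
  <kill name="fail">
    <message>Workflow failed</message>
  </kill>
  <end name="end"/>
</workflow-app>
"""

root = ET.fromstring(WORKFLOW_XML)
transitions = {
    a.get("name"): (a.find("ok").get("to"), a.find("error").get("to"))
    for a in root.findall("action")
}

# Follow the success path from <start> until a non-action node.
path, node = [], root.find("start").get("to")
while node in transitions:
    path.append(node)
    node = transitions[node][0]
path.append(node)
print(path)
```

Every action names both a success target and a failure target, which is why reading the XML as a graph of transitions is more useful than reading it top to bottom.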

Temporal and Data-Driven Triggers

One of the most useful aspects of Oozie is its handling of triggers, which may be temporal or data-dependent. Coordinator jobs let pipelines run at predetermined intervals or start once the input data they depend on becomes available. This is particularly resonant in environments characterized by relentless data proliferation, such as IoT networks or continuous transactional flows. The subtle art lies in architecting workflows that are simultaneously reactive and anticipatory, a skill that distinguishes proficient developers from novices in certification assessments.

Resilience Through Error Containment

The orchestration of massive data systems is seldom a journey free of obstacles. Nodes falter, networks fluctuate, and processes occasionally derail. Oozie equips developers with mechanisms to insulate pipelines from cascading failure. Through retry policies, error transitions, and failure notifications, a robust workflow transforms potential disruption into managed contingencies. Aspirants must internalize these paradigms to navigate CCD-410 scenarios that simulate real-world volatility, emphasizing resilience over theoretical design.
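
The retry-then-fail behaviour described above can be expressed in a few lines. This Python sketch is a generic illustration, not Oozie's implementation; `run_with_retries` and `make_flaky` are hypothetical helpers, and the retry limit of 3 is arbitrary.

```python
def run_with_retries(task, max_retries=3):
    """Run task(); retry on failure up to max_retries times."""
    attempts = 0
    while True:
        attempts += 1
        try:
            return task(), attempts
        except RuntimeError as exc:
            if attempts > max_retries:
                # Equivalent of routing to a failure/notification step.
                return f"FAILED: {exc}", attempts

def make_flaky(failures):
    # Hypothetical task that fails a fixed number of times, then succeeds.
    state = {"left": failures}
    def task():
        if state["left"] > 0:
            state["left"] -= 1
            raise RuntimeError("node unavailable")
        return "OK"
    return task

print(run_with_retries(make_flaky(2)))
print(run_with_retries(make_flaky(10)))
```

A task that fails twice succeeds on the third attempt. A task that keeps failing is abandoned after one initial attempt plus three retries, and the failure is reported instead of propagating unchecked.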

Integrative Synergy with Analytical Engines

Oozie’s potency derives not only from its orchestration capabilities but also from its symbiotic integration with Cloudera’s analytical arsenal. A single workflow may interweave Hive queries, Pig scripts, and Spark transformations into a seamless narrative of data evolution. Understanding these interdependencies ensures a flow that is both coherent and optimized, mitigating the friction that arises from heterogeneous processing layers. Proficiency in this integration is a hallmark of mastery, as it demands both systemic insight and operational dexterity.

Modular Pipelines Through Parameterization

Complexity often begets opacity, yet Oozie mitigates this through modularization and parameterization. Sub-workflows fragment elaborate pipelines into comprehensible segments, promoting readability and maintainability. Parameterized jobs enhance flexibility, allowing a singular workflow to accommodate varied datasets or environmental configurations without redundant reinvention. Mastery of these strategies equips candidates with the cognitive tools to design adaptive, scalable systems, reflecting the dynamic reality of enterprise-scale data operations.
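
Parameterization amounts to keeping one template and substituting values per run. The Python sketch below uses the standard `string.Template` class, whose `${name}` placeholders resemble the style used in workflow definitions. The template text, parameter names, and paths are all hypothetical.

```python
from string import Template

# Hypothetical job template; ${...} placeholders are filled per run.
JOB_TEMPLATE = Template(
    "script=${script} input=${inputDir}/${date} output=${outputDir}/${date}"
)

def render(params):
    # substitute() raises KeyError if a parameter is missing,
    # which surfaces configuration mistakes early.
    return JOB_TEMPLATE.substitute(params)

dev = render({"script": "clean.pig", "inputDir": "/dev/raw",
              "outputDir": "/dev/clean", "date": "2024-01-01"})
prod = render({"script": "clean.pig", "inputDir": "/data/raw",
               "outputDir": "/data/clean", "date": "2024-01-01"})
print(dev)
print(prod)
```

The same definition serves both environments; only the parameter set changes, so a fix to the template reaches every run that uses it.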

Diagnostic Acumen and Log Analysis

A crucial skill for CCD-410 aspirants is the ability to decode the signals embedded within workflow logs. Oozie captures granular execution data, revealing bottlenecks, misconfigurations, and latent errors. Developing diagnostic acumen allows practitioners to preemptively adjust workflows, optimize resource allocation, and remediate failures with surgical precision. This analytic sensibility transforms abstract logs into actionable intelligence, cultivating a proactive rather than reactive approach to workflow stewardship.

Beyond Oozie: Conceptual Universality

While Oozie provides a robust framework, understanding the universality of orchestration concepts is indispensable. Modern alternatives, exemplified by platforms such as Apache Airflow, operate on analogous principles: task dependency, scheduling cadence, error containment, and modularity. By internalizing these foundational tenets, developers gain the cognitive elasticity to adapt to evolving ecosystems, ensuring that expertise remains relevant irrespective of the specific toolset employed. This conceptual transcendence fortifies both exam performance and practical competency.

Strategic Design of Conditional Workflows

Conditional logic within Oozie allows workflows to respond dynamically to data-driven contingencies. This stratagem transforms linear pipelines into intelligent networks capable of branching based on runtime parameters. Designing such workflows demands foresight, as each branch introduces potential points of latency or failure. CCD-410 aspirants must cultivate a mindset attuned to conditional interdependencies, fostering an ability to predict emergent behaviors within intricate data landscapes.

The Aesthetic of Scalable Orchestration

Beyond technical precision, workflow orchestration embodies an aesthetic of scalability. Elegantly designed pipelines exhibit clarity, modularity, and adaptability, balancing operational efficiency with cognitive comprehensibility. The skillful orchestration of interdependent tasks transforms raw data into a symphony of actionable insight. For developers, this aesthetic transcends procedural competence, instilling a deeper appreciation for the artistry inherent in large-scale data engineering.

In the ever-expanding cosmos of data engineering, proficiency is measured not merely by the ability to manipulate datasets but by the dexterity with which one orchestrates large-scale pipelines. The CCD-410 certification, revered for its rigor, challenges practitioners to transcend rudimentary operations and embrace the arcane intricacies of performance tuning, diagnostic reasoning, and operational foresight. As datasets swell into terabytes and petabytes, conventional paradigms falter, demanding a meticulous understanding of optimization strategies that balance computational efficiency with resource pragmatism.

The Alchemy of Data Storage

Performance optimization commences with a perspicacious understanding of data storage structures. Columnar formats, such as ORC and Parquet, embody a sophistication that minimizes I/O overhead for analytical queries. Their architecture allows selective retrieval of columns, thereby evading the computational tedium of parsing entire rows. Conversely, row-oriented schemas like CSV and JSON, though seemingly archaic, retain utility in lightweight ingestion or transactional contexts. Candidates must cultivate a discernment for the nuanced trade-offs embedded in compression, partitioning, and bucketing strategies. While high compression ratios conserve disk capacity, they exact a CPU toll during decompression cycles, a subtle yet consequential consideration in high-throughput pipelines.
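
The space-versus-CPU trade-off of compression can be measured directly. The Python sketch below uses the standard `zlib` module as a stand-in for the codecs found on a cluster; the sample data is synthetic and highly repetitive, so the ratios it shows are not representative of real datasets.

```python
import time
import zlib

# Repetitive sample data, similar in spirit to a low-cardinality column.
raw = ("status=OK;region=EU;" * 50_000).encode("utf-8")

def measure(level):
    start = time.perf_counter()
    packed = zlib.compress(raw, level)
    elapsed = time.perf_counter() - start
    # Round-trip check: decompression must restore the original bytes.
    assert zlib.decompress(packed) == raw
    return len(packed), elapsed

for level in (1, 6, 9):
    size, seconds = measure(level)
    print(f"level={level} size={size} bytes time={seconds:.4f}s")
```

Running this on representative samples of your own data, rather than on synthetic input, is the more reliable way to choose a codec and level.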

The perceptive engineer anticipates workload contours: whether queries emphasize broad-scope aggregations or selective, iterative computations. CCD-410 scenarios often present labyrinthine datasets, compelling aspirants to adjudicate the optimal storage schema. Preemptive choices in format and compression can delineate the boundary between sluggish execution and seamless orchestration. Understanding these trade-offs transcends rote knowledge—it requires an intuitive grasp of data kinetics and the cascading impact of storage decisions on downstream computational layers.

Execution Optimization: Orchestrating Computational Cadence

Beyond storage, the choreography of job execution is pivotal. Engines like Hive, Pig, and Spark furnish a plethora of tunable parameters—memory allocation, mapper and reducer counts, parallelism thresholds—that constitute the levers of performance orchestration. Spark, in particular, demands vigilance with partition sizing, caching mechanisms, and shuffle avoidance. Suboptimal partitioning induces straggler tasks, which metastasize into systemic slowdowns, while excessive caching squanders precious memory, precipitating evictions and recomputation.

Oozie workflows, often the linchpin of orchestrated pipelines, gain efficacy through judicious scheduling and concurrency control. Candidates may encounter scenarios wherein jobs languish inexplicably. A meticulous evaluation might reveal skewed datasets, suboptimal join strategies, or insufficient memory provisioning as culprits. The aptitude to dissect these bottlenecks and prescribe surgical adjustments is emblematic of advanced mastery. Each optimization knob is more than a parameter; it is a conduit through which computational potential is either liberated or squandered.

The Art and Science of Debugging

Even the most meticulously crafted pipeline is susceptible to entropy. Debugging, therefore, is both an art and a science. Logs serve as the primary lexicon through which pipeline behavior is interpreted. Deciphering error messages, tracing job progress, and interrogating DAG visualizations in Spark equip the developer with forensic acumen. Hive and Pig amplify this capability through query plans, which illuminate the transformation of logical instructions into physical execution.

Effective debugging entails more than identifying failures; it requires anticipating them. Understanding the interplay between data distribution, resource allocation, and execution patterns fosters preemptive mitigation strategies. CCD-410 aspirants are frequently tested on their capacity to reason through complex failure scenarios, where the solution is not merely a corrective action but a strategic recalibration that ensures enduring pipeline resilience.

Strategic Design for Performance Amplification

Optimization is as much about preemptive design as reactive tuning. Strategic interventions—such as pre-aggregating data to reduce downstream computational burden or caching intermediate results to obviate redundant computation—can dramatically enhance efficiency. Selecting the appropriate execution engine is another axis of strategic consideration. Spark excels in iterative, in-memory computations, whereas Hive demonstrates robustness in batch aggregation.

Candidates may encounter exam scenarios depicting convoluted workflows, where astute redesign choices yield multiplicative gains in execution velocity and resource economy. Such scenarios underscore the necessity of holistic thinking: recognizing that pipeline efficiency is a symphony of interdependent decisions, each resonating across computational stages.

Resource Management: The Economics of Computation

Cloudera clusters are finite ecosystems. CPU cores, memory, and disk capacity constitute the currency of computational success. Prodigal consumption of these resources invites contention, instability, and performance degradation. Efficient resource management is therefore paramount. Techniques span tuning Spark executor memory, calibrating YARN container sizes, and adjusting Hive and Pig parallelism parameters.

The relationship between resource allocation and performance is nuanced. Over-allocation may starve concurrent tasks, whereas under-allocation throttles throughput. Mastery demands both theoretical insight and empirical intuition. CCD-410 aspirants must navigate simulated resource constraints, demonstrating proficiency not only in execution but in strategic prioritization—balancing the competing demands of simultaneous workloads while preserving cluster integrity.
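
The tension between over-allocation and under-allocation can be made concrete with container arithmetic. This is a rough sketch: the overhead factor of 10% with a 384 MiB floor mirrors the commonly documented default for Spark executors on YARN, but treat both numbers, and the function names, as assumptions. YARN's rounding of requests to its allocation increment is not modeled.

```python
def container_mb(executor_memory_mb, overhead_factor=0.10, min_overhead_mb=384):
    """Approximate memory requested from YARN for one executor.

    Overhead defaults are assumptions for illustration, not verified settings.
    """
    overhead = max(min_overhead_mb, int(executor_memory_mb * overhead_factor))
    return executor_memory_mb + overhead


def executors_per_node(node_memory_mb, executor_memory_mb):
    """How many such containers fit into the memory a node offers to YARN."""
    return node_memory_mb // container_mb(executor_memory_mb)
```

Doubling executor memory roughly halves the number of executors per node, which is the concrete form of the over-allocation penalty described above.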

Temporal Dynamics and Pipeline Synchronization

Optimization extends beyond the spatial allocation of resources into the temporal domain. Pipeline stages, particularly those with interdependencies, require precise orchestration to avoid idling or contention. Understanding the cadence of job execution, predicting bottlenecks, and preemptively staggering dependent tasks can yield substantial performance dividends. Spark DAGs, Hive execution plans, and Oozie scheduling converge to form a temporal tapestry wherein misalignment can cascade into systemic inefficiency.

Advanced candidates cultivate an awareness of asynchronous execution, task pipelining, and speculative execution. They anticipate the ebb and flow of workloads, aligning computational demand with available resources. This temporal literacy transforms static tuning into dynamic orchestration, ensuring that pipelines not only perform optimally in isolation but harmonize seamlessly within the broader data ecosystem.

Iterative Refinement Through Experiential Learning

The path to mastery is experiential. Hands-on experimentation with sample clusters illuminates subtleties that theoretical study cannot replicate. Manipulating configurations, simulating failures, and observing resultant behaviors cultivate a visceral understanding of cause and effect. The aspirant learns to iterate: hypothesize, implement, observe, and recalibrate. Each cycle enriches intuition, converting abstract principles into actionable insights.

Experiential learning also engenders resilience. Encountering and resolving failures in controlled environments fosters confidence under real-world conditions. CCD-410 aspirants benefit from methodical exploration, where each experiment reinforces both technical competence and analytical rigor. The ability to internalize lessons from controlled trials translates into superior performance in exam scenarios and operational contexts alike.

Predictive Diagnostics and Proactive Mitigation

Beyond reactive debugging, the advanced practitioner develops predictive acumen. By analyzing historical job metrics, resource utilization patterns, and data distribution trends, one can anticipate failures before they manifest. Proactive mitigation strategies—such as dynamic partitioning, adaptive caching, and load balancing—transform pipeline management from a reactive endeavor into a predictive science.

This foresight necessitates a granular understanding of pipeline mechanics. Memory bottlenecks, I/O latency, and network congestion are not random occurrences; they are deterministic consequences of system design and operational patterns. By cultivating an anticipatory mindset, candidates transcend conventional troubleshooting, positioning themselves as architects of resilient, high-performance data ecosystems.

Optimization as an Iterative Art Form

Advanced optimization is a recursive process, a continual refinement akin to sculpting. Each adjustment—whether in storage format, execution parameter, or resource allocation—reverberates through the pipeline. The expert recognizes that maximal performance is not a singular achievement but an emergent property of continuous assessment and adjustment.

Observing metrics, analyzing logs, and tuning iteratively cultivates a nuanced understanding of systemic interdependencies. In CCD-410, this approach manifests as scenario-based questions that test both conceptual knowledge and applied insight. Candidates must navigate a labyrinth of choices, each decision impacting execution latency, resource utilization, and system stability. Success demands both precision and creativity, balancing the rigor of engineering with the intuition of artistry.

Holistic Perspective on Pipeline Efficiency

True mastery emerges from a synthesis of storage strategy, execution tuning, debugging acumen, resource stewardship, and experiential learning. No single adjustment operates in isolation; pipelines are complex, interdependent ecosystems. Understanding these interconnections enables engineers to optimize holistically, balancing competing priorities to achieve sustainable performance.

Candidates are challenged to view pipelines not merely as sequences of transformations but as dynamic systems in perpetual flux. The interplay between data distribution, execution mechanics, and cluster economics demands continuous vigilance and adaptive strategy. CCD-410 scenarios often simulate these complexities, requiring candidates to synthesize knowledge across multiple domains to formulate effective solutions.

Cognitive Frameworks for Problem Solving

Finally, advanced candidates cultivate cognitive frameworks for systematic problem-solving. By segmenting issues into discrete components—data layout, execution orchestration, resource allocation, and temporal coordination—they can address each aspect methodically. This structured reasoning accelerates diagnosis, enables precise interventions, and fosters confidence in navigating unfamiliar challenges.

The CCD-410 examination rewards such cognitive rigor. Aspirants who internalize this approach demonstrate not only technical competence but intellectual agility, capable of translating theoretical principles into practical, high-impact solutions. Over time, this mindset transforms routine pipeline management into a domain of strategic optimization and predictive mastery.

Embarking on the CCD-410 Odyssey

The odyssey of CCD-410 unfolds as a labyrinthine expedition into the realm of big data engineering, where mere familiarity with tools is insufficient. Aspirants must cultivate a nuanced comprehension of distributed ecosystems, conjuring an amalgamation of dexterity and strategic foresight. This stage demands transcending rote memorization, embracing cognitive alchemy to fuse conceptual grasp with pragmatic implementation. The journey metamorphoses candidates into architects of data pipelines, orchestrators of streams, and virtuosos of computational optimization.

Immersive Project Alchemy

Engaging in real-world project simulations is the crucible in which expertise crystallizes. Constructing end-to-end data conduits—from ingestion into HDFS, transformation with Hive, Pig, or Spark, to orchestration via Oozie and incisive analytics through Impala—fosters a synoptic vision. The process necessitates interaction with heterogeneous datasets, deciphering anomalies, calibrating performance bottlenecks, and navigating workflow aberrations. These experiential undertakings not only simulate the exigencies of the exam but also cultivate a repertoire of problem-solving heuristics. Every project becomes an artifact of learning, a compendium of insights that conveys proficiency beyond superficial comprehension.

Stratagems for Exam Conquest

Mastering CCD-410 requires more than familiarity with components; it demands cerebral stratagems. The examination amalgamates multiple-choice interrogatives, scenario-driven dilemmas, and practical coding exercises. Temporal allocation emerges as a fulcrum of success—questions must be approached with judicious distribution of effort. Meticulous scrutiny of scenarios, discerning salient constraints, and selecting tools with tactical acumen yield more efficacy than rote regurgitation. Regular immersion in exemplar questions and temporal simulations engenders cognitive resilience, tempering anxiety and fostering decisive acuity under examination duress.

Cognitive Pitfalls and Avoidance

Adept candidates recognize the insidious nature of cognitive pitfalls. Misreading scenario subtleties, constructing unnecessarily labyrinthine solutions, or neglecting systemic trade-offs can culminate in suboptimal outcomes. CCD-410 valorizes analytical perspicacity and applied insight; it rewards the architect who reasons methodically, leveraging hands-on experience to navigate complexity. By anchoring decisions in pragmatic rationale rather than superficial familiarity, aspirants achieve answers that resonate with best practices and demonstrate holistic mastery of the data engineering landscape.

Consolidating Conceptual Fortitude

The final phase of preparation necessitates introspective reinforcement. Revisiting Hive, Impala, Pig, Spark, and Oozie with granular attention to nuanced functionalities ensures foundational robustness. Delving into optimization heuristics, debugging intricacies, and orchestrating multifaceted workflows primes candidates to tackle questions that interweave multiple systems. Familiarity with diverse data formats, latency considerations, and performance tuning amplifies confidence, enabling seamless navigation through convoluted scenarios. Iterative engagement through mock projects and timed drills solidifies cognitive agility, transforming fragmented knowledge into a cohesive, retrievable schema.

Harmonizing Mindset and Methodology

The pinnacle of CCD-410 mastery is predicated not solely on technical virtuosity but on cultivating an equanimous, methodical mindset. The examination evaluates problem-solving acumen, adaptability under constraints, and judicious decision-making. Approaching the challenge with composure, relying on internalized principles rather than ephemeral memorization, allows candidates to operationalize knowledge with precision. Cognitive poise, coupled with strategic foresight, transmutes stress into performance, and hesitation into decisive action, elevating the candidate from mere participant to confident practitioner.

The Synthesis of Knowledge and Practice

Engagement with CCD-410 is akin to orchestrating a symphony of data operations, where each instrument—be it Hive, Pig, Spark, Impala, or Oozie—must harmonize seamlessly. The synthesis of theoretical knowledge and practical application yields an elevated comprehension, enabling candidates to anticipate system interactions, optimize resource allocation, and mitigate anomalies preemptively. Documentation of project undertakings serves a dual function: reinforcing internal cognition and presenting an external testament to proficiency. The alchemy of this integrative process transforms knowledge into discernible competence.

Navigating Complexity with Elegance

As the journey progresses, aspirants confront intricately layered challenges requiring both precision and creativity. Optimizing complex queries, orchestrating fault-tolerant workflows, and mitigating bottlenecks demand not only technical dexterity but intellectual agility. Candidates learn to traverse data labyrinths with elegance, leveraging both heuristics and algorithmic foresight. The capacity to abstract patterns, identify latent inefficiencies, and implement scalable solutions becomes a defining hallmark of mastery, distinguishing adept engineers from transient practitioners.

Iterative Mastery through Simulated Trials

Structured, iterative trials emulate real-world exigencies, fostering mastery through repetition and reflection. Simulated environments allow aspirants to explore edge cases, evaluate error propagation, and refine optimization strategies without risk. This cyclic process engenders deep procedural memory, ensuring that strategies and solutions are internalized rather than superficially understood. Through meticulous trial and reflective analysis, candidates cultivate both speed and accuracy, essential attributes for thriving in examination and professional contexts alike.

The Art of Applied Reasoning

In the crucible of CCD-410, applied reasoning eclipses theoretical recall. Candidates are compelled to synthesize disparate knowledge domains, discerning optimal pathways through multifaceted scenarios. Strategic tool selection, resource optimization, and error mitigation converge in a dynamic interplay requiring both analytical rigor and experiential intuition. Mastery emerges not merely from executing commands but from understanding their systemic ramifications, predicting interactions, and preemptively resolving conflicts. This cognitive choreography transforms technical competency into a nuanced, anticipatory skill set.

Orchestrating Data Pipelines with Precision

The mastery of CCD-410 is inseparable from the capacity to engineer data pipelines with meticulous precision. Every stage—from ingestion to transformation, aggregation, and analysis—demands not only familiarity with tool syntax but an appreciation of systemic interplay. Ingesting data through HDFS is not merely about movement; it involves careful consideration of partitioning, replication, and schema evolution. Candidates who can anticipate downstream dependencies, preempt bottlenecks, and architect robust ingestion mechanisms distinguish themselves as engineers capable of operational excellence.

Transformation layers, whether realized through Hive, Pig, or Spark, require nuanced understanding of both declarative and imperative paradigms. Hive’s SQL-like abstractions necessitate attention to execution plans, while Spark’s RDD and DataFrame manipulations compel awareness of memory allocation, shuffles, and caching strategies. Pig Latin scripts offer expressive data flows, but they too demand foresight into join strategies and parallel execution. The orchestration of these layers, compounded by workflow management through Oozie, demands intellectual dexterity and a holistic mindset.

Navigating Real-Time Analytics

Impala and similar analytical engines introduce the dimension of immediacy, where latency is both a metric and a constraint. Real-time analytics is not a mere extension of batch processing; it requires recalibrating strategies to accommodate streaming ingestion, incremental updates, and instantaneous query responses. Candidates must learn to predict query execution costs, understand caching mechanisms, and optimize table structures to balance responsiveness with accuracy. The subtle interplay between hardware utilization, data locality, and query planning can mean the difference between a performant solution and one that falters under real-world load.

The Philosophy of Troubleshooting

Troubleshooting in CCD-410 is less a procedural activity than a philosophical exercise. Candidates must cultivate a mindset of investigative curiosity, approaching errors not as obstacles but as revelatory opportunities. Failures—be they workflow interruptions, resource contention, or transformation inconsistencies—offer insights into systemic fragilities. Developing diagnostic heuristics, such as pattern recognition in logs, anomaly detection, and dependency tracing, allows engineers to navigate complexity efficiently. Mastery emerges when errors are anticipated, preemptively mitigated, and systematically resolved with minimal disruption.

Optimization as an Art Form

Optimization in big data engineering transcends mere performance tuning; it is a form of artistry, balancing trade-offs across speed, memory, storage, and network utilization. Candidates must develop an intuition for bottlenecks, whether arising from inefficient queries, skewed partitions, or misconfigured cluster resources. Hive optimizations—through indexing, partition pruning, and query rewrites—coexist with Spark strategies such as broadcasting small tables, persisting intermediate results, and leveraging Catalyst optimizations. The ability to weigh competing concerns and implement elegant, resource-aware solutions epitomizes the cognitive sophistication expected of CCD-410 aspirants.

Temporal Management in Examination Contexts

Temporal acumen is pivotal in navigating the CCD-410 examination environment. Time is an inexorable constraint, and candidates must allocate it with judicious precision. Complex scenario-based questions require deep analysis, whereas multiple-choice segments may be efficiently addressed through pattern recognition and elimination strategies. Practicing with timed simulations instills not only speed but also the cognitive discipline to pivot between micro-level problem-solving and macro-level strategy. Mastery is achieved when temporal awareness is coupled with confidence, preventing indecision or undue haste.

The Interplay of Tools and Thought

Proficiency in CCD-410 entails recognizing the symbiotic relationship between tools and analytical thought. Tools are not mere implements; they are extensions of cognitive processes, enabling candidates to operationalize abstract reasoning into tangible outcomes. Hive queries, Pig transformations, Spark operations, Oozie workflows, and Impala analytics each embody paradigms of computation. Understanding their limitations, interdependencies, and optimal applications allows candidates to orchestrate sophisticated solutions with elegance. Intellectual agility—the ability to transpose concepts across tools—is as critical as syntactic fluency.

Mental Models for Complex Systems

Successful candidates cultivate robust mental models to navigate multifaceted data ecosystems. Conceptualizing data flows as networks of dependencies, visualizing execution plans, and anticipating failure points transforms abstract complexity into tractable problem spaces. Mental models allow rapid diagnosis, informed decision-making, and strategic orchestration under pressure. The capacity to iterate these models dynamically, adjusting for real-time feedback, mirrors the operational demands of professional data engineering, bridging examination performance with practical competence.

Resilience through Repetition

Repetition is the crucible through which theoretical knowledge crystallizes into procedural mastery. Iterative engagement with project-based exercises, mock scenarios, and debugging challenges reinforces neural pathways, enabling rapid retrieval and application under stress. Beyond mechanical repetition, reflective practice—analyzing errors, understanding their genesis, and revisiting alternative strategies—cultivates a resilience that is cognitive, emotional, and strategic. This layered repetition ensures that candidates are prepared not only to answer questions but to navigate unanticipated complexities with composure.

Embracing Adaptive Problem Solving

CCD-410 emphasizes adaptability as a core competence. Scenarios often integrate multiple tools and require fluid transitions between paradigms, demanding that candidates assess context, predict system behavior, and recalibrate strategies dynamically. Adaptive problem solving combines analytical rigor with experiential intuition, enabling engineers to identify optimal pathways even in ambiguous or evolving circumstances. The ability to pivot seamlessly, integrating both breadth and depth of knowledge, distinguishes mastery from rote competency.

Integrating Multi-Tool Workflows

Integration across tools constitutes the backbone of real-world data engineering. Building pipelines that seamlessly traverse Hive, Pig, Spark, and Impala, orchestrated via Oozie, requires meticulous attention to compatibility, performance harmonization, and fault tolerance. Candidates must anticipate the ramifications of each transformation, the dependencies inherent in data flow, and the operational limits of each platform. Integration is not merely technical; it is strategic, requiring foresight, planning, and iterative refinement to ensure that workflows are resilient, efficient, and scalable.

The Subtlety of Data Governance

Data governance, while often understated, is an integral facet of CCD-410 mastery. Candidates must comprehend the nuances of schema evolution, metadata management, access control, and lineage tracking. Adherence to governance principles ensures data integrity, reproducibility, and auditability. Recognizing the subtle ways in which governance intersects with performance, security, and analytical fidelity equips aspirants with the foresight to anticipate challenges before they manifest, reinforcing the professional caliber expected of certified practitioners.

The Synthesis of Creativity and Logic

Ultimately, CCD-410 mastery emerges at the nexus of creativity and logic. Engineering solutions demands imaginative foresight, envisioning possibilities beyond immediate constraints, while simultaneously grounding actions in analytical rigor and systemic comprehension. Creativity manifests in optimization strategies, workflow orchestration, and novel approaches to problem scenarios, whereas logic ensures structural soundness, predictability, and reproducibility. The harmonious interplay of these faculties transforms candidates from technicians into data architects capable of both innovation and reliability.

Cultivating Cognitive Poise

Cognitive poise—the capacity to maintain clarity, focus, and adaptability under pressure—is an intangible yet indispensable attribute. Candidates must navigate complex scenarios, time constraints, and multi-layered problem spaces without succumbing to cognitive overload. Techniques such as deliberate pacing, scenario decomposition, and mental rehearsal strengthen poise, enabling candidates to approach questions methodically, prioritize effectively, and respond with precision. This equilibrium between mental discipline and flexibility epitomizes the advanced competence CCD-410 seeks to certify.

Memory Orchestration in Distributed Environments

Memory is the lifeblood of distributed data processing, yet it is often the most capricious resource. Spark, YARN, and Hive operate in environments where memory mismanagement can induce cascading failures. Executors with insufficient heap space can precipitate disk spills, cache evictions, and out-of-memory task failures, while overly generous allocations leave capacity underutilized and starve concurrent processes.

Advanced practitioners approach memory management as both a science and an art. They dissect memory usage into storage, execution, and shuffle components, calibrating each according to workload characteristics. For iterative algorithms, caching strategies must be carefully aligned with executor memory to prevent recomputation while avoiding garbage collection overhead. In YARN, container sizing is not arbitrary; it must anticipate peak loads while leaving headroom for auxiliary processes. CCD-410 scenarios often simulate memory pressure, challenging candidates to fine-tune allocations dynamically to prevent task failure without compromising throughput.
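
The split of executor heap into storage and execution regions can be estimated with a few lines of arithmetic. The sketch assumes Spark's unified memory model with 300 MiB reserved, a memory fraction of 0.6, and a storage fraction of 0.5; these figures are recalled defaults and should be checked against the Spark version in use. In this model, shuffle buffers are counted under execution memory.

```python
RESERVED_MB = 300  # assumed fixed reservation; verify for your Spark version


def unified_memory_split(heap_mb, memory_fraction=0.6, storage_fraction=0.5):
    """Estimate how an executor heap divides under the assumed defaults."""
    available = heap_mb - RESERVED_MB
    unified = available * memory_fraction
    storage = unified * storage_fraction
    return {
        "unified": unified,              # shared by storage and execution
        "storage": storage,              # cached blocks (soft boundary)
        "execution": unified - storage,  # shuffles, joins, sorts, aggregations
        "user": available - unified,     # user data structures and metadata
    }
```

For a 1300 MiB heap this yields about 300 MiB each for storage and execution, which shows how little of a small executor is actually available for caching.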

Shuffle Complexity and Network Contention

In distributed systems, shuffling represents a latent source of inefficiency. Shuffles redistribute data across nodes, enabling joins, aggregations, and sorts. However, excessive shuffling can saturate network bandwidth, inflate job latency, and exhaust memory buffers. Candidates must evaluate the lineage of data transformations to identify opportunities for shuffle minimization.

Techniques such as partitioning by join keys, pre-aggregating intermediate results, and coalescing small partitions reduce shuffle overhead. Spark’s narrow dependencies allow pipelined execution, whereas wide dependencies necessitate costly data movement. Understanding the distinction empowers candidates to redesign workflows for minimal network contention. In exam simulations, scenarios may present sprawling DAGs, task stragglers, and uneven partition distributions, testing the aspirant’s capacity to rationalize shuffle strategies under high-pressure conditions.
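
Pre-aggregation before the shuffle can be illustrated in plain Python. The sketch below imitates a word count with and without a map-side combine step; the partition contents and function names are made up, and no real cluster is involved.

```python
from collections import Counter


def shuffle_without_combiner(partitions):
    """Every (word, 1) pair crosses the network."""
    return [(word, 1) for part in partitions for word in part]


def shuffle_with_combiner(partitions):
    """Each partition pre-aggregates locally, then emits one pair per distinct word."""
    shuffled = []
    for part in partitions:
        shuffled.extend(Counter(part).items())
    return shuffled


def reduce_counts(pairs):
    """Reduce side: sum the counts for each word."""
    totals = Counter()
    for word, count in pairs:
        totals[word] += count
    return dict(totals)


partitions = [["a", "b", "a", "a"], ["b", "b", "c"]]
plain = shuffle_without_combiner(partitions)
combined = shuffle_with_combiner(partitions)
```

Both paths reduce to the same totals, but the combined path moves four records instead of seven. The saving grows with the number of repeated keys per partition.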

Data Skew: Diagnosis and Remediation

Data skew is a subtle yet pernicious adversary in distributed computation. When partitions vary drastically in size, some tasks complete rapidly while others languish, producing stragglers that prolong overall execution. Detecting skew requires vigilant monitoring of task duration, executor metrics, and data distribution histograms.
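
One simple way to flag skew is to compare the largest partition with the median. The threshold of 5.0 below is an arbitrary assumption chosen for illustration, and the function names are hypothetical.

```python
from statistics import median


def skew_ratio(partition_sizes):
    """Ratio of the largest partition to the median partition."""
    typical = median(partition_sizes)
    if typical == 0:
        return float("inf") if max(partition_sizes) > 0 else 1.0
    return max(partition_sizes) / typical


def is_skewed(partition_sizes, threshold=5.0):
    """Flag a stage whose largest partition dwarfs the typical one."""
    return skew_ratio(partition_sizes) >= threshold
```

The same check works on task durations instead of partition sizes, which is often the first signal available in a job's task metrics.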

Mitigation strategies are manifold. Salting keys in joins redistributes hotspot data, while adaptive partitioning dynamically balances workload across nodes. Broadcast joins may circumvent skew for smaller datasets by replicating one side of the join entirely. CCD-410 aspirants must internalize the nuances of these techniques, recognizing that each solution carries implications for memory, network, and CPU utilization. The ability to reason about skew not only improves pipeline performance but also ensures robustness under unpredictable workloads.
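
Key salting can be sketched without a cluster. The example assumes a single hot key and eight salt buckets; the `#` separator, the bucket count, and the helper names are illustrative choices only.

```python
import zlib
from collections import Counter


def partition_for(key, num_partitions):
    """Hash partitioner; crc32 is stable across runs, unlike hash() on str."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def salted_key(key, record_index, salt_buckets):
    """Append a rotating salt so one hot key becomes several distinct keys."""
    return f"{key}#{record_index % salt_buckets}"


def partition_loads(keys, num_partitions):
    """Count how many records land on each partition."""
    return Counter(partition_for(k, num_partitions) for k in keys)


hot_keys = ["hot"] * 1000
salted = [salted_key(k, i, 8) for i, k in enumerate(hot_keys)]
load_before = partition_loads(hot_keys, 4)
load_after = partition_loads(salted, 4)
```

Before salting, all 1000 records land on one partition. After salting they are split into eight sub-keys of 125 records each, which the partitioner can place independently. In a join, the other side must be replicated once per salt value so that matches are preserved, which is the memory and network cost of this technique.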

Advanced Caching Strategies

Caching is more than a convenience; it is a lever for dramatic performance gains. The strategic placement of cached datasets in Spark can obviate repeated computation, reduce I/O latency, and accelerate iterative processing. However, indiscriminate caching can backfire, consuming memory unnecessarily and triggering eviction cascades.
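
An eviction cascade is easy to reproduce with a small least-recently-used cache. The class below is a simplified stand-in and not Spark's block manager; the capacities and access pattern are invented to show the effect.

```python
from collections import OrderedDict


class LruCache:
    """Tiny LRU cache that counts hits and misses."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key, compute):
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self.entries[key]
        self.misses += 1
        value = compute(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return value


def replay(capacity, accesses):
    """Run an access sequence and report (hits, misses)."""
    cache = LruCache(capacity)
    for key in accesses:
        cache.get(key, lambda k: k * 2)
    return cache.hits, cache.misses
```

Cycling over three datasets with room for three gives six hits out of nine accesses. With room for only two, every access misses, because each new entry evicts the one needed next.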

Seasoned engineers implement multi-tiered caching: in-memory storage for hot data, disk storage for intermediate datasets, and lineage-aware persistence to recover from failures. CCD-410 challenges may present complex DAGs, requiring candidates to determine which nodes or transformations merit caching. Success hinges on balancing cost against benefit, factoring in execution frequency, data size, and downstream dependencies.

Query Plan Forensics

Query plans are cartographic representations of computational flow. Hive and Pig both expose them through their EXPLAIN commands, revealing transformation sequences, join orders, and filter placements. Reading these plans is akin to forensic analysis: each node exposes potential inefficiencies, from Cartesian joins to redundant scans.

Advanced candidates cultivate the ability to anticipate performance pitfalls from query plans alone. They can identify which stages are CPU-bound, I/O-bound, or network-bound and prescribe interventions such as predicate pushdown, join reordering, or partition pruning. CCD-410 examinations frequently present obfuscated query plans, compelling aspirants to decode them under timed conditions. This skill underscores the interplay between analytical reasoning and operational expertise, rewarding those who can translate abstract representations into actionable insights.
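
Partition pruning, one of the interventions named above, can be sketched with Hive-style `column=value` directory names. The paths and helper names below are hypothetical, and a real engine prunes using metastore metadata instead of string parsing.

```python
def partition_values(path):
    """Extract {column: value} from a path such as 'sales/year=2024/month=01'."""
    return dict(seg.split("=", 1) for seg in path.split("/") if "=" in seg)


def prune(paths, predicate):
    """Keep only the partitions whose values satisfy the filter predicate."""
    return [p for p in paths if predicate(partition_values(p))]


paths = [
    "sales/year=2023/month=12",
    "sales/year=2024/month=01",
    "sales/year=2024/month=02",
]
kept = prune(paths, lambda v: v["year"] == "2024")
```

A filter on a partition column lets the engine skip whole directories before reading a single row, which is why a missing partition filter in a plan is worth flagging.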

Iterative Algorithms and In-Memory Optimization

Iterative algorithms, such as machine learning model training or graph computations, are highly sensitive to execution strategy. Repeated passes over datasets magnify inefficiencies, making in-memory optimization imperative. Spark’s RDD and DataFrame caching retain intermediate state in memory, mitigating repeated disk I/O, while RDD lineage allows lost partitions to be recomputed.

Candidates must understand checkpointing and persistence levels, distinguishing between MEMORY_ONLY, MEMORY_AND_DISK, and DISK_ONLY strategies. Misalignment between algorithmic demands and caching strategy can introduce performance penalties that accumulate with every iteration. CCD-410 scenarios may test knowledge of iterative workflows, challenging candidates to choose optimal persistence schemes while conserving cluster resources.
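
The cost of not persisting a reused dataset can be shown by counting how often the expensive step runs. The sketch below is plain Python with an invented loader and update rule; it only mimics the shape of an iterative job.

```python
def run_iterations(iterations, cache_base):
    """Run a toy iterative job and report (estimate, number of loads)."""
    calls = {"load": 0}

    def load_and_parse():
        # Stand-in for an expensive read-and-parse step.
        calls["load"] += 1
        return [float(x) for x in range(1, 6)]

    cached = load_and_parse() if cache_base else None
    estimate = 0.0
    for _ in range(iterations):
        data = cached if cache_base else load_and_parse()
        mean = sum(data) / len(data)
        estimate += 0.5 * (mean - estimate)  # move halfway toward the mean
    return estimate, calls["load"]
```

Ten iterations trigger ten loads without caching and one load with it, while producing the same result. The penalty grows with the iteration count, which is why the base dataset of an iterative algorithm is usually the first candidate for persistence.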

Failure Simulation and Resilience Engineering

Resilience is a hallmark of expert pipeline engineering. Beyond performance tuning, the advanced candidate must anticipate and accommodate failures. Task crashes, node failures, and transient network outages are endemic in distributed environments.

Simulating these failures under controlled conditions provides critical insights. By intentionally inducing task interruptions, experimenting with checkpointing, and monitoring recovery behavior, practitioners gain intuition for pipeline robustness. CCD-410 aspirants are often required to devise mitigation strategies that maintain data integrity, minimize recomputation, and ensure consistent output despite operational volatility. This proactive approach differentiates reactive troubleshooting from anticipatory engineering.

Resource Contention and Multi-Tenancy

In multi-tenant clusters, resource contention amplifies the complexity of optimization. Jobs may compete for CPU cores, memory, and network bandwidth, creating performance oscillations. Advanced practitioners implement priority queues, fair scheduling, and dynamic allocation policies to harmonize workload execution.
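
Fair scheduling is often explained through max-min fairness. The function below is a small sketch of that idea and not YARN's Fair Scheduler implementation; the queue names and demands in the usage note are invented.

```python
def max_min_fair_share(capacity, demands):
    """Split capacity so small demands are met fully and the rest share equally."""
    allocation = {name: 0.0 for name in demands}
    remaining = float(capacity)
    unsatisfied = dict(demands)
    while unsatisfied and remaining > 1e-9:
        share = remaining / len(unsatisfied)
        satisfied = {n: d for n, d in unsatisfied.items() if d <= share}
        if not satisfied:
            # Nobody fits within an equal share: split what is left evenly.
            for name in unsatisfied:
                allocation[name] = share
            break
        for name, demand in satisfied.items():
            allocation[name] = float(demand)
            remaining -= demand
            del unsatisfied[name]
    return allocation
```

With 100 units of capacity and demands of 20, 50, and 70, the smallest job receives its full 20 and the other two receive 40 each. No job can gain more without taking from a job that already has less.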

Understanding the interplay between concurrent tasks and resource limits is essential. CCD-410 scenarios may present simulated environments where multiple workflows run simultaneously, testing a candidate’s ability to anticipate contention, allocate resources judiciously, and maintain pipeline performance. Mastery of multi-tenancy management elevates the practitioner from competent technician to strategic operator.

Temporal Load Balancing and Adaptive Scheduling

Beyond static resource allocation, temporal dynamics influence performance. Workloads fluctuate, data arrival patterns vary, and peak demand periods stress cluster capacity. Adaptive scheduling anticipates these fluctuations, redistributing tasks to avoid bottlenecks and optimize throughput.

Techniques such as speculative execution, task preemption, and dynamic concurrency adjustments allow pipelines to respond in real time to shifting conditions. CCD-410 aspirants are expected to conceptualize temporal load balancing strategies, translating abstract principles into concrete scheduling solutions. This temporal literacy ensures that pipelines remain performant under variable operational rhythms, aligning resource utilization with workload demand.
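As an illustration of how a scheduler might decide which tasks deserve a speculative copy, the sketch below flags running tasks that are much slower than the typical completed task. The threshold rule, the `slowdown` parameter, and the task ids are assumptions chosen for the example. Real schedulers apply their own criteria.

```python
from statistics import median


def speculation_candidates(running, completed_durations, slowdown=1.5):
    """Return ids of running tasks whose elapsed time exceeds
    `slowdown` times the median duration of completed tasks."""
    if not completed_durations:
        return []                      # no baseline yet, nothing to compare against
    threshold = slowdown * median(completed_durations)
    return sorted(task for task, elapsed in running.items() if elapsed > threshold)


running = {"t7": 40, "t8": 95, "t9": 61}     # seconds elapsed so far
completed = [38, 40, 42, 41]                 # seconds taken by finished tasks
print(speculation_candidates(running, completed))  # ['t8', 't9']
```

A lower `slowdown` value reacts to stragglers sooner but launches more duplicate work, which is the trade-off a candidate should be able to explain.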

Logging and Observability: The Diagnostic Nexus

Logs are not mere records; they are diagnostic gold. Comprehensive logging, coupled with observability metrics, illuminates pipeline behavior across dimensions of time, resource consumption, and error incidence. Advanced candidates correlate log patterns with performance anomalies, identifying subtle bottlenecks or latent failures.

Instrumenting pipelines with detailed metrics, analyzing task-level execution, and visualizing system state in dashboards creates a feedback loop that informs iterative optimization. CCD-410 scenarios frequently assess candidates’ proficiency in interpreting logs, requiring precise diagnosis and actionable recommendations. Mastery of this nexus transforms passive monitoring into an active tool for predictive and prescriptive interventions.
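The kind of log correlation described above can be practiced on a small sample. This sketch assumes a hypothetical log layout (`timestamp LEVEL task=<id> event=<name>`). Actual Hadoop or Spark logs are formatted differently, so the regular expression would need to be adapted.

```python
import re
from datetime import datetime

# Hypothetical line layout, chosen only for this example.
LINE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) (?P<level>\w+) "
    r"task=(?P<task>\S+) event=(?P<event>\w+)"
)


def summarize(lines):
    """Return (duration in seconds per task, error count per task)."""
    started, durations, errors = {}, {}, {}
    for line in lines:
        match = LINE.match(line)
        if not match:
            continue                                   # ignore lines in other formats
        ts = datetime.strptime(match["ts"], "%Y-%m-%d %H:%M:%S")
        task, event = match["task"], match["event"]
        if match["level"] == "ERROR":
            errors[task] = errors.get(task, 0) + 1
        if event == "start":
            started[task] = ts
        elif event == "end" and task in started:
            durations[task] = (ts - started.pop(task)).total_seconds()
    return durations, errors


sample = [
    "2024-01-01 10:00:00 INFO task=map_1 event=start",
    "2024-01-01 10:00:05 INFO task=map_2 event=start",
    "2024-01-01 10:00:30 INFO task=map_1 event=end",
    "2024-01-01 10:01:10 ERROR task=map_2 event=retry",
    "2024-01-01 10:03:05 INFO task=map_2 event=end",
]
durations, errors = summarize(sample)
print(durations, errors)
```

Here the task that logged an error is also the one that ran six times longer, which is exactly the sort of pattern worth surfacing on a dashboard.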

Data Locality and Computation Proximity

Efficiency in distributed computation is profoundly influenced by data locality. Placing computation near the data it reads reduces network transfer and improves throughput. Spark and Hadoop scheduling algorithms leverage locality preferences, yet the practitioner must understand when manual intervention is warranted.

Techniques such as node-affinity scheduling, co-locating input splits with computation nodes, and prefetching hot partitions augment automated scheduling. Candidates must reason about the trade-offs between locality optimization and load balancing, especially in heterogeneous clusters where storage distribution is uneven. CCD-410 questions often simulate skewed or geographically dispersed data, testing the ability to maintain locality without sacrificing parallelism.
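The tension between locality and load balancing can be seen in a simple greedy placement. The sketch below is an illustrative heuristic, not the algorithm of any specific scheduler. `assign_tasks`, the block ids, and the node names are hypothetical.

```python
def assign_tasks(block_locations, nodes, max_per_node):
    """Greedy placement: prefer a node that holds the block, and fall back to
    the least-loaded node when every local node is already full.

    Returns {block: (node, is_local)}.
    """
    load = {node: 0 for node in nodes}
    placement = {}
    for block, replicas in block_locations.items():
        local = [n for n in replicas if n in load and load[n] < max_per_node]
        candidates = local or [n for n in nodes if load[n] < max_per_node]
        if not candidates:
            raise RuntimeError("no free slot for " + block)
        chosen = min(candidates, key=lambda n: (load[n], n))
        load[chosen] += 1
        placement[block] = (chosen, chosen in replicas)
    return placement


# Skewed storage: three of the four blocks live only on n1.
blocks = {"b1": ["n1"], "b2": ["n1"], "b3": ["n1"], "b4": ["n2"]}
plan = assign_tasks(blocks, ["n1", "n2", "n3"], max_per_node=2)
print(plan)
```

With a cap of two tasks per node, the third block stored on `n1` is read remotely so that the cluster stays balanced. Raising the cap would restore locality at the cost of parallelism.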

Pipeline Refactoring and Evolutionary Optimization

Optimization is not a static endeavor; it is evolutionary. As datasets grow, workflows change, and cluster configurations evolve, pipelines require continuous refactoring. Candidates must assess historical performance metrics, identify emerging bottlenecks, and implement iterative improvements.

Refactoring may involve reordering transformations, replacing suboptimal joins, consolidating intermediate outputs, or adopting new storage formats. CCD-410 aspirants are expected to demonstrate not only technical acumen but strategic foresight, anticipating future workloads and designing pipelines resilient to growth. This mindset elevates optimization from a reactive process to a continuous, proactive discipline.

Orchestrating Multi-Stage Data Pipelines

Data pipelines rarely exist as isolated constructs; they are often woven into multi-stage architectures that span ingestion, transformation, enrichment, and analytical delivery. Oozie excels in managing these labyrinthine pipelines by allowing developers to sequence dependent tasks while maintaining visibility into the workflow’s state. Each stage can be fine-tuned, monitored, and retried independently, providing resilience against partial failures. For CCD-410 aspirants, mastering multi-stage orchestration requires not just familiarity with commands but an intuitive grasp of how data propagates through complex systems.

Temporal Nuances and Event-Driven Triggers

Temporal orchestration in Oozie extends beyond simple cron-like scheduling. A coordinator definition specifies a frequency, a start time, and an end time, which together determine when each run is due. Data-availability triggers, on the other hand, introduce reactive dynamism, holding a run back until its input data has arrived. In scenarios such as periodically landed sensor data or incremental log ingestion, the judicious combination of temporal and data-driven triggers ensures that workflows remain efficient without unnecessary resource consumption. Candidates must conceptualize these triggers not merely as mechanics but as instruments to optimize throughput and latency.
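The interaction between a time-based schedule and a data-availability condition can be sketched in a few lines. This is a simplified model for study purposes, not Oozie code: the function names are hypothetical, and the half-open interval (start included, end excluded) is an assumption of the sketch.

```python
from datetime import datetime, timedelta


def nominal_times(start, end, frequency_minutes):
    """List the scheduled (nominal) run times in the interval [start, end)."""
    step = timedelta(minutes=frequency_minutes)
    times = []
    current = start
    while current < end:
        times.append(current)
        current += step
    return times


def ready_runs(times, available_inputs):
    """Keep only the runs whose input for that nominal time has arrived."""
    return [t for t in times if t in available_inputs]


schedule = nominal_times(
    datetime(2024, 1, 1, 0, 0), datetime(2024, 1, 1, 6, 0), frequency_minutes=120
)
arrived = {datetime(2024, 1, 1, 0, 0), datetime(2024, 1, 1, 4, 0)}
print(len(schedule), [t.hour for t in ready_runs(schedule, arrived)])  # 3 [0, 4]
```

Three runs are due in the window, but only the two whose input has landed are released. The 02:00 run keeps waiting instead of consuming cluster resources on missing data.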

Advanced Error Recovery Patterns

While Oozie provides basic retry and failure handling, complex environments demand sophisticated error recovery patterns. Techniques such as idempotent task design, checkpointing, and compensatory workflows transform error-prone pipelines into self-healing architectures. Idempotence ensures that repeated executions do not corrupt downstream data, while checkpoints preserve intermediate states, allowing workflows to resume from the last successful stage rather than restarting entirely. These strategies mirror enterprise best practices, and CCD-410 assessments frequently probe candidates’ ability to architect resilient solutions under hypothetical failures.
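One common way to make a task idempotent is to skip work whose output already exists and to publish new output atomically. The sketch below shows that pattern on a local filesystem. `write_once` is a hypothetical helper, and on HDFS the same idea would be expressed with a temporary path followed by a rename.

```python
import os
import tempfile


def write_once(path, produce):
    """Write `produce()` to `path` unless the file already exists.

    The data goes to a temporary file first and is moved into place with
    os.replace, so a crash mid-write never leaves a partial output behind.
    Returns True if work was done, False if the output was already present.
    """
    if os.path.exists(path):
        return False
    directory = os.path.dirname(path) or "."
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "w") as handle:
            handle.write(produce())
        os.replace(tmp_path, path)          # atomic publish on the same filesystem
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)             # clean up the partial temporary file
        raise
    return True


with tempfile.TemporaryDirectory() as workdir:
    target = os.path.join(workdir, "part-00000.txt")
    first = write_once(target, lambda: "first run\n")
    second = write_once(target, lambda: "retry\n")   # a retried action is a no-op
    with open(target) as handle:
        content = handle.read()

print(first, second, repr(content))  # True False 'first run\n'
```

Because the retry leaves the published file untouched, downstream consumers see the same data no matter how many times the action is re-executed.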

Integrating Heterogeneous Analytical Engines

Oozie’s orchestration prowess shines most when coordinating disparate engines. A single workflow might combine a Hive ETL job, a Spark machine learning transformation, and a Pig aggregation, all harmonized to deliver a coherent data product. Understanding the idiosyncrasies of each engine—execution latency, resource utilization, and failure semantics—is essential for designing pipelines that balance performance and reliability. Practical exercises in integrating heterogeneous engines solidify the aspirant’s competence, fostering the ability to foresee bottlenecks and optimize execution strategies.

Sub-Workflow Hierarchies and Reusability

Sub-workflows represent an underappreciated facet of Oozie’s design philosophy. By decomposing large workflows into nested sub-units, developers gain modularity, readability, and reusability. Parameterization further amplifies flexibility, allowing a single sub-workflow to process multiple datasets or adapt to different runtime environments. These hierarchies reflect software engineering principles within the data domain, emphasizing separation of concerns, maintainability, and scalability. CCD-410 scenarios often reward aspirants who demonstrate both structural clarity and adaptive pipeline design.

Logging, Metrics, and Workflow Telemetry

Observability is the linchpin of effective orchestration. Oozie records the status, start and end times, and error codes of each action within a workflow, while resource utilization is usually read from the cluster's resource manager. Aspirants must develop the skill to interpret these logs, detect anomalies, and make informed adjustments. Beyond reactive troubleshooting, workflow telemetry supports proactive optimization, such as balancing load across cluster nodes, reducing task latency, and identifying redundant computations. Cultivating an analytic mindset toward telemetry data transforms workflow management from maintenance to strategic enhancement.

Conditional Branching and Dynamic Execution Paths

Conditional execution is more than a programming convenience; it is a mechanism to align workflows with business logic and data-driven imperatives. In Oozie, conditional branches can be predicated on data characteristics, job outcomes, or external triggers. Designing effective branching logic involves evaluating the probabilistic distribution of potential outcomes, mitigating latency in the longest paths, and ensuring idempotent execution where tasks may be retried. Mastery of these concepts allows aspirants to engineer workflows that are both intelligent and resilient, capable of adapting to real-time data realities.
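An Oozie decision node behaves like a switch statement: its cases are evaluated in order, the first one that is true determines the next action, and a default transition is taken when none match. The Python sketch below models only that evaluation rule. The function, the action names, and the size threshold are hypothetical.

```python
def decide(cases, default, context):
    """Return the target of the first case whose predicate is true,
    or `default` when no case matches (first match wins)."""
    for target, predicate in cases:
        if predicate(context):
            return target
    return default


cases = [
    ("skip-processing", lambda ctx: ctx["input_bytes"] == 0),
    ("full-rebuild", lambda ctx: ctx["input_bytes"] > 10 * 1024**3),
    ("incremental-load", lambda ctx: ctx["previous_status"] == "SUCCEEDED"),
]

print(decide(cases, "notify-operator", {"input_bytes": 0, "previous_status": "SUCCEEDED"}))
print(decide(cases, "notify-operator", {"input_bytes": 512, "previous_status": "FAILED"}))
```

Because order matters, the cheapest and most decisive checks are placed first. The empty-input case short-circuits before any heavier path is considered.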

Security, Permissions, and Governance Considerations

Enterprise-scale orchestration cannot ignore the imperatives of security and governance. Oozie integrates with Hadoop’s security model, ensuring that workflows execute with appropriate permissions, data access is restricted to authorized entities, and audit trails are preserved for compliance purposes. Candidates should understand how to configure workflow credentials, handle sensitive datasets, and integrate with Kerberos or LDAP authentication mechanisms. Governance-conscious design is increasingly scrutinized in CCD-410 assessments, reflecting the intersection of technical skill and organizational responsibility.

Workflow Optimization and Resource Efficiency

Beyond functional correctness, high-performing pipelines require optimization at multiple levels. Resource allocation, parallelism, and task prioritization directly influence throughput and cost efficiency. Oozie allows developers to define concurrent execution paths, throttle job execution, and assign resources judiciously. Understanding the trade-offs between parallelism and cluster contention is crucial for designing efficient pipelines. CCD-410 aspirants benefit from simulating scenarios that balance speed, reliability, and resource utilization, reflecting real-world constraints in distributed data environments.
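The effect of throttling concurrent execution can be demonstrated with a bounded thread pool. This is a local analogy, assuming hypothetical job callables. It is not how Oozie itself limits concurrency, but it shows the trade-off between parallelism and contention.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_throttled(jobs, max_concurrent):
    """Run callables with at most `max_concurrent` in flight.

    Returns (results in submission order, peak concurrency observed).
    """
    lock = threading.Lock()
    state = {"active": 0, "peak": 0}

    def tracked(job):
        with lock:
            state["active"] += 1
            state["peak"] = max(state["peak"], state["active"])
        try:
            return job()
        finally:
            with lock:
                state["active"] -= 1

    # The pool size is the throttle: extra jobs queue until a worker frees up.
    with ThreadPoolExecutor(max_workers=max_concurrent) as pool:
        results = list(pool.map(tracked, jobs))
    return results, state["peak"]


def make_job(n):
    def job():
        time.sleep(0.01)       # stand-in for real work
        return n * 2
    return job


results, peak = run_throttled([make_job(n) for n in range(6)], max_concurrent=2)
print(results, peak)
```

Raising `max_concurrent` shortens the wall-clock time until the jobs begin to compete for the same resources, at which point adding parallelism stops helping.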

Temporal Recovery and Catch-Up Execution

In dynamic environments, workflows may occasionally miss scheduled triggers due to cluster downtime or upstream delays. Oozie’s ability to support catch-up execution enables missed jobs to be retroactively processed, preserving data completeness. Implementing catch-up requires careful planning to avoid overwhelming cluster resources or introducing data duplication. Candidates should develop strategies to determine which missed executions are critical, how to schedule them efficiently, and how to maintain idempotency to prevent inconsistencies in downstream analytics.
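Deciding which missed runs to execute, and in what order, can be modeled as a small planning function. The policy names below follow the execution orders commonly associated with Oozie coordinators (FIFO, LIFO, LAST_ONLY). The function itself and its `batch_size` parameter are hypothetical simplifications for illustration.

```python
def plan_catch_up(missed, policy="FIFO", batch_size=None):
    """Order missed nominal times according to `policy`.

    FIFO      -> oldest first (complete backfill, in order)
    LIFO      -> newest first (freshest data first)
    LAST_ONLY -> only the most recent run, discarding the older ones
    `batch_size` optionally caps how many runs are released at once.
    """
    ordered = sorted(missed)
    if policy == "LIFO":
        ordered.reverse()
    elif policy == "LAST_ONLY":
        ordered = ordered[-1:]
    elif policy != "FIFO":
        raise ValueError("unknown policy: " + policy)
    return ordered if batch_size is None else ordered[:batch_size]


missed = ["2024-01-01T04:00", "2024-01-01T00:00", "2024-01-01T02:00"]
print(plan_catch_up(missed))
print(plan_catch_up(missed, policy="LIFO", batch_size=2))
print(plan_catch_up(missed, policy="LAST_ONLY"))
```

A full FIFO backfill preserves completeness, while LAST_ONLY suits jobs that overwrite a snapshot. Capping the batch size keeps the backlog from overwhelming the cluster.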

Metrics-Driven Decision Making

Data orchestration is increasingly guided by metrics rather than intuition. Oozie workflows can generate actionable insights regarding latency, success rates, and resource consumption. By leveraging these metrics, developers can implement continuous improvement cycles, adjusting workflow design to optimize performance over time. Aspirants who internalize metrics-driven decision-making demonstrate the cognitive agility expected at the CCD-410 level, translating raw workflow data into strategic enhancements.

Ecosystem Interoperability and Extensibility

Modern orchestration requires not only mastery of a single tool but the ability to navigate ecosystem interoperability. Oozie’s XML-based workflow definitions, sub-workflows, and parameterization make it extensible, allowing it to work alongside other orchestration frameworks, monitoring systems, and cloud-based platforms. Understanding these interactions enables aspirants to design hybrid architectures that leverage the strengths of multiple tools without redundancy or conflict. This forward-looking perspective aligns with real-world expectations where ecosystems evolve rapidly and integration skills are paramount.

Conclusion

The journey through the Cloudera Certified Developer (CCD-410) certification is both challenging and rewarding. Across six comprehensive parts, we explored the full spectrum of knowledge required to become a proficient Cloudera developer—from foundational concepts to advanced performance tuning and real-world project implementation. Candidates who engage deeply with these topics develop not only the skills to pass the exam but also the capability to tackle complex, large-scale data challenges in professional environments.

Foundational understanding of Hadoop, HDFS, and MapReduce sets the stage, providing clarity on how data is stored, processed, and managed across distributed clusters. Hive, Impala, and Pig allow developers to interact with this data efficiently, each offering its own strength: Hive in batch SQL processing, Impala in low-latency interactive queries, and Pig in scripted multi-step transformations. Mastery of these tools requires both theoretical knowledge and extensive hands-on practice, enabling developers to make informed decisions about query design, optimization, and workflow integration.

Spark further elevates a developer’s skillset, introducing high-performance, in-memory data processing. Concepts such as RDDs, DataFrames, Datasets, partitioning, shuffling, and caching are crucial for building efficient, scalable pipelines. Equally important is the ability to diagnose performance issues, optimize execution, and design workflows that handle large datasets reliably.

Workflow orchestration with Oozie ensures that these pipelines operate seamlessly. Scheduling, dependencies, error handling, and modular design are essential for maintaining robust, maintainable pipelines. Combining orchestration with advanced optimization techniques—such as tuning job parameters, managing cluster resources, and implementing fault-tolerant strategies—prepares developers to solve real-world challenges and respond to dynamic data environments.

Finally, the emphasis on practical projects, scenario-based problem-solving, and disciplined exam strategies bridges the gap between knowledge and certification success. Real-world practice strengthens intuition, sharpens analytical thinking, and fosters confidence, ensuring that candidates are prepared for both the CCD-410 exam and professional responsibilities in enterprise data environments.

In essence, mastering CCD-410 is not just about certification—it’s about cultivating a mindset of continuous learning, problem-solving, and adaptability. By combining conceptual mastery, hands-on experience, and strategic thinking, aspirants emerge as capable, confident Cloudera developers, ready to leverage big data to drive insights, innovation, and tangible results. The journey may be rigorous, but the skills acquired are invaluable, setting the stage for a successful career in the ever-evolving field of big data.