Exam Code: TB0-124

Exam Name: TIBCO MDM 8

Certification Provider: Tibco

Corresponding Certification: TCP

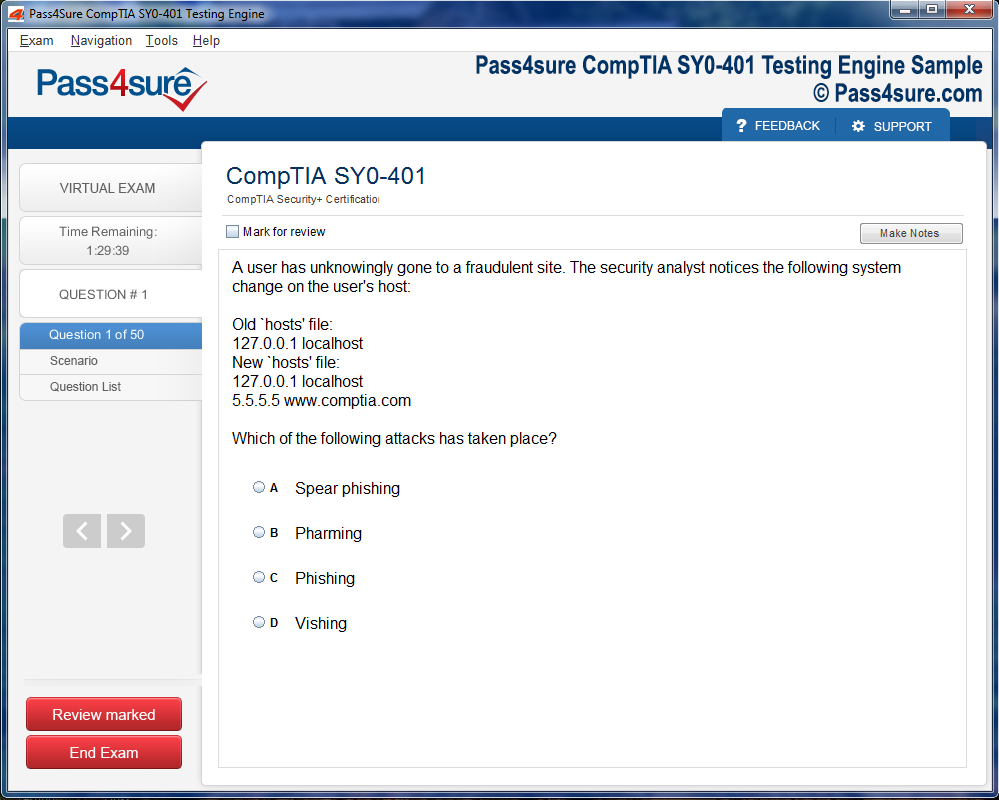

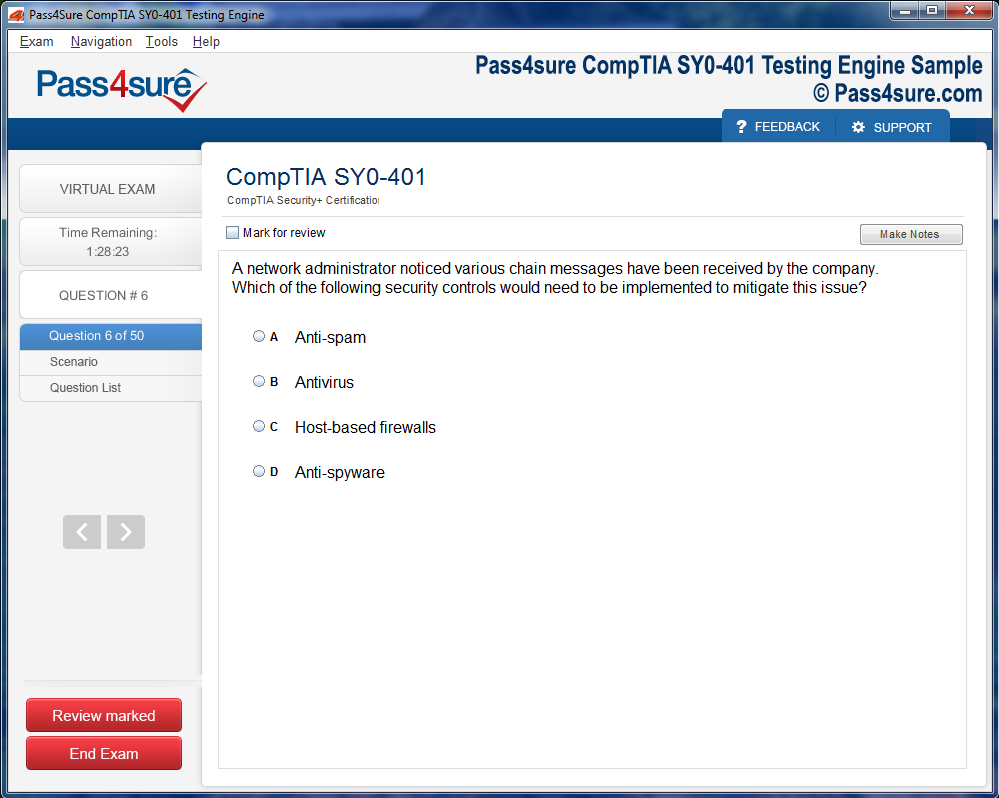

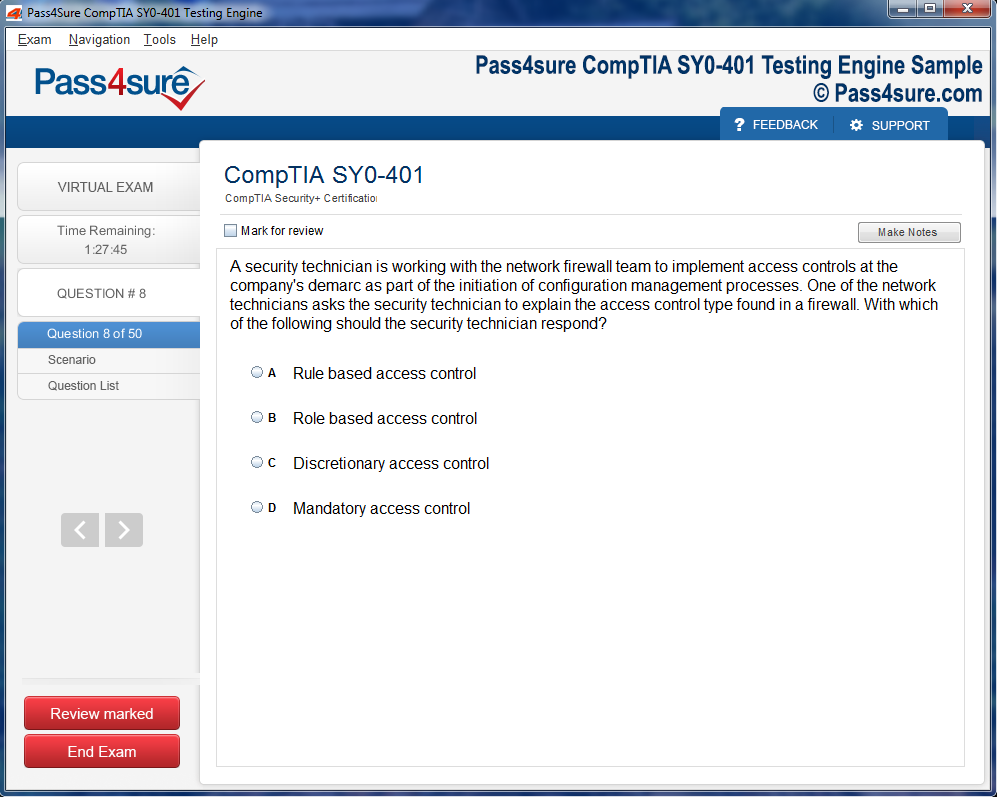

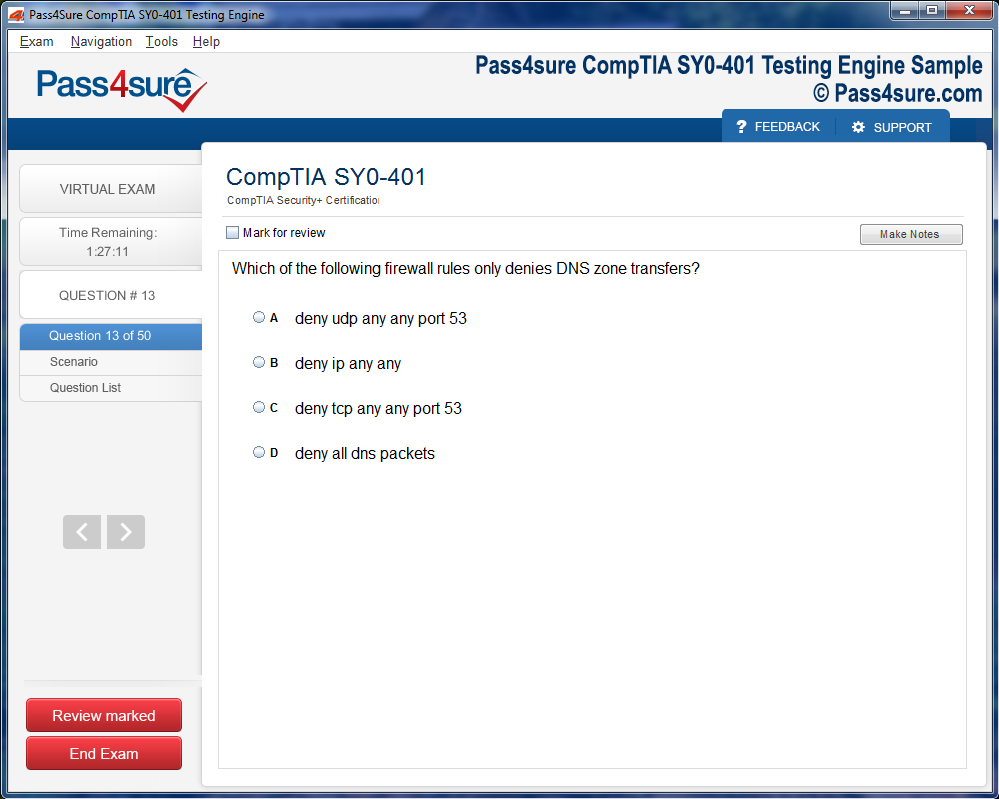

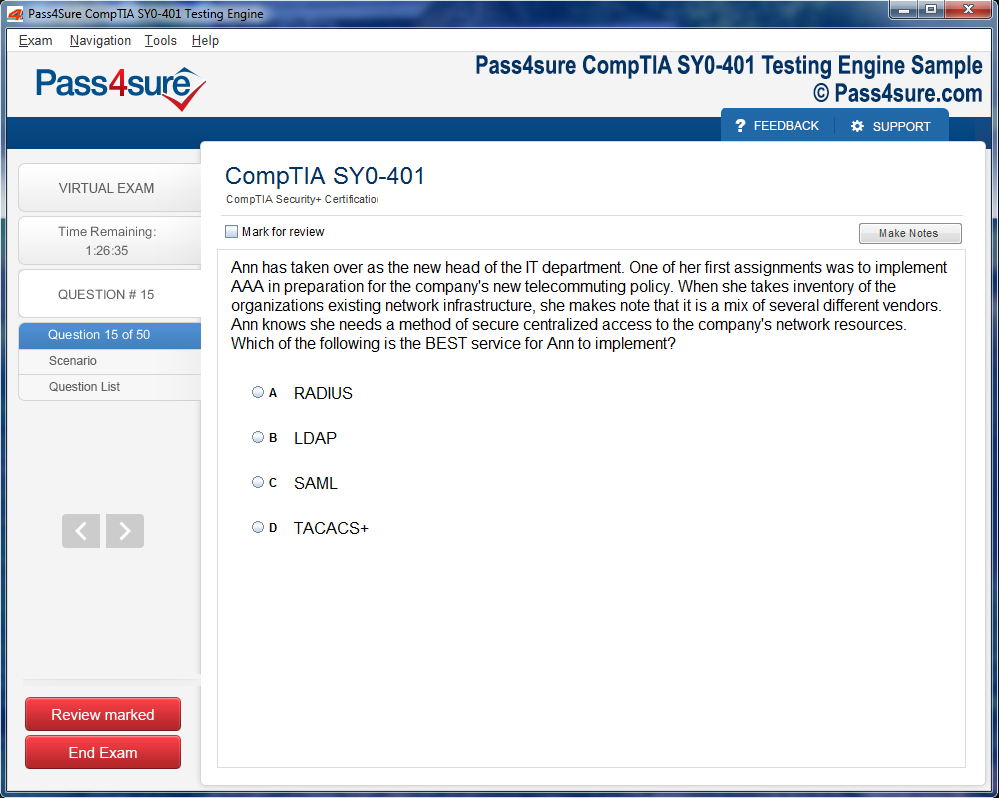

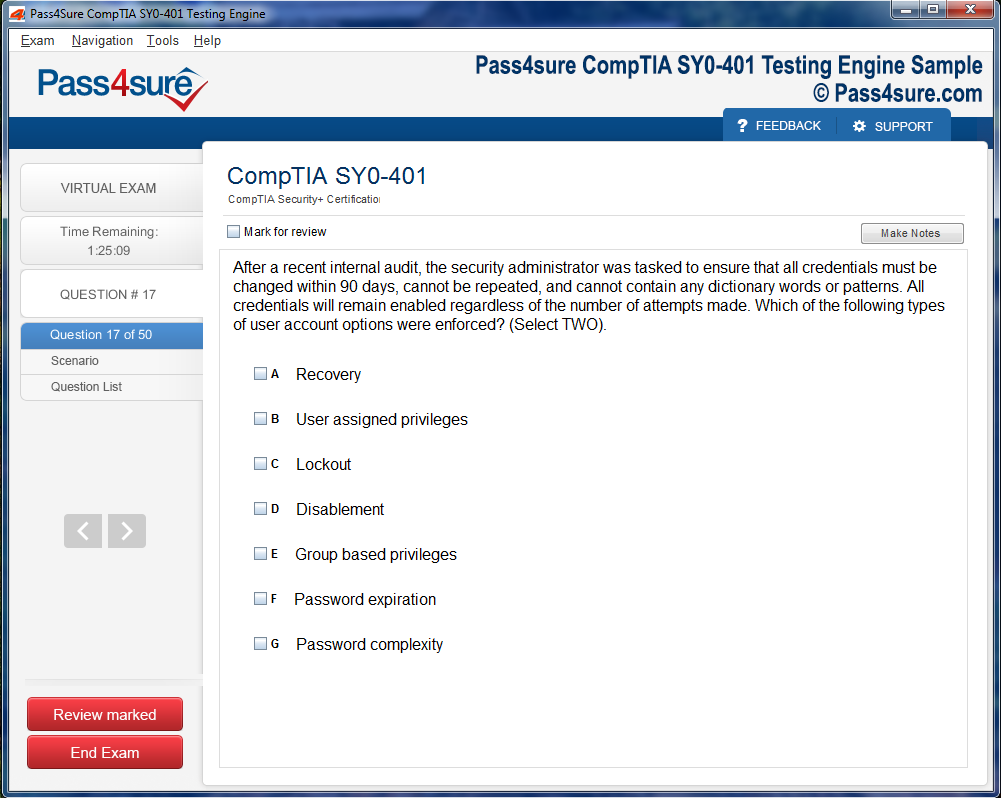

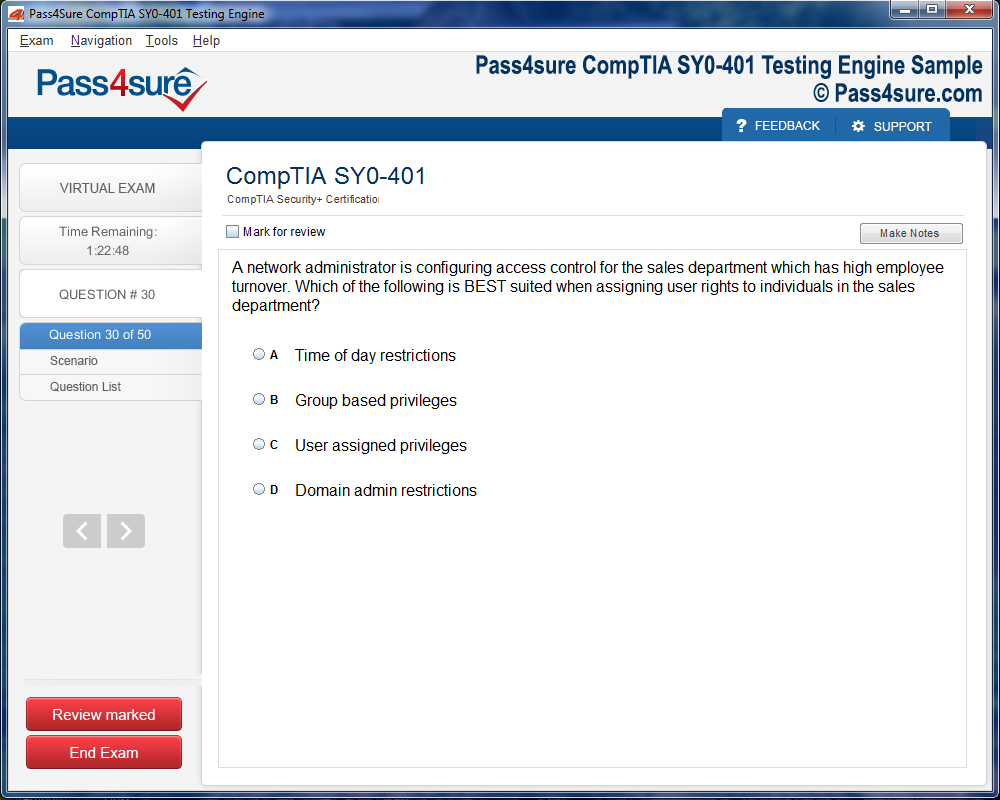

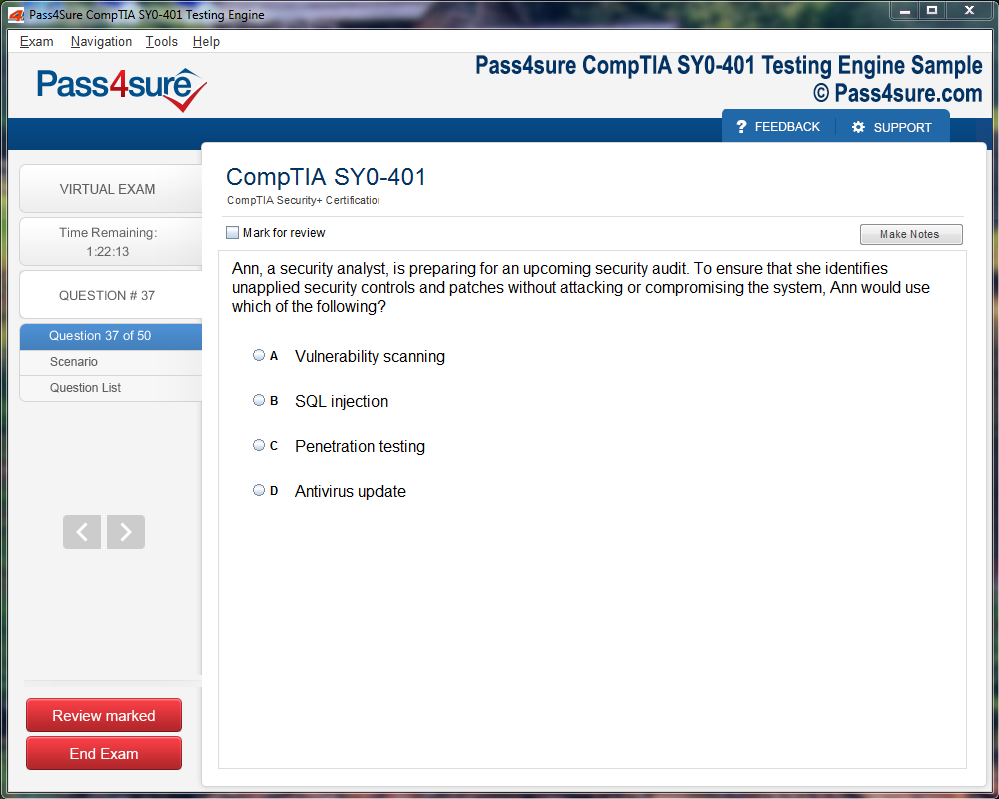

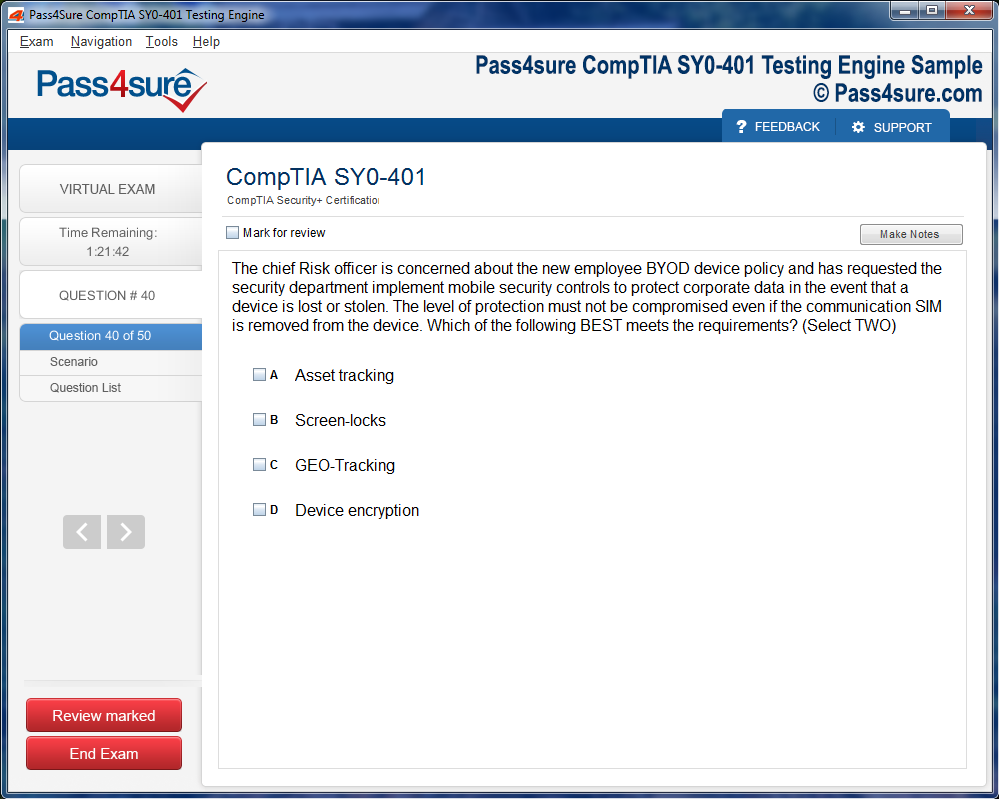

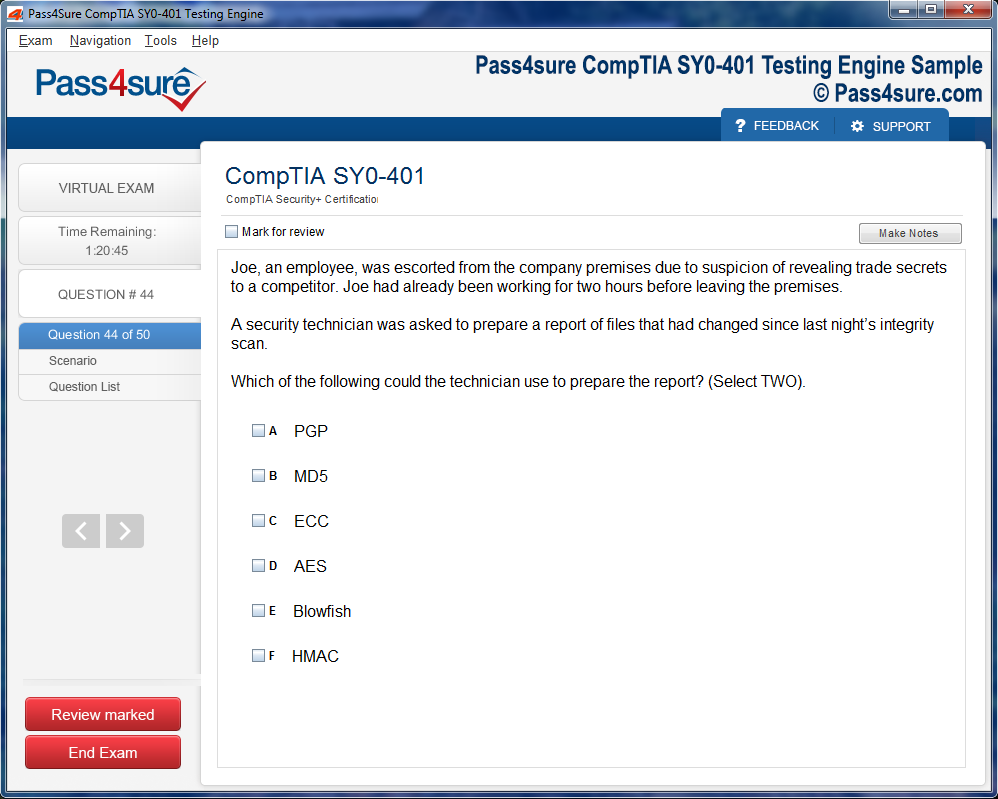

Product Screenshots

Frequently Asked Questions

How does your testing engine work?

Once the software is downloaded and installed on your PC, you can practise test questions and review your questions and answers using two different options: 'practice exam' and 'virtual exam'. Virtual Exam: test yourself on exam questions under a time limit, as if you were taking the exam in a Prometric or VUE testing centre. Practice Exam: review exam questions one by one and see the correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products have a validity of 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions or updates and changes by our editing team, will be automatically downloaded onto your computer, so that you get the latest exam prep materials during those 90 days.

Can I renew my product when it has expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes to the actual question pools of the different vendors. As soon as we learn of a change in an exam's question pool, we update the products as quickly as possible.

On how many computers can I download Pass4sure software?

You can download the Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB of available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine runs on Windows. Android and iOS versions are currently under development.

TB0-124 Certification Exam Secrets Every Candidate Should Know

The TIBCO Master Data Management (MDM) 8 platform is a central tool in modern enterprise data landscapes. Organizations increasingly need to harmonize information across many systems, applications, and departments, and TIBCO MDM addresses this need by providing a centralized mechanism for managing critical business data. The platform keeps that data consistent, accurate, and accessible, so that business decisions rest on reliable information.

Unlike traditional data management systems, TIBCO MDM does not merely store data. It establishes a framework in which data quality, governance, and lifecycle management coexist. Its data stewardship tools let organizations monitor, cleanse, and enrich their data. This unified approach reduces redundancy, minimizes errors, and streamlines operations across the enterprise. The platform’s architecture is designed to be scalable and adaptable, accommodating evolving business requirements and integrating with existing infrastructure.

Data Modeling and Structure

One of the cornerstone capabilities of TIBCO is its sophisticated data modeling functionality. The platform allows professionals to define complex data structures that represent real-world business entities accurately. By implementing hierarchical, relational, and attribute-based models, organizations can capture a comprehensive view of their data landscape. Data modeling in TIBCO extends beyond basic definitions; it incorporates rules, constraints, and relationships that reflect the interdependencies within the business.

Proper data modeling ensures that information is consistent, accurate, and usable across all applications. The platform provides intuitive tools to visualize entities, relationships, and hierarchies. This visualization capability aids in understanding complex data interactions and supports better decision-making. Moreover, data models in TIBCO can evolve over time, adapting to new business processes, regulatory requirements, or emerging data sources. Professionals skilled in data modeling within the MDM environment can design frameworks that minimize duplication, enforce consistency, and enable seamless data integration.
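
To make entities, attributes, and relationships concrete, here is a minimal Python sketch. It is not TIBCO MDM's repository format or API; the `Entity`, `Attribute`, and `Relationship` classes are hypothetical names used only to illustrate how a model can capture structure together with constraints such as required attributes.

```python
from dataclasses import dataclass, field

# Hypothetical, simplified model classes; TIBCO MDM defines its data model
# with its own tooling. These only illustrate the concepts in the text.

@dataclass
class Attribute:
    name: str
    data_type: type
    required: bool = False

@dataclass
class Relationship:
    name: str
    target: str       # name of the related entity
    cardinality: str  # e.g. "1:1", "1:N", "N:M"

@dataclass
class Entity:
    name: str
    attributes: list[Attribute] = field(default_factory=list)
    relationships: list[Relationship] = field(default_factory=list)

    def required_attributes(self) -> list[str]:
        """Names of attributes that every record of this entity must populate."""
        return [a.name for a in self.attributes if a.required]

customer = Entity(
    "Customer",
    attributes=[
        Attribute("customer_id", str, required=True),
        Attribute("name", str, required=True),
        Attribute("email", str),
    ],
    relationships=[Relationship("billing_address", "Address", "1:N")],
)
```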

Data Governance and Stewardship

TIBCO emphasizes the critical role of data governance in maintaining high-quality information. Governance within the platform involves defining policies, standards, and workflows that ensure data is reliable, secure, and compliant with organizational rules. Effective data governance promotes accountability and transparency, making certain that stakeholders understand the origin, usage, and lifecycle of each data element.

Data stewardship is an essential component of governance in TIBCO MDM. Stewards are responsible for monitoring data quality, resolving inconsistencies, and validating changes. The platform provides tools for automated validation, alert generation, and exception handling, allowing stewards to address issues efficiently. By integrating governance into everyday operations, TIBCO ensures that data is not only accurate but also aligned with strategic business goals. This structured oversight reduces operational risks, prevents compliance violations, and enhances the credibility of data-driven decisions.

User Management and Security

Managing user access and maintaining security are paramount in enterprise environments, and TIBCO addresses these needs comprehensively. The platform offers flexible user management mechanisms, allowing administrators to define roles, permissions, and responsibilities at granular levels. By controlling access to sensitive data, organizations safeguard against unauthorized use, manipulation, or leakage of critical information.

TIBCO provides tools for authentication, authorization, and auditing, which are integral to maintaining data security. Administrators can track changes, monitor user activities, and generate reports for compliance purposes. The system supports collaborative workflows while ensuring that only authorized personnel can modify or approve specific data sets. By implementing rigorous security and user management practices, businesses mitigate potential risks and cultivate a culture of accountability among employees and stakeholders.
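
The change-tracking idea can be sketched as an append-only audit log. The `AuditedStore` class below is a hypothetical Python illustration, not a TIBCO MDM component; it records who changed which attribute, the old and new values, and when.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class AuditEntry:
    user: str
    record_id: str
    attribute: str
    old_value: object
    new_value: object
    timestamp: datetime

class AuditedStore:
    """Hypothetical record store that logs every attribute change."""

    def __init__(self):
        self.records: dict[str, dict] = {}
        self.audit_log: list[AuditEntry] = []  # entries are only ever appended

    def update(self, user: str, record_id: str, attribute: str, value) -> None:
        record = self.records.setdefault(record_id, {})
        old = record.get(attribute)
        record[attribute] = value
        self.audit_log.append(
            AuditEntry(user, record_id, attribute, old, value, datetime.now(timezone.utc))
        )

    def history(self, record_id: str) -> list[AuditEntry]:
        """All changes to one record, oldest first."""
        return [e for e in self.audit_log if e.record_id == record_id]
```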

Data Integration and Interoperability

In today’s interconnected digital landscape, data rarely exists in isolation. Organizations utilize multiple applications, databases, and platforms, making integration a critical requirement. TIBCO excels in connecting diverse systems, ensuring that data flows seamlessly across the enterprise. The platform supports various integration protocols, allowing synchronization between internal systems, external partners, and cloud-based services.

Integration in TIBCO is not merely a technical function; it is a strategic enabler for unified data management. By connecting disparate sources, the platform creates a single version of truth, reducing redundancy and conflicting information. Data enrichment and transformation capabilities allow organizations to harmonize incoming information, ensuring consistency with predefined standards. The interoperability of TIBCO facilitates real-time data updates, automated workflows, and analytics-ready datasets, empowering businesses to act decisively based on reliable insights.
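
One common way to produce a single version of truth is a survivorship rule that merges source records into a golden record. The sketch below assumes a simple rule (the most recently updated non-empty value wins); the rule, the record layout, and the function name are illustrative assumptions, not TIBCO MDM's built-in behavior.

```python
from datetime import date

def build_golden_record(source_records: list[dict]) -> dict:
    """Merge source records into one golden record.

    Assumed survivorship rule (illustrative only): for each attribute, the
    non-empty value from the most recently updated source wins.
    """
    golden: dict = {}
    # Process oldest first, so newer records overwrite older values.
    for record in sorted(source_records, key=lambda r: r["updated"]):
        for attr, value in record["data"].items():
            if value not in (None, ""):
                golden[attr] = value
    return golden

crm = {"source": "CRM", "updated": date(2024, 3, 1),
       "data": {"name": "Acme Corp", "phone": "555-0100", "email": ""}}
erp = {"source": "ERP", "updated": date(2024, 5, 1),
       "data": {"name": "ACME Corporation", "phone": None, "email": "ap@acme.example"}}
```

Because the records are sorted by update time before merging, the result does not depend on the order in which the sources arrive.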

System Configuration and Administration

Efficient configuration and administration are essential to realizing the full potential of TIBCO MDM. The platform provides administrators with a comprehensive suite of tools to customize settings, define business rules, and monitor system performance. Configuration is designed to be intuitive, enabling rapid deployment while maintaining flexibility for complex business scenarios.

Administrators can set up validation rules, approval workflows, and alert mechanisms that align with organizational objectives. The platform also supports scheduling, batch processing, and task automation, which enhance operational efficiency. System monitoring tools provide real-time insights into performance, usage patterns, and potential bottlenecks. By mastering configuration and administration, professionals ensure that the MDM system operates optimally, providing reliable data services to all stakeholders and supporting continuous improvement initiatives.

Enhancing Data Quality and Analytics

Data quality is at the heart of TIBCO MDM’s value proposition. The platform incorporates mechanisms to cleanse, standardize, and enrich data, eliminating inconsistencies that can undermine business decisions. High-quality data is essential for accurate reporting, predictive modeling, and strategic planning. TIBCO MDM lets organizations detect anomalies, merge duplicate records, and enforce standardization rules consistently.
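
Duplicate detection typically starts by comparing records on a normalized match key. This hypothetical sketch groups records whose cleaned company name and postal code are identical. It uses exact matching on the key only, which is far simpler than the fuzzy matching a production match engine would apply.

```python
import re
from collections import defaultdict

def match_key(record: dict) -> tuple[str, str]:
    """Hypothetical match key: normalized company name plus postal code."""
    name = re.sub(r"[^a-z0-9 ]", "", record["name"].lower())        # drop punctuation
    name = re.sub(r"\b(inc|corp|corporation|ltd|llc)\b", "", name)  # drop legal suffixes
    name = " ".join(name.split())                                   # collapse whitespace
    return name, record["postal_code"].replace(" ", "").upper()

def find_duplicates(records: list[dict]) -> list[list[dict]]:
    """Return groups of records that share the same match key."""
    groups = defaultdict(list)
    for r in records:
        groups[match_key(r)].append(r)
    return [g for g in groups.values() if len(g) > 1]
```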

The improved data quality achieved through TIBCO has a direct impact on analytics and business intelligence initiatives. Reliable data enables accurate trend analysis, customer insights, and operational forecasting. Organizations can leverage this information to identify opportunities, mitigate risks, and optimize processes. The platform’s data quality features integrate seamlessly with reporting tools and analytics engines, providing a robust foundation for advanced decision-making. By maintaining rigorous data standards, businesses cultivate trust in their data and strengthen their competitive advantage.

Conclusion of Professional Significance

Mastery of TIBCO translates into significant professional value. Certification through exams like TB0-124 validates one’s expertise in deploying, managing, and optimizing the platform. Professionals skilled in MDM implementation are capable of transforming fragmented data environments into cohesive, well-governed ecosystems. They facilitate strategic decision-making, improve operational efficiency, and ensure regulatory compliance. The platform’s comprehensive suite of capabilities requires a holistic understanding, encompassing data modeling, governance, integration, security, and quality management.

Possessing TIBCO certification signals to employers that an individual can navigate complex data landscapes, configure systems efficiently, and uphold high standards of data quality. Certified professionals are well-positioned to lead MDM initiatives, contribute to enterprise-wide digital transformation, and support sustainable business growth. Their expertise ensures that organizations fully capitalize on their data assets, fostering innovation and enabling smarter, data-driven strategies.

Understanding the Architecture of TIBCO

The architecture of TIBCO MDM is sophisticated yet coherent, designed to let organizations manage master data with precision. At its core is a service-oriented architecture, a framework that promotes modularity, reusability, and integration. Each component can operate independently while still working within the larger system, which lets the platform scale for enterprises of varying sizes.

The MDM Server is the central pillar of this architecture. It functions as the authoritative repository for master data, meticulously managing the lifecycle of data entities from creation to retirement. The server is not merely a storage mechanism; it incorporates robust business logic that enforces data consistency, integrity, and validation. This ensures that all records across the enterprise adhere to predefined standards and are synchronized across multiple systems.

Around the MDM Server sit various specialized modules, each handling a specific facet of data management such as cleansing, validation, enrichment, or synchronization. The data cleansing module scrutinizes incoming records to detect anomalies, redundancies, and inconsistencies, correcting them before they propagate across the organization. Enrichment modules supplement raw data with additional context, integrating information from external sources to create a more complete view of entities. Synchronization modules propagate updates accurately and promptly across integrated systems, preventing discrepancies that could disrupt business operations.
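
A cleansing step of the kind described above can be sketched as a function that standardizes values and reports anomalies instead of silently accepting them. The specific rules (lower-casing emails, keeping only phone digits, upper-casing country codes) are illustrative assumptions, not TIBCO MDM's shipped cleansing logic.

```python
import re

def cleanse(record: dict) -> tuple[dict, list[str]]:
    """Return a standardized copy of the record plus a list of detected issues.

    The rules below are illustrative assumptions only.
    """
    # Trim surrounding whitespace from every string value.
    clean = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
    issues = []
    if "email" in clean:
        clean["email"] = clean["email"].lower()
        if not re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", clean["email"]):
            issues.append("email: invalid format")
    if "phone" in clean:
        digits = re.sub(r"\D", "", clean["phone"])  # keep digits only
        clean["phone"] = digits
        if len(digits) < 7:
            issues.append("phone: too few digits")
    if "country" in clean:
        clean["country"] = clean["country"].upper()
    return clean, issues
```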

The User Interface layer in TIBCO is meticulously designed to provide an intuitive experience for administrators, data stewards, and business users. This layer presents dashboards that consolidate essential metrics, offering a comprehensive view of data health and operational efficiency. Through configurable reports and interactive screens, users can perform advanced searches, monitor workflows, and manage data entities with minimal technical intervention. This design philosophy emphasizes user empowerment, ensuring that data governance practices are seamlessly embedded into day-to-day operations.

Integration capabilities in TIBCO are both versatile and robust, reflecting the platform’s adaptability to complex enterprise environments. The system supports multiple integration paradigms, including web services, messaging protocols, and batch processing. Web services enable real-time communication between disparate applications, while messaging systems allow asynchronous exchanges, ensuring that critical updates are transmitted even in high-volume scenarios. Batch processing remains vital for bulk operations, facilitating periodic data synchronization and migration tasks without affecting system performance. These integration mechanisms collectively ensure that master data is harmonized across the enterprise, eliminating silos and enabling a single source of truth.

Security considerations in TIBCO are meticulously embedded throughout the architecture. The platform employs role-based access control to delineate permissions and responsibilities, ensuring that users only access data pertinent to their functions. Encryption protocols safeguard sensitive information both in transit and at rest, mitigating risks associated with unauthorized access. Additionally, audit logging provides comprehensive visibility into system interactions, enabling organizations to track changes, monitor compliance, and respond swiftly to anomalies. By integrating these security measures into the core architecture, TIBCO establishes a resilient framework that balances accessibility with data protection.

Scalability and performance optimization are also inherent features of the architecture. The service-oriented design allows the platform to scale horizontally, accommodating increasing data volumes and user loads without compromising responsiveness. Advanced caching mechanisms reduce latency in data retrieval, while optimized query execution ensures that operations remain efficient even under high demand. This focus on performance is crucial for enterprises that rely on real-time insights and rapid decision-making processes.
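
The effect of caching on repeated lookups can be illustrated with Python's standard `functools.lru_cache`. The repository query here is a hypothetical stand-in that only counts how often the slow path runs. The sketch also shows the main cost of caching master data: updates must invalidate stale entries.

```python
from functools import lru_cache

backend_calls = {"count": 0}  # tracks how often the slow path runs

def query_repository(record_id: str) -> dict:
    """Hypothetical stand-in for an expensive repository lookup."""
    backend_calls["count"] += 1
    return {"id": record_id, "name": f"Record {record_id}"}

@lru_cache(maxsize=1024)
def get_record_cached(record_id: str) -> dict:
    # Note: callers share the cached dict, so they must treat it as read-only.
    return query_repository(record_id)

def update_record(record_id: str, changes: dict) -> None:
    # Writing the changes to the repository is omitted in this sketch.
    # lru_cache can only be cleared as a whole, which is coarse but keeps
    # cached reads from returning stale data after an update.
    get_record_cached.cache_clear()
```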

Understanding the underlying architecture of TIBCO is indispensable for professionals preparing for the TB0-124 certification. A comprehensive grasp of how each component interconnects and operates allows candidates to design and implement robust MDM solutions. By recognizing the interplay between the MDM Server, supporting modules, user interface, integration frameworks, and security protocols, professionals can architect systems that not only maintain data integrity but also drive business efficiency and strategic advantage.

TIBCO MDM’s architecture also promotes flexibility, which is particularly important in dynamic business environments. Organizations frequently encounter evolving requirements, whether due to regulatory mandates, market expansions, or technological advancements. The platform’s modular nature allows for the addition, removal, or modification of components without destabilizing the entire system. This adaptability reduces operational risks and enhances the organization’s ability to respond to unforeseen challenges, ensuring sustained value from the MDM investment.

The orchestration of workflows within the architecture is another critical aspect. TIBCO incorporates sophisticated workflow engines that automate routine tasks, enforce business rules, and coordinate interactions between modules. Workflows ensure that data processes follow predefined sequences, reducing manual intervention and minimizing errors. These orchestrated processes are particularly valuable in complex scenarios where multiple systems interact or when data must pass through numerous validation and enrichment stages. By embedding workflow intelligence into the architecture, TIBCO enhances operational efficiency and ensures consistent application of governance policies.
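
An orchestrated workflow can be sketched as an ordered list of steps, where each step either returns the (possibly enriched) record or stops the process. The step names, the region lookup table, and the `run_workflow` helper are hypothetical; this is not how TIBCO MDM's workflow engine is configured.

```python
from typing import Callable

Step = Callable[[dict], dict]

class StepFailed(Exception):
    """Raised by a step to stop the workflow."""

def require_fields(*names: str) -> Step:
    """Validation step: fail if any named field is missing or empty."""
    def step(record: dict) -> dict:
        missing = [n for n in names if not record.get(n)]
        if missing:
            raise StepFailed(f"missing: {', '.join(missing)}")
        return record
    return step

def add_region(record: dict) -> dict:
    """Hypothetical enrichment step: derive a sales region from the country."""
    regions = {"US": "AMER", "CA": "AMER", "DE": "EMEA", "JP": "APAC"}
    return {**record, "region": regions.get(record["country"], "UNKNOWN")}

def run_workflow(record: dict, steps: list[Step]) -> tuple[str, dict]:
    """Run steps in order; stop at the first failure and report it."""
    for step in steps:
        try:
            record = step(record)
        except StepFailed as exc:
            return f"rejected ({exc})", record
    return "approved", record

steps = [require_fields("name", "country"), add_region]
```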

Data quality management is deeply intertwined with architectural design. The platform provides mechanisms for continuous monitoring, profiling, and assessment of data health. Metrics such as completeness, consistency, accuracy, and timeliness are measured against organizational standards, with automated alerts highlighting deviations. This proactive approach to data quality, integrated directly into the architecture, ensures that organizations can maintain a high level of confidence in their master data.
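
Completeness, one of the metrics named above, is straightforward to compute: the share of records that carry a non-empty value for an attribute. The sketch below adds an alert when a score falls under a threshold; the 95% default is an assumption chosen for illustration.

```python
def completeness(records: list[dict], attributes: list[str]) -> dict[str, float]:
    """Share of records with a non-empty value for each attribute (0.0 to 1.0)."""
    total = len(records)
    return {
        attr: (sum(1 for r in records if r.get(attr) not in (None, "")) / total)
        if total else 0.0
        for attr in attributes
    }

def quality_alerts(scores: dict[str, float], threshold: float = 0.95) -> list[str]:
    """One alert message per attribute whose score is below the threshold."""
    return [f"{attr} completeness {score:.0%} below {threshold:.0%}"
            for attr, score in scores.items() if score < threshold]
```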

Finally, TIBCO MDM’s architecture is designed to support extensibility and future-proofing. APIs and plugin frameworks allow developers to extend core functionality, integrate emerging technologies, or incorporate advanced analytics tools. This forward-looking capability ensures that the platform remains relevant as business landscapes evolve, enabling organizations to leverage new insights, automation techniques, and technological innovations without the need for a complete system overhaul.

In essence, the architecture of TIBCO is a harmonious blend of robustness, flexibility, and intelligence. Each component, from the MDM Server to integration frameworks, user interfaces, and security modules, contributes to a cohesive ecosystem that ensures data integrity, operational efficiency, and strategic insight. Mastery of this architecture equips professionals with the knowledge to implement MDM solutions that are resilient, scalable, and aligned with organizational objectives.

The Essence of Data Modeling in TIBCO

Data modeling in TIBCO forms the backbone of enterprise master data management. It allows organizations to structure complex information in a way that mirrors real-world relationships, ensuring clarity and consistency across systems. The platform supports a highly flexible modeling framework, empowering professionals to define entities, attributes, and relationships that reflect the organization’s operational reality. The emphasis is on creating a coherent and adaptable structure that supports business processes without introducing unnecessary complexity. By carefully defining entities and their characteristics, organizations can ensure that master data remains accurate, complete, and reliable.

The Business Object Model (BOM) is central to this process. BOM acts as the blueprint that governs how master data entities interact and relate to one another. Entities within BOM represent tangible or conceptual business objects such as customers, suppliers, products, or contracts. Each entity is composed of attributes capturing essential information, and relationships that define the interactions between these entities. The careful design of BOM facilitates seamless data management, enabling organizations to maintain a single source of truth while supporting diverse operational needs.

TIBCO MDM’s modeling capabilities extend beyond mere representation. The platform allows for hierarchical structures, enabling entities to inherit characteristics and constraints from parent entities. This hierarchical approach reduces redundancy and enhances maintainability, providing a more elegant and scalable model. By employing inheritance and relationship rules effectively, organizations can ensure that the data model aligns with real-world complexities while remaining manageable and comprehensible.

Attributes and Their Role in Master Data

Attributes in TIBCO are more than simple data fields; they are the descriptors that define the unique characteristics of each entity. Attributes capture both intrinsic and derived information, ensuring that every aspect of an entity is represented accurately. Intrinsic attributes include essential identifiers such as customer ID, product code, or supplier number. Derived attributes, on the other hand, are calculated based on rules or transformations, allowing organizations to enrich their master data with meaningful insights.

A well-structured attribute framework enhances data quality and usability. Attributes are assigned data types, constraints, and validation rules to maintain consistency and prevent errors. For instance, a product price attribute might enforce numeric values within a specific range, while a customer email attribute would adhere to a defined format. By meticulously defining attributes and their properties, organizations can ensure that master data is precise, actionable, and aligned with operational standards.
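
The price and email examples can be expressed as attribute definitions that carry their own type, range, and format constraints. `AttributeDef` is a hypothetical Python class used for illustration, not part of TIBCO MDM.

```python
import re
from dataclasses import dataclass
from typing import Optional

@dataclass
class AttributeDef:
    name: str
    data_type: type
    min_value: Optional[float] = None
    max_value: Optional[float] = None
    pattern: Optional[str] = None  # regular expression the whole value must match

    def validate(self, value) -> list[str]:
        """Return a list of constraint violations (empty if the value is valid)."""
        if not isinstance(value, self.data_type):
            return [f"{self.name}: expected {self.data_type.__name__}"]
        errors = []
        if self.min_value is not None and value < self.min_value:
            errors.append(f"{self.name}: below {self.min_value}")
        if self.max_value is not None and value > self.max_value:
            errors.append(f"{self.name}: above {self.max_value}")
        if self.pattern is not None and not re.fullmatch(self.pattern, value):
            errors.append(f"{self.name}: does not match required format")
        return errors

price = AttributeDef("price", float, min_value=0.0, max_value=100000.0)
email = AttributeDef("email", str, pattern=r"[^@\s]+@[^@\s]+\.[^@\s]+")
```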

Furthermore, attributes play a pivotal role in supporting advanced features such as search optimization, reporting, and analytics. By tagging attributes with metadata and indexing them appropriately, TIBCO enables rapid retrieval of critical information. This not only accelerates decision-making but also enhances the overall responsiveness of enterprise applications dependent on master data.

Relationships and Hierarchies

In TIBCO MDM, relationships between entities form the connective tissue that binds master data into a coherent whole. Relationships define how entities interact, reflecting dependencies and associations that exist in real-world business processes. These relationships can be simple one-to-one mappings or more complex many-to-many associations, depending on the nature of the business scenario. The platform supports both standard and custom relationship types, giving organizations the flexibility to model nuanced interactions.

Hierarchies complement relationships by establishing structured layers of data, allowing entities to inherit properties from parent nodes and participate in organized groupings. For example, a product hierarchy might include categories, subcategories, and individual SKUs, enabling structured reporting and streamlined operations. Hierarchical relationships also facilitate efficient governance, as policies and rules can propagate from higher-level entities to dependent nodes, reducing administrative overhead and ensuring consistency.
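
Property propagation through a hierarchy can be sketched by walking from a node up to the root and letting nearer nodes override their ancestors. The `Node` class, category names, and properties below are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Node:
    name: str
    parent: Optional["Node"] = None
    properties: dict = field(default_factory=dict)

    def effective_properties(self) -> dict:
        """Merge properties from the root down, so nearer nodes override ancestors."""
        chain = []
        node = self
        while node is not None:
            chain.append(node)
            node = node.parent
        merged: dict = {}
        for n in reversed(chain):  # root first, this node last
            merged.update(n.properties)
        return merged

electronics = Node("Electronics", properties={"warranty_months": 12, "hazmat": False})
batteries = Node("Batteries", parent=electronics, properties={"hazmat": True})
sku = Node("SKU-1001", parent=batteries, properties={"warranty_months": 6})
```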

By mastering relationships and hierarchies, organizations can achieve a comprehensive view of their master data landscape. Properly modeled relationships enable seamless data integration, enhance analytical capabilities, and support advanced operational scenarios such as supply chain optimization, customer segmentation, and regulatory compliance.

Configuration of Validation Rules

Validation rules are a critical component of TIBCO configuration. They ensure that data adheres to predefined standards and policies before it is accepted into the system. This proactive approach minimizes errors, maintains data integrity, and reduces downstream operational risks. Validation rules can be simple, such as ensuring mandatory fields are populated, or complex, involving conditional logic and cross-entity dependencies.
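
The three kinds of rules mentioned (mandatory fields, conditional logic, and cross-entity dependencies) can each be written as a small function that returns an error message or `None`. Everything here, including the field names and the `known_suppliers` lookup, is a hypothetical Python sketch rather than TIBCO MDM's rule syntax.

```python
from typing import Callable, Optional

# A rule takes the record and a context of other master data, and returns
# an error message, or None when the record passes.
Rule = Callable[[dict, dict], Optional[str]]

def mandatory(field_name: str) -> Rule:
    return lambda rec, ctx: None if rec.get(field_name) else f"{field_name} is mandatory"

def hazmat_needs_un_number(rec: dict, ctx: dict) -> Optional[str]:
    # Conditional rule: only applies when the product is flagged as hazardous.
    if rec.get("hazmat") and not rec.get("un_number"):
        return "un_number is mandatory for hazardous products"
    return None

def supplier_must_exist(rec: dict, ctx: dict) -> Optional[str]:
    # Cross-entity rule: the referenced supplier must already exist in master data.
    supplier = rec.get("supplier_id")
    if supplier and supplier not in ctx["known_suppliers"]:
        return f"unknown supplier {supplier}"
    return None

def validate(rec: dict, rules: list[Rule], ctx: dict) -> list[str]:
    """Apply every rule and collect all error messages."""
    return [msg for rule in rules if (msg := rule(rec, ctx)) is not None]

rules = [mandatory("sku"), hazmat_needs_un_number, supplier_must_exist]
ctx = {"known_suppliers": {"S1", "S2"}}
```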

The platform provides a graphical interface for defining rules, making it accessible even to professionals without extensive programming knowledge. At the same time, scripting capabilities allow for more advanced scenarios, enabling organizations to implement sophisticated logic and calculations. By combining visual configuration with scripting, TIBCO ensures that validation is both powerful and adaptable to diverse business requirements.

Effective validation rules also play a role in supporting regulatory compliance. Many industries require stringent adherence to standards for data quality, reporting, and auditing. By embedding these requirements directly into validation rules, organizations can ensure compliance automatically, reducing manual oversight and enhancing operational efficiency.

Customizing Workflows for Master Data Management

Workflows in TIBCO orchestrate the lifecycle of master data, dictating how data is created, reviewed, approved, and retired. Customizing workflows allows organizations to align the system with internal processes, ensuring that data moves through the correct channels and adheres to governance policies. Workflows can be designed to accommodate approvals, notifications, exception handling, and automated actions, creating a streamlined and auditable process.

The platform’s workflow designer offers a visual representation of processes, enabling stakeholders to map out each step and decision point clearly. By modeling workflows accurately, organizations can reduce bottlenecks, enforce compliance, and improve the efficiency of master data operations. Additionally, workflows can be modified over time to reflect evolving business needs, providing agility and adaptability in an ever-changing environment.
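
The lifecycle a workflow enforces (created, reviewed, approved, retired) can be modeled as a state machine that only permits defined transitions. The state and action names below are assumptions for illustration; a real deployment would define its own.

```python
# Hypothetical lifecycle: state -> {action: next_state}
ALLOWED = {
    "DRAFT": {"submit": "PENDING_REVIEW"},
    "PENDING_REVIEW": {"approve": "APPROVED", "reject": "DRAFT"},
    "APPROVED": {"edit": "PENDING_REVIEW", "retire": "RETIRED"},
    "RETIRED": {},  # terminal state: no further actions
}

class InvalidTransition(Exception):
    pass

def transition(state: str, action: str) -> str:
    """Return the next state, or raise if the action is not allowed in this state."""
    try:
        return ALLOWED[state][action]
    except KeyError:
        raise InvalidTransition(f"cannot {action} a record in state {state}") from None
```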

Integrating workflows with validation rules further enhances data quality. For instance, a workflow can route a customer record for verification if certain attributes fail validation, ensuring that only accurate and complete data enters the system. This combination of workflows and rules creates a robust framework for maintaining master data integrity throughout its lifecycle.

User Interface Configuration and Usability

The usability of TIBCO heavily relies on effective user interface configuration. A well-designed interface enhances productivity, reduces errors, and encourages adoption among users. The platform allows administrators to configure forms, layouts, and navigation paths, tailoring the interface to the specific needs of different roles within the organization. For example, a data steward may require detailed attribute views and validation feedback, while a business analyst may prioritize reporting and search capabilities.

Dynamic interfaces can display relevant information based on the context of the user or the stage of the workflow, providing a personalized and intuitive experience. By leveraging conditional visibility, contextual prompts, and interactive dashboards, organizations can ensure that users have access to the right data at the right time. This reduces confusion, accelerates data entry, and enhances overall operational efficiency.
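
Conditional visibility can be sketched as field definitions that list the roles and workflow stages in which each field appears. The screen layout, roles, and stage names are hypothetical.

```python
# Hypothetical screen definition. None means "no restriction".
FIELDS = [
    {"name": "name", "roles": None, "stages": None},
    {"name": "validation_errors", "roles": {"data_steward"}, "stages": None},
    {"name": "approval_comment", "roles": {"approver"}, "stages": {"PENDING_REVIEW"}},
    {"name": "sales_summary", "roles": {"analyst"}, "stages": {"APPROVED"}},
]

def visible_fields(role: str, stage: str) -> list[str]:
    """Fields shown to a user with this role at this workflow stage."""
    return [
        f["name"] for f in FIELDS
        if (f["roles"] is None or role in f["roles"])
        and (f["stages"] is None or stage in f["stages"])
    ]
```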

Moreover, interface customization supports the adoption of best practices in data governance. By guiding users to follow defined processes and validation rules, the system reinforces organizational policies, reduces the likelihood of errors, and ensures consistent application of standards across the enterprise.

Integrating TIBCO with Enterprise Ecosystems

Integration is a cornerstone of TIBCO MDM’s configuration capabilities. Organizations rarely operate in isolation, and master data often needs to flow seamlessly across multiple systems. TIBCO provides a rich set of connectors, adapters, and APIs to facilitate integration with ERP systems, CRM platforms, data warehouses, and other enterprise applications. This ensures that all systems reflect consistent and accurate master data, enhancing operational efficiency and reducing duplication.

The integration process involves mapping data structures, transforming data formats, and synchronizing updates in near real-time. By establishing automated integration pipelines, organizations can maintain a single source of truth while supporting diverse business processes. Integration also enables advanced analytics, reporting, and decision-making, as data from multiple sources can be consolidated and harmonized within the MDM platform.
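
Mapping and transforming source data can be sketched as a table from source field names to target attributes, each paired with a transformation. The source field names (`CUST_NO`, `CTRY`, and so on) and the day.month.year date format are invented for illustration.

```python
from datetime import datetime

# Hypothetical mapping: source field -> (target attribute, transformation)
FIELD_MAP = {
    "CUST_NO": ("customer_id", str.strip),
    "CUST_NAME": ("name", lambda v: " ".join(v.split()).title()),
    "CREATED": ("created_on", lambda v: datetime.strptime(v, "%d.%m.%Y").date().isoformat()),
    "CTRY": ("country", str.upper),
}

def to_master(source_row: dict) -> dict:
    """Map and transform one source row; unmapped source fields are dropped."""
    return {target: transform(source_row[src])
            for src, (target, transform) in FIELD_MAP.items()
            if src in source_row}
```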

Configuring integration effectively requires a clear understanding of both source and target systems, data quality requirements, and performance considerations. By meticulously planning integration workflows and validation checkpoints, organizations can achieve reliable and scalable data synchronization that supports strategic objectives.

Implementing Data Governance Policies

Data governance is a fundamental aspect of TIBCO configuration. Governance policies define how data is created, maintained, and retired, ensuring consistency, quality, and compliance. These policies encompass roles and responsibilities, access controls, approval workflows, and auditing mechanisms, creating a structured framework for managing master data.

By embedding governance directly into the MDM platform, organizations can enforce policies automatically, reducing reliance on manual oversight. Data stewards can monitor compliance, identify anomalies, and resolve issues proactively, maintaining trust in the integrity of master data. Governance policies also support regulatory requirements, providing an auditable trail of data actions and decisions that can be referenced during audits or inspections.

TIBCO supports flexible governance models, allowing organizations to define policies at multiple levels, including entity, attribute, and workflow levels. This granularity enables targeted governance that aligns with organizational priorities while minimizing administrative overhead. When combined with validation rules, workflows, and user interface configuration, governance policies form a comprehensive framework for maintaining high-quality master data.

In contemporary enterprise environments, data has emerged as one of the most valuable assets. The ability to manage, protect, and utilize this data effectively can define the success trajectory of an organization. TIBCO has been meticulously designed to cater to these needs, providing a comprehensive suite of tools to manage master data with precision. Among the myriad functionalities it offers, user management and security stand as pivotal components, ensuring that access is not only controlled but also that data integrity is maintained across all operational layers.

User management within TIBCO is far from a trivial administrative task. It involves the orchestration of roles, permissions, authentication methods, and monitoring mechanisms, all harmonized to create an ecosystem where data is protected yet accessible to authorized personnel. Security is interwoven into the platform's architecture, reflecting a philosophy that robust data governance begins with disciplined user management. Organizations that implement these mechanisms proficiently can mitigate risks associated with unauthorized access, accidental data corruption, and regulatory non-compliance.

The platform’s security architecture rests on several pillars, including role-based access control, sophisticated authentication protocols, audit logging, and encryption. Each pillar contributes to an ecosystem where data security is enforced without impeding the productivity of end-users. For IT professionals and administrators, mastering these features is indispensable, particularly for those preparing for certifications like TB0-124, where nuanced knowledge of MDM security protocols is essential.

Role-Based Access Control and Its Significance

Role-based access control, commonly referred to as RBAC, is the cornerstone of user management in TIBCO MDM. At its core, RBAC regulates access to system resources based on the roles assigned to individual users. Each role encapsulates a set of permissions that determine what actions the user can perform, which data sets they can view, modify, or delete, and which administrative functions they can use.

The significance of RBAC lies in its ability to enforce the principle of least privilege. By granting users only the permissions necessary for their roles, organizations reduce the attack surface for potential security breaches. For instance, a data steward responsible for verifying and cleansing master data may have permissions to update records but not to alter workflow configurations or modify user roles. This compartmentalization minimizes the possibility of accidental or malicious data manipulation.

RBAC in TIBCO is highly granular, allowing administrators to define roles at multiple levels of the system. From operational access to functional privileges and administrative oversight, the flexibility of RBAC ensures that security policies can be precisely aligned with organizational structures. Additionally, role inheritance and hierarchical role definitions enable complex enterprises to maintain consistency and scalability in their access management strategy.
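To make role inheritance and least privilege concrete, here is a minimal, tool-agnostic sketch in Python. It is not TIBCO MDM's API or configuration model: the role names and permission strings (`record:update`, `workflow:configure`, and so on) are hypothetical, and the sketch assumes the role hierarchy has no cycles.

```python
from dataclasses import dataclass, field


@dataclass
class Role:
    """A named role with its own permissions and optional parent roles it inherits from."""
    name: str
    permissions: set = field(default_factory=set)
    parents: list = field(default_factory=list)

    def effective_permissions(self) -> set:
        # Own permissions plus everything inherited from parent roles (assumes no cycles).
        perms = set(self.permissions)
        for parent in self.parents:
            perms |= parent.effective_permissions()
        return perms


def is_allowed(user_roles, action: str) -> bool:
    # Deny by default: the action is allowed only if some assigned role grants it.
    return any(action in role.effective_permissions() for role in user_roles)


# Hypothetical role hierarchy: viewer -> data_steward -> mdm_admin
viewer = Role("viewer", {"record:read"})
data_steward = Role("data_steward", {"record:update"}, parents=[viewer])
mdm_admin = Role("mdm_admin", {"workflow:configure", "role:modify"}, parents=[data_steward])

print(is_allowed([data_steward], "record:update"))
print(is_allowed([data_steward], "workflow:configure"))
```

The steward inherits read access from `viewer` and can update records, but holds no workflow or role permissions, which mirrors the data-steward example in the previous paragraph.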

Proper implementation of RBAC also facilitates regulatory compliance. Many industry regulations, such as GDPR, HIPAA, or SOX, mandate that access to sensitive data be restricted and well-documented. By leveraging RBAC, organizations can demonstrate that data access policies are systematically enforced, providing auditors with clear evidence of adherence to compliance standards.

Authentication Mechanisms in TIBCO

Authentication serves as the first line of defense against unauthorized access. In TIBCO MDM, authentication mechanisms are designed to balance security with user convenience. The platform supports traditional username-password authentication, single sign-on (SSO), and integration with enterprise identity management solutions such as LDAP or Active Directory.

Single sign-on provides a seamless experience for users who access multiple applications across the enterprise. By authenticating once, a user can move between different systems without repeatedly entering credentials, reducing friction without lowering security standards. SSO in TIBCO is compatible with industry-standard protocols like SAML and OAuth, enabling secure interoperability with external identity providers.

For environments requiring additional security layers, multi-factor authentication (MFA) can be integrated. MFA requires users to provide two or more independent verification factors: something they know (a password), something they have (a token or phone), or something they are (a biometric). Because a stolen or guessed password alone is no longer enough to sign in, this added layer greatly reduces the risk posed by compromised credentials and strengthens the overall security posture.

TIBCO also provides session management controls, including configurable session timeouts and account lockouts. Timeouts end sessions that have been idle too long, and lockouts temporarily block an account after repeated failed login attempts; together they limit unauthorized prolonged access and reduce the impact of potential intrusions. Collectively, these authentication strategies ensure that the identity of every user interacting with the system is verifiable and trustworthy.
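The sketch below illustrates the logic behind idle timeouts and account lockouts in plain Python. It is an illustration of the general technique, not how TIBCO MDM implements or configures it; the threshold and duration constants are hypothetical values, and the current time is passed in explicitly so the behavior is easy to test.

```python
from dataclasses import dataclass

# Hypothetical policy values; real limits come from the platform's own configuration.
MAX_FAILED_ATTEMPTS = 5
LOCKOUT_SECONDS = 15 * 60
IDLE_TIMEOUT_SECONDS = 30 * 60


@dataclass
class Account:
    failed_attempts: int = 0
    locked_until: float = 0.0  # epoch seconds; 0 means not locked


def record_login(account: Account, success: bool, now: float) -> str:
    """Apply one login attempt at time `now` and return 'ok', 'failed', or 'locked'."""
    if now < account.locked_until:
        return "locked"  # still inside the lockout window; the attempt is not evaluated
    if success:
        account.failed_attempts = 0
        return "ok"
    account.failed_attempts += 1
    if account.failed_attempts >= MAX_FAILED_ATTEMPTS:
        account.locked_until = now + LOCKOUT_SECONDS
        account.failed_attempts = 0
        return "locked"
    return "failed"


def session_expired(last_activity: float, now: float) -> bool:
    # An idle session expires once inactivity exceeds the configured timeout.
    return now - last_activity > IDLE_TIMEOUT_SECONDS
```

Note that during the lockout window even a correct password is rejected; that is what makes brute-force guessing impractical.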

Audit Logging and Activity Monitoring

Audit logging is an indispensable component of user management and security. TIBCO maintains comprehensive logs of all user activities, capturing details such as login attempts, record modifications, administrative changes, and workflow interactions. These logs create a transparent history of interactions with the system, serving both operational and compliance needs.

Activity monitoring allows administrators to detect anomalous behaviors or unauthorized access attempts proactively. By analyzing audit logs, organizations can identify patterns such as repeated failed login attempts, unusual data modifications, or access outside normal operational hours. Such insights enable timely interventions, minimizing the risk of data breaches or policy violations.
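As an illustration of this kind of analysis, the following sketch scans audit records for two of the patterns mentioned above: repeated failed logins and record modifications outside working hours. It assumes audit entries have already been exported as `(timestamp, user, event_type)` tuples; that record shape, the event names, the threshold, and the working-hours window are all hypothetical, and the failure count covers the whole list rather than a sliding time window.

```python
from collections import Counter
from datetime import datetime

FAILED_LOGIN_THRESHOLD = 3      # hypothetical alert threshold
BUSINESS_HOURS = range(7, 20)   # assumed working window: 07:00 to 19:59


def find_anomalies(events):
    """events: list of (timestamp, user, event_type) tuples from an exported audit log."""
    alerts = []
    failures = Counter(user for _, user, kind in events if kind == "login_failed")
    for user, count in sorted(failures.items()):
        if count >= FAILED_LOGIN_THRESHOLD:
            alerts.append(f"repeated failed logins: {user} ({count})")
    for ts, user, kind in events:
        if kind == "record_modified" and ts.hour not in BUSINESS_HOURS:
            alerts.append(f"off-hours modification: {user} at {ts.isoformat()}")
    return alerts


audit_log = [
    (datetime(2024, 1, 8, 9, 0), "alice", "login_failed"),
    (datetime(2024, 1, 8, 9, 1), "alice", "login_failed"),
    (datetime(2024, 1, 8, 9, 2), "alice", "login_failed"),
    (datetime(2024, 1, 8, 10, 15), "carol", "record_modified"),
    (datetime(2024, 1, 8, 23, 30), "bob", "record_modified"),
]
for alert in find_anomalies(audit_log):
    print(alert)
```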

Moreover, audit logs provide a forensic trail that is crucial in regulated industries. They enable organizations to demonstrate accountability and traceability, showing exactly who accessed what data, when, and what actions were performed. This capability not only satisfies regulatory requirements but also instills confidence in stakeholders that master data is managed responsibly and securely.

For users preparing for the TB0-124 certification, understanding the intricacies of audit logging and activity monitoring is essential. The ability to configure, interpret, and act upon audit data is a core competency that reflects both technical proficiency and adherence to governance principles.

Data Encryption and Protection

Protecting sensitive information extends beyond controlling access. TIBCO employs advanced encryption mechanisms to safeguard data both at rest and in transit. Data at rest, which resides in databases or file storage, is encrypted using industry-standard algorithms to prevent unauthorized access in case of physical or logical breaches.

Encryption in transit ensures that data exchanged between clients, servers, and external systems remains secure from interception or tampering. Secure communication protocols such as HTTPS and TLS are integral to TIBCO MDM, providing confidentiality and integrity during data transmission. This is particularly vital in distributed environments where master data flows across multiple nodes and network segments.

In addition to encryption, TIBCO supports data masking and tokenization for scenarios where sensitive information must be shared with external parties or across departments. By masking personally identifiable information or sensitive attributes, organizations can maintain operational transparency without exposing critical data. Tokenization further enhances security by replacing sensitive data with surrogate values, ensuring that the original information cannot be reconstructed without proper authorization.
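The difference between masking and tokenization can be shown in a few lines of Python. This is a conceptual sketch, not TIBCO MDM's masking feature: `TokenVault` is a hypothetical in-memory stand-in for what would, in practice, be a separately secured token store, and the `authorized` flag stands in for a real authorization check. Masking is one-way; tokenization is reversible, but only through the vault.

```python
import secrets


def mask(value: str, visible: int = 4, mask_char: str = "*") -> str:
    # Irreversible: only the trailing characters remain readable.
    if len(value) <= visible:
        return mask_char * len(value)
    return mask_char * (len(value) - visible) + value[-visible:]


class TokenVault:
    """Hypothetical in-memory stand-in for a secured token vault."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the token for a repeated value so records can still be joined on it.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_hex(8)  # random surrogate, not derived from the value
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str, authorized: bool) -> str:
        if not authorized:
            raise PermissionError("detokenization requires authorization")
        return self._token_to_value[token]


vault = TokenVault()
print(mask("4111111111111111"))
print(vault.tokenize("123-45-6789"))
```

Because the token is random rather than computed from the original value, it cannot be reversed mathematically; recovering the original requires access to the vault.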

Understanding the principles of encryption and data protection is critical for administrators and IT professionals. Proper implementation reduces vulnerabilities, protects organizational reputation, and ensures compliance with privacy regulations.

Integration of Security with Business Processes

User management and security in TIBCO are not isolated features; they are deeply integrated with business processes. Workflows, data validation rules, and approval chains are designed to incorporate security considerations at every step. For example, data changes may require multiple approvals based on the sensitivity of the information or the role of the individual initiating the change.

This integration ensures that security policies are consistently enforced, minimizing the likelihood of human error or policy circumvention. By embedding security within business logic, TIBCO enables organizations to maintain operational efficiency without compromising on control. Moreover, this approach supports audit readiness, as every action can be traced and verified within the context of business processes.

Security integration also facilitates dynamic role adjustments and adaptive access controls. As organizational structures evolve, administrators can modify permissions and workflows without disrupting day-to-day operations. This agility is crucial for enterprises that operate in fast-changing regulatory environments or engage in frequent mergers and acquisitions.

Best Practices for User Management and Security

Achieving optimal security in TIBCO requires more than understanding individual features; it demands adherence to best practices that combine technical expertise with governance discipline. Administrators are encouraged to periodically review role definitions, update authentication protocols, monitor audit logs consistently, and apply encryption standards rigorously.

Periodic security audits and vulnerability assessments help identify gaps before they can be exploited. Establishing clear policies for password complexity, session management, and account lifecycle ensures that user credentials remain secure throughout their tenure. Additionally, educating users about security responsibilities reinforces the human element, which is often the weakest link in enterprise security.

Cultivating a culture of proactive security, rather than relying on reactive measures, enhances resilience against internal and external threats. It also positions the organization to respond effectively to audits, regulatory inquiries, or operational incidents, reflecting a mature approach to master data management.

Integration and Data Synchronization in TIBCO

Integration and data synchronization form the backbone of enterprise data management. In TIBCO MDM, these processes are designed to ensure that master data remains consistent, accurate, and reliable across diverse systems. Organizations often operate multiple applications, databases, and platforms that handle overlapping data. Without robust integration, discrepancies may arise, causing operational inefficiencies and decision-making errors. TIBCO addresses these challenges through a comprehensive framework that facilitates seamless data exchange, synchronization, and governance.

The essence of integration lies in connecting TIBCO MDM with other enterprise systems. These systems could include ERP platforms, CRM solutions, supply chain applications, or legacy databases. The ability to integrate effectively ensures that any change in master data—such as a new customer record, updated supplier information, or modified product detail—is accurately reflected in all dependent systems. This harmonization prevents data silos and allows organizations to maintain a single, reliable source of truth.

Data synchronization is closely intertwined with integration. While integration establishes the connection, synchronization guarantees that the data flowing between systems remains current and consistent. TIBCO provides mechanisms for both real-time and batch synchronization, allowing organizations to choose the best approach based on operational requirements. Real-time synchronization is crucial in scenarios where immediate updates are necessary, such as financial transactions or inventory adjustments. Batch synchronization, on the other hand, is suitable for periodic updates where instant consistency is less critical but efficiency is paramount.

Connectivity Methods and Approaches

TIBCO supports multiple connectivity methods to integrate with external systems, including web services, messaging systems, and batch processing. Web services, exposed as SOAP or REST-style interfaces, allow external applications to call MDM services over HTTP. This approach provides a flexible, standardized method for exchanging data, making it compatible with a wide range of applications.

Messaging systems accessed through JMS (Java Message Service) enable asynchronous communication between systems. This method is particularly effective in high-volume environments, where processing every transaction synchronously may be impractical. Messaging ensures that updates are reliably queued and delivered, even during periods of high system load or temporary network disruptions.

Batch processing allows large volumes of data to be synchronized at scheduled intervals. This method is often used for legacy systems or when real-time integration is unnecessary. Batch jobs can be configured to extract, transform, and load data between MDM and other systems, ensuring that large datasets remain consistent without overwhelming operational resources.

The flexibility in connectivity methods allows organizations to design integration strategies that align with their business needs, system capabilities, and resource constraints. Understanding the strengths and limitations of each method is essential for architects and developers implementing TIBCO MDM solutions.

Adapters and Connectors

One of the hallmarks of TIBCO is its extensive use of adapters and connectors. These components act as bridges between the MDM platform and external applications, simplifying the integration process. Adapters handle protocol translation, message formatting, and other communication intricacies, allowing developers to focus on business logic rather than low-level connectivity issues.

For instance, an ERP adapter enables TIBCO MDM to communicate with systems like SAP or Oracle E-Business Suite, while a CRM adapter connects MDM to platforms such as Salesforce or Microsoft Dynamics. Database connectors facilitate direct interaction with relational databases, supporting operations like inserts, updates, and deletes. By leveraging these pre-built adapters, organizations can accelerate integration projects, reduce errors, and improve reliability.

Adapters also support complex operations such as incremental data updates, error handling, and retry mechanisms. Incremental updates ensure that only modified records are transmitted, optimizing bandwidth and reducing processing time. Error handling mechanisms capture integration failures, log them for review, and initiate automated retries where applicable. This level of sophistication enhances operational resilience and reduces manual intervention, contributing to smoother enterprise data operations.
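Incremental selection and retry with exponential backoff are generic patterns, so they can be sketched independently of any particular adapter. In the Python sketch below, the `modified_at` field, the `send` callable, and the retry parameters are hypothetical; `send` stands in for whatever actually delivers a payload to the target system, and `sleep` and `log` are injectable so the behavior can be tested without waiting.

```python
import time


def changed_since(records, last_sync):
    # Incremental update: select only records modified after the last successful sync.
    return [r for r in records if r["modified_at"] > last_sync]


def send_with_retry(send, payload, max_attempts=4, base_delay=0.5,
                    sleep=time.sleep, log=print):
    """Call send(payload), retrying ConnectionError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload)
        except ConnectionError as exc:
            log(f"attempt {attempt}/{max_attempts} failed: {exc}")  # captured for review
            if attempt == max_attempts:
                raise  # give up and surface the failure for manual handling
            sleep(base_delay * 2 ** (attempt - 1))  # waits 0.5s, 1s, 2s, ...
```

Doubling the delay between attempts gives a briefly unavailable target system time to recover instead of flooding it with immediate retries.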

Data Transformation and Mapping

When integrating disparate systems, differences in data formats, structures, and semantics are common. Data transformation is critical to bridge these differences. TIBCO includes robust tools for mapping and transforming data, allowing organizations to convert information accurately between systems.

Data mapping involves defining correspondences between source and target data elements. For example, a customer’s “first name” in an ERP system may need to be mapped to “given name” in MDM. Transformation extends this by modifying the data itself, such as formatting dates, normalizing text, or concatenating multiple fields. Effective mapping and transformation ensure that data retains its meaning, integrity, and context across all systems.

TIBCO MDM supports complex transformation logic, including conditional rules, concatenation, splitting, and data enrichment. Data enrichment allows the augmentation of records with additional attributes, enhancing the quality and usability of the data. For example, adding geographic information or classification codes to a product record can improve analytics and reporting capabilities.
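The following Python sketch walks one source record through the steps described above: field mapping, normalization, date reformatting, concatenation, and a conditional enrichment rule. It is a conceptual illustration only; in TIBCO MDM these rules are defined through the platform's own tooling, and the field names (`FIRST_NAME`, `given_name`), the `DD.MM.YYYY` source date format, and the region lookup table are all assumptions.

```python
from datetime import datetime

# Hypothetical source-to-target field mapping (ERP column -> MDM attribute).
FIELD_MAP = {"FIRST_NAME": "given_name", "LAST_NAME": "family_name", "CREATED": "created_date"}
# Hypothetical enrichment lookup used by a conditional rule.
REGION_BY_COUNTRY = {"DE": "EMEA", "FR": "EMEA", "US": "AMER", "JP": "APAC"}


def transform(erp_record: dict) -> dict:
    # 1. Mapping: rename source fields to their target attributes.
    mdm = {target: erp_record[src] for src, target in FIELD_MAP.items() if src in erp_record}
    # 2. Normalization: trim whitespace and apply consistent capitalization.
    for key in ("given_name", "family_name"):
        if key in mdm:
            mdm[key] = mdm[key].strip().title()
    # 3. Format conversion: DD.MM.YYYY -> ISO 8601 (YYYY-MM-DD).
    if "created_date" in mdm:
        mdm["created_date"] = datetime.strptime(mdm["created_date"], "%d.%m.%Y").date().isoformat()
    # 4. Concatenation: derive a display name from two fields.
    mdm["full_name"] = " ".join(filter(None, [mdm.get("given_name"), mdm.get("family_name")]))
    # 5. Conditional rule + enrichment: derive a sales region from the country code.
    country = erp_record.get("COUNTRY", "").strip().upper()
    mdm["region"] = REGION_BY_COUNTRY.get(country, "UNKNOWN")
    return mdm


print(transform({"FIRST_NAME": "  ada ", "LAST_NAME": "LOVELACE",
                 "CREATED": "10.12.2023", "COUNTRY": "de"}))
```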

Change Detection and Conflict Resolution

Maintaining consistent master data requires more than just integration and transformation. TIBCO incorporates sophisticated mechanisms for change detection and conflict resolution. Change detection identifies modifications in source systems, triggering synchronization processes to propagate updates. This ensures that all connected systems reflect the most recent information.

Conflicts arise when different systems contain divergent updates to the same record. For example, two departments may independently update a customer’s address in separate systems. TIBCO MDM provides conflict resolution strategies to reconcile these differences. Rules can be defined to prioritize one system over another, merge values intelligently, or flag conflicts for manual review. These capabilities safeguard data integrity while minimizing operational disruptions.
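Here is a minimal sketch of the three strategies just described, applied to one attribute with divergent values: source priority, a most-recent-wins merge rule, and flagging for manual review. The source names, their trust ranking, and the strategy labels are hypothetical and do not correspond to TIBCO MDM configuration options.

```python
from datetime import datetime

# Hypothetical trust ranking: lower number = more authoritative source.
SOURCE_PRIORITY = {"ERP": 1, "CRM": 2, "WEB": 3}


def resolve(candidates, strategy):
    """candidates: list of (source, value, modified_at) tuples for one attribute."""
    distinct = {value for _, value, _ in candidates}
    if len(distinct) == 1:
        return ("resolved", distinct.pop())  # all sources agree, so there is no conflict
    if strategy == "source_priority":
        _, value, _ = min(candidates, key=lambda c: SOURCE_PRIORITY.get(c[0], 99))
        return ("resolved", value)
    if strategy == "most_recent":
        _, value, _ = max(candidates, key=lambda c: c[2])
        return ("resolved", value)
    # Any other strategy: leave the decision to a data steward.
    return ("manual_review", sorted(distinct))


address = [
    ("CRM", "12 Oak St", datetime(2024, 5, 2)),
    ("ERP", "12 Oak Street", datetime(2024, 5, 1)),
]
print(resolve(address, "source_priority"))
print(resolve(address, "most_recent"))
```

The two automatic strategies can disagree, as they do here, which is why rule choice is a governance decision rather than a purely technical one.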

Automated change detection and conflict resolution are particularly important in large enterprises where data is highly dynamic. By reducing manual reconciliation efforts, organizations can focus on strategic initiatives while ensuring operational data remains trustworthy.

Event-Driven Synchronization

TIBCO also supports event-driven synchronization, a modern approach that enhances efficiency and responsiveness. In this model, changes in master data trigger events that propagate updates to subscribed systems. Event-driven synchronization is highly suitable for real-time applications, where immediate awareness of data changes is crucial.

For example, when a new product is added to MDM, an event can trigger updates in e-commerce platforms, inventory systems, and marketing tools simultaneously. This approach minimizes latency, reduces the risk of inconsistent information, and improves overall responsiveness of business operations. Event-driven architectures complement traditional batch and messaging methods, providing a versatile framework for comprehensive data integration.
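The publish/subscribe pattern behind event-driven synchronization can be sketched with an in-process event bus. This is illustrative only: a real deployment would use a message broker with durable, asynchronous delivery, whereas this hypothetical `EventBus` calls subscribers synchronously. The event name `product.created` and the three subscribers are assumptions that mirror the product example above.

```python
from collections import defaultdict


class EventBus:
    """In-process stand-in for a message broker topic (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Deliver the event to every subscriber and report how many received it.
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


received = []
bus = EventBus()
bus.subscribe("product.created", lambda e: received.append(("ecommerce", e["sku"])))
bus.subscribe("product.created", lambda e: received.append(("inventory", e["sku"])))
bus.subscribe("product.created", lambda e: received.append(("marketing", e["sku"])))

delivered = bus.publish("product.created", {"sku": "A-100", "name": "Cordless drill"})
```

The publisher does not know which systems are listening, so adding a new downstream consumer requires only a new subscription, not a change to the MDM side.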

Best Practices for Integration and Synchronization

Successful integration and synchronization require careful planning, design, and execution. TIBCO users should adhere to best practices to maximize efficiency and reliability. Clear documentation of data flows, system interfaces, and transformation rules is essential. This documentation serves as a blueprint for development, troubleshooting, and future enhancements.

Testing is another critical aspect. Integration tests should cover various scenarios, including high-volume data loads, network failures, and error handling. Simulating conflicts and verifying resolution rules ensures that synchronization processes behave as intended. Regular monitoring and auditing of data flows help detect anomalies early and maintain system health.

Security considerations are equally important. Data in transit and at rest should be encrypted, and access controls must be enforced to prevent unauthorized operations. TIBCO provides robust security features, including role-based access and audit logging, to protect sensitive enterprise information during integration processes.

Finally, collaboration between business and technical teams is vital. Understanding business requirements, data semantics, and operational workflows ensures that integration solutions align with organizational objectives. Continuous improvement and adaptation to changing requirements further enhance the value of TIBCO in maintaining consistent, high-quality master data.

Establishing a Robust Data Governance Framework

Implementing TIBCO effectively begins with a robust data governance framework. This framework forms the foundation for managing critical business data, ensuring accuracy, consistency, and traceability across the organization. A successful governance strategy involves clearly defining policies for data creation, modification, archiving, and retirement. This ensures that master data does not degrade over time and remains a reliable source for business decisions.

Data stewardship is an integral component of governance. Assigning accountability for specific datasets allows for continuous monitoring and validation. By having designated stewards, organizations can enforce rules for data quality, consistency, and compliance. Moreover, integrating automated validation and approval workflows within TIBCO further strengthens the governance model, reducing human errors and accelerating data processing.

Organizations should also focus on establishing data standards. These standards encompass naming conventions, data formats, and classification rules. When master data adheres to consistent standards, integration with downstream systems becomes seamless. In turn, reporting and analytics become more accurate, enabling strategic insights that are grounded in reliable data.

Performance Tuning and Optimization

Optimal performance of TIBCO is crucial to handle large volumes of enterprise data efficiently. Performance tuning begins with understanding system architecture and identifying potential bottlenecks. The platform’s core components, including the repository, data services, and process engines, need continuous monitoring to ensure responsiveness.

Indexing is a fundamental optimization technique that enhances query performance. By strategically creating indexes on frequently accessed data attributes, retrieval operations become faster and more efficient. Caching mechanisms also play a pivotal role in performance. Temporary storage of frequently requested data reduces redundant processing and accelerates response times.
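Both ideas can be illustrated in miniature. In the Python sketch below, a hash index replaces a full scan for lookups by attribute, and `functools.lru_cache` memoizes a repeated request. The product records are hypothetical, and this shows the general technique rather than how TIBCO MDM or its underlying database builds indexes or caches.

```python
from collections import defaultdict
from functools import lru_cache

# Hypothetical product records standing in for repository rows.
RECORDS = [
    {"sku": "A-100", "category": "tools"},
    {"sku": "B-200", "category": "garden"},
    {"sku": "C-300", "category": "tools"},
]


def build_index(records, attribute):
    # Hash index: attribute value -> matching records, built once up front.
    index = defaultdict(list)
    for record in records:
        index[record[attribute]].append(record)
    return index


CATEGORY_INDEX = build_index(RECORDS, "category")
SKU_INDEX = build_index(RECORDS, "sku")


def find_by_category_scan(category):
    return [r for r in RECORDS if r["category"] == category]  # O(n) full scan


def find_by_category_indexed(category):
    return CATEGORY_INDEX.get(category, [])  # O(1) average-case lookup


@lru_cache(maxsize=1024)
def product_summary(sku):
    # Cached: repeated requests for the same SKU skip recomputation.
    record = SKU_INDEX[sku][0]
    return f"{record['sku']} ({record['category']})"
```

The trade-off is staleness: when an underlying record changes, the cache must be invalidated (here with `product_summary.cache_clear()`), which is why caching suits data that is read often and changed rarely.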

Query optimization is another vital aspect. Writing efficient queries and leveraging the platform’s query planner ensures that system resources are used effectively. Batch processing of large data sets, as opposed to processing them in real-time, can prevent performance degradation during peak loads. Regularly reviewing performance metrics and applying adjustments proactively helps maintain a consistently high-performing MDM environment.

Backup and Recovery Strategies

Data protection is paramount in any enterprise data management solution. TIBCO requires well-defined backup and recovery strategies to safeguard critical master data. A comprehensive approach involves regular automated backups, scheduled during off-peak hours to minimize system impact.

Recovery procedures should be tested frequently to validate their effectiveness. Simulating failure scenarios and restoring data ensures that the recovery process is reliable and efficient. Storing backups in multiple locations, including offsite or cloud repositories, provides additional protection against unforeseen events such as hardware failures or cyberattacks.

Versioning of data is another key practice. Maintaining historical copies of master data allows organizations to revert to previous states if inconsistencies or errors occur. Combining version control with backup and recovery processes ensures continuity of operations and preserves data integrity, even in complex enterprise environments.
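A minimal sketch of record versioning with revert follows. The `VersionedRecord` class is hypothetical and only illustrates the idea; one deliberate design choice is that reverting appends a copy of the earlier state as a new version instead of deleting later versions, so the full history stays available for audit.

```python
import copy


class VersionedRecord:
    """Keeps every state of a record; versions are numbered from 1."""

    def __init__(self, data: dict):
        self._versions = [copy.deepcopy(data)]

    @property
    def current(self) -> dict:
        return copy.deepcopy(self._versions[-1])  # copy so callers cannot alter history

    @property
    def version(self) -> int:
        return len(self._versions)

    def update(self, **changes) -> int:
        new_state = copy.deepcopy(self._versions[-1])
        new_state.update(changes)
        self._versions.append(new_state)
        return self.version

    def revert_to(self, version: int) -> int:
        # Reverting appends a copy of the old state, so history itself is never rewritten.
        if not 1 <= version <= len(self._versions):
            raise ValueError(f"no such version: {version}")
        self._versions.append(copy.deepcopy(self._versions[version - 1]))
        return self.version


supplier = VersionedRecord({"name": "Acme", "city": "Boston"})
supplier.update(city="Bostn")  # an erroneous edit
supplier.revert_to(1)          # restore the earlier, correct state
print(supplier.version, supplier.current)
```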

Troubleshooting Techniques and Problem Resolution

Troubleshooting in TIBCO requires a structured methodology. Professionals must be adept at identifying, diagnosing, and resolving issues quickly to maintain system stability. The platform offers comprehensive logging tools that capture detailed operational data, which can be leveraged for root cause analysis.

Common issues include data conflicts, synchronization errors, and performance bottlenecks. Data conflicts often arise from concurrent modifications or inconsistent data across sources. Resolving these conflicts requires an understanding of the data model and business rules, as well as the ability to reconcile discrepancies effectively.

Synchronization issues may occur when integrating with external systems or data sources. Ensuring proper mapping, validating transformation rules, and monitoring data flows can prevent such issues. Performance bottlenecks often manifest during high transaction volumes and require careful analysis of system metrics, queries, and resource allocation to resolve efficiently.

A methodical troubleshooting approach involves isolating the problem, reviewing relevant logs, testing potential solutions, and documenting the resolution. Consistently following this process reduces downtime and prevents recurrence of similar issues, ensuring the reliability of the MDM system.

Documentation and Knowledge Management

Maintaining thorough documentation is essential for both best practices and troubleshooting. Comprehensive records of system configurations, workflows, customizations, and integrations serve as a reference for administrators and developers. Clear documentation facilitates onboarding of new personnel, reduces dependency on specific individuals, and ensures continuity of knowledge.

Workflow diagrams, data models, and process descriptions help visualize complex operations within TIBCO MDM. These visual aids complement written documentation and simplify understanding of system processes. Additionally, documenting common issues and their resolutions builds a knowledge repository that speeds up future problem-solving.

Knowledge management also includes capturing lessons learned from previous incidents. By analyzing past challenges and successes, organizations can refine processes, improve system performance, and enhance governance practices. A strong culture of documentation and knowledge sharing ensures long-term sustainability and operational excellence.

Integration Best Practices

TIBCO often operates within a larger enterprise ecosystem, necessitating seamless integration with other systems. Following integration best practices ensures smooth data flows, reduces errors, and maintains consistency across platforms. Designing clear data exchange protocols, using standardized formats, and implementing robust validation mechanisms are fundamental practices.

API-based integrations offer flexibility and scalability, enabling real-time or near-real-time data synchronization. Message queuing and event-driven architectures further enhance reliability by decoupling systems and allowing asynchronous processing. Data transformation and mapping should be handled consistently, ensuring that master data retains its integrity when moving between systems.

Regular monitoring of integrations is crucial to detect anomalies early. Automated alerts for failed transactions or inconsistent data can prevent downstream disruptions. By adhering to these best practices, organizations can maintain a cohesive and reliable data ecosystem that maximizes the value of TIBCO MDM.

Continuous Monitoring and System Health

Sustaining the effectiveness of TIBCO requires continuous monitoring of system health and operational metrics. Monitoring includes tracking system performance, data quality, transaction volumes, and error rates. By proactively observing these indicators, potential issues can be addressed before they escalate.

Dashboards and reporting tools help visualize system performance trends, making it easier for administrators to identify anomalies and take corrective actions. Automated alerts for unusual activity, such as spikes in processing time or data inconsistencies, support rapid response and minimize operational disruptions.

Periodic audits of system configurations and data integrity checks reinforce the overall health of the MDM environment. These practices not only maintain performance and reliability but also build confidence among stakeholders that master data is accurate, secure, and dependable for business operations.

Data Quality Management

Maintaining high data quality is a central pillar in the success of TIBCO implementations. Accurate, complete, and consistent master data enables organizations to make strategic decisions with confidence. Data quality management involves continuous validation, cleansing, and enrichment processes to prevent errors from propagating across systems.

Validation rules are critical for ensuring that incoming data meets predefined standards. These rules can range from simple checks, such as mandatory fields and correct formats, to complex validations that enforce business-specific logic. By embedding validation rules within the MDM platform, organizations can prevent invalid or incomplete data from entering the system.
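The sketch below expresses validation rules as plain Python predicates, ranging from a mandatory-field check and a format check to a cross-field business rule. It illustrates the concept only; TIBCO MDM defines validation rules through its own configuration, not Python, and the field names, the simplified email pattern, and the German VAT rule are hypothetical examples.

```python
import re

# Deliberately simple email pattern for illustration, not a full RFC 5322 check.
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")

# Each rule: (predicate over the whole record, message reported when it fails).
RULES = [
    (lambda r: bool(r.get("supplier_id")), "supplier_id is mandatory"),
    (lambda r: "email" not in r or EMAIL_RE.fullmatch(r["email"]) is not None,
     "email format is invalid"),
    # Cross-field business rule: German suppliers must carry a VAT ID.
    (lambda r: r.get("country") != "DE" or bool(r.get("vat_id")),
     "vat_id is required for German suppliers"),
]


def validate(record: dict) -> list:
    # Return every failed rule's message; an empty list means the record may be accepted.
    return [message for check, message in RULES if not check(record)]


print(validate({"email": "not-an-email", "country": "DE"}))
```

Collecting all failures at once, instead of stopping at the first, lets a data steward fix a rejected record in a single pass.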

Data cleansing is another essential activity. It involves detecting and correcting errors, inconsistencies, and duplicates in master data. Deduplication algorithms within TIBCO can automatically merge redundant records while preserving the integrity of essential information. Enrichment processes further enhance data by integrating additional contextual information, such as geographic details, demographic attributes, or supplier classifications.
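To show what deduplication with merging involves, here is a simplified sketch that groups records by a normalized match key and merges each group with a survivorship rule. It uses exact matching on normalized keys as a stand-in for the fuzzy, weighted matching that production matching engines typically use; the normalization steps, field names, and survivorship policy are assumptions, not TIBCO MDM's algorithm.

```python
import re
from collections import defaultdict


def match_key(record):
    # Normalize the name (case, punctuation, legal-form suffixes) and pair it with postal code.
    name = re.sub(r"[^a-z0-9 ]", "", record["name"].lower())
    name = re.sub(r"\b(inc|ltd|llc|corp)\b", "", name)
    return (" ".join(name.split()), record.get("postal_code", ""))


def merge(group):
    # Survivorship: newer values win, but an empty value never overwrites a populated one.
    merged = {}
    for record in sorted(group, key=lambda r: r["updated"]):
        for key, value in record.items():
            if value not in (None, ""):
                merged[key] = value
    merged["source_ids"] = [r["id"] for r in group]  # keep lineage to the original records
    return merged


def deduplicate(records):
    groups = defaultdict(list)
    for record in records:
        groups[match_key(record)].append(record)
    return [merge(group) for group in groups.values()]


customers = [
    {"id": 1, "name": "Acme, Inc.", "postal_code": "02110", "phone": "", "updated": 1},
    {"id": 2, "name": "ACME Inc", "postal_code": "02110", "phone": "555-0100", "updated": 2},
    {"id": 3, "name": "Globex", "postal_code": "10001", "phone": "555-0199", "updated": 1},
]
golden = deduplicate(customers)
```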

Monitoring data quality through metrics such as completeness, accuracy, timeliness, and consistency provides insight into the effectiveness of quality management initiatives. Organizations can proactively identify weak points and implement corrective measures, ensuring that master data remains a trustworthy asset.
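Several of these metrics reduce to simple ratios over a record set. The following sketch computes completeness, validity, and timeliness; it is a hypothetical illustration with assumed field names (`last_verified`) and thresholds. Rule-based validity is used only as a proxy for accuracy, since measuring true accuracy and cross-system consistency requires comparing against a trusted reference source.

```python
from datetime import date


def quality_metrics(records, required_fields, is_valid, as_of, max_age_days):
    """Return completeness, validity, and timeliness as fractions between 0 and 1."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "validity": 0.0, "timeliness": 0.0}
    complete = sum(all(r.get(f) not in (None, "") for f in required_fields) for r in records)
    valid = sum(bool(is_valid(r)) for r in records)
    timely = sum((as_of - r["last_verified"]).days <= max_age_days for r in records)
    return {
        "completeness": complete / total,
        "validity": valid / total,  # a rule-based proxy; true accuracy needs a trusted reference
        "timeliness": timely / total,
    }


suppliers = [
    {"name": "A", "email": "a@example.com", "last_verified": date(2024, 1, 1)},
    {"name": "B", "email": "", "last_verified": date(2023, 6, 1)},
]
print(quality_metrics(suppliers, ["name", "email"],
                      lambda r: "@" in r.get("email", ""),
                      as_of=date(2024, 3, 1), max_age_days=365))
```

Tracking these ratios over time, rather than as one-off snapshots, is what reveals whether cleansing and validation efforts are actually working.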

Metadata Management and Usage

Metadata management is often overlooked but plays a critical role in TIBCO operations. Metadata provides information about data, including its source, structure, lineage, and usage. Effective metadata management ensures transparency and supports decision-making by revealing the context of each data element.

Documenting metadata involves capturing definitions, field properties, relationships, and dependencies. This documentation facilitates understanding of data flows and simplifies the resolution of issues when they arise. Metadata also enables impact analysis; when changes are required, understanding the dependencies prevents unintended consequences across related datasets.
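Impact analysis over metadata is essentially a graph traversal: starting from the element to be changed, follow the recorded dependencies to find everything downstream. The breadth-first sketch below assumes lineage has been captured as a mapping from each element to the elements that depend on it; the element names are hypothetical.

```python
from collections import deque

# Hypothetical lineage: element -> elements derived from or depending on it.
DEPENDENTS = {
    "customer.postal_code": ["customer.region", "address_validation_rule"],
    "customer.region": ["sales_territory_report", "erp_customer_feed"],
    "address_validation_rule": [],
}


def impacted_by(element, dependents):
    """Breadth-first walk returning every downstream element, nearest first."""
    seen, order = set(), []
    queue = deque([element])
    while queue:
        current = queue.popleft()
        for dep in dependents.get(current, []):
            if dep not in seen:  # the seen set also guards against cyclic lineage
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order


print(impacted_by("customer.postal_code", DEPENDENTS))
```

A change to the postal code attribute therefore touches not only the derived region but also a report and an outbound feed two steps away, which is exactly the kind of indirect dependency impact analysis is meant to surface.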

Furthermore, leveraging metadata supports compliance and audit requirements. Regulators often demand clear evidence of how data is managed and transformed, and metadata provides an organized and accessible record. By maintaining a robust metadata repository, organizations enhance their ability to govern data efficiently, integrate systems seamlessly, and preserve trust in their master data.

Security and Access Management

Protecting sensitive master data is a non-negotiable requirement in TIBCO deployments. Security and access management practices prevent unauthorized access, ensure confidentiality, and uphold regulatory compliance. Implementing role-based access controls (RBAC) is fundamental for restricting system operations based on user responsibilities.

User authentication and authorization mechanisms safeguard the platform from unauthorized manipulation. Access policies should define who can view, create, modify, or delete master data records. Segregation of duties is another critical practice that prevents conflicts of interest and reduces the risk of errors or malicious activities.
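Segregation of duties can be enforced at two levels: by checking role assignments for conflicting duty pairs, and by checking each individual change so that its requester cannot also approve it. The sketch below shows both checks; the duty names and conflict pairs are hypothetical and not drawn from TIBCO MDM's role model.

```python
# Hypothetical pairs of duties that one person should not hold together.
CONFLICTING_DUTIES = [
    ("create_supplier", "approve_supplier"),
    ("modify_bank_details", "approve_payment_setup"),
]


def sod_violations(user_duties):
    """user_duties: dict of user -> set of duties. Returns (user, duty_a, duty_b) conflicts."""
    violations = []
    for user, duties in sorted(user_duties.items()):
        for duty_a, duty_b in CONFLICTING_DUTIES:
            if duty_a in duties and duty_b in duties:
                violations.append((user, duty_a, duty_b))
    return violations


def can_approve(change, approver):
    # Transaction-level check: nobody approves a change they requested themselves.
    return approver != change["requested_by"]


assignments = {
    "alice": {"create_supplier", "approve_supplier"},
    "bob": {"create_supplier"},
}
print(sod_violations(assignments))
```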

Encrypting data both at rest and in transit adds another layer of security: as long as the encryption keys themselves remain protected, data that is intercepted or accessed by unauthorized parties stays unreadable. Periodic security audits, vulnerability assessments, and monitoring of system logs reinforce the security posture, maintaining the confidentiality and integrity of critical business data.

Advanced Workflow Management

TIBCO provides powerful workflow capabilities that allow organizations to define complex business processes for data management. Effective workflow design ensures that data moves through structured, controlled, and auditable paths, minimizing errors and improving operational efficiency.

Workflow automation reduces manual intervention, speeds up approval processes, and ensures consistency in data handling. Conditional logic within workflows can enforce business rules dynamically, allowing the system to respond intelligently to varying scenarios. Notifications and alerts embedded in workflows ensure that relevant stakeholders are informed of critical events, enabling timely action.

Monitoring workflow performance is essential to optimize processes. Tracking key metrics such as processing times, bottlenecks, and error rates helps identify inefficiencies and informs continuous improvement efforts. Advanced workflow management not only enhances data quality and governance but also strengthens overall organizational agility by ensuring that master data processes are predictable and reliable.

Change Management in MDM Implementation

Implementing TIBCO often requires significant organizational and technical change. Change management practices ensure smooth transitions, minimize resistance, and foster adoption among users. A structured change management approach involves communication, training, stakeholder engagement, and iterative implementation strategies.

Training is essential for enabling users to leverage the MDM platform effectively. Providing hands-on sessions, user manuals, and ongoing support empowers staff to understand workflows, data governance policies, and troubleshooting practices. Communicating the benefits of accurate master data, such as improved reporting and operational efficiency, reinforces user buy-in and encourages active participation.

Incremental deployment strategies reduce risk by rolling out new processes or modules in phases. This approach allows teams to validate functionality, identify challenges, and adjust workflows before a full-scale implementation. Effective change management ensures that the MDM system becomes an integrated part of organizational operations rather than a disruptive technology.

Analytics and Reporting in TIBCO MDM

Leveraging the analytics and reporting capabilities within TIBCO MDM turns raw data into actionable insights. Accurate master data provides a reliable foundation for analytics, enabling organizations to measure performance, track trends, and support strategic decisions.

Reports generated from master data can span operational, tactical, and strategic perspectives. Operational reports focus on day-to-day activities, ensuring that transactions and processes are running smoothly. Tactical reports help monitor team performance, data quality metrics, and workflow efficiency. Strategic reports provide leadership with insights into long-term trends, resource allocation, and business opportunities.
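Data quality metrics of the kind used in tactical reports can be as simple as attribute completeness. The sketch below, assuming hypothetical customer records held as Python dictionaries, computes the share of records that have a non-empty value for each attribute; the `completeness` function and the sample data are illustrative only.

```python
# Hypothetical customer master records; None or "" marks a missing value.
records = [
    {"id": "C1", "name": "Acme", "email": "ops@acme.example", "country": "US"},
    {"id": "C2", "name": "Globex", "email": "", "country": "DE"},
    {"id": "C3", "name": "Initech", "email": None, "country": None},
    {"id": "C4", "name": "Umbrella", "email": "it@umbrella.example", "country": "UK"},
]


def completeness(records, attributes):
    """Fraction of records (0.0 to 1.0) holding a non-empty value for each attribute."""
    if not records:
        return {attr: 0.0 for attr in attributes}
    return {
        attr: sum(1 for r in records if r.get(attr) not in (None, "")) / len(records)
        for attr in attributes
    }


for attr, score in completeness(records, ["name", "email", "country"]).items():
    print(f"{attr:<8} {score:.0%}")
```

For this sample, `name` is fully populated, `email` is half complete, and `country` is three-quarters complete. Tracked over time, such scores show whether stewardship efforts are actually improving data quality.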

Integrating analytics dashboards enhances visibility, allowing users to explore data interactively and identify patterns or anomalies quickly. Real-time reporting capabilities empower organizations to respond to changing conditions promptly. By embedding analytics and reporting into MDM processes, organizations can maximize the value of their data and strengthen decision-making across all levels.

System Scalability and Future-Ready Architecture

A TIBCO MDM deployment must support growing business needs and evolving data requirements. Planning for scalability ensures that the platform can handle increasing volumes of data, users, and transactions without performance degradation. Scalability strategies include optimizing infrastructure, distributing workloads, and using cloud-based resources where appropriate.
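One common way to distribute workloads is hash partitioning: each record key is mapped deterministically to one of N workers, so the same record is always processed in the same place and load spreads roughly evenly. The Python below is a generic sketch of that technique, not a TIBCO MDM feature; the `partition_for` function and the key format are hypothetical.

```python
import hashlib
from collections import Counter


def partition_for(record_key: str, num_workers: int) -> int:
    """Map a record key to a worker index; the same key always lands on the same worker."""
    # hashlib gives a digest that is stable across processes, unlike Python's built-in
    # hash(), which is randomized per interpreter run for strings.
    digest = hashlib.sha256(record_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


keys = [f"CUST-{i:05d}" for i in range(10_000)]
load = Counter(partition_for(k, 4) for k in keys)
print(sorted(load.items()))  # roughly 2,500 keys per worker
```

One design caveat: with plain modulo hashing, changing the number of workers reassigns most keys. Consistent hashing limits that reshuffling when workers are added or removed.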

Future-ready architecture emphasizes flexibility and adaptability. Designing modular processes, standardized interfaces, and configurable workflows allows organizations to adjust rapidly to changing business conditions. Scalable data models accommodate new entities, relationships, and attributes without requiring extensive redesign, preserving system stability and reducing implementation costs.
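To illustrate how a data model can absorb new attributes without redesign, the sketch below keeps attribute definitions as metadata that can grow at runtime, so adding an attribute is a configuration change rather than a schema rewrite. The `EntityType` class is hypothetical and does not reflect how TIBCO MDM stores its repositories internally.

```python
from dataclasses import dataclass, field


@dataclass
class EntityType:
    """Hypothetical entity definition whose attribute registry can grow at runtime."""
    name: str
    attributes: dict = field(default_factory=dict)  # attribute name -> Python type

    def add_attribute(self, attr_name: str, attr_type: type) -> None:
        """Register a new attribute; existing records stay valid."""
        if attr_name in self.attributes:
            raise ValueError(f"{attr_name} already defined on {self.name}")
        self.attributes[attr_name] = attr_type

    def validate(self, record: dict) -> list:
        """Return a list of problems; an empty list means the record conforms."""
        problems = []
        for key, value in record.items():
            expected = self.attributes.get(key)
            if expected is None:
                problems.append(f"unknown attribute: {key}")
            elif not isinstance(value, expected):
                problems.append(f"{key}: expected {expected.__name__}")
        return problems


product = EntityType("Product", {"sku": str, "name": str})
print(product.validate({"sku": "A-1", "weight_kg": 2.5}))  # ['unknown attribute: weight_kg']
product.add_attribute("weight_kg", float)
print(product.validate({"sku": "A-1", "weight_kg": 2.5}))  # []
```

A production model would also cover relationships, versioning, and richer validation rules; the point here is only that new attributes extend metadata instead of forcing structural change.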

Monitoring resource utilization, conducting periodic capacity planning, and implementing proactive scaling measures ensure that the MDM environment remains resilient. A focus on scalability and future-ready design enables organizations to maintain high performance, minimize disruptions, and confidently expand their data management capabilities as business demands grow.
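Capacity planning often starts with a simple growth projection. Assuming usage grows at a constant monthly rate g, solving current × (1 + g)^t = headroom × capacity for t gives the number of months until usage reaches a chosen fraction of capacity. The function below is a sketch of that arithmetic; the `months_until_threshold` name, the 80% default headroom, and the example figures are assumptions, not platform limits.

```python
import math


def months_until_threshold(current: float, capacity: float,
                           monthly_growth: float, headroom: float = 0.8) -> float:
    """Months until usage reaches headroom * capacity under compound monthly growth.

    Solves current * (1 + monthly_growth) ** t = headroom * capacity for t.
    """
    target = headroom * capacity
    if current >= target:
        return 0.0  # already at or past the planning threshold
    if monthly_growth <= 0 or current <= 0:
        return math.inf  # flat, shrinking, or zero usage never reaches the threshold
    return math.log(target / current) / math.log(1 + monthly_growth)


months = months_until_threshold(current=2_000_000, capacity=10_000_000,
                                monthly_growth=0.05)
print(f"{months:.1f} months until the 80% threshold")  # 28.4 months until the 80% threshold
```

For example, 2 million records against a 10 million record capacity, growing 5% per month, reach the 80% mark in about 28.4 months. Rerunning the projection as actual growth figures arrive keeps the estimate current.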

Conclusion

The TB0-124 certification represents a significant milestone for professionals seeking to demonstrate their expertise in TIBCO MDM. Achieving this credential validates a deep understanding of master data management concepts, platform architecture, data modeling, configuration, integration, security, and best practices. It equips individuals with the skills necessary to implement robust and efficient MDM solutions that drive organizational efficiency and support data-driven decision-making.

Mastering TIBCO MDM requires not only technical knowledge but also a strategic approach to data governance, system integration, and performance optimization. By adhering to best practices, understanding user management and security, and maintaining consistent data synchronization, certified professionals can ensure that their organization’s critical data remains accurate, secure, and accessible.

Ultimately, the TB0-124 certification opens doors to new career opportunities, positions individuals as valuable assets within their organizations, and fosters confidence in their ability to manage complex data environments. It is a testament to a professional’s dedication to excellence in the field of master data management and their commitment to leveraging technology for meaningful business outcomes.