Certification: IBM Certified Solution Developer - InfoSphere DataStage v11.3

Certification Provider: IBM

Exam Code: C2090-424

Exam Name: InfoSphere DataStage v11.3

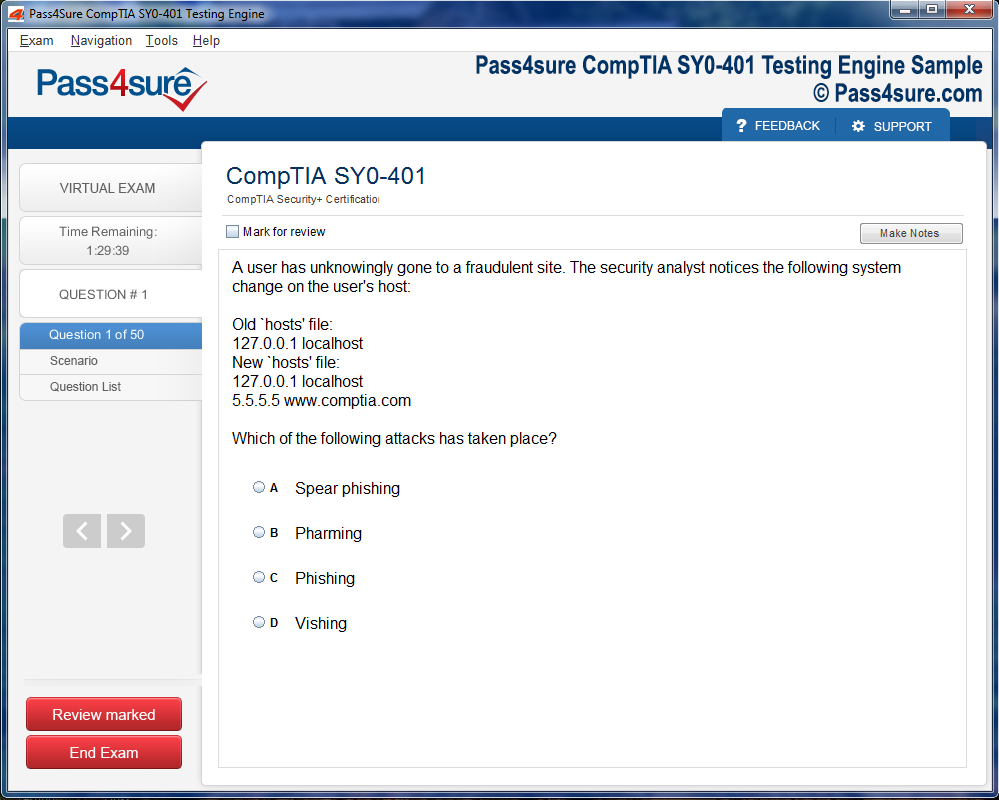

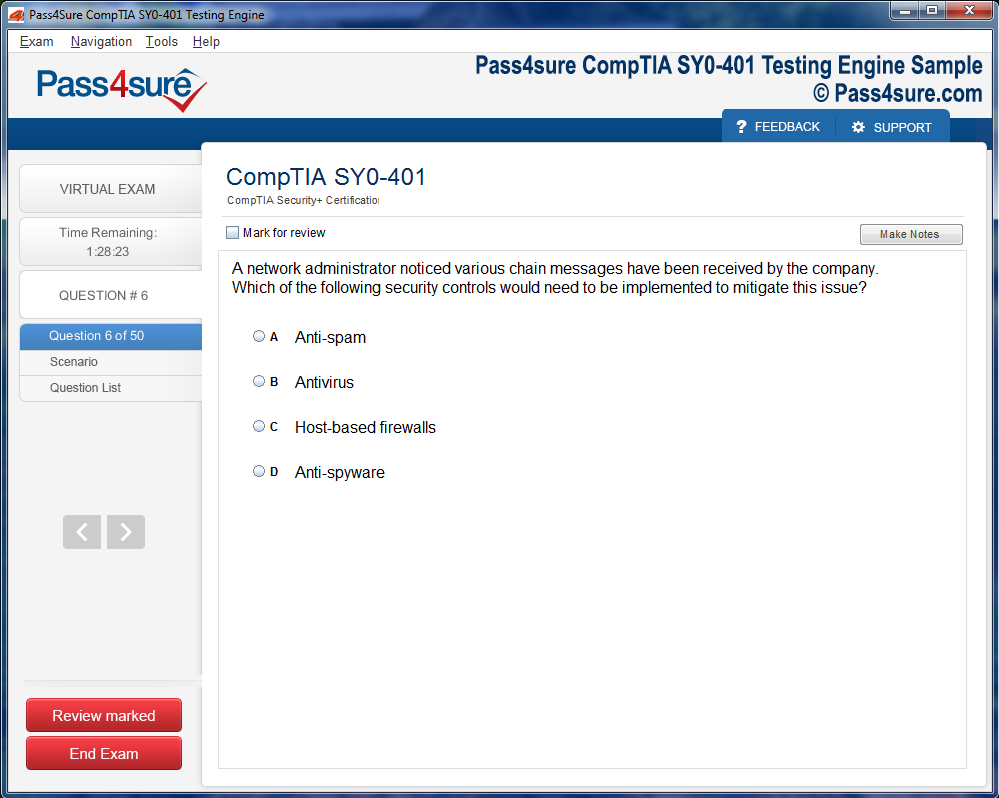

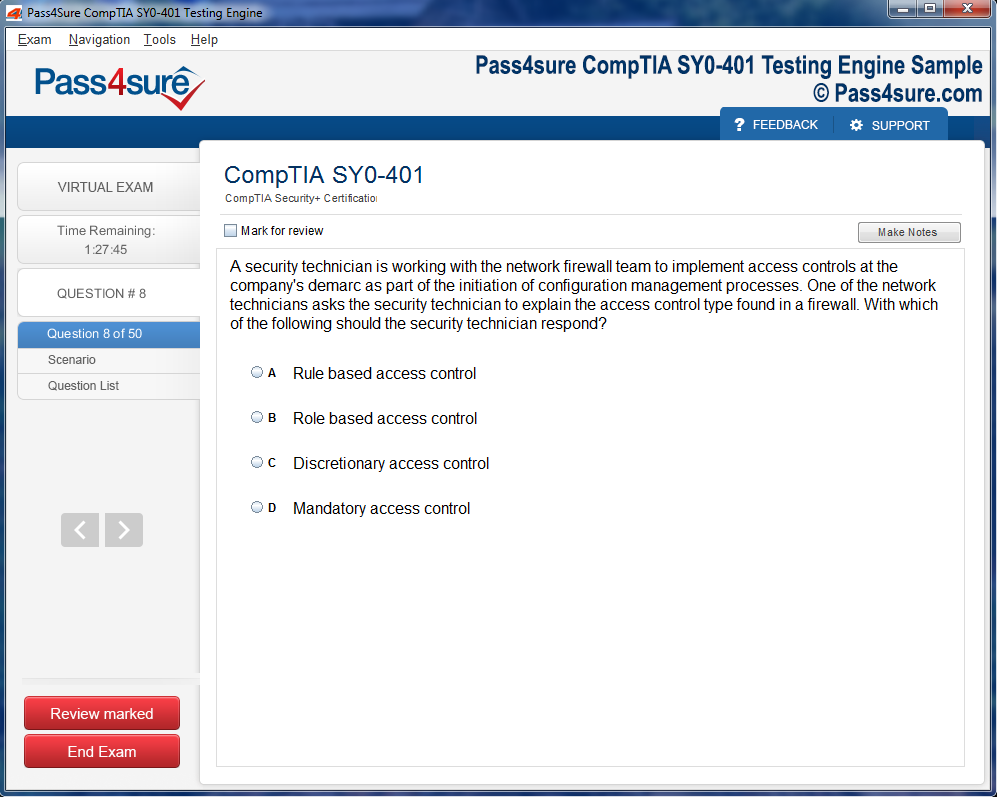

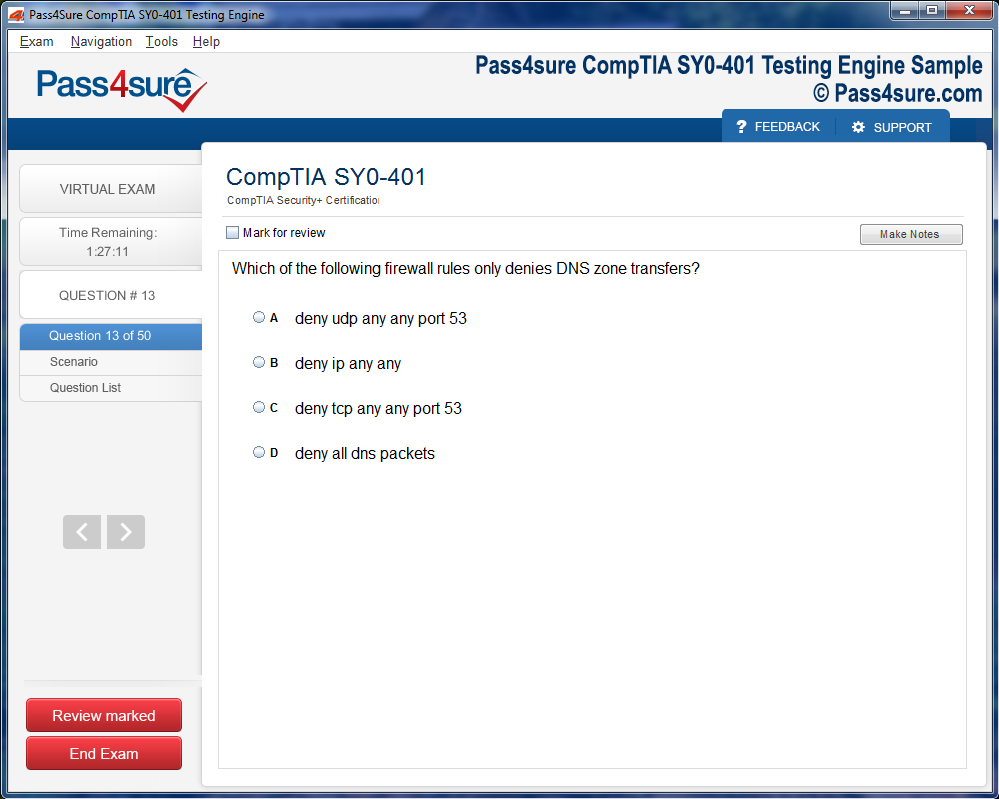

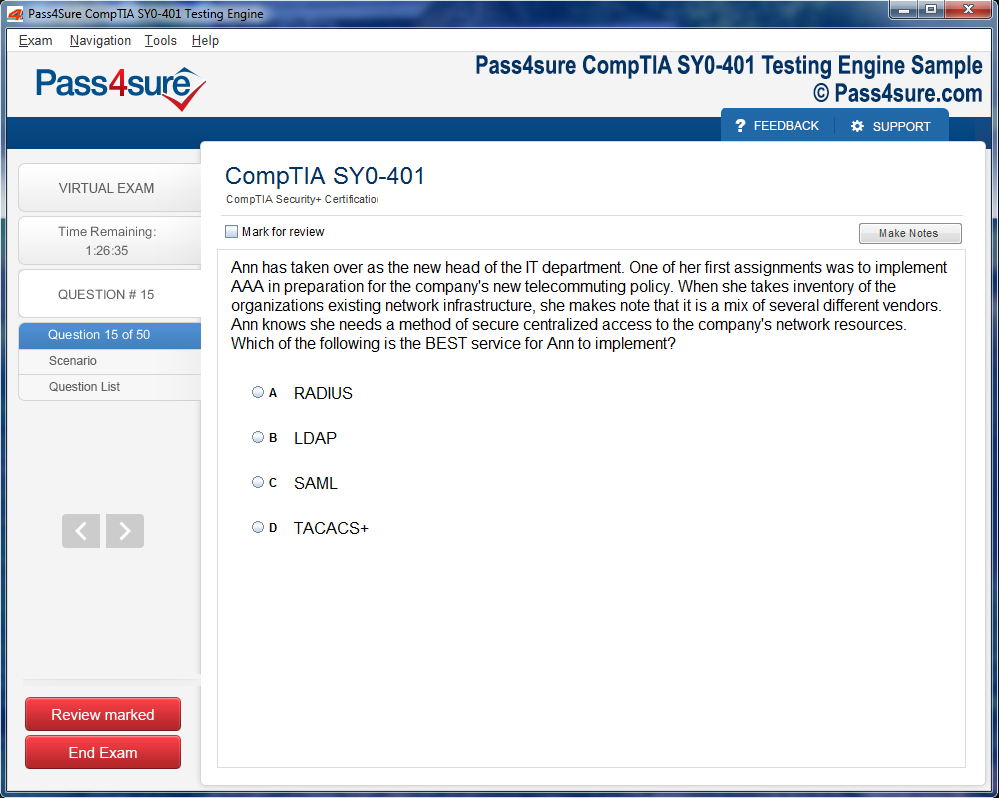

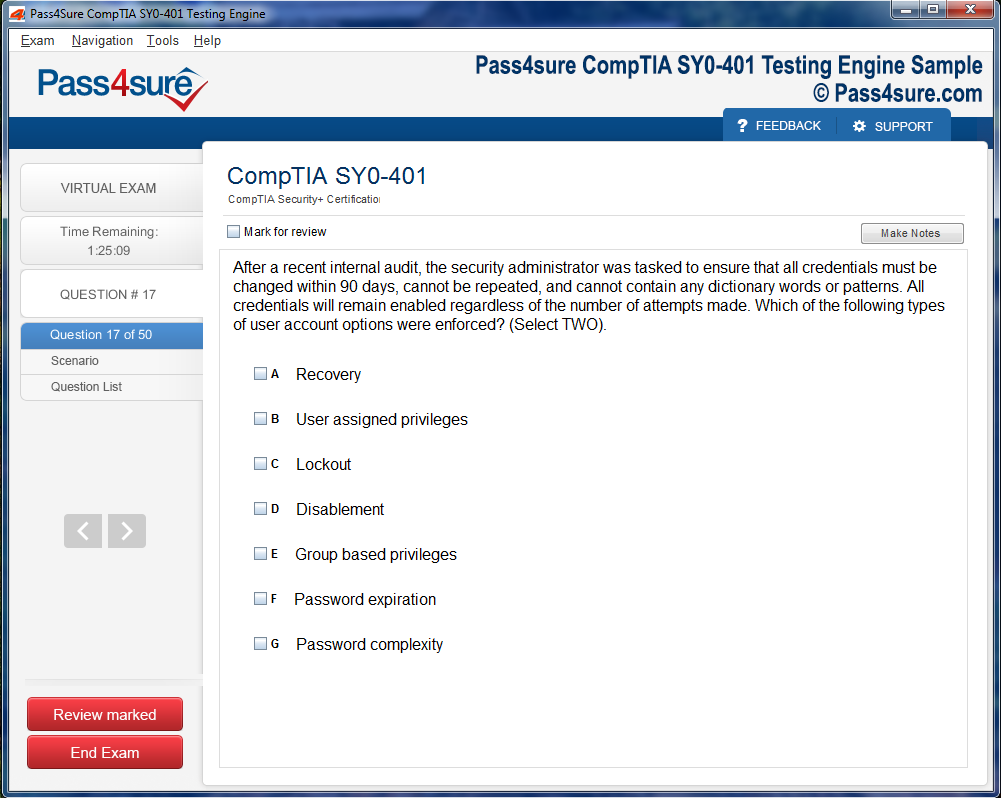

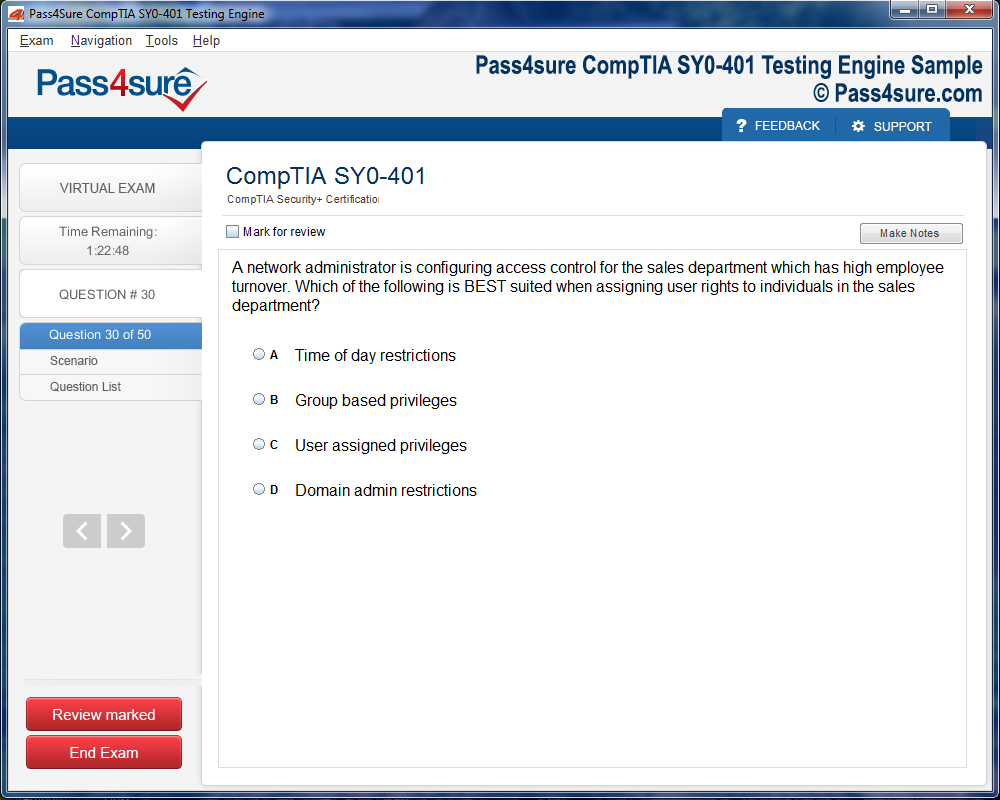

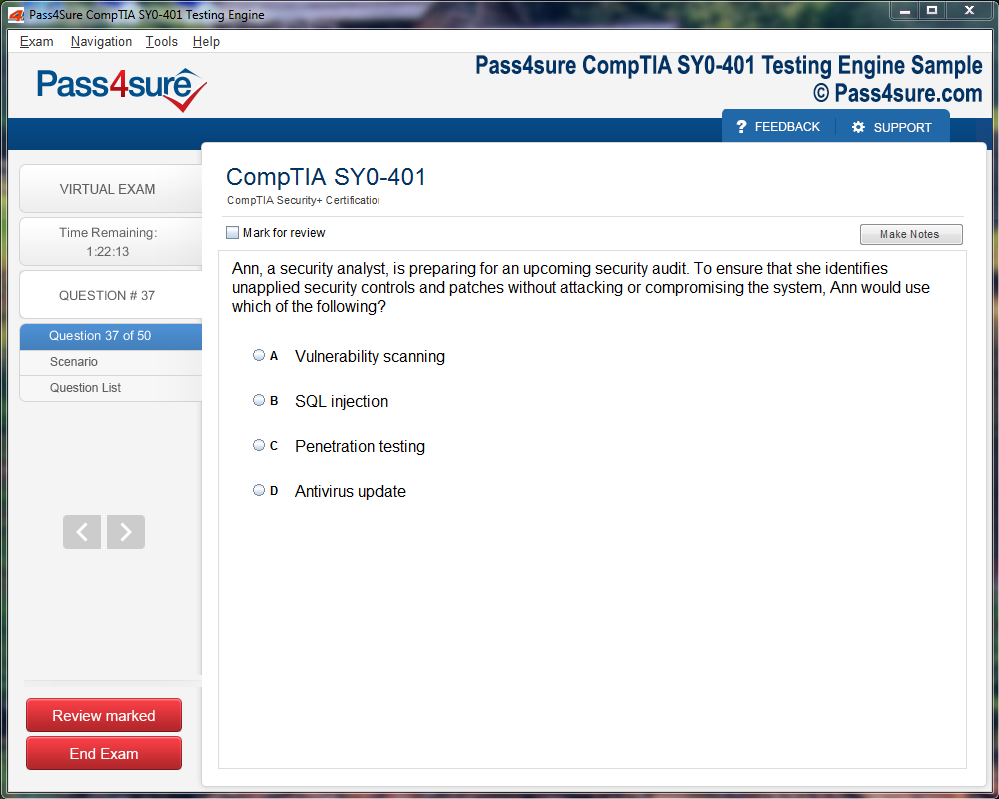

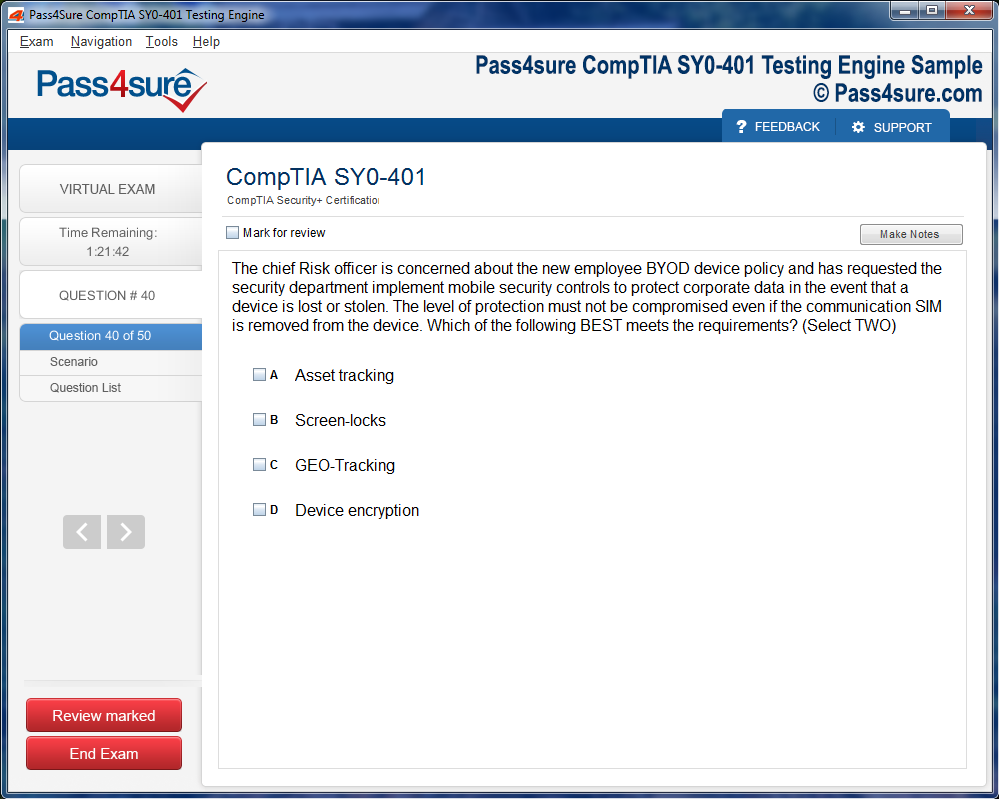

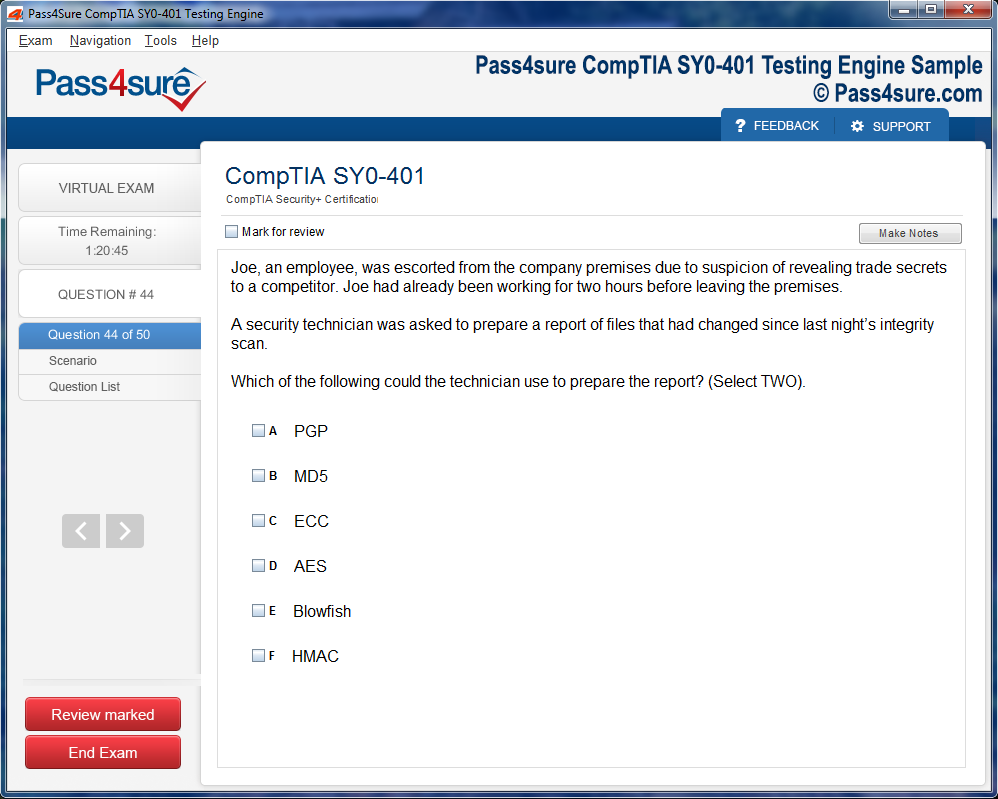

Product Screenshots

How to Earn the IBM Certified Solution Developer InfoSphere DataStage v11.3 Credential

The IBM Certified Solution Developer InfoSphere DataStage v11.3 credential is a significant milestone for data integration professionals seeking to validate their expertise in enterprise ETL solutions. It demonstrates proficiency in designing, developing, and deploying robust data integration solutions on the InfoSphere DataStage platform. As organizations increasingly rely on data-driven decision making, demand for skilled DataStage developers continues to grow across industries.

Achieving this certification requires dedication, structured preparation, and hands-on experience with the platform. Candidates must immerse themselves in both theoretical concepts and practical applications. The certification validates your ability to work with parallel jobs, understand metadata management, implement data quality rules, and optimize performance for large-scale data integration projects in real-world business scenarios.

Prerequisites and Required Knowledge Base for DataStage Certification

Before attempting the IBM InfoSphere DataStage v11.3 certification exam, candidates should possess fundamental knowledge of database concepts, SQL programming, and basic ETL principles. A strong foundation in data warehousing concepts helps significantly when understanding how DataStage fits into broader enterprise architecture. Most successful candidates have at least six months to one year of hands-on experience working with DataStage in production environments before attempting the certification.

Understanding configuration files and scripting languages proves essential for DataStage mastery. DataStage professionals must become proficient in parameter files, environment variables, and job sequencing scripts. Familiarity with Unix/Linux operating systems enhances your ability to troubleshoot issues, manage server resources, and implement automation solutions that streamline data integration workflows.

Installation and Environment Setup for Hands-On Practice Sessions

Setting up a proper practice environment is crucial for certification success. IBM provides evaluation versions of InfoSphere DataStage that allow candidates to gain hands-on experience without requiring enterprise licenses. Installing DataStage on your local machine or virtual environment enables unlimited practice time to experiment with different stages, transformers, and job designs. Ensure your system meets the minimum hardware requirements, including adequate RAM, processor speed, and disk space for optimal performance.

Infrastructure automation tools can simplify environment management significantly. Infrastructure-as-code tools such as Terraform can help automate provisioning of the machines that host a DataStage environment in cloud or on-premises settings. Configure your development environment with sample databases, create test data sets, and establish connectivity to various data sources including relational databases, flat files, XML sources, and cloud storage platforms to replicate real-world integration scenarios.

Mastering DataStage Designer Interface and Core Components Effectively

The DataStage Designer interface serves as the primary workspace for developing ETL jobs. Familiarize yourself with the palette of stages, including database stages, file stages, processing stages, and development stages. Understanding how to drag and drop these components onto the canvas, configure their properties, and link them together forms the foundation of job development. Practice creating both server jobs and parallel jobs to understand their differences and appropriate use cases.

Learning the interface requires a systematic approach in which each tool you understand adds to your overall expertise. Explore the Director client for running and monitoring jobs and the Administrator client for managing users and projects. Note that the separate Manager client found in older releases does not exist in v11.3: its repository functions, such as import, export, and metadata browsing, are part of the Designer client. Each component plays a vital role in the DataStage ecosystem, and certification questions often test your knowledge of when and how to use each tool appropriately.

Parallel Job Design Patterns and Best Practices Implementation

Parallel jobs represent the core strength of IBM InfoSphere DataStage, enabling high-performance data processing through partitioning and parallel execution. Master the concept of partitioning methods including hash, modulus, random, round-robin, and range partitioning to distribute data effectively across multiple processing nodes. Understanding when to use each partitioning method based on data characteristics and transformation requirements is critical for both certification success and real-world performance optimization.

Beyond partitioning, DataStage developers must understand data flow optimization and resource management. Learn to implement proper sorting strategies, use buffering effectively, and minimize repartitioning overhead to create jobs that process millions of records efficiently while maintaining data integrity and transformation accuracy.
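To make the partitioning methods above concrete, here is a minimal Python sketch of hash, modulus, and round-robin distribution. It is only an illustration of the concepts, not how the DataStage engine is implemented; the column name `cust_id`, the four-node count, and the use of CRC32 as the hash function are all assumptions chosen for the example.

```python
from collections import defaultdict
from zlib import crc32


def hash_partition(rows, key, nodes):
    """Rows with the same key value always land in the same partition."""
    parts = defaultdict(list)
    for row in rows:
        parts[crc32(str(row[key]).encode()) % nodes].append(row)
    return parts


def modulus_partition(rows, key, nodes):
    """Partition on an integer key using key % nodes."""
    parts = defaultdict(list)
    for row in rows:
        parts[row[key] % nodes].append(row)
    return parts


def round_robin_partition(rows, nodes):
    """Deal rows out evenly, ignoring their content."""
    parts = defaultdict(list)
    for i, row in enumerate(rows):
        parts[i % nodes].append(row)
    return parts


# Hypothetical sample data: 20 rows spread over 5 customer ids.
rows = [{"cust_id": i % 5, "amount": i} for i in range(20)]
by_hash = hash_partition(rows, "cust_id", 4)
by_mod = modulus_partition(rows, "cust_id", 4)
by_rr = round_robin_partition(rows, 4)
```

Key-based methods (hash, modulus) keep related rows together, which matters for joins, aggregations, and duplicate removal; round-robin gives the most even spread but offers no such guarantee.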

Database Connectivity and SQL Transformation Techniques Mastery

DataStage supports connectivity to virtually every major database platform including Oracle, SQL Server, DB2, Teradata, MySQL, and PostgreSQL. Understanding how to configure ODBC connections, native database stages, and dynamic database queries is essential for the certification exam. Practice writing efficient SQL statements within DataStage stages, including SELECT statements with WHERE clauses, JOIN operations, and aggregate functions that push processing to the database when appropriate.

DataStage developers must decide, for each transformation, between processing in the database and processing in DataStage. Learn when to use native database stages versus ODBC stages, how to implement sparse lookups for dimension table queries, and master the use of the Transformer stage for complex business logic that cannot be efficiently handled through SQL alone in source databases.
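The trade-off between database processing and ETL-layer processing can be sketched in a few lines. The example below uses Python's built-in `sqlite3` module as a stand-in for any source database; the `orders` table and its columns are hypothetical. Both options produce the same totals, but the second moves far fewer rows out of the database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("EU", 100.0, "OPEN"), ("EU", 50.0, "CLOSED"),
     ("US", 75.0, "OPEN"), ("US", 25.0, "OPEN")],
)

# Option A: pull every row, then filter and aggregate in the ETL layer.
totals_in_etl = {}
for region, amount, status in conn.execute(
        "SELECT region, amount, status FROM orders"):
    if status == "OPEN":
        totals_in_etl[region] = totals_in_etl.get(region, 0.0) + amount

# Option B: push the filter and the aggregation down to the database.
pushed_down = dict(conn.execute(
    "SELECT region, SUM(amount) FROM orders WHERE status = ? GROUP BY region",
    ("OPEN",),
))
```

Pushing work down usually pays off when the database can filter or aggregate before rows cross the network; logic that SQL expresses poorly is typically a better fit for a Transformer stage.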

File Handling and Data Format Processing Capabilities

DataStage excels at processing various file formats including sequential files, complex flat files, XML documents, and Excel spreadsheets. Master the Sequential File stage for reading and writing delimited and fixed-width files, understanding options for header rows, quote characters, null handling, and record delimiters. Practice working with the Complex Flat File stage for hierarchical file structures that contain parent-child relationships within a single flat file format.

File handling in DataStage requires both theoretical knowledge and practical troubleshooting skills. Learn to process XML files using the XML Input and XML Output stages, understanding XPath expressions and schema validation. Master techniques for handling large files through row buffering, understand how to process compressed files directly, and implement error handling for malformed records that might otherwise cause job failures.
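The idea of rejecting malformed records instead of failing the whole job can be sketched outside DataStage. The following Python example reads a pipe-delimited file with a header row and a quote character, and routes bad rows to a reject list with a reason. The three-column layout and the sample data are hypothetical, and this mimics the concept of a reject link rather than the Sequential File stage itself.

```python
import csv
import io

EXPECTED_COLUMNS = 3  # hypothetical layout: id, name, amount


def read_with_rejects(text, delimiter="|"):
    """Split parsed rows into (good, rejected) instead of failing the run."""
    good, rejected = [], []
    reader = csv.reader(io.StringIO(text), delimiter=delimiter, quotechar='"')
    header = next(reader)  # first line is treated as column names
    for line_no, fields in enumerate(reader, start=2):
        if len(fields) != EXPECTED_COLUMNS:
            rejected.append((line_no, fields, "wrong column count"))
            continue
        record = dict(zip(header, fields))
        try:
            record["amount"] = float(record["amount"])
        except ValueError:
            rejected.append((line_no, fields, "amount is not numeric"))
            continue
        good.append(record)
    return good, rejected


# The quoted name contains the delimiter; lines 3 and 4 are malformed.
sample = 'id|name|amount\n1|"Smith|Co"|10.5\n2|Jones\n3|Lee|abc\n'
good, rejected = read_with_rejects(sample)
```

Keeping the line number and a reason with each rejected row makes later troubleshooting much easier than a bare count of failures.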

Transformer Stage Logic and Business Rules Implementation

The Transformer stage serves as the workhorse of DataStage parallel jobs, enabling complex data transformations, business logic implementation, and derivation of new columns from existing data. Master the use of stage variables for sequential processing and lookups, understand constraint expressions for filtering records, and learn to use derivation expressions effectively. Practice writing expressions using DataStage's built-in functions for string manipulation, date/time conversion, mathematical operations, and null handling.

Advanced transformation logic requires methodical practice, because each concept builds upon previous knowledge. Learn to implement slowly changing dimension logic within Transformer stages, create custom functions for reusable business rules, and use loop variables for complex iterative processing. Understanding transformer optimization techniques including constraint ordering and stage variable efficiency helps create jobs that maintain high performance even with complex transformation requirements.
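Stage variables are easiest to understand as state that survives from one row to the next. The Python sketch below imitates that behavior to detect a key change and keep a running total per key; the column names `acct` and `amount` are hypothetical, and the code is an analogy rather than Transformer expression syntax.

```python
def running_total_by_key(rows):
    """Emulate stage variables: state carried from one row to the next."""
    prev_key = None   # plays the role of a stage variable holding the last key
    running = 0.0     # plays the role of a stage variable holding the total
    out = []
    for row in rows:  # rows must arrive sorted on the key
        is_new_key = row["acct"] != prev_key
        running = row["amount"] if is_new_key else running + row["amount"]
        out.append({**row, "is_new_key": is_new_key, "running_total": running})
        prev_key = row["acct"]
    return out


result = running_total_by_key([
    {"acct": "A", "amount": 10.0},
    {"acct": "A", "amount": 5.0},
    {"acct": "B", "amount": 7.0},
])
```

As in a parallel job, the pattern only works if all rows for a key arrive together and in order, so the data must be partitioned and sorted on that key before it reaches this logic.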

Lookup Stages and Reference Data Integration Strategies

Lookup operations are fundamental to data integration, enabling enrichment of source data with reference information from dimension tables or external sources. Master the main lookup options, including the Lookup stage with normal or sparse database lookups, range lookups, and the Lookup File Set stage, understanding when each provides optimal performance. Practice choosing between sparse and normal lookups based on the relative sizes of the input and reference data: a normal lookup loads the reference data into memory, while a sparse lookup queries the database for each input row and therefore suits a small input stream run against a very large reference table.

Lookup design demands both strategic thinking and attention to detail. Learn to handle lookup failures gracefully through reject links and default value assignments, implement multiple lookups efficiently within a single job, and understand how lookup caching affects memory utilization and performance. Master techniques for self-referencing lookups and implementing business logic that depends on hierarchical reference data relationships.
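The two common ways of handling a failed lookup, rejecting the row or continuing with default values, can be sketched as follows. This is an in-memory illustration in Python, not the Lookup stage itself; the `on_miss` parameter, the `segment` column, and the sample keys are hypothetical names invented for the example.

```python
def lookup(rows, reference, key, on_miss="reject", default=None):
    """Enrich rows from an in-memory reference; route misses per on_miss."""
    matched, rejects = [], []
    for row in rows:
        ref = reference.get(row[key])
        if ref is not None:
            matched.append({**row, **ref})
        elif on_miss == "continue":
            # Keep the row and fill reference columns with default values.
            matched.append({**row, **(default or {})})
        else:
            # Send the row down the "reject link" for later inspection.
            rejects.append(row)
    return matched, rejects


reference = {"C1": {"segment": "GOLD"}}
rows = [{"cust_id": "C1", "amt": 10}, {"cust_id": "C9", "amt": 5}]

strict_matched, strict_rejects = lookup(rows, reference, "cust_id")
lenient_matched, lenient_rejects = lookup(
    rows, reference, "cust_id", on_miss="continue",
    default={"segment": "UNKNOWN"},
)
```

Which behavior is right depends on the business rule: rejecting preserves data quality in the target, while continuing with defaults keeps row counts intact for downstream reconciliation.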

Job Sequencing and Workflow Orchestration Fundamentals

Job sequences enable orchestration of multiple DataStage jobs into cohesive workflows with conditional logic, loops, and error handling. Master the Job Activity stage for invoking parallel or server jobs within sequences, understand how to pass parameters between jobs, and implement conditional branching using trigger expressions and Nested Condition activities, with Sequencer activities used to synchronize multiple incoming links. Practice creating robust workflows that handle exceptions gracefully, send notifications on failures, and implement retry logic for transient errors.

Workflow design requires comprehensive planning, where understanding the overall structure is as important as the individual components. Learn to use Wait For File activities for event-driven processing, implement loops for processing multiple files or date ranges, and use Notification activities to send email for operational monitoring. Master the use of nested sequences for modular workflow design and understand how to implement restart capabilities for long-running batch processes.

Parameter Management and Configuration Control Techniques

Parameters enable job reusability and environment portability by externalizing configuration values from job designs. Master the use of job parameters for values that change at runtime, environment variables for settings that vary between development, testing, and production environments, and parameter sets for grouping related configuration values. Practice creating parameter files for batch job invocation and understand how to override parameter values at different levels.

Configuration management rewards a systematic approach. Learn to implement encrypted parameters for sensitive information like passwords and connection strings, use parameter validation to prevent invalid values, and leverage default values to simplify job invocation. Understanding the parameter precedence hierarchy helps troubleshoot unexpected behavior and ensures consistent job execution across environments.
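A layered override scheme like the one described above can be modeled with a simple lookup chain. The sketch below assumes three layers (design-time defaults, per-environment values, and runtime overrides) with later layers winning; that ordering and all parameter names are assumptions for illustration, so confirm the actual precedence rules in the product documentation.

```python
from collections import ChainMap

# Hypothetical parameter layers, from lowest to highest precedence.
design_defaults = {"SRC_DB": "DEVDB", "COMMIT_ROWS": "2000", "RUN_DATE": ""}
environment_values = {"SRC_DB": "PRODDB"}        # e.g. a per-environment file
runtime_overrides = {"RUN_DATE": "2024-01-31"}   # e.g. supplied at invocation


def resolve(*layers):
    """Return effective values; the first layer listed wins on conflicts."""
    return dict(ChainMap(*layers))


def source_of(name, **named_layers):
    """Report which layer supplied a value (layers given highest first)."""
    for layer_name, layer in named_layers.items():
        if name in layer:
            return layer_name
    return None


effective = resolve(runtime_overrides, environment_values, design_defaults)
origin = source_of("SRC_DB", runtime=runtime_overrides,
                   environment=environment_values, design=design_defaults)
```

When a job behaves unexpectedly in one environment, tracing each value back to the layer that supplied it is often the quickest way to find the cause.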

Error Handling and Data Quality Validation Approaches

Robust error handling distinguishes production-quality DataStage jobs from simple prototypes. Master the use of reject links to capture records that fail validation rules, understand how to implement data quality checks using Transformer constraints, and learn to log rejected records with descriptive error messages for troubleshooting. Practice implementing different reject handling strategies based on business requirements, including job termination on errors versus continuation with rejected record logging.

Quality validation depends on systematic review to identify areas for improvement. Implement data quality stages for standardization, matching, and survivorship rules when processing customer or product master data. Master techniques for implementing business rules validation, referential integrity checks, and data profiling to ensure that data integration processes deliver trusted information to downstream systems and analytics platforms.

Performance Optimization and Tuning Strategies for Large Datasets

Performance optimization is critical for DataStage jobs processing millions or billions of records in batch windows. Master techniques for analyzing job performance using the DataStage Director's performance statistics, understand how to identify bottlenecks through stage execution times and row counts, and learn to implement solutions based on performance analysis. Practice optimizing partitioning strategies to balance workload across nodes and minimize data skew.

Performance tuning requires deep system understanding, because architectural decisions affect overall efficiency. Learn to tune buffer sizes for optimal memory utilization, implement parallel processing configurations based on available server resources, and use database push-down operations when appropriate. Master techniques for optimizing lookup operations through proper indexing, sort optimization through early sorting, and aggregation optimization through proper grouping strategies.
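Data skew is easier to reason about once it is reduced to a number. A minimal sketch, assuming you have already collected per-partition row counts from job monitoring output (the counts below are made up):

```python
def skew_ratio(partition_row_counts):
    """Largest partition divided by the mean; 1.0 means perfectly balanced."""
    mean = sum(partition_row_counts) / len(partition_row_counts)
    return max(partition_row_counts) / mean if mean else 0.0


balanced = skew_ratio([250, 250, 250, 250])
skewed = skew_ratio([700, 100, 100, 100])
```

In the skewed case one node handles almost three times the average load, so the whole job waits on that node; changing the partitioning key or method is usually the first thing to try.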

Metadata Management and Repository Navigation Skills

The DataStage repository stores all metadata about jobs, stages, table definitions, and routines. Master the repository view in the Designer client, which in v11.3 includes the functions of the former Manager client, for browsing repository contents, searching for objects, and analyzing metadata dependencies. Practice exporting and importing job designs between projects and environments, understanding the importance of table definitions and shared containers for promoting consistency and reusability across development teams.

Metadata management resembles source code management: understanding relationships and dependencies ensures maintainability. Learn to use impact analysis features to identify which jobs are affected by changes to table definitions or shared containers, implement naming conventions for objects to improve searchability, and use parameter sets for consistent configuration management across related jobs.

Data Warehousing Integration and Dimensional Modeling Concepts

DataStage serves as a primary ETL tool for populating data warehouses, making understanding of dimensional modeling essential for certification. Master the concepts of fact tables and dimension tables, understand slowly changing dimension types, and learn to implement SCD Type 1, Type 2, and Type 3 processing logic in DataStage jobs. Practice creating jobs that load star schema and snowflake schema designs with proper surrogate key generation.

Warehouse loading builds on these modeling foundations, where architectural understanding drives implementation decisions. Learn to implement incremental loading strategies using change data capture techniques, master the use of Surrogate Key Generator stages for dimension management, and understand how to process late-arriving dimensions and facts. Implement efficient fact table loading through parallel partition-based processing and dimension lookups.
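SCD Type 2 processing is the variant that most often needs a worked example: a changed attribute expires the current row and adds a new version with a fresh surrogate key. The Python sketch below shows that logic on an in-memory list. The column names, the single tracked attribute `city`, and the 9999-12-31 end date convention are assumptions for the example; DataStage jobs would express the same steps with its own stages.

```python
from datetime import date
from itertools import count

HIGH_DATE = date(9999, 12, 31)   # assumed marker for "still current"


def apply_scd2(dimension, incoming, load_date, next_key):
    """Expire changed rows and append new versions to the dimension list."""
    current = {r["cust_id"]: r for r in dimension if r["end_date"] == HIGH_DATE}
    for src in incoming:
        old = current.get(src["cust_id"])
        if old is not None and old["city"] == src["city"]:
            continue                      # unchanged: nothing to do
        if old is not None:
            old["end_date"] = load_date   # close out the previous version
        dimension.append({
            "sk": next(next_key),         # surrogate key, independent of cust_id
            "cust_id": src["cust_id"],
            "city": src["city"],
            "start_date": load_date,
            "end_date": HIGH_DATE,
        })
    return dimension


dim = [{"sk": 1, "cust_id": "C1", "city": "Paris",
        "start_date": date(2023, 1, 1), "end_date": HIGH_DATE}]
apply_scd2(
    dim,
    [{"cust_id": "C1", "city": "Lyon"}, {"cust_id": "C2", "city": "Rome"}],
    date(2024, 6, 1),
    count(start=2),
)
```

By contrast, Type 1 would simply overwrite `city` in place, and Type 3 would keep the prior value in an extra column on the same row.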

Job Development Lifecycle and Version Control Practices

Professional DataStage development follows structured lifecycle practices including requirements analysis, design, development, testing, and deployment. Master the use of DataStage's built-in version control features for tracking changes to job designs, understand how to compare versions to identify differences, and learn to roll back to previous versions when necessary. Practice implementing peer review processes for job designs before promotion to production environments.

Development practices should follow software engineering discipline, where methodical processes ensure quality outcomes. Implement naming conventions and documentation standards for job designs, use annotations within jobs to explain complex logic, and maintain external documentation for operational procedures. Learn to integrate DataStage development with external version control systems like Git for enterprise-scale collaboration and change management.

Server Jobs and Their Specific Use Cases

While parallel jobs handle most production workloads, server jobs remain relevant for specific scenarios including complex transformations that require row-by-row processing, integration with legacy systems, and certain types of lookup operations. Master the differences between server jobs and parallel jobs in terms of architecture, available stages, and performance characteristics. Practice creating server jobs when parallel processing overhead outweighs benefits for small data volumes or complex sequential logic.

Choosing between job types is an architectural decision that depends on specific requirements. Learn to implement before/after SQL processing in server jobs, master the use of server job stages like Aggregator and Transformer that differ from parallel equivalents, and understand how to run server jobs alongside parallel jobs through Job Activity stages in a job sequence, or reuse server logic inside a parallel job through a server shared container, when necessary.

Containers and Code Reusability Patterns

Shared containers promote code reusability by encapsulating common transformation logic that can be referenced by multiple jobs. Master the creation of both local containers and shared containers, understanding when each type is appropriate based on reuse requirements. Practice creating parameterized shared containers that accept input parameters to customize behavior while maintaining a single source of truth for transformation logic.

Container design follows the same principle as other modular architectures: modular design enables efficiency and consistency. Learn to version shared containers appropriately to avoid breaking existing jobs when updating logic, implement testing procedures for shared containers before deployment, and use container parameters to enable flexible reuse across different business contexts while maintaining centralized control over transformation rules.

Exam Preparation Strategies and Study Resources

Effective exam preparation combines theoretical study with hands-on practice on real DataStage environments. Review IBM's official certification guide thoroughly, noting the exam objectives and weighting of different topics. Create a study schedule that allocates time proportional to topic importance and your current proficiency level. Practice answering sample questions to familiarize yourself with question formats and identify knowledge gaps.

Staying current with exam requirements is part of effective preparation. Join DataStage user communities and forums to learn from other professionals' experiences, participate in practice labs and hands-on workshops when available, and consider instructor-led training for complex topics. Create flashcards for memorizing stage properties, default values, and specific configuration options frequently tested on the exam.

Practice Labs and Real-World Scenario Simulation

Hands-on experience proves invaluable for certification success. Create practice scenarios that mirror real-world business requirements such as customer data integration, order processing workflows, and financial data consolidation. Implement complete solutions from requirements through testing, documenting your approach and lessons learned. Build increasingly complex scenarios that combine multiple concepts including lookups, aggregations, slowly changing dimensions, and error handling.

Practical application reinforces theoretical knowledge, and hands-on practice converts concepts into skills. Set up test databases with realistic data volumes to practice performance optimization, implement job scheduling to understand operational aspects, and practice troubleshooting deliberately introduced errors to develop diagnostic skills. Document your practice solutions as reference materials for exam review and future professional work.

Building Robust Change Data Capture Solutions

Change Data Capture represents a critical capability for maintaining data warehouse currency without full table reloads. Master techniques for implementing CDC using timestamp-based detection, trigger-based capture, and log-based CDC approaches. Learn to design DataStage jobs that identify inserted, updated, and deleted records efficiently by comparing source system snapshots or processing change logs. Practice implementing incremental loading patterns that minimize data transfer and processing time.

CDC implementation requires systematic planning and execution across different data platforms, including attention to infrastructure factors that affect data movement efficiency. Design jobs that handle edge cases including late-arriving changes, out-of-order updates, and source system outages. Master the use of the Change Capture and Change Apply stages available in DataStage for automated change detection and propagation to target systems while maintaining referential integrity.
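Snapshot comparison, the simplest of the CDC approaches mentioned above, can be sketched as a comparison of two keyed data sets. The Python example below classifies rows as inserts, updates, or deletes; the `id` key, the `name` column, and the sample snapshots are hypothetical, and the code illustrates the concept rather than any DataStage stage.

```python
def classify_changes(before, after, key, compare_cols):
    """Compare two snapshots and return (inserts, updates, deletes)."""
    before_by_key = {r[key]: r for r in before}
    after_by_key = {r[key]: r for r in after}
    inserts = [r for k, r in after_by_key.items() if k not in before_by_key]
    deletes = [r for k, r in before_by_key.items() if k not in after_by_key]
    updates = [
        r for k, r in after_by_key.items()
        if k in before_by_key
        and any(r[c] != before_by_key[k][c] for c in compare_cols)
    ]
    return inserts, updates, deletes


yesterday = [{"id": 1, "name": "a"}, {"id": 2, "name": "b"}, {"id": 3, "name": "c"}]
today = [{"id": 1, "name": "a"}, {"id": 2, "name": "B"}, {"id": 4, "name": "d"}]
inserts, updates, deletes = classify_changes(yesterday, today, "id", ["name"])
```

Snapshot comparison needs both full data sets, so it costs more than timestamp-based or log-based capture on large tables, but it is the only option of the three that detects deletes without help from the source system.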

Real-Time Integration with Near Real-Time Processing

While DataStage primarily handles batch processing, understanding near real-time integration patterns expands your architectural toolkit. Learn to implement micro-batch processing that runs frequently throughout the day, practice designing jobs that process message queues, and understand how to integrate DataStage with streaming platforms. Master techniques for reducing batch window duration through job optimization and parallel processing.

Modern data integration increasingly demands low-latency processing to support near real-time analytics. Design event-driven architectures where DataStage jobs respond to file arrival or message queue triggers rather than scheduled execution. Implement efficient incremental processing with minimal overhead to enable frequent execution cycles and learn to balance freshness requirements against system resource utilization.

Service-Oriented Architecture Integration Patterns

Enterprise architectures increasingly expose data integration capabilities as services that other applications can invoke programmatically. Master techniques for wrapping DataStage jobs as web services, understand how to implement RESTful APIs that trigger job execution, and practice designing jobs that accept JSON or XML input documents. Learn to implement response handling that returns job status and results to calling applications.

Service integration principles align with SOA architectural frameworks that enable flexible, reusable integration capabilities across the enterprise. Design DataStage solutions that participate in broader service orchestration workflows, implement proper error handling and status reporting for programmatic invocation, and master authentication and authorization patterns for secure service exposure. Understanding service-oriented patterns positions you for advanced integration scenarios beyond traditional batch processing.

Cloud Platform Integration and Hybrid Architectures

Modern enterprises operate hybrid environments combining on-premises systems with cloud platforms. Master DataStage capabilities for reading from and writing to cloud storage services including Amazon S3, Azure Blob Storage, and Google Cloud Storage. Practice configuring cloud database connectivity to platforms like Amazon Redshift, Azure Synapse Analytics, and Google BigQuery. Learn to implement jobs that efficiently transfer data between on-premises and cloud systems.

Cloud integration skills prove increasingly valuable as organizations migrate workloads. Design jobs that leverage cloud-native services for transformation when appropriate, understand network and security considerations for cloud connectivity, and master techniques for optimizing data transfer costs through compression and incremental processing. Learn to deploy DataStage itself in cloud environments for scalable integration infrastructure.

Data Quality Stage Configuration and Standardization

InfoSphere QualityStage stages enable sophisticated data cleansing, standardization, and matching operations essential for master data management. Master the configuration of standardization rules for addresses, names, and other entity attributes using built-in rulesets and custom patterns. Practice implementing data validation rules that identify suspect records based on business logic and reference data comparisons. Learn to use the Investigate stage for pattern analysis and profiling.

Quality management capabilities extend DataStage beyond basic ETL into data governance and master data management. Implement match stages for identifying duplicate records using phonetic matching, fuzzy matching, and custom matching algorithms. Master survivorship rules for selecting the best version of truth when consolidating duplicate records and understand how to implement data quality scorecards that measure integration process effectiveness.
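The standardize-then-match idea can be illustrated with Python's standard library. The sketch below normalizes two name strings and scores their similarity with `difflib.SequenceMatcher`. It is a simple stand-in to show the concept and does not reproduce the matching algorithms of the product's match stages; the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher


def standardize(name):
    """Crude standardization: lowercase, strip punctuation, collapse spaces."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(cleaned.split())


def match_score(a, b):
    """Similarity between 0.0 and 1.0 after standardizing both values."""
    return SequenceMatcher(None, standardize(a), standardize(b)).ratio()


def is_probable_match(a, b, threshold=0.85):
    return match_score(a, b) >= threshold


same_person = is_probable_match("Jon  Smith", "John Smith.")
different_people = is_probable_match("Alice Brown", "Bob Stone")
```

Standardizing first matters: without it, differences in case, punctuation, or spacing would lower the score for records that a person would recognize as the same entity.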

Enterprise Architecture Alignment and Governance

DataStage implementations must align with enterprise architecture frameworks that govern technology standards and integration patterns. Understand how DataStage fits within broader architecture perspectives including business, information, application, and technology layers. Master techniques for documenting DataStage solutions using architecture diagrams, component models, and data flow representations. Learn to participate in architecture review processes that evaluate integration solutions against established standards.

Architecture alignment often follows established frameworks such as TOGAF 9, which provide structure for enterprise architecture development. Practice creating architecture artifacts that document DataStage job designs at appropriate abstraction levels for different audiences including business stakeholders, technical architects, and operations teams. Understand governance processes for approving new integration patterns and ensure your solutions comply with established architectural principles and constraints.

Foundation Architecture Principles for Integration Solutions

Solid architectural foundations ensure DataStage solutions remain maintainable and scalable as business requirements evolve. Master principles including separation of concerns, modularity through shared containers, and parameterization for flexibility. Practice designing layered integration architectures with distinct staging, transformation, and presentation zones. Learn to implement canonical data models that standardize integration across multiple source and target systems.

These foundation principles mirror established architecture frameworks such as TOGAF 9, which emphasize systematic design approaches. Design jobs that separate business logic from technical implementation details, enabling business rule changes without technical modifications. Implement configuration-driven patterns where job behavior adapts based on metadata rather than hard-coded values and master techniques for building self-documenting solutions through consistent naming and annotation standards.

Troubleshooting Complex Job Failures and Performance Issues

Production DataStage jobs occasionally fail or perform poorly, requiring systematic troubleshooting skills. Master techniques for analyzing job logs to identify error root causes, understand how to use DataStage Director's monitoring features to track job execution progress, and learn to enable detailed tracing for problematic jobs. Practice using DataStage's row sampling features to examine data at different points in job execution.

Effective troubleshooting depends on systematic problem resolution. Develop hypothesis-driven approaches to isolating performance bottlenecks, learn to use operating system tools for monitoring resource utilization during job execution, and master techniques for reproducing issues in controlled test environments. Understanding common failure patterns accelerates diagnosis and resolution in production support scenarios.

Automation and Orchestration with External Schedulers

Enterprise DataStage implementations integrate with enterprise scheduling platforms for workflow orchestration across multiple systems. Master techniques for invoking DataStage jobs from schedulers like Control-M, Autosys, and Tivoli Workload Scheduler using command-line interfaces. Practice implementing dependency management where jobs wait for upstream completion before executing. Learn to design jobs that communicate status to schedulers for proper workflow progression.

Orchestration capabilities extend DataStage integration into broader automation frameworks. Design integration patterns where DataStage jobs participate in complex workflows including data extraction, file transfer, transformation, loading, and reporting. Master exit code management to signal success or failure appropriately and implement checkpoint restart capabilities for long-running batch processes.
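A scheduler typically calls DataStage through the `dsjob` command-line tool and reads its exit code. The sketch below builds such a command using the `-run`, `-jobstatus`, and `-param` options. The project and job names are hypothetical, and the exit code meanings in `STATUS_TEXT` are an assumption based on commonly documented job status values, so verify them against the documentation for your release before relying on them.

```python
import subprocess

# Assumed meaning of exit codes when -jobstatus is used; verify per release.
STATUS_TEXT = {1: "finished OK", 2: "finished with warnings", 3: "run failed"}


def build_dsjob_command(project, job, params=None, dsjob_path="dsjob"):
    """Assemble the argument list for running one job with parameters."""
    cmd = [dsjob_path, "-run", "-jobstatus"]
    for name, value in (params or {}).items():
        cmd += ["-param", f"{name}={value}"]
    return cmd + [project, job]


def run_job(project, job, params=None):
    """Run the job and translate the exit code for the calling scheduler."""
    code = subprocess.run(build_dsjob_command(project, job, params)).returncode
    return code, STATUS_TEXT.get(code, "other status")


# Hypothetical project and job names, shown without executing anything.
command = build_dsjob_command(
    "dstage1", "LoadCustomers", {"RUN_DATE": "2024-01-31"}
)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems when parameter values contain spaces or special characters.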

Security Implementation and Data Protection Strategies

Security proves critical for data integration solutions handling sensitive information. Master DataStage security features including user authentication, role-based authorization, and encryption for credentials and sensitive data. Practice implementing data masking and tokenization for personally identifiable information in non-production environments. Learn to configure network security for database connectivity and understand compliance requirements for data processing.

Security practices align with broader information protection frameworks, and infrastructure security principles apply to data integration platforms as well. Implement audit logging for tracking data access and job execution, design encryption strategies for data at rest and in transit, and master secure credential management avoiding hard-coded passwords in job designs. A sound security posture enables trusted deployment in regulated industries.
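Masking personally identifiable information for non-production use often relies on deterministic tokens, so that the same source value always maps to the same masked value and joins still work. A minimal Python sketch using a keyed hash follows; the key handling and the 12-character token length are assumptions for illustration, and this is a general technique rather than a DataStage feature.

```python
import hashlib
import hmac


def mask_value(value, secret_key, length=12):
    """Deterministic, non-reversible token: same input and key, same token."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:length]


# In practice the key would come from a secrets store, never from source code.
demo_key = b"example-only-key"
token_a = mask_value("123-45-6789", demo_key)
token_b = mask_value("123-45-6789", demo_key)
token_c = mask_value("987-65-4321", demo_key)
```

Using a keyed hash rather than a plain hash means someone without the key cannot rebuild the mapping by hashing a list of likely values.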

Advanced Data Science Integration Patterns

DataStage can integrate with advanced analytics and data science workflows, preparing data for machine learning models and distributing scored results. Master techniques for implementing feature engineering transformations that prepare data for predictive models, practice creating jobs that invoke external scoring services, and learn to process structured output from analytics platforms. Understand data format requirements for popular machine learning frameworks.

Analytics integration skills align with emerging data science platforms. Organizations adopting Snowflake Advanced Data Scientist capabilities recognize the importance of robust data preparation. Design jobs that handle large-scale feature extraction efficiently, implement sampling strategies for model training dataset creation, and master techniques for versioning training data to ensure model reproducibility. Learn to create feedback loops where model predictions flow back into operational systems through DataStage jobs.
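One way to approach the sampling and versioning ideas above is hash-based sampling plus a dataset fingerprint. The sketch below is an assumption-laden illustration rather than a DataStage feature: the salt value, function names, and the choice of SHA-256 are all hypothetical.

```python
import hashlib


def in_sample(record_key: str, rate: float, salt: str = "train-v1") -> bool:
    """Decide sample membership from a hash of the key, so the same record
    always gets the same decision across reruns."""
    digest = hashlib.sha256(f"{salt}:{record_key}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < rate * 2**64


def dataset_fingerprint(rows: list) -> str:
    """Order-independent fingerprint of serialized rows, usable as a version tag
    recorded alongside a trained model."""
    h = hashlib.sha256()
    for row in sorted(rows):
        h.update(row.encode("utf-8"))
        h.update(b"\n")
    return h.hexdigest()
```

Changing the salt yields a different but equally reproducible sample, which can help when a fresh holdout set is needed.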

Core Platform Administration and Maintenance

Although this is primarily a developer certification, understanding administrative tasks proves valuable. Master project creation and configuration, learn to manage user accounts and permissions, and practice importing and exporting DataStage objects between environments. Understand repository maintenance tasks including backups, purging old metadata, and managing disk space utilization. Practice installing DataStage patches and upgrades in test environments.

Platform administration knowledge complements development skills similar to SnowPro Core administration competencies that cover platform management. Learn to monitor DataStage server health using administrative interfaces and system monitoring tools, master techniques for managing concurrent job execution and resource allocation, and understand connection pooling configuration for optimal database connectivity. Administrative awareness enables better collaboration with operations teams supporting production environments.

Recertification Pathways and Continuing Education

IBM certifications require periodic recertification to ensure skills remain current with platform evolution. Understand recertification requirements including timeframes and methods for maintaining certification status. Stay informed about DataStage product updates and new features through IBM documentation, user communities, and training offerings. Plan continuing education to expand skills into related areas including data quality, master data management, and cloud integration.

Maintaining certification demonstrates ongoing professional commitment. Programs like SnowPro Core Recertification recognize that technology platforms evolve continuously. Participate in user conferences and webinars to learn about new capabilities, contribute to community forums sharing knowledge with other practitioners, and pursue advanced certifications in specialized areas. Continuous learning ensures your DataStage skills remain relevant as organizations adopt new integration patterns and technologies.

Service Technology Fundamentals for Integration

Understanding service-oriented architecture fundamentals enhances your ability to design DataStage solutions that participate in modern integration ecosystems. Master REST and SOAP web service concepts, learn to consume services from DataStage jobs using HTTP stages and XML processing, and practice implementing service error handling and retry logic. Understand service contract design including request/response message structures and API versioning strategies.
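The retry logic mentioned above is often implemented as exponential backoff around the service call. The following is a minimal sketch under stated assumptions: `TransientServiceError` is a hypothetical marker for failures worth retrying (for example timeouts or HTTP 503 responses), and the default attempt count and delay are arbitrary.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientServiceError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


def call_with_retry(call: Callable[[], T], attempts: int = 4,
                    base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Invoke `call`, retrying transient failures with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return call()
        except TransientServiceError:
            if attempt == attempts:
                raise  # retries exhausted: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    raise ValueError("attempts must be at least 1")
```

Only errors classified as transient are retried. A malformed request or an authentication failure should fail immediately, because repeating it cannot succeed.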

Service fundamentals align with established principles taught in S90-01 service technology courses that provide foundational service-oriented architecture knowledge. Design jobs that expose DataStage processing as callable services for application integration, implement proper logging for service invocations to support troubleshooting, and master techniques for handling asynchronous processing patterns where job execution continues independently of service response timeframes.

Service Design Principles for Reusable Integration

Applying service design principles to DataStage solutions promotes reusability and maintainability. Master concepts including service contract standardization, service loose coupling, and service abstraction that shield consumers from implementation details. Practice designing parameterized jobs that function as reusable services accepting varied input parameters. Learn to implement versioning strategies that allow service evolution without breaking existing consumers.

Design principles covered in frameworks like S90-02 service design curricula apply directly to integration job design. Create jobs with well-defined input and output contracts using parameter sets and standardized table definitions, implement error handling patterns that return meaningful status information to calling processes, and master techniques for backwards-compatible service evolution. Understanding these principles positions you to build enterprise-class integration solutions.

Service Architecture Concepts for Enterprise Integration

Advanced service architecture concepts enable DataStage participation in complex enterprise integration patterns. Understand service composition, where multiple services are orchestrated to carry out business processes, learn about service registry and discovery patterns, and practice implementing service governance that ensures consistent service behavior. Understand how DataStage jobs fit within microservices architectures that decompose monolithic applications into specialized services.

Architecture concepts taught through S90-03 service architecture frameworks provide context for positioning DataStage within modern integration landscapes. Design jobs that implement business process steps within orchestrated workflows, master techniques for handling distributed transactions across multiple services and systems, and learn to implement compensation logic for handling failures in multi-step processes. Advanced architecture understanding enables sophisticated integration solution design.
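Compensation logic, as described above, means undoing the steps that already succeeded when a later step fails. Here is a minimal, hypothetical sketch of that pattern, where each step pairs an action with its compensating action. The step names and the log format are illustrative assumptions.

```python
from typing import Callable, List, Tuple

# (name, action, compensation); the compensation undoes the action's effect.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: List[Step]) -> List[str]:
    """Run steps in order; on failure, compensate completed steps in reverse.
    Returns a log of what happened."""
    log: List[str] = []
    done: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            for done_name, _, compensate in reversed(done):
                compensate()
                log.append(f"compensated:{done_name}")
            return log
        done.append(step)
        log.append(f"done:{name}")
    return log
```

In a multi-job workflow, the actions might be job invocations and the compensations might delete a staged file or reverse a load batch. The sketch assumes compensations themselves do not fail, which a real design would need to handle.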

Service-Oriented Analysis and Design Methodologies

Applying formal analysis and design methodologies improves DataStage solution quality and alignment with business requirements. Master techniques for gathering integration requirements from business stakeholders, practice creating logical data flow diagrams before physical job implementation, and learn to document job designs with specifications that enable peer review. Understand how to decompose complex integration requirements into manageable job components.

Methodical approaches similar to S90-08 analysis frameworks ensure solutions meet business needs while following technical best practices. Practice creating traceability matrices linking requirements to job implementations, design test scenarios that validate business logic and edge case handling, and master techniques for estimating development effort based on complexity analysis. Structured methodologies particularly benefit large integration projects with multiple stakeholders.

Service Infrastructure Components and Platform Integration

Understanding service infrastructure components helps position DataStage within enterprise integration platforms. Master knowledge of enterprise service buses, API gateways, and message queues that complement batch integration capabilities. Practice designing hybrid solutions where DataStage handles batch processing while other platforms manage real-time messaging. Learn to implement standardized integration patterns that leverage appropriate tools for specific requirements.

Infrastructure awareness parallels concepts from S90-09 service infrastructure courses that cover integration platform capabilities. Design jobs that publish results to message queues for downstream consumption, implement file-based integration where DataStage deposits transformed files for service ingestion, and master techniques for coordinating batch jobs with real-time service availability. Understanding the broader integration ecosystem enables optimal tool selection.

Fraud Examination Patterns in Data Integration

Data integration plays a critical role in fraud detection by consolidating information across systems for analysis. Master techniques for implementing fraud detection rules within DataStage jobs including anomaly detection based on historical patterns, velocity checks that identify suspicious transaction frequencies, and cross-reference validation against blacklists and watch lists. Practice creating jobs that flag suspicious records for investigation while allowing normal transactions to proceed.

Fraud detection methodologies align with professional practices taught in AFE fraud examination programs that emphasize systematic investigation techniques. Design jobs that calculate risk scores based on multiple attributes and behavioral patterns, implement real-time scoring where latency requirements allow, and master techniques for logging flagged transactions with supporting evidence for investigation. Understanding fraud patterns positions you to build integration solutions for financial services and e-commerce industries.
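A velocity check of the kind mentioned above counts events per account within a sliding time window. The sketch below is one possible illustration in plain Python, not a DataStage stage: the class name, threshold, and window are hypothetical, and it assumes events for each account arrive in timestamp order.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class VelocityCheck:
    """Flag an account when it exceeds max_events within the sliding window."""

    def __init__(self, max_events: int, window: timedelta):
        self.max_events = max_events
        self.window = window
        self._recent = defaultdict(deque)  # account -> recent event timestamps

    def is_suspicious(self, account: str, when: datetime) -> bool:
        """Record the event, drop timestamps older than the window, and compare
        the remaining count with the threshold."""
        events = self._recent[account]
        events.append(when)
        while events and when - events[0] > self.window:
            events.popleft()
        return len(events) > self.max_events
```

Flagged records can be routed to a review queue while the rest continue through the normal flow, matching the pattern of letting legitimate transactions proceed.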

Business Analysis Skills for Requirements Gathering

Effective DataStage developers possess strong business analysis skills for eliciting and documenting integration requirements. Master techniques for conducting stakeholder interviews, practice creating use cases that capture integration scenarios, and learn to document data mapping specifications that translate source attributes to target structures. Understand how to resolve conflicting requirements from different stakeholder groups through facilitated discussions.

Business analysis competencies similar to CSBA certified business analyst skills enhance your ability to deliver solutions aligned with business needs. Practice creating process flow diagrams that illustrate data movement across systems, develop data dictionaries that define business terms consistently, and master techniques for validating requirements with business stakeholders before development begins. Strong analysis skills reduce rework caused by misunderstood requirements.

Software Testing Principles for Quality Assurance

Thorough testing ensures DataStage jobs function correctly before production deployment. Master unit testing techniques that validate individual job components, practice creating integration tests that verify end-to-end data flow, and learn to implement regression testing that confirms changes don't break existing functionality. Understand how to create comprehensive test datasets covering normal scenarios and edge cases.

Testing methodologies align with professional practices from CSTE software testing certification programs that emphasize systematic quality assurance. Design automated testing frameworks where possible to enable efficient regression testing, implement data reconciliation checks that verify source and target record counts and data values match expectations, and master techniques for performance testing that validates jobs handle production data volumes within acceptable timeframes. Rigorous testing practices prevent production issues.
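The reconciliation check described above can be sketched as comparing row counts and an order-independent checksum between source and target extracts. This is a simplified illustration with hypothetical function names. It treats NULL and empty string as equal and ignores type-formatting differences between systems, both of which a real check would need to address.

```python
import hashlib
from typing import Iterable, Tuple

MODULUS = 2 ** 256


def summarize(rows: Iterable[tuple]) -> Tuple[int, str]:
    """Return (row count, checksum). Row hashes are summed modulo 2**256, so the
    result does not depend on row order and duplicate rows still count."""
    count = 0
    total = 0
    for row in rows:
        encoded = "\x1f".join("" if v is None else str(v) for v in row)
        digest = hashlib.sha256(encoded.encode("utf-8")).digest()
        total = (total + int.from_bytes(digest, "big")) % MODULUS
        count += 1
    return count, f"{total:064x}"


def reconcile(source_rows: Iterable[tuple], target_rows: Iterable[tuple]) -> dict:
    """Compare source and target summaries and report where they differ."""
    s_count, s_sum = summarize(source_rows)
    t_count, t_sum = summarize(target_rows)
    return {
        "source_count": s_count,
        "target_count": t_count,
        "counts_match": s_count == t_count,
        "values_match": s_sum == t_sum,
    }
```

A count mismatch points to dropped or duplicated rows, while matching counts with a checksum mismatch points to a transformation or data type issue in specific columns.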

Hybrid Cloud Observability for Integration Monitoring

Modern integration solutions require comprehensive monitoring across hybrid on-premises and cloud environments. Master techniques for implementing end-to-end monitoring that tracks data flow from source systems through DataStage transformation to target systems. Practice configuring alerting rules that notify operations teams of job failures or performance degradation. Learn to implement dashboards that provide visibility into integration health and throughput metrics.

Observability practices align with modern monitoring platforms offering Hybrid Cloud Network Monitoring capabilities that span diverse infrastructure components. Design monitoring solutions that capture job execution metrics, record processing statistics, and track data quality trends over time. Implement integration with enterprise monitoring platforms for centralized visibility and master techniques for diagnostic data collection that accelerates incident resolution when issues occur.

Network Performance Monitoring for Integration Infrastructure

Integration job performance often depends on network throughput and latency between DataStage servers and source or target systems. Master techniques for monitoring network performance metrics, understand how network congestion impacts job execution times, and learn to diagnose connectivity issues that cause job failures. Practice analyzing network traces to identify communication bottlenecks between distributed components.

Network monitoring skills complement infrastructure knowledge from NPM network performance programs that emphasize proactive performance management. Design jobs that implement connection pooling to minimize network overhead, understand timeout configuration for network operations, and master techniques for optimizing data transfer through compression and batch operations. Network awareness enables effective collaboration with infrastructure teams supporting DataStage environments.

Splunk Integration for Operational Intelligence

Enterprise organizations increasingly leverage Splunk for operational intelligence including integration monitoring and troubleshooting. Master techniques for forwarding DataStage job logs to Splunk for centralized analysis, practice creating Splunk queries that identify error patterns and performance trends, and learn to build dashboards that visualize integration metrics. Understand how to implement alerting based on log analysis that proactively identifies emerging issues.

Splunk integration aligns with enterprise platform adoption requiring SCP-500 Splunk certification knowledge for effective platform utilization. Design logging strategies within DataStage jobs that emit structured log messages optimized for Splunk parsing, implement correlation IDs that enable end-to-end transaction tracking across multiple jobs and systems, and master techniques for using Splunk search processing language to analyze integration patterns and anomalies.
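As an illustration of structured log messages carrying a correlation ID, the sketch below uses Python's standard `logging` module in a wrapper script around job execution. The field names (`correlation_id`, `job`), the logger name, and the JSON layout are assumptions chosen for the example, not a DataStage or Splunk requirement.

```python
import json
import logging
import uuid


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line for easy parsing."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "message": record.getMessage(),
            "correlation_id": getattr(record, "correlation_id", None),
            "job": getattr(record, "job", None),
        }
        return json.dumps(payload)


def new_correlation_id() -> str:
    """Generate an ID once per end-to-end run and pass it to every job."""
    return uuid.uuid4().hex


def job_logger(job: str, correlation_id: str) -> logging.LoggerAdapter:
    """Logger that stamps every message with the job name and correlation ID."""
    base = logging.getLogger("integration")
    return logging.LoggerAdapter(base, {"job": job, "correlation_id": correlation_id})
```

With a handler using `JsonFormatter` attached to the `integration` logger, every job in a run emits events sharing one `correlation_id`, which lets a single search retrieve the whole transaction across jobs and systems.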

Splunk Core Fundamentals for Log Analysis

Understanding Splunk core capabilities enhances your ability to troubleshoot DataStage jobs through log analysis. Master basic Splunk search commands for filtering and analyzing log events, practice creating custom field extractions from DataStage log formats, and learn to use statistical functions for aggregating metrics. Understand how to save searches and create alerts for operational monitoring.

Core competencies from SPLK-1001 Splunk fundamentals enable effective log analysis for DataStage operations. Practice correlating DataStage job execution with database logs and application logs to understand end-to-end transaction flows, master techniques for identifying performance bottlenecks through log timing analysis, and learn to use Splunk visualizations for presenting integration metrics to stakeholders. Log analysis skills prove invaluable for production support.

Splunk Power User Techniques for Advanced Analysis

Advanced Splunk techniques enable sophisticated analysis of DataStage integration patterns and issues. Master lookups that enrich log data with contextual information from reference files or other Splunk sources, practice creating subsearches that filter results based on complex criteria, and learn to use transaction commands for correlating related log events. Understand how to optimize search performance for large log volumes.

Power user skills from SPLK-1002 advanced courses elevate your analytical capabilities beyond basic searching. Design macros that encapsulate complex search patterns for reuse across multiple analyses, implement scheduled searches that generate metrics reports automatically, and master techniques for using statistical commands to identify anomalous behavior in integration patterns. Advanced analytical skills support proactive operations management.

Splunk Admin Fundamentals for Platform Management

Understanding Splunk administration helps you collaborate effectively with operations teams managing centralized logging infrastructure. Master basic concepts including index management, data retention policies, and user access controls. Practice configuring data inputs for DataStage log ingestion, learn to manage forwarders that collect logs from distributed DataStage servers, and understand licensing implications of log volume growth.

Administrative knowledge from SPLK-1003 Splunk admin courses facilitates effective logging infrastructure utilization. Design logging strategies within DataStage that balance detail for troubleshooting against index storage consumption, implement log rotation policies that prevent disk space exhaustion on DataStage servers, and master techniques for configuring parsed fields at ingestion time that accelerate search performance. Administrative awareness prevents operational issues.

Splunk Advanced Admin for Enterprise Deployment

Enterprise Splunk deployments involve distributed architectures requiring advanced administration knowledge. Master concepts including distributed search across multiple indexers, search head clustering for high availability, and indexer clustering for data replication. Practice configuring data routing to appropriate indexes based on log sources and retention requirements. Learn to implement authentication integration with enterprise directory services.

Advanced administration from SPLK-1004 enterprise Splunk courses ensures robust logging infrastructure for DataStage operations. Design index strategies that separate DataStage logs from other application logs for optimized storage and retention, implement role-based access controls ensuring appropriate teams access relevant log data, and master techniques for capacity planning that accommodate growing log volumes from expanding DataStage deployments. Enterprise administration knowledge supports scalable solutions.

Splunk Cloud Administration for SaaS Deployments

Organizations increasingly adopt Splunk Cloud for managed logging infrastructure. Master differences between Splunk Cloud and on-premises deployments including available customization options and administrative limitations. Practice configuring data inputs using Splunk Cloud's interface, learn to manage user access and apps in cloud environments, and understand how to work with Splunk support for advanced configuration requirements.

Cloud administration knowledge from SPLK-1005 Splunk Cloud courses enables effective utilization of managed services. Design HTTP Event Collector implementations for streaming DataStage job events directly to Splunk Cloud, implement secure data transmission using encryption and authentication, and master techniques for monitoring ingestion to ensure logs flow reliably from DataStage environments to cloud infrastructure. Cloud knowledge suits organizations preferring managed services.
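An HTTP Event Collector submission can be sketched as an authenticated JSON POST. The example below only builds the request and does not send anything. The base URL, token, and the `datastage:job` sourcetype are placeholders and assumptions, and the exact HEC host name for a Splunk Cloud stack should be taken from your own deployment.

```python
import json
import urllib.request
from typing import Optional


def build_hec_request(base_url: str, token: str, event: dict,
                      sourcetype: str = "datastage:job",
                      index: Optional[str] = None) -> urllib.request.Request:
    """Build a POST to the HEC event endpoint, authenticated with the HEC token."""
    body = {"event": event, "sourcetype": sourcetype}
    if index is not None:
        body["index"] = index
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/services/collector/event",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Splunk {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending would then be a call such as `urllib.request.urlopen(request, timeout=10)` over HTTPS, with the token read from a secure store rather than embedded in the script.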

Splunk Enterprise Certified Admin Expertise

Comprehensive Splunk administration skills position you to manage logging infrastructure supporting DataStage and broader integration platforms. Master deployment planning including sizing calculations based on expected log volumes, practice implementing distributed architectures for scalability and resilience, and learn to configure disaster recovery procedures including index replication and backup strategies. Understand performance tuning for search and indexing operations.

Enterprise administration expertise from SPLK-2001 advanced certification ensures production-grade logging infrastructure. Design monitoring solutions for the Splunk infrastructure itself ensuring availability for critical operational needs, implement automation for routine administrative tasks using Splunk's REST API and CLI tools, and master techniques for upgrade planning that minimizes disruption to ongoing log collection and analysis. Comprehensive skills support mission-critical deployments.

Final Exam Preparation and Test-Taking Strategies

As exam day approaches, focus preparation efforts on areas showing weakness in practice assessments. Review DataStage documentation for specific stage properties and configuration options frequently tested. Practice time management by completing sample questions within allocated timeframes. Create summary sheets covering key concepts, default values, and configuration requirements for quick review before the exam.

Effective test-taking strategies maximize your score by ensuring you answer all questions within the time limit. Read each question carefully, noting keywords like "always," "never," "best," and "except" that guide correct answer selection. Answer easier questions first to bank time for difficult questions requiring analysis. Use process of elimination to narrow options when unsure of the correct answer, and flag uncertain questions so you can revisit them at the end if time permits.

Post-Certification Career Development and Skill Application

Earning the IBM Certified Solution Developer InfoSphere DataStage v11.3 credential opens career opportunities across industries requiring enterprise data integration expertise. Update your professional profiles highlighting your certification achievement and specific DataStage skills. Pursue challenging projects that apply advanced techniques learned during certification preparation. Share knowledge with colleagues through presentations or mentoring to reinforce your expertise while building professional reputation.

Continuous skill development ensures your certification investment yields ongoing value. Stay current with DataStage product updates and new features through IBM documentation and user community participation. Explore adjacent technologies including data quality platforms, master data management tools, and cloud integration services that complement DataStage capabilities. Consider pursuing additional certifications in related areas to build comprehensive data integration and analytics expertise supporting long-term career growth.

Conclusion

Mastering DataStage involves understanding parallel processing architectures that enable high-performance data transformation, database connectivity patterns that optimize data movement across heterogeneous platforms, and transformation logic that implements complex business rules efficiently. The certification validates your ability to design jobs leveraging appropriate stages for specific requirements, implement error handling that ensures production reliability, and optimize performance for large-scale data volumes meeting enterprise service level agreements. These technical skills combine with architectural awareness positioning DataStage within broader integration ecosystems including service-oriented architectures, cloud platforms, and hybrid infrastructure deployments that characterize modern enterprise environments.

Beyond technical proficiency, successful DataStage professionals demonstrate strong analytical skills for gathering requirements from business stakeholders, translating business needs into technical designs, and validating solutions through comprehensive testing before production deployment. The certification preparation journey develops systematic troubleshooting approaches essential for diagnosing job failures and performance issues in production support scenarios. Understanding operational aspects including monitoring, logging, security, and maintenance ensures your solutions remain reliable and maintainable throughout their lifecycle, delivering ongoing business value beyond initial implementation.

The certification exam tests your knowledge across all these dimensions through scenario-based questions requiring you to select best approaches for specific business and technical requirements. Effective preparation involves hands-on practice building complete solutions from requirements through testing, not merely memorizing stage properties and configuration options. Create diverse practice scenarios covering dimensional modeling implementations, change data capture patterns, file processing workflows, and service integration designs that exercise the full breadth of DataStage capabilities. Supplement practical experience with systematic study of official documentation, participation in user communities, and completion of practice assessments identifying knowledge gaps requiring additional focus.

Your certification achievement demonstrates professional commitment to excellence in data integration, differentiating you in competitive job markets and positioning you for challenging projects requiring enterprise ETL expertise. The credential opens doors to roles including DataStage Developer, ETL Architect, Data Integration Specialist, and BI Developer across industries that depend on robust data integration infrastructure, including financial services, healthcare, retail, telecommunications, and manufacturing. Beyond immediate career benefits, certification provides a foundation for continuous learning as you encounter new integration challenges, explore adjacent technologies, and grow into senior technical or leadership roles guiding enterprise data strategies.

The knowledge and skills acquired during certification preparation deliver value extending far beyond the exam itself. You gain comprehensive understanding of data integration principles, architectural patterns, and best practices applicable across multiple ETL platforms and integration scenarios. Problem-solving approaches developed through troubleshooting complex scenarios transfer to other technical domains requiring analytical thinking and systematic issue resolution. Project management capabilities built through planning and executing practice implementations prepare you for real-world projects with competing requirements, constrained resources, and demanding timelines requiring effective prioritization and stakeholder communication.

As you complete your certification journey and embark on applying your validated DataStage expertise professionally, remember that technology platforms continuously evolve with new features, enhanced capabilities, and emerging integration patterns. Maintain your skills through ongoing learning, participate actively in user communities sharing knowledge and learning from peers, and pursue recertification when required to ensure your credential remains current. Consider expanding expertise into complementary areas including data quality management, master data management, data governance, cloud integration platforms, and advanced analytics that increasingly integrate with traditional ETL workflows in comprehensive data management solutions.

The IBM Certified Solution Developer InfoSphere DataStage v11.3 credential represents both an ending and a beginning: the culmination of focused preparation efforts and a foundation for ongoing professional growth in the dynamic field of enterprise data integration. Approach your certification achievement with deserved pride while maintaining the humility and curiosity that fuel continuous learning and improvement. Share your knowledge generously with colleagues and community members, contribute to forums and discussions that elevate the profession collectively, and seek challenging opportunities that push your skills beyond current comfort zones into new areas of mastery.

Your investment in certification preparation develops not just platform-specific skills but broader professional capabilities including discipline, persistence, systematic thinking, and commitment to excellence that serve you throughout your career regardless of specific technologies or roles. These transferable skills combined with demonstrated DataStage expertise position you for long-term success in the evolving data management landscape where integration remains critical for enabling analytics, supporting operations, and driving business value from organizational information assets. Congratulations on your certification journey, and may your validated expertise open doors to rewarding opportunities advancing both your professional goals and organizational missions through powerful, reliable data integration solutions.

Frequently Asked Questions

How does your testing engine work?

Once downloaded and installed on your PC, you can practise test questions and review your questions & answers using two different options: 'practice exam' and 'virtual exam'. Virtual exam: test yourself with exam questions under a time limit, as if you were taking the exam in a Prometric or VUE testing centre. Practice exam: review exam questions one by one and see the correct answers and explanations.

How can I get the products after purchase?

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

Pass4sure products have a validity of 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions or updates and changes by our editing team, will be automatically downloaded onto your computer to make sure that you get the latest exam prep materials during those 90 days.

Can I renew my product when it has expired?

Yes, when the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area.

Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates to the questions depend on changes in the actual pool of questions by different vendors. As soon as we know about a change in the exam question pool, we try our best to update the products as fast as possible.

How many computers can I download Pass4sure software on?

You can download the Pass4sure products on a maximum of 2 (two) computers or devices. If you need to use the software on more than two machines, you can purchase this option separately. Please email sales@pass4sure.com if you need to use more than 5 (five) computers.

What are the system requirements?

Minimum System Requirements:

- Windows XP or newer operating system

- Java Version 8 or newer

- 1+ GHz processor

- 1 GB RAM

- 50 MB available hard disk space, typically (products may vary)

What operating systems are supported by your Testing Engine software?

Our testing engine is supported on Windows. Android and iOS software is currently under development.